So I was poking around a partner portal during some routine security research, and I stumbled into something ugly. I changed one HTTP header, literally just swapped X-PRM-TenantId: 986 to X-PRM-TenantId: 200, and suddenly I was looking at a completely different company's partner data. No login required. No tricks. Just... change a number.

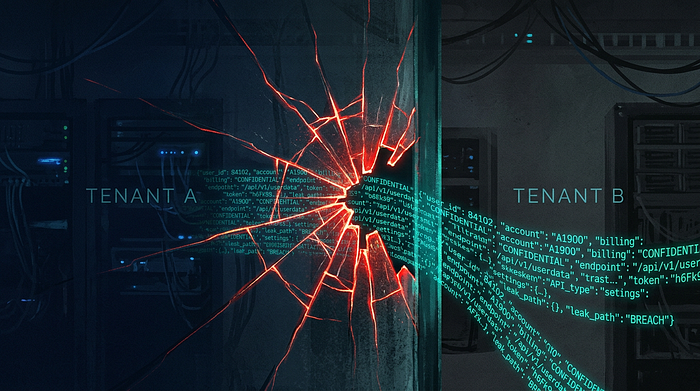

That's how I ended up discovering that a widely-used Partner Relationship Management (PRM) platform had basically zero tenant isolation. I want to walk through what I found, how it happened, what the vendor said about it, and what everyone involved can learn from this.

How I Found It

I was looking at how the partner portal made API calls and noticed every request included a header called X-PRM-TenantId with a plain numeric value. If you've spent any time testing web apps, that should immediately make you uncomfortable. The client is telling the server which tenant it belongs to? That's the kind of thing that goes wrong fast.

I had to check. I swapped the tenant ID to a different number and fired off the request.

    GET /prm/api/objects/v1/Account?take=5 HTTP/1.1
    Host: target-portal.platform.example
    X-PRM-TenantId: 200
    Accept: application/json

Back came 187 partner accounts. They weren't mine. They belonged to a completely different organization on the same platform.

What I Could See

I spent about an hour confirming the scope before stopping and reporting everything. Here's what was sitting there, wide open:

The Account API gave up:

- Partner company names and websites

- Revenue figures (ranging from $1.1M to $558M)

- Locations and mailing countries

- Partnership tiers and status

- Pipeline data

The Forms API exposed:

- Over 619 email addresses

- Over 278 full names

- Submission dates and metadata

The schema endpoints revealed:

- The full database structure (49 object types)

- Every field definition and data type

- Picklist values showing which competitor products partners were selling

The worst part? None of it required any authentication at all. You didn't need an API key, a session cookie, nothing. Just curl and a URL.

Breaking Down What Went Wrong

The Tenant ID was Client-Controlled

The whole system relied on a single HTTP header to decide whose data to show:

    X-PRM-TenantId: 986

Three things were broken at once:

- That header value came from me, the client

- There was no authentication check

- The server never verified whether I actually had any business accessing that tenant

Basically the server's logic was: "Oh, you say you're tenant 200? Cool, here's everything." Imagine walking into a bank, telling the teller "I'm account holder #12345," and they just hand you the statements without asking for ID. That's what was happening here.
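To make the bank analogy concrete, here's a minimal sketch of the check that was missing. It is not the vendor's code. The `SESSIONS` store, the `Session` type, the bearer-token format, and `resolve_tenant` are all names I made up for illustration. The point is only that the tenant comes from a server-side session lookup and the `X-PRM-TenantId` header is never consulted.

```python
from dataclasses import dataclass


class Unauthenticated(Exception):
    """Raised when the request carries no valid session (maps to HTTP 401)."""


@dataclass(frozen=True)
class Session:
    user_id: str
    tenant_id: int  # bound to the session server-side at login


# Hypothetical in-memory session store: token -> Session.
SESSIONS = {
    "token-alice": Session(user_id="alice", tenant_id=986),
}


def resolve_tenant(headers: dict, sessions: dict = SESSIONS) -> int:
    """Return the tenant for this request, derived from the session only."""
    auth = headers.get("Authorization", "")
    scheme, _, token = auth.partition(" ")
    session = sessions.get(token) if scheme == "Bearer" else None
    if session is None:
        raise Unauthenticated("valid credentials required")
    # The X-PRM-TenantId header is deliberately never read here.
    return session.tenant_id
```

With this shape, a request that sends `X-PRM-TenantId: 200` alongside a valid token for tenant 986 still resolves to 986, and a request with no token is rejected before any data is touched.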

The APIs Were Completely Open

Even setting aside the cross-tenant problem, these APIs were returning sensitive business data to anyone who asked. I could have been sitting in a random coffee shop running:

    curl "https://any-company.platform.example/prm/api/objects/v1/Account?take=100" \
      -H "X-PRM-TenantId: 986"

No credentials whatsoever. And back would come partner names, revenue numbers, contact details. Competitors could scrape entire partner lists. Attackers could build targeted phishing campaigns with real data. Revenue figures for private companies were just… out there.

Any API that serves business data needs authentication. Period.

Personal Data Was Exposed Through Forms

The Forms API was the same deal. No auth required, and it was returning form submissions with real people's information:

    {
      "email": "real.person@real-company.com",
      "firstName": "Real",
      "lastName": "Person",
      "company": "Real Company Name",
      ...
    }

(I've obviously changed those values, but you get the picture.)

This is where GDPR becomes relevant. Some of these people were EU residents, and their personal data was accessible without any controls. That's hard to square with Articles 5(1)(f), 25, and 32. The fines for that kind of thing can go up to €20M or 4% of global annual turnover, whichever is higher.

The Entire Database Schema Was Public

There was a _describe endpoint that returned everything about the database structure:

    curl "https://target.platform.example/prm/api/objects/v1/_describe" \
      -H "X-PRM-TenantId: 986"

49 object types, all their fields, all the data types. One picklist caught my eye. It listed the exact competitor products that partners were selling:

    {
      "fieldName": "Competitor_Products__c",
      "picklistValues": [
        "Security Platform A",
        "Endpoint Protection Product B",
        "Network Security Solution C",
        "Cloud Security Platform D",
        ...
      ]
    }

That's competitive intelligence you'd normally pay consultants a lot of money for. And it was sitting there for anyone to grab.

Schema exposure on its own isn't as bad as data leaks, but it gives attackers a complete map of what's worth stealing and where to find it.

Why This Happened

When I reported all of this, the vendor's first response caught me off guard:

"This is functioning as expected."

Seriously.

It turned out that "anonymously accessible" was a configuration option, and it was turned on by default for things like public partner directories. The vendor eventually admitted the defaults "were not as transparent or manageable as they should be" and changed them. But it raises an important question: who's responsible here?

The vendor, for shipping insecure defaults? The customer, for not reviewing what those defaults actually meant? I'd say it's mostly on the vendor. If you're building a platform that handles sensitive data for multiple companies, you don't ship it with everything wide open and hope customers figure out they need to lock it down. Secure should be the default. If someone wants to make something public, they should have to explicitly choose that, understanding what it means.

That said, customers also have a responsibility to actually review the security settings of the platforms they use. "I assumed it was secure" isn't really good enough when you're storing partner revenue data and contact information.

What Proper Isolation Looks Like

Here's the kind of code that was probably running (simplified):

    # DON'T DO THIS
    @app.route('/api/v1/accounts')
    def get_accounts():
        tenant_id = request.headers.get('X-PRM-TenantId')  # Trusting client!
        # Also injectable: the header value is interpolated straight into SQL
        accounts = database.query(f"SELECT * FROM accounts WHERE tenant_id = {tenant_id}")
        return jsonify(accounts)

And here's what it should look like:

    # DO THIS
    @app.route('/api/v1/accounts')
    @require_authentication
    @require_authorization(['read:accounts'])
    def get_accounts():
        user = get_authenticated_user()
        tenant_id = user.get_tenant_id()
        if not user.can_access_tenant(tenant_id):
            abort(403, "Forbidden")
        accounts = database.query(
            "SELECT * FROM accounts WHERE tenant_id = :tenant",
            tenant=tenant_id
        )
        audit_log(user.id, 'read', 'accounts', tenant_id)
        return jsonify(accounts)

The key difference: the server figures out who you are from your authenticated session. You don't get to tell it. On top of that, you want row-level security at the database itself:

    -- PostgreSQL example
    ALTER TABLE accounts ENABLE ROW LEVEL SECURITY;
    CREATE POLICY tenant_isolation ON accounts
      USING (tenant_id = current_setting('app.current_tenant')::integer);

That way even if your app code has a bug, the database won't let data leak across tenants.
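The policy only works if the application sets `app.current_tenant` before it queries. Here's a rough sketch of how that could look from Python, assuming a DB-API connection such as psycopg2 (hence the `%s` placeholder). The function name and the `id, name` columns are my own placeholders, and the tenant ID is assumed to come from the authenticated session, never from a request header.

```python
def query_accounts_for_tenant(conn, tenant_id: int):
    """Run a tenant-scoped query with the RLS setting applied first.

    `conn` is assumed to be a DB-API connection (for example psycopg2) to a
    PostgreSQL database where the tenant_isolation policy is enabled.
    """
    with conn.cursor() as cur:
        # set_config(name, value, is_local): passing true scopes the value
        # to the current transaction, so it does not carry over to the next
        # request that reuses a pooled connection.
        cur.execute(
            "SELECT set_config('app.current_tenant', %s, true)",
            (str(int(tenant_id)),),
        )
        # No WHERE tenant_id clause here: the policy filters the rows.
        cur.execute("SELECT id, name FROM accounts")
        rows = cur.fetchall()
    conn.commit()
    return rows
```

One caveat worth checking in your own setup: PostgreSQL does not apply row-level security to superusers, and table owners bypass it unless the table uses FORCE ROW LEVEL SECURITY. So the application should connect as a separate, unprivileged role.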

How I Reported It

The customer's bug bounty program had this asset listed as out of scope. So I had a choice: walk away, or report it anyway. I reported it. Just because something is out of scope for a bounty doesn't mean real people aren't affected.

Here's the timeline:

Feb 2: I sent the full write-up to the customer's security team and their CISO. Reproduction steps, impact assessment, list of affected tenants.

Same day: The customer started investigating and reached out to the PRM vendor. I also filed a report with the vendor through their bug bounty program.

Feb 6: After the customer's security team pushed the issue with the vendor, fixes were deployed. APIs started requiring authentication, permission settings were updated.

Feb 9: The customer confirmed everything was remediated across all endpoints.

Eight days from initial report to full fix. That's honestly pretty good, and it's what coordinated disclosure should look like.

The Vendor's Response

The vendor closed my report as "informative," their way of saying "working as designed."

But then they went ahead and deployed fixes that required authentication and changed the permission settings. So… it clearly wasn't working as designed, was it?

To their credit, once the vendor actually understood the severity of what was happening, they moved fast. They didn't just patch it for this one customer. They reviewed the default permission settings across their platform and updated them for all customers. They also acknowledged that those defaults "were not as transparent or manageable as they should be," which is vendor-speak for "yeah, we messed up the defaults."

What I think happened is that anonymous access was originally a feature for public partner directories, and nobody really thought through what "anonymously accessible" actually meant in practice. The customer probably didn't realize that setting meant literally anyone on the internet could query their APIs and pull business data. And the vendor didn't make that clear enough.

Multiple organizations ended up with their partner data exposed because of this gap.

What an Attacker Could Have Done

If someone malicious had found this instead of me, here's what they could have pulled off:

They could have mapped out a competitor's entire partner network: who their partners are, how much revenue they bring in, what their go-to-market strategy looks like. That's the kind of intelligence companies spend serious money trying to gather.

They could have built extremely convincing phishing campaigns using real partner company names, real contact info, real deal context. "Hey, I noticed our partnership tier is up for review" hits different when the attacker actually knows your partnership tier.

They could have scraped everything and sold it. B2B contact lists, competitive intelligence, relationship maps.

And this wasn't limited to one company. An attacker could have cycled through tenant IDs and pulled data from every organization on the platform in one sitting.

The Ransomware and Extortion Risk

This is the part that should keep executives up at night. An attacker sitting on this kind of access has real leverage:

"We have your complete partner list, revenue breakdowns for every partner, and the email addresses of 619 people in your network. Pay up, or we send it all to your top three competitors."

That's not a hypothetical. It's exactly how modern data extortion works. Groups like Cl0p and LockBit don't even bother encrypting systems anymore in some cases. They just steal data and threaten to publish it. A vulnerability like this one is a gift-wrapped opportunity for that kind of attack. No need to deploy malware, no need to get deep into the network. Just hit the API, grab everything, and start making demands.

And the leverage gets worse when you factor in regulation. Once an attacker has proof they accessed personal data, the victim company is potentially on the hook for GDPR breach notifications within 72 hours. The attacker can use that as pressure: "If you don't pay, we'll make it public, and then you'll have to notify regulators and every affected individual." That's a clock ticking against the victim, and attackers know how to exploit it.

The Legal Exposure Nobody Talks About

Here's the angle that I think gets overlooked in most vulnerability write-ups: the lawsuit risk.

Think about it from the perspective of the affected partner companies. Their data (company names, revenue figures, contact information, partnership details) was exposed without their knowledge or consent. They didn't sign up for the PRM platform. They didn't agree to have their data stored in a system with no authentication. The customer organization put their data there, and the vendor failed to protect it.

If this had been exploited, every one of those partner companies could potentially sue:

- The PRM vendor for negligent security practices, specifically shipping a platform where tenant data was accessible without authentication by default. A product liability or negligence claim would focus on the fact that the vendor knew (or should have known) that "anonymously accessible" defaults on APIs serving business data are unreasonable.

- The customer organization for failing to protect third-party data they collected. When you ask partners to submit their company info, revenue numbers, and contact details through your portal, you're taking on a duty of care. If that data gets exposed because you didn't review the security settings of your own platform, that's on you.

- Both of them jointly, which is usually what happens. Plaintiff lawyers would go after whoever has deeper pockets and let the vendor and customer fight over liability between themselves.

The causes of action could include negligence, breach of contract (if partner agreements included data protection clauses), breach of fiduciary duty, and in some jurisdictions, violations of data protection statutes that create private rights of action.

And here's the multiplier: this affected multiple organizations on the platform. If an attacker had exploited this and the breach became public, you wouldn't just have one company dealing with lawsuits. You'd have every affected tenant potentially facing claims from their own partners. The vendor would be looking at class action territory.

For the partner companies whose revenue data was exposed (some with figures up to $558M), the competitive harm alone could be significant. If a competitor got hold of that data and used it to poach partners or undercut deals, the damages could be substantial and provable.

The GDPR Problem on Top of All That

Layer GDPR on top of the lawsuit risk and it gets even messier. Several of the affected organizations had European partners, so the regulation applies. Unauthenticated access to names and email addresses violates Articles 5(1)(f), 25, and 32 pretty clearly.

The potential consequences stack up fast: fines up to €20M or 4% of global annual turnover, mandatory breach notifications to authorities within 72 hours, potential notification to every affected individual, regulatory investigations, and on top of all that, the private lawsuits from affected parties that GDPR enables in some member states.

British Airways got hit with a £20M fine for a breach affecting 400,000 people. This case exposed over 619 individuals across multiple organizations. And unlike a typical breach where attackers exploit a sophisticated vulnerability, the defense here would be particularly weak. "Our API didn't require authentication" is a hard position to defend in front of a regulator.

Testing for This Kind of Thing

If you do security research on SaaS platforms, here are the patterns to watch for:

Look for client-controlled tenant identifiers, things like X-Tenant-ID, X-Organization-ID, or URL parameters like ?tenant=123. If you can control the value from the client side, check whether the server actually validates it.
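If you're working from a captured request, a small helper can flag the inputs worth a closer look. This is a heuristic sketch only. The name pattern is my own guess at common conventions and will miss custom naming, so treat the hits as leads rather than findings.

```python
import re
from urllib.parse import urlsplit, parse_qsl

# Heuristic name pattern (an assumption): tune it for the target.
TENANT_HINT = re.compile(
    r"(tenant|org|organi[sz]ation|account|customer)[-_]?id$|^tenant$",
    re.IGNORECASE,
)


def find_tenant_identifiers(url: str, headers: dict) -> list:
    """Return (location, name, value) for inputs that look like tenant selectors."""
    hits = []
    for name, value in headers.items():
        if TENANT_HINT.search(name):
            hits.append(("header", name, value))
    for name, value in parse_qsl(urlsplit(url).query):
        if TENANT_HINT.search(name):
            hits.append(("query", name, value))
    return hits
```

Run against the request from this write-up, it would flag `X-PRM-TenantId` and ignore `Accept` and `take`.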

Try subdomain-based access:

    # Logged into companyB.platform.example
    curl "https://companyB.platform.example/api/data?tenant=companyA"

Try direct object references across tenants:

    curl "https://platform.example/api/accounts/12345"  # Tenant A
    curl "https://platform.example/api/accounts/67890"  # Tenant B

If tenant IDs are sequential, you can enumerate them trivially:

    for id in {1..1000}; do
      curl "https://platform.example/api/data" -H "X-Tenant-ID: $id"
    done

Check for exposed schema/metadata endpoints, things like /api/_describe, /api/schema, /api/metadata.

And test every endpoint without any credentials at all. Drop the auth headers, drop the cookies. If business data comes back, that's a finding.

But here's the important part: once you've confirmed the issue, stop. I used the minimum number of requests needed to verify the problem and understand the scope. I didn't download all the data, I didn't test every tenant, I didn't try write operations. Prove it exists, document it, report it.
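Here's one way to bake that restraint into tooling: a sketch of a no-credentials check with a hard cap on requests. It isn't the script I used. `send` is a function you supply that performs the request and returns `(status_code, body_text)`, and the credential header list and the default cap of 5 are assumptions to adjust.

```python
from typing import Callable, Iterable

# Header names treated as credentials (an assumption): extend as needed.
CREDENTIAL_HEADERS = {"authorization", "cookie", "x-api-key"}


def strip_credentials(headers: dict) -> dict:
    """Return a copy of the headers with credential-bearing ones removed."""
    return {k: v for k, v in headers.items() if k.lower() not in CREDENTIAL_HEADERS}


def check_unauthenticated(
    urls: Iterable[str],
    headers: dict,
    send: Callable[[str, dict], tuple],
    max_requests: int = 5,
) -> list:
    """Request each URL without credentials and list the ones that return data.

    `send(url, headers)` is caller-supplied and returns (status_code, body_text).
    At most `max_requests` requests are sent, however many URLs are given.
    """
    findings = []
    anonymous = strip_credentials(headers)
    for sent, url in enumerate(urls):
        if sent >= max_requests:
            break
        status, body = send(url, anonymous)
        # A 200 with a non-empty body and no credentials deserves a manual look.
        if status == 200 and body.strip():
            findings.append(url)
    return findings
```

Keeping `send` injectable means the cap and the credential stripping can be tested offline, and the response bodies are never written anywhere by the helper itself.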

What I Took Away From This

This was my first time finding something this serious on an out-of-scope asset. A few things I learned:

Scope boundaries matter, but ethics matter more. I stopped testing as soon as I realized it was out of scope, but I still reported what I'd found. People's data was at risk.

Keep your testing to the bare minimum. Confirm the pattern, sample enough to demonstrate impact, then stop. Don't go exploring out of curiosity.

Don't keep the data. I didn't save any of the emails, names, revenue figures, or company information I saw. The technical details of how the vulnerability works are what matter for the report. The actual data isn't yours to keep.

Coordinate with everyone. This affected the customer, the vendor, and every other organization on the platform. I reported to the customer and the vendor and let them handle notifying the rest.

The customer organization recognized the research with a charitable donation and said they'd credit me on their security disclosures page when it launches. Was that proportional to findings that affected multiple organizations? Probably not. But the asset was out of scope, so there was no obligation to pay anything. What mattered more was that everything got fixed in 8 days and nobody's data was actually breached.

The Bigger Picture

Multi-tenant architecture is everywhere. Salesforce, Slack, Zoom, HubSpot, basically every modern SaaS product. It's efficient and it scales well. But it's also complicated, and when isolation fails, it fails for everyone on the platform at once.

This isn't a new problem either. Salesforce had cross-tenant search leaks back in 2007. Microsoft's Azure CosmosDB had the ChaosDB vulnerability in 2021. Google Cloud had privilege escalation issues in 2020. It keeps happening because multi-tenant security is genuinely hard to get right.

All four vulnerabilities were fixed within 8 days, largely because the customer's security team took the report seriously and pressured the vendor to act. The vendor started requiring authentication on all endpoints, changed the default permission settings, and added server-side tenant validation.

What I'd Tell Vendors

If you're building a multi-tenant platform: don't trust anything that comes from the client to determine tenant context. Derive it from the authenticated session, server-side. Require authentication on every endpoint that serves data, not just the "sensitive" ones. Ship with secure defaults and make customers opt in to permissive settings, not the other way around. And if you have a setting called "anonymously accessible," make damn sure your customers understand what that actually means.
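As a rough illustration of "secure by default, opt in to public," here's a sketch of how an endpoint policy could be modeled. `EndpointPolicy` and `allow_anonymous` are hypothetical names, not anything from the vendor's product. The idea is that a freshly created policy is locked down, and making it public takes an explicit, acknowledged step.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class EndpointPolicy:
    """Access policy for one API endpoint. The defaults are the locked-down ones."""
    require_auth: bool = True
    anonymous_access: bool = False


def allow_anonymous(policy: EndpointPolicy, acknowledged_public: bool) -> EndpointPolicy:
    """Opt an endpoint in to anonymous access, only with explicit acknowledgement."""
    if not acknowledged_public:
        raise ValueError(
            "anonymous access exposes this endpoint to anyone on the internet; "
            "pass acknowledged_public=True to confirm"
        )
    # Return a new policy rather than mutating, so the change is easy to audit.
    return replace(policy, require_auth=False, anonymous_access=True)
```

The error message does the job the original setting name didn't: it says in plain words what "anonymously accessible" means before anyone can turn it on.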

What I'd Tell Customers

If you're buying SaaS, review the security configuration during onboarding. Ask what the defaults are and what they mean. Include third-party platforms in your regular security assessments. Ask your vendor how tenant isolation works, how they derive tenant context, and whether all their APIs require authentication. If they can't give you clear answers, that should worry you.

What I'd Tell Researchers

Report what you find, even when it's out of scope. Minimize your testing footprint. Don't retain the data you access. Coordinate with all the affected parties. And when you write about it, focus on the lessons, not on naming and shaming.

The 8-day turnaround on this fix shows what happens when researchers and companies actually work together. That's the outcome we should all be pushing for.

I'm Sahar Shlichove, an independent security researcher working on web app security, API vulnerabilities, and SaaS platform assessments. I do manual testing, responsible disclosure, and I write about what I find so others can learn from it.

LinkedIn: https://www.linkedin.com/in/sahar042/

This article is for educational purposes. Everything was responsibly disclosed and fixed before I published this. Don't try to reproduce any of this on systems you don't own or have permission to test. Unauthorized access is illegal pretty much everywhere (CFAA, Computer Misuse Act, Israeli Computer Law, etc.). Company names have been left out intentionally. If you have questions about the technical details or the disclosure process, feel free to reach out.