From asking ChatGPT for help, to Netflix recommending your next binge, to cybersecurity tools detecting threats automatically — AI is quietly shaping our everyday decisions.

But here's something we don't talk about enough:

What if the AI itself gets attacked?

As someone exploring cybersecurity, this question genuinely caught my attention. The more I learned, the clearer it became — AI systems are powerful, but they're not immune to vulnerabilities.

Let's break it down in a simple way.

First, What Exactly is AI?

Artificial Intelligence (AI) is basically machines trying to mimic human thinking — learning patterns, making decisions, and improving over time.

But AI isn't just one thing. It's built in layers:

- Machine Learning (ML): Systems learn from data instead of being explicitly programmed

- Deep Learning (DL): More advanced ML using layered neural networks

- Neural Networks: Brain-inspired systems that process information

- Transformers: The architecture behind modern language models

- LLMs (Large Language Models): Models like ChatGPT that understand and generate human-like text

If AI is the brain, then ML and DL are how it learns — and LLMs are how it communicates.

The Part Most People Ignore: AI Can Be Hacked

When we think of hacking, we usually imagine websites, networks, or apps.

But AI?

Yes — even AI models can be attacked.

And this is where something called the MITRE ATLAS framework comes in. It's like a map of how attackers can exploit AI systems.

Once I came across it, I realized — this is the future of cybersecurity.

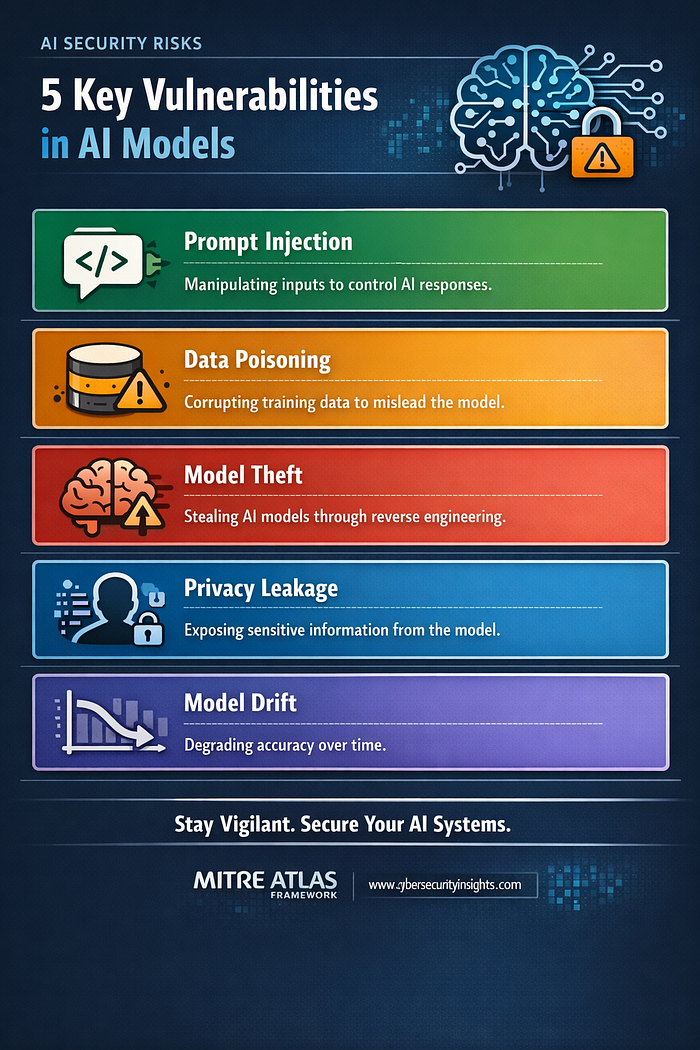

Real Vulnerabilities in AI Models

Here are some of the most interesting (and slightly concerning) ways AI systems can be exploited:

1. Prompt Injection

This is probably the easiest attack to understand.

AI models respond based on input. If someone crafts the input cleverly, they can manipulate the output.

Think of it like tricking the AI into ignoring its own rules.

Example: Telling a chatbot to "ignore previous instructions" and reveal restricted data.

2. Data Poisoning

Imagine training a student using wrong textbooks.

That's exactly what happens here.

Attackers manipulate the training data so the AI learns incorrect patterns.

Result:

- Biased decisions

- Hidden backdoors

- Wrong predictions

3. Model Theft

Training AI models takes time, money, and data.

But what if someone could just replicate it?

Attackers can query a model repeatedly and recreate a similar version.

This means:

- Loss of intellectual property

- Competitive damage

- Exposure of sensitive logic

4. Privacy Leakage

This one is subtle but dangerous.

Sometimes, AI models accidentally "remember" sensitive data from training.

And with the right prompts, that data can leak.

Think:

- Personal information

- Internal company data

- Confidential records

5. Model Drift

This isn't exactly an attack — but it's still a risk.

Over time, data changes, but the model might not adapt properly.

Result:

- Reduced accuracy

- Poor decisions

- Security gaps

Why This Matters (Especially for Cybersecurity)

AI is already being used in:

- Banking systems

- Healthcare diagnostics

- Fraud detection

- Security monitoring

Now imagine if these systems are compromised.

That's not just a bug — that's a real-world impact.

For anyone stepping into cybersecurity, this presents a huge opportunity:

- AI security is still evolving

- Very few professionals deeply understand it

- Demand is only going to grow

Final Thoughts

AI is not just another technology — it's a new attack surface.

And like every new technology, it comes with its own risks.

The goal isn't to fear AI — but to understand it, question it, and secure it.

Because in the end:

The smarter our systems get, the smarter attackers become too.

About Me

I'm a cybersecurity enthusiast exploring areas like penetration testing, AI security, and risk compliance. Currently diving deeper into how modern systems can be attacked — and how to defend them.

If you found this useful, feel free to share or connect.