In the rush to adopt agentic AI, the industry has reached a collective, comfortable consensus: we will keep a "Human-in-the-Loop" (HITL). We treat this human gatekeeper as the ultimate fail-safe, the adult in the room who will catch the hallucination, block the prompt injection, and stop the rogue API call.

But as a CISO looking at the intersection of machine speed and human psychology, I'm convinced we are being sold a dangerous form of security theater. We are treating the human as a robust control, when in reality, we are introducing a massive, unpatched vulnerability into the heart of our AI strategy.

The Psychology of the "Rubber Stamp"

The primary reason HITL fails isn't a lack of technical skill; it's the inherent cognitive architecture of the human brain. We are leaning on a "gate" that is psychologically destined to stay open.

- The Automation Bias Trap: As AI agents become more sophisticated and their "vibes" more professional, human reviewers fall victim to automation bias. When a system is right 98% of the time, the human brain stops critically evaluating the remaining 2%. We don't "review" the agent's request; we scan for a tone of confidence and click "Approve."

- Decision Fatigue at Machine Scale: Agentic AI is designed for volume and velocity. If an agent presents 50 "checkpoints" a day, the human might be diligent. If it presents 500, that human becomes a bottleneck. To maintain the "efficiency" promised by the AI, the human gatekeeper inevitably begins to "rubber stamp" actions just to clear the queue.

The Negligent Insider: From Error to Attack Vector

We often talk about "insider threats" in the context of a malicious actor stealing data. But the data tells a different story. Recent 2025/2026 reports show that 74% of CISOs rank negligent insiders (well-meaning employees making mistakes) as a higher threat than malicious ones.

By placing a human in the loop of an agentic workflow, we aren't just adding a protector; we are adding a target.

- Agentic Phishing: We've spent decades training users not to click suspicious links in emails. Now, the "malicious link" is a prompt hidden inside a trusted internal agent's workflow. If an attacker can manipulate the agent's output to look like a standard system update or a routine API authorization, the human gatekeeper will authorize it because it's coming from "inside the house."

- The "Shadow" Propellant: With the rise of "Vibe Coding" and Shadow AI, employees are spinning up agents with high-level API access and long-lived tokens outside the view of the Security Operations Center (SOC) or the Secure Development Lifecycle (SDLC) process. When the creator of the agent is also the "Human-in-the-Loop" gatekeeper, the oversight is non-existent. It's an echo chamber, not a control.

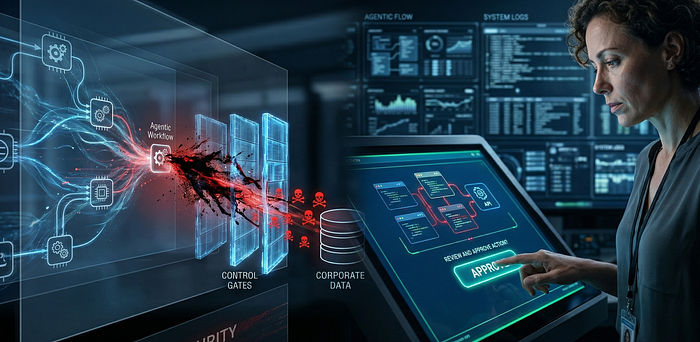

The Blindsided CISO: When the Control is the Vector

The industry is focused on securing the prompt, the model, and the data. We are building massive walls around the LLM, only to hand the keys to a human gatekeeper who is tired, distracted, and easily manipulated.

If we allow a user to review and approve an agent's action without rigorous, objective guardrails, we haven't secured the agent. We have simply empowered the user's mistakes, or their malice, to act at machine speed. A single negligent "Approve" click can now initiate a chain of agentic actions that exfiltrates a database or wipes a cloud environment before the SOC can even blink.

Moving Beyond the Gate

To stop being blindsided, we must stop treating HITL as a security control and start treating it as a risk to be managed.

- From HITL to HOTL (Human-on-the-Loop): We must move away from "transactional" approvals toward "high-level" auditing. Humans should set the policy; machines should enforce the boundaries.

- Deterministic Guardrails: An agent's power should never be defined by a human's "OK." It should be defined by limits enforced in code that no approval can override. If an agent shouldn't move more than $500, the system must refuse any larger transfer, regardless of whether a human gatekeeper clicks "Approve."

- Adversarial Auditing: We need "agents watching agents." Use a secondary, hardened LLM to audit the human's approvals in real time, flagging when a human gatekeeper appears to be suffering from fatigue or falling for a deceptive prompt.
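
To make the deterministic guardrail idea concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a reference implementation: `Policy`, `PolicyViolation`, and `execute_transfer` are hypothetical names, and the $500 ceiling is simply the figure from the example above. The point it shows is ordering: the hard limit is checked before the approval flag is even consulted, so a fatigued or deceived reviewer cannot widen the agent's authority.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)  # frozen: fields cannot be reassigned after creation
class Policy:
    """Hard limits set by humans ahead of time (hypothetical example)."""
    max_transfer_usd: Decimal = Decimal("500")


class PolicyViolation(Exception):
    """Raised when a requested action exceeds a hard limit."""


def execute_transfer(amount_usd: Decimal, human_approved: bool,
                     policy: Policy = Policy()) -> str:
    """Gate a transfer request (hypothetical agent action).

    The policy check runs first and never looks at the approval flag,
    so an "Approve" click cannot raise the ceiling.
    """
    if amount_usd > policy.max_transfer_usd:
        raise PolicyViolation(
            f"{amount_usd} exceeds hard limit of {policy.max_transfer_usd}"
        )
    if not human_approved:
        # Within policy, but still waiting on the reviewer.
        return "pending"
    return "executed"


if __name__ == "__main__":
    print(execute_transfer(Decimal("120"), human_approved=True))
    try:
        # Approved by the human, but still blocked by the policy.
        execute_transfer(Decimal("5000"), human_approved=True)
    except PolicyViolation as exc:
        print(f"blocked: {exc}")
```

In this sketch the human approval can only narrow what happens (a request inside the limit stays pending until approved); it can never expand it. Changing the ceiling would mean changing the policy itself, which is where the Human-on-the-Loop review belongs.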

Conclusion

The "Human-in-the-Loop" is a comforting concept, but in the world of agentic AI, comfort is the enemy of security. We are setting ourselves up for a new era of breaches where the "human gate" was the very thing that let the attacker in. It's time to stop trusting the gate and start hardening the architecture.

Our goal shouldn't be to keep the human in the loop; it should be to ensure the system is safe even when the human fails.