TL;DR

In the cloud era, developers got autonomy and finance got surprise bills. FinOps emerged reactively after billions were wasted.

AI agents are now getting API access with zero economic guardrails. Unlike cloud, agents don't just consume infrastructure — they trigger real-world spend.

If you don't enforce policy at tool execution, you're replaying 2014.

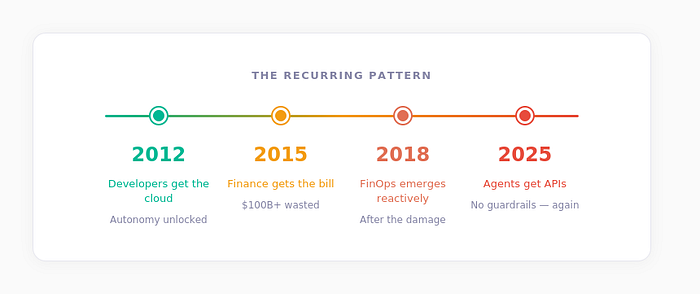

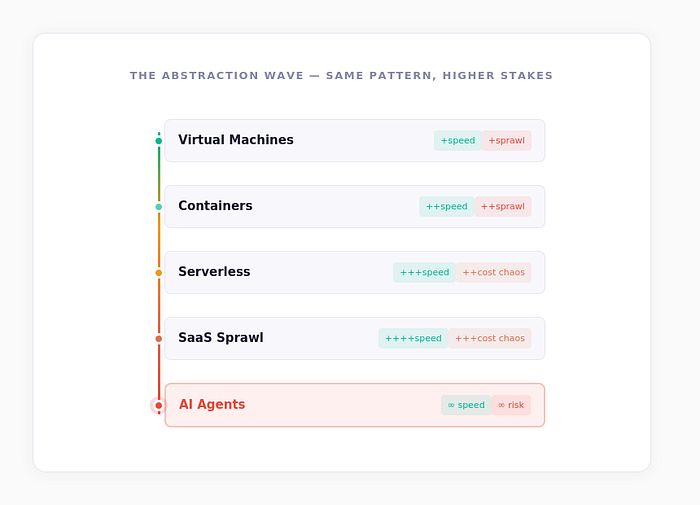

The Pattern We Already Lived Through

In 2012, developers got the cloud. In 2015, finance got the bill. In 2025, agents are getting APIs — and no one is watching the spend.

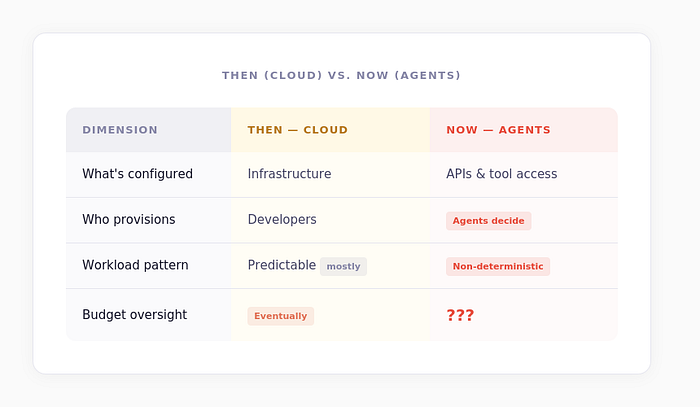

When we moved from on-prem to cloud, three fundamental things changed: infrastructure became programmable, spend became elastic, and suddenly every team could create cost.

It was revolutionary. It was also chaotic.

The result? Hundreds of billions in cloud waste before FinOps became mainstream.

Now look at what's happening with agents: we are replaying the exact same structural shift. But this time, it's more dangerous.

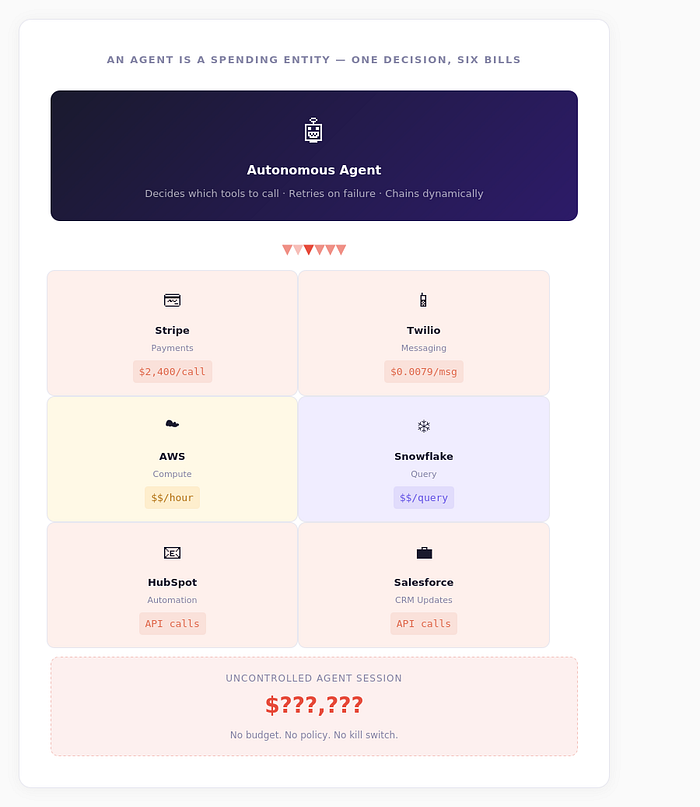

Agents Don't Just Consume Compute

Cloud waste hurt. Agent misuse is structurally different.

Cloud increased infrastructure velocity. Agents increase economic velocity. An agent can decide which tool to call, how often to call it, retry on failure, chain calls dynamically, and operate continuously.

It is no longer "just code." It is a spending entity.

We gave developers APIs. Now we are giving agents wallets.
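To make "economic velocity" concrete, here is a rough worst-case bound on what a single agent could spend in a day when it chains calls and retries failures. This is an illustrative Python sketch, not a model from any particular platform: the function name `worst_case_daily_spend_cents` and every number in the usage note are hypothetical.

```python
def worst_case_daily_spend_cents(
    cost_per_call_cents: int,
    calls_per_run: int,
    max_retries: int,
    runs_per_day: int,
) -> int:
    """Upper bound on daily spend if every call in every run uses all its retries.

    Assumes each attempt is billed, including failed ones (hypothetical pricing).
    """
    attempts_per_call = 1 + max_retries  # the first try plus each retry
    return cost_per_call_cents * calls_per_run * attempts_per_call * runs_per_day
```

With a 5-cent call, 8 chained calls per run, 3 retries, and 1,000 runs a day, the bound is 5 × 8 × 4 × 1,000 = 160,000 cents, or $1,600 per day, from one agent that never made an "invalid" call.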

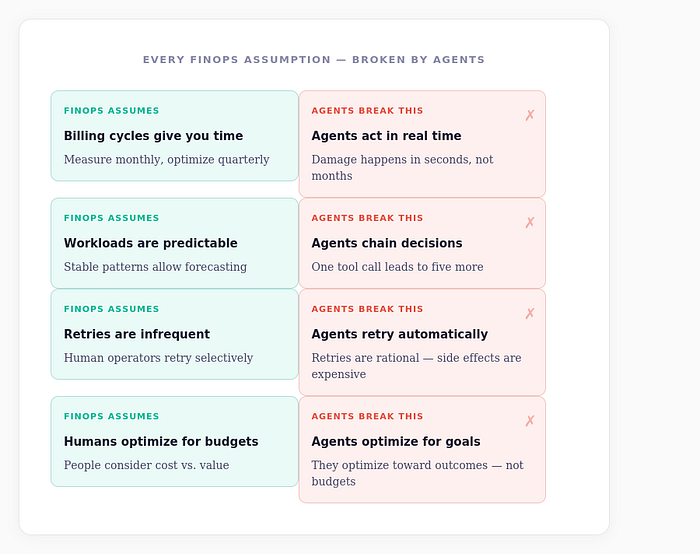

Why Traditional FinOps Doesn't Work Here

FinOps was reactive by design: measure usage, allocate costs, create dashboards, optimize later. It worked — eventually — because cloud workloads were somewhat predictable, spend scaled with infrastructure, and humans were in the loop.

Agents break every one of those assumptions.

And most importantly, the problem is not just cost. It's compliance violations, unapproved vendors, data exfiltration, policy breaches, and financial exposure.

The Core Shift

You don't need better reporting. You need enforcement.

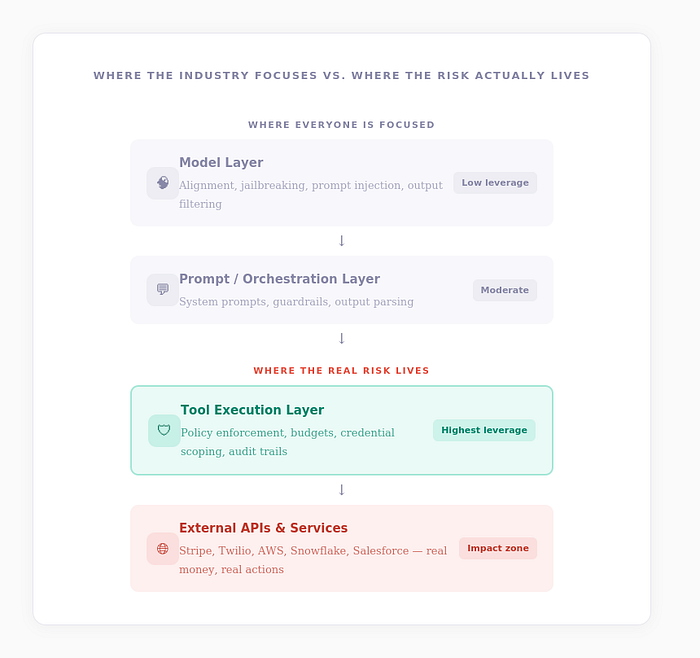

The Industry Is Securing the Wrong Layer

Right now, most of the AI safety conversation is focused on jailbreaking LLMs, prompt injection, alignment, and output filtering. Those are model problems.

But production incidents won't come from poetic hallucinations. They'll come from valid tool calls.

Don't fight the brain. Control the hands.

Secure the Tools, Not the Model

If an agent calls Stripe, the critical question isn't "Was the prompt aligned?"

It's:

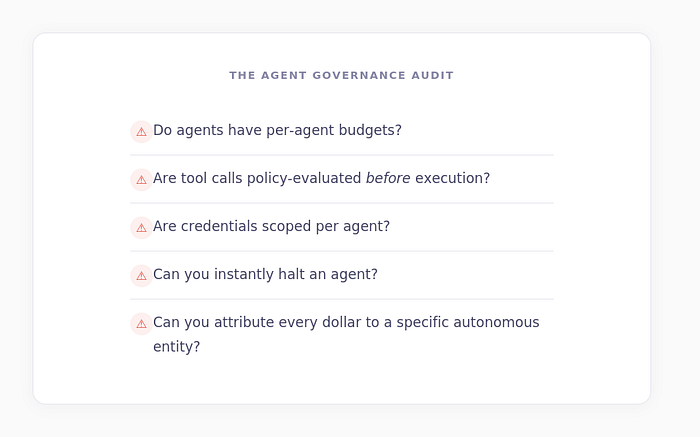

- Is this within budget?
- Is this vendor allowed?
- Is this token scoped?
- Is this action compliant?
- Can we roll it back?

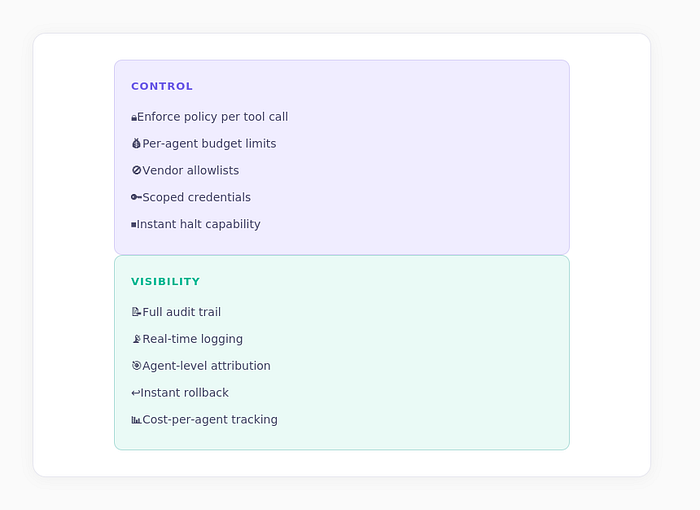

Every tool call should pass through policy enforcement, per-agent budget checks, vendor allowlists, scoped credentials, real-time logging, and attribution.

This is not monitoring. This is economic governance.
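A minimal sketch of what enforcement at tool execution could look like, in Python. It is one possible shape, not a reference implementation: `ToolGate`, `AgentPolicy`, `PolicyViolation`, and the field names are hypothetical, the cost of a call is assumed to be known up front, and scoped credentials are reduced to a set of allowed scope strings.

```python
from dataclasses import dataclass, field
from typing import Callable


class PolicyViolation(Exception):
    """Raised when a tool call is rejected before it executes."""


@dataclass
class AgentPolicy:
    budget_cents: int                # remaining spend this agent may incur
    allowed_vendors: frozenset[str]  # vendor allowlist
    allowed_scopes: frozenset[str]   # actions the agent's credential covers


@dataclass
class ToolGate:
    policies: dict[str, AgentPolicy]
    audit_log: list[dict] = field(default_factory=list)

    def _denial_reason(self, agent_id, vendor, scope, cost_cents):
        """Return why the call must be blocked, or None if it may proceed."""
        policy = self.policies.get(agent_id)
        if policy is None:
            return "unknown agent"
        if vendor not in policy.allowed_vendors:
            return "vendor not on allowlist"
        if scope not in policy.allowed_scopes:
            return "credential not scoped for this action"
        if cost_cents > policy.budget_cents:
            return "over budget"
        return None

    def execute(self, agent_id: str, vendor: str, scope: str,
                cost_cents: int, call: Callable[[], object]) -> object:
        reason = self._denial_reason(agent_id, vendor, scope, cost_cents)
        # Log every decision, allowed or denied, attributed to the agent.
        self.audit_log.append({
            "agent": agent_id, "vendor": vendor, "scope": scope,
            "cost_cents": cost_cents, "allowed": reason is None,
            "reason": reason,
        })
        if reason is not None:
            raise PolicyViolation(reason)
        # Deduct before calling so a failing call cannot be retried for free.
        self.policies[agent_id].budget_cents -= cost_cents
        return call()
```

A call such as `gate.execute("billing-agent", "stripe", "refund", 400, issue_refund)`, with `issue_refund` standing in for the real vendor call, runs only if every check passes. Otherwise it raises before any money moves, and either way the decision lands in `audit_log` with the agent's identity attached.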

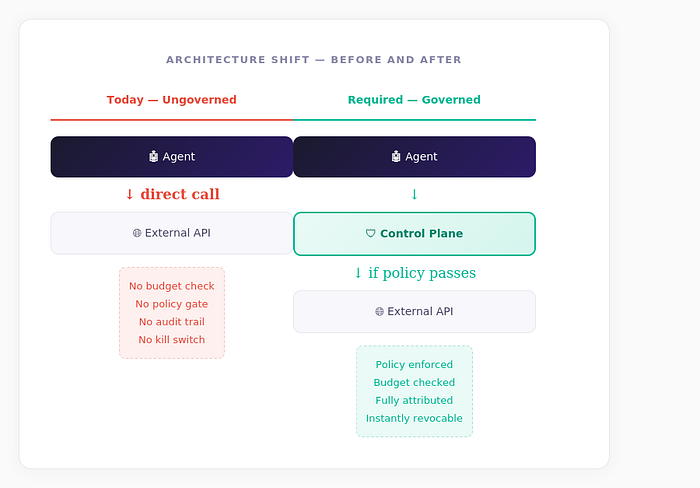

The Missing Primitive: A Control Plane for Agents

In the cloud era, the primitive was infrastructure as code.

In the agent era, it must be: action as a governed transaction.
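One way to read "action as a governed transaction" is: reserve, execute, then commit or roll back. The sketch below shows that flow in Python under stated assumptions. `Budget`, `governed_action`, and the `compensate` callback are hypothetical names, and a real rollback depends on whether the vendor supports reversing the action at all.

```python
from contextlib import contextmanager


class BudgetExceeded(Exception):
    """Raised when an action would exceed the available budget."""


class Budget:
    def __init__(self, limit_cents: int):
        self.limit_cents = limit_cents
        self.committed_cents = 0  # spend from actions that completed
        self.reserved_cents = 0   # holds for actions still in flight

    @property
    def available_cents(self) -> int:
        return self.limit_cents - self.committed_cents - self.reserved_cents


@contextmanager
def governed_action(budget: Budget, cost_cents: int, compensate=None):
    """Reserve budget, run the action, then commit or roll back."""
    if cost_cents > budget.available_cents:
        raise BudgetExceeded(
            f"need {cost_cents}, have {budget.available_cents}"
        )
    budget.reserved_cents += cost_cents  # hold funds before the action runs
    try:
        yield
    except Exception:
        budget.reserved_cents -= cost_cents  # release the hold
        if compensate is not None:
            compensate()  # best-effort undo of any partial side effects
        raise
    else:
        budget.reserved_cents -= cost_cents
        budget.committed_cents += cost_cents  # the spend is now final
```

Wrapping a tool call in `with governed_action(budget, 300, compensate=undo):` means the agent cannot start an action it cannot pay for, and a failed action gives its reservation back instead of silently consuming the budget.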

That control plane should provide two things:

The Hard Truth

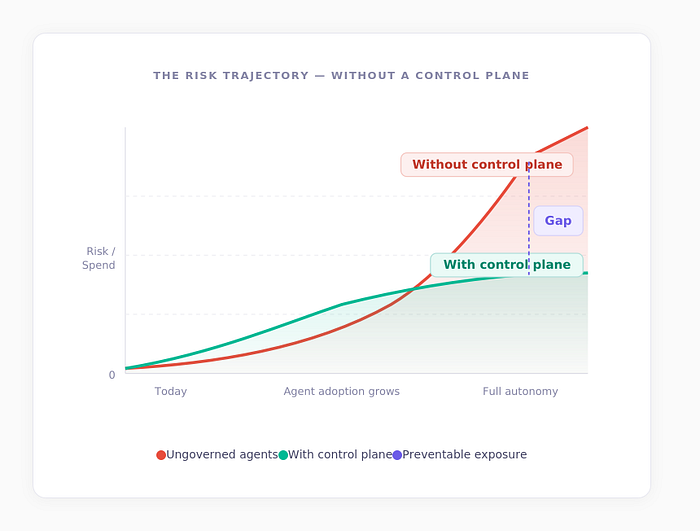

If this layer doesn't exist, governance will be reactive again. And reactive governance is always too late.

The Mistake We're About to Make — Again

Every abstraction wave follows the same path: speed increases, control decreases, spend and risk explode, and governance tools emerge — reactively.

The only difference this time? Agents make autonomous economic decisions.

The First Major Autonomous Incident

The first major "autonomous incident" won't be "The LLM said something weird."

It will be: "An agent executed $X million in unintended transactions."

And we will scramble to build the control layer after. Again.

Autonomy Without Financial Risk

Cloud taught us something profound:

Autonomy without guardrails creates waste. Guardrails without autonomy kill innovation. The winning layer enables both.

Agents will run workflows, trigger transactions, coordinate systems, and move money. The only question is: will they operate with a control plane, or will we wait for the first $500M autonomous headline?

If You're Building With Agents, Ask Yourself

The Bottom Line

If the answer to any of these is no, you're not experimenting with AI.

You're replaying 2014.

Don't Wait for the Headline

We're building the control plane for autonomous agents — policy enforcement, budget controls, and attribution at the tool execution layer. Govern the action, not just the model.