AI writes code faster. Yet business performance remains unstable. That mismatch deserves attention.

Something doesn't add up. Across enterprise IT, a pattern is hard to ignore. Development velocity increases. Delivery timelines remain unstable. Business value realization does not improve proportionally. Engineering throughput has increased. Organizational throughput has not.

After nearly three decades in IT, I have seen this pattern repeat across industries: when delivery stalls, code is rarely the real constraint.

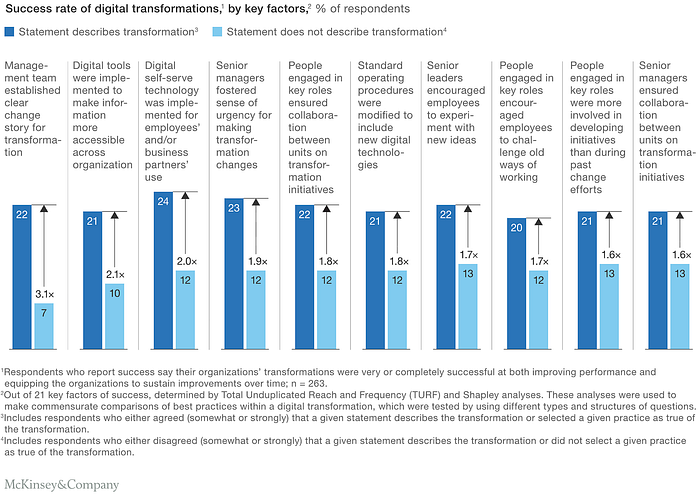

The data is clear

GitHub's controlled study shows developers using Copilot complete tasks ~55% faster.

McKinsey estimates generative AI can improve developer productivity by 20–45%.

AI writes code faster. That is not controversial. But enterprise transformation success rates remain statistically constrained. Only about one-third of large projects deliver fully on time and within budget.

If code production accelerates and delivery does not, the constraint lies elsewhere.

Code is being written faster than ever before, yet the available transformation data suggests that delivery success rates have not improved in proportion to engineering acceleration.

Business success was never about typing speed

A large-scale survey published in Harvard Business Review, drawing on data from thousands of executives and project leaders across industries, indicates that only approximately one-third of projects are delivered on time, within budget, and within scope. Despite decades of methodological evolution, overall success rates have remained statistically constrained.

Even in digitally mature organizations, consistent project success remains difficult. This suggests something important: project success has never been constrained by typing speed. It has always been constrained by organizational structure, management, and process maturity.

Faster code does not mean faster delivery

AI can generate production-level code in seconds. Naturally, we expect delivery timelines to shrink. But that's not what the data shows. Atlassian's State of Teams report, based on survey responses from thousands of knowledge workers and engineering professionals globally, highlights that a substantial portion of developer time is still consumed by meetings, coordination, context switching, integration discussions, and approval cycles.

The bottleneck did not disappear; it moved. Code execution accelerated. Organizational execution did not. And that distinction changes everything.
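A rough way to see why faster coding barely moves delivery is an Amdahl's-law style calculation: only the share of lead time spent writing code gets faster, and everything else stays as it was. The sketch below is illustrative only. The 55% reduction in coding time echoes the Copilot figure cited above, while the coding shares of 20%, 30% and 50% are hypothetical assumptions, not measured values.

```python
def delivery_speedup(coding_share: float, coding_time_reduction: float) -> float:
    """End-to-end speedup when only the coding share of lead time gets faster.

    coding_share: fraction of total lead time spent writing code (assumed).
    coding_time_reduction: fraction by which coding time shrinks (0.55 = 55% faster).
    """
    if not 0.0 <= coding_share <= 1.0:
        raise ValueError("coding_share must be between 0 and 1")
    if not 0.0 <= coding_time_reduction < 1.0:
        raise ValueError("coding_time_reduction must be in [0, 1)")
    # Non-coding work is unchanged; coding work shrinks by the given reduction.
    remaining_time = (1.0 - coding_share) + coding_share * (1.0 - coding_time_reduction)
    return 1.0 / remaining_time


# Hypothetical shares of lead time spent on coding.
for share in (0.2, 0.3, 0.5):
    speedup = delivery_speedup(share, 0.55)
    print(f"coding share {share:.0%}: end-to-end speedup {speedup:.2f}x")
# coding share 20%: end-to-end speedup 1.12x
# coding share 30%: end-to-end speedup 1.20x
# coding share 50%: end-to-end speedup 1.38x
```

Under these assumptions, even a team that spends half of its lead time coding gains well under 1.5x end to end. The rest of the time sits in coordination and approvals, which the tooling does not touch.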

What standards actually define as productivity

Under ISO 9001 (Quality Management), productivity means consistently delivering outputs that meet requirements.

Under ISO/IEC 25010 (the software product quality model), quality includes functional suitability, reliability, performance efficiency, maintainability, and security.

IEEE 730 (software quality assurance) and IEEE 830 (software requirements specifications) link effectiveness to requirements traceability, verification and validation, defect density, and maintainability.

None of them measure productivity in lines of code. They measure outcome integrity.

Code as historical scarcity

There was a time when code was scarce. Engineers studied for years, compilers were breakthroughs, and even punctuation could alter system behavior. Programming required deep theoretical grounding, and access to knowledge was limited.

Today, models generate code in seconds, repositories are public, and learning barriers are dramatically lower. When scarcity disappears, pricing models must evolve. The question is no longer how many lines are written, but who defines the context, checks the quality and ensures integrity.

The narrative layer

In enterprise environments, when velocity stalls, explanations emerge: "oh, it's legacy…", "we inherited this…", "need a gap analysis…" and, my favorite, "it's technical debt…". Anything but a plain "we failed".

Stripe's Developer Coefficient report similarly highlights that productivity bottlenecks often lie outside pure engineering output.

Complex terminology can obscure simple accountability.

When technology improves faster than management

Over years in enterprise IT, one pattern repeats: technology evolves faster than management discipline.

Projects rarely fail because engineers cannot write code. They fail because goals are unclear, accountability is diluted, ownership is fragmented and decisions are justified narratively.

When velocity is measured in narrative rather than in measurable outcomes, acceleration becomes theatre. If code becomes cheaper, the real bottleneck becomes governance.

Capital scale reality

In capital-intensive programs, the pattern becomes even clearer. I have seen multi-hundred-million initiatives stall — not because engineers failed, but because procurement logic, decision opacity, and diluted accountability overwhelmed execution.

Budgets were formally compliant. Controls were technically in place. Delivery still drifted.

When procurement exceeds internal economic benchmarks by multiples yet remains procedurally valid, the failure is not technical. It is governance.

As engineering becomes cheaper, governance inefficiency becomes disproportionately expensive.

The bottleneck has shifted

Historically:

Business velocity ≈ Engineering capacity.

Today:

Engineering capacity ↑, Delivery success ≈ stable.

The constraint migrated upward. Business velocity now depends on:

- governance quality

- alignment

- ownership clarity

- decision discipline

When lower-level constraints compress, higher-level constraints dominate. The bottleneck moved from syntax to structure.
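The shift can be illustrated with a toy pipeline model in which end-to-end throughput is capped by the slowest stage. This is a minimal sketch: the stage names and weekly capacities are hypothetical, chosen only to show the mechanism, and `delivery_throughput` is an illustrative helper rather than an established metric.

```python
def delivery_throughput(stage_capacity: dict[str, float]) -> tuple[str, float]:
    """Return the limiting stage and the throughput it allows (items per week)."""
    bottleneck = min(stage_capacity, key=stage_capacity.get)
    return bottleneck, stage_capacity[bottleneck]


# Hypothetical weekly capacities per stage (work items per week).
before = {"requirements": 6, "coding": 5, "review_and_approval": 7, "release": 8}
# Assumption: AI assistance roughly doubles coding capacity; nothing else changes.
after = {**before, "coding": 11}

print(delivery_throughput(before))  # ('coding', 5)
print(delivery_throughput(after))   # ('requirements', 6)
```

In this toy setup coding capacity more than doubles, yet throughput rises only from 5 to 6 items per week, and the limiting stage is now upstream of engineering.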

Data-driven management vs story-driven management

Clear, measurable outcomes reduce interpretive flexibility: profitable or not, delivered or not, aligned with strategy or not, lower total cost of ownership (TCO) or not.

When decisions are grounded in numbers, storytelling has less space to expand. But metrics alone are insufficient. Metrics require integrity. KPIs are ineffective without aligned incentives and accountable governance structures. Accountability determines whether metrics influence organizational behavior.

If code is no longer scarce

Historically, engineering capacity was scarce. Now AI compresses code generation time dramatically. As code becomes increasingly abundant for typical line-of-business (LOB) applications, alignment speed, accountability, and decision quality become the dominant constraints.

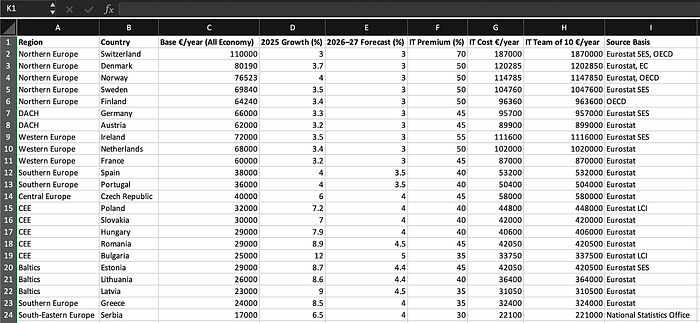

Cost reality

Across OECD and Eurostat labour statistics, engineering cost continues to rise: ICT wages have increased over the last decade despite automation gains. If cost per engineering unit rises while systemic throughput remains stable, pricing logic and value attribution deserve re-evaluation.

Most enterprise software is not a moonshot. It follows a simple structural pattern: get the data, transform it, and display it. Input, processing, output. The complexity rarely lies in typing code.

It lies within:

- agreeing on which data matters (private, sensitive, etc.)

- defining transformation correctly

- aligning stakeholders

- integrating with existing systems, third parties, and APIs

- ensuring security, compliance and traceability

AI can generate the transformation logic faster. It can scaffold APIs and produce UI templates. But it cannot resolve conflicting stakeholder expectations or unclear ownership, and it cannot enforce governance discipline. When the bottleneck is management, generating more code simply increases the volume of unmanaged complexity.

I have seen this up close

In one large-scale environment, we reduced operational load from 16 engineers to 1.5 FTEs while maintaining service continuity.

Not by writing more code. Not by adding more tools. But by restructuring governance, clarifying ownership, and eliminating decision ambiguity. The technical stack barely changed. The management structure did.

That shift created more value than any refactoring effort.

Final view

AI accelerates code. It does not accelerate responsibility.

If velocity increases but results do not, the bottleneck has moved: from developers to decision-makers. If code is no longer scarce, manageability becomes the edge.

And that is where value lives.