Running a stranger's code on your own server is the developer equivalent of handing someone your keys and saying "don't touch anything." But that's exactly what every LeetCode, Codeforces, and AI evaluation pipeline does, millions of times a day.

I built one from scratch. Here's what I learned.

The Problem Nobody Talks About

Anyone can spin up a sandbox that runs "Hello World." The hard part is what happens when a user submits this:

    import os

    while True:
        os.fork()

A fork bomb. In 3 seconds, your server is dead. Every other user's request? Dead too.

This is the real engineering challenge of a Remote Code Execution (RCE) engine — not the happy path, but surviving the attacks, the accidents, and the edge cases that make production systems fail.

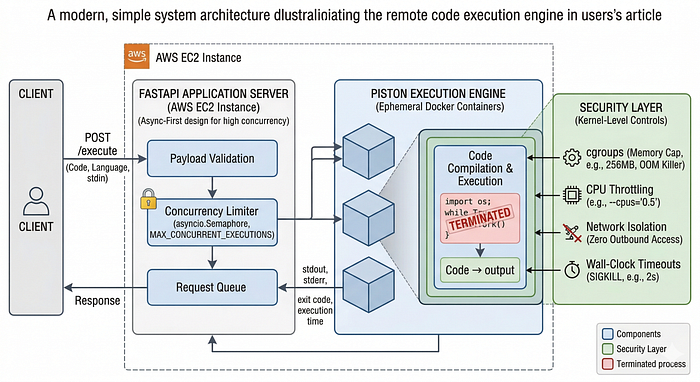

The Stack I Chose (And Why)

FastAPI — Because Blocking is a Death Sentence

From the API layer's point of view, an RCE engine is I/O-bound by nature. Every request sits and waits while a container spins up, code compiles, and output streams back. If you're using a synchronous framework for this, every waiting request pins a worker that is doing nothing, and most of your server's capacity is left on the table.

FastAPI's async-first design means a single worker can juggle hundreds of in-flight executions simultaneously. It doesn't wait. It just… handles the next thing.
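To make that concrete, here's a minimal sketch using only the standard library's asyncio, which is the same event-loop model FastAPI's async handlers run on. The `fake_execution` coroutine is a hypothetical stand-in for "wait on a container"; it is not part of FastAPI or Piston.

```python
import asyncio
import time


async def fake_execution(job_id: int, wait_seconds: float = 0.1) -> int:
    # Hypothetical stand-in for "container starts, code runs, output streams back".
    # asyncio.sleep hands control back to the event loop, like awaiting a network call.
    await asyncio.sleep(wait_seconds)
    return job_id


async def run_many(n: int) -> tuple[list[int], float]:
    # Launch n waits concurrently on a single event loop and time the whole batch.
    start = time.perf_counter()
    results = await asyncio.gather(*(fake_execution(i) for i in range(n)))
    return list(results), time.perf_counter() - start


if __name__ == "__main__":
    results, elapsed = asyncio.run(run_many(200))
    print(f"{len(results)} executions finished in {elapsed:.2f}s")
```

Because every wait overlaps, the batch should take roughly as long as one wait rather than 200 of them. A blocking call in the same spot (say, `time.sleep`) would serialize everything again, which is the failure mode the async design avoids.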

Piston — The Secret Weapon

Writing custom execution scripts for every language is a trap. You'll spend 3 weeks getting the Java classpath right and still have broken Go support.

Piston is a battle-tested code execution engine that ships pre-configured Docker images for 50+ languages. You POST code, you GET output. It handles the gnarly internals — compilation, runtime setup, process management — so you can focus on what actually matters: the security layer around it.
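For a feel of what "POST code, GET output" looks like, here's a hedged sketch of a client. The field names follow Piston's v2 execute endpoint as I understand it; the URL, port, and runtime version below are assumptions about a self-hosted setup, so check them against the instance you actually deploy.

```python
import json
import urllib.request

# Assumption: a self-hosted Piston instance reachable at this address.
PISTON_URL = "http://localhost:2000/api/v2/execute"


def build_payload(language: str, version: str, code: str, stdin: str = "") -> dict:
    # One source file plus optional stdin; the version must match an installed runtime.
    return {
        "language": language,
        "version": version,
        "files": [{"content": code}],
        "stdin": stdin,
    }


def execute(payload: dict, url: str = PISTON_URL, timeout: float = 10.0) -> dict:
    # POST the JSON payload and decode the JSON response body.
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    # Only prints the payload; call execute(payload) once a Piston instance is running.
    payload = build_payload("python", "3.10.0", "print('hi')")
    print(json.dumps(payload, indent=2))
```

With a running instance, `execute(payload)` should return a JSON object whose `run` field carries the program's stdout, stderr, and exit code. In the real service this call would use an async HTTP client so it doesn't block the event loop; `urllib` is used here only to keep the sketch dependency-free.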

Docker — Not Just for Deployment

Most developers think of Docker as a deployment tool. In an RCE engine, it's your security model.

Every code submission gets its own ephemeral, isolated container. When execution ends, the container is destroyed. No state. No persistence. No escape route.

AWS EC2 — Dedicated Compute for a Reason

You cannot run an RCE engine on serverless. Lambda's cold starts, memory limits, and lack of kernel access make it a non-starter. You need a real machine you control end-to-end.

A dedicated EC2 instance gives you direct access to the host OS — which is exactly what you need to configure kernel-level security controls.

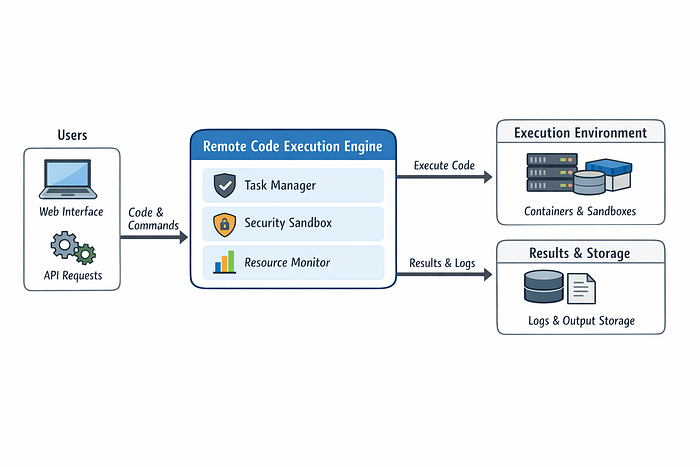

The Security Architecture (This is the Important Part)

Here's the brutal truth: Docker alone is not enough. A container gives you process isolation. It does not save you from a well-crafted resource exhaustion attack.

Here's how I locked it down:

1. cgroups — Killing Memory Hogs at the Kernel Level

Linux control groups (cgroups) let you hard-cap the RAM available to any process tree. If user code tries to allocate a 4GB array inside a container with a 256MB limit, the kernel's Out-of-Memory (OOM) killer steps in and terminates the process instantly.

No gradual degradation. No swapping. Instant death.

    docker run --memory="256m" --memory-swap="256m" ...

2. CPU Throttling — Because an Infinite Loop Shouldn't Brick the Server

    docker run --cpus="0.5" ...

CPU-intensive infinite loops are a classic attack vector. By throttling each container to a fraction of available CPU, a runaway process can't starve the FastAPI router or degrade concurrent executions. The loop spins harmlessly in its corner while everything else keeps working.

3. Network Isolation — Your Server Is Not a DDoS Proxy

By default, execution containers have zero outbound network access. User code cannot:

- Make HTTP requests to external services

- Exfiltrate data from your internal network

- Use your compute as a DDoS amplification node

This single rule eliminates an entire category of attack.
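The three limits so far (memory, CPU, network) can be collected in one place. Here's a minimal sketch that assembles a `docker run` command line, assuming you were launching containers yourself rather than letting Piston do it. The function name, image, and defaults are hypothetical. The `--pids-limit` flag is my addition and not something described above: it is Docker's cap on process count, the usual guard against the fork bomb from the intro.

```python
import shlex


def build_docker_run(
    image: str,
    command: list[str],
    memory: str = "256m",
    cpus: str = "0.5",
    pids_limit: int = 64,  # assumption: not from the article, see lead-in
) -> list[str]:
    # Returns an argv list suitable for subprocess; nothing is executed here.
    return [
        "docker", "run",
        "--rm",                       # remove the container when it exits
        f"--memory={memory}",         # hard RAM cap enforced through cgroups
        f"--memory-swap={memory}",    # same value as --memory, so no swap on top
        f"--cpus={cpus}",             # CPU throttle
        "--network=none",             # no network interfaces except loopback
        f"--pids-limit={pids_limit}", # cap on the number of processes
        image,
        *command,
    ]


if __name__ == "__main__":
    argv = build_docker_run("python:3.12-slim", ["python", "-c", "print('hi')"])
    print(shlex.join(argv))
```

Building the command as a list rather than a string keeps user-controlled values out of shell parsing, which matters when the whole point of the system is running hostile input.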

4. Wall-Clock Timeouts — SIGKILL, Not Polite Requests

Piston enforces hard execution timeouts. When the clock hits zero, the process doesn't receive a gentle SIGTERM — it gets a SIGKILL. No cleanup hooks. No graceful shutdown. Terminated.

| Language | Timeout |
| --- | --- |
| C++ | 1 second |
| Python | 2 seconds |
| Java | 3 seconds |
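To show the kill-on-deadline idea in isolation, here's a small sketch using Python's `subprocess` module. It is not how Piston implements its timeouts; it just illustrates a wall-clock limit followed by a hard kill. `TIMEOUTS` mirrors the per-language values above, and `run_with_deadline` and `RunResult` are hypothetical names.

```python
import subprocess
import sys
from dataclasses import dataclass

# Per-language wall-clock limits in seconds, mirroring the values above.
TIMEOUTS = {"cpp": 1.0, "python": 2.0, "java": 3.0}


@dataclass
class RunResult:
    stdout: str
    timed_out: bool
    returncode: int


def run_with_deadline(argv: list[str], timeout: float) -> RunResult:
    proc = subprocess.Popen(
        argv, stdout=subprocess.PIPE, stderr=subprocess.STDOUT, text=True
    )
    try:
        stdout, _ = proc.communicate(timeout=timeout)
        return RunResult(stdout, False, proc.returncode)
    except subprocess.TimeoutExpired:
        # Popen.kill() sends SIGKILL on POSIX: no handlers run, no cleanup hooks.
        proc.kill()
        stdout, _ = proc.communicate()  # reap the process and drain its output
        return RunResult(stdout, True, proc.returncode)


if __name__ == "__main__":
    spin = [sys.executable, "-c", "while True: pass"]
    result = run_with_deadline(spin, TIMEOUTS["python"])
    print("timed out:", result.timed_out)
```

One caveat: this only kills the direct child. A real sandbox also has to take down any descendants the code spawned, which is one more reason to let the container boundary do the killing.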

The Part That Actually Broke Everything

The hardest problem wasn't security. It was concurrency tuning.

When traffic spiked, I let too many Docker containers spin up simultaneously. Each container initialization requires disk I/O — pulling layers, setting up the filesystem, mounting volumes. When 50 of these happen in parallel on a single instance, I/O saturation is inevitable.

The result: cascading timeouts. Every active request starts failing. The queue backs up. Users see errors. The whole system looks down even though it's technically running.

The fix was a concurrency limiter — a simple queue that caps simultaneous active containers at a tuned threshold. Requests that exceed the limit wait in line rather than competing for I/O bandwidth.

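    import asyncio

    # Cap on simultaneous executions; tune it for your instance
    semaphore = asyncio.Semaphore(MAX_CONCURRENT_EXECUTIONS)

    async def execute(code, language):
        # Requests beyond the cap wait here instead of competing for disk I/O
        async with semaphore:
            return await run_in_piston(code, language)

One asyncio.Semaphore. Entire class of failure eliminated.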
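If you want to convince yourself the cap holds, here's a self-contained sketch that swaps the real Piston call for a stub and records peak concurrency. The stub, the `Tracker` class, and the limit of 4 are all hypothetical; the semaphore is created inside the running coroutine so the sketch also behaves on older Python versions.

```python
import asyncio

MAX_CONCURRENT_EXECUTIONS = 4  # hypothetical value; tune against real I/O behaviour


class Tracker:
    """Counts how many executions are in flight and remembers the peak."""

    def __init__(self) -> None:
        self.active = 0
        self.peak = 0


async def run_in_piston_stub(tracker: Tracker, code: str, language: str) -> str:
    # Stand-in for the real Piston call: bump the counter, wait, then release.
    tracker.active += 1
    tracker.peak = max(tracker.peak, tracker.active)
    try:
        await asyncio.sleep(0.01)
        return f"ran {language}"
    finally:
        tracker.active -= 1


async def simulate(n_requests: int, limit: int = MAX_CONCURRENT_EXECUTIONS) -> int:
    semaphore = asyncio.Semaphore(limit)
    tracker = Tracker()

    async def execute(code: str, language: str) -> str:
        async with semaphore:  # at most `limit` coroutines get past this line
            return await run_in_piston_stub(tracker, code, language)

    await asyncio.gather(*(execute("print(1)", "python") for _ in range(n_requests)))
    return tracker.peak


if __name__ == "__main__":
    print("peak concurrency:", asyncio.run(simulate(50)))
```

Fifty requests go in, but the peak never exceeds the limit. Everything else waits in line inside the semaphore, which is the behaviour you want under a traffic spike.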

The Request Lifecycle, End to End

Client POST /execute

↓

FastAPI validates payload (language, code, stdin)

↓

Semaphore check — are we under the concurrency limit?

↓

Piston spins up ephemeral container

↓

Code compiles + executes (sandboxed, resource-capped)

↓

Container destroyed on completion or timeout

↓

stdout + stderr + exit code + execution time → Client

No shared state between executions. No container reuse. Every run is completely isolated.
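The first step of that lifecycle, payload validation, is cheap to get right and worth doing before anything touches a container. A minimal sketch, assuming the three fields named above (language, code, stdin); the allowed-language set and size limit are hypothetical, and in a FastAPI app this would more naturally be a Pydantic model.

```python
from dataclasses import dataclass

SUPPORTED_LANGUAGES = {"cpp", "python", "java"}  # assumption: whatever you have installed
MAX_CODE_BYTES = 64 * 1024                       # assumption: an arbitrary size cap


@dataclass(frozen=True)
class ExecuteRequest:
    language: str
    code: str
    stdin: str = ""


def validate(payload: dict) -> ExecuteRequest:
    # Reject bad input early, before it costs a queue slot or a container.
    language = payload.get("language")
    code = payload.get("code")
    stdin = payload.get("stdin", "")
    if not isinstance(language, str) or language not in SUPPORTED_LANGUAGES:
        raise ValueError(f"unsupported language: {language!r}")
    if not isinstance(code, str) or not code.strip():
        raise ValueError("code must be a non-empty string")
    if len(code.encode("utf-8")) > MAX_CODE_BYTES:
        raise ValueError("code exceeds size limit")
    if not isinstance(stdin, str):
        raise ValueError("stdin must be a string")
    return ExecuteRequest(language, code, stdin)


if __name__ == "__main__":
    print(validate({"language": "python", "code": "print('hi')"}))
```

Rejecting oversized or malformed submissions here means they never reach the semaphore, so junk traffic can't crowd out legitimate executions.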

What's Next: From One EC2 to Kubernetes

A single EC2 instance has a ceiling. As submission volume grows, the next step is horizontal autoscaling: adding workers based on real-time queue depth.

Kubernetes with a Horizontal Pod Autoscaler (HPA) driven by a custom queue-depth metric, with a cluster autoscaler adding nodes underneath when pods no longer fit, means:

- 10 concurrent users → 1 worker pod

- 10,000 concurrent users → autoscaled fleet

- Traffic drops → idle workers terminate automatically

The architecture is already designed for this. The FastAPI layer is stateless. The execution model is stateless. The only migration required is moving the queue from an in-process semaphore to a distributed queue like Redis or SQS.
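The scaling decision itself is simple arithmetic. As a rough sketch of what a queue-driven autoscaler computes: divide the queue depth by the number of jobs you want each worker to handle, round up, and clamp. The function name, target, and bounds below are hypothetical; a real HPA adds tolerances and stabilization windows on top of this.

```python
import math


def desired_workers(
    queue_depth: int,
    target_per_worker: int,
    min_workers: int = 1,
    max_workers: int = 50,
) -> int:
    # ceil(queue depth / per-worker target), clamped to the allowed range.
    if target_per_worker <= 0:
        raise ValueError("target_per_worker must be positive")
    wanted = math.ceil(queue_depth / target_per_worker)
    return max(min_workers, min(max_workers, wanted))


if __name__ == "__main__":
    for depth in (0, 10, 45, 10_000):
        print(depth, "->", desired_workers(depth, target_per_worker=20))
```

The lower bound keeps one worker warm when the queue is empty, and the upper bound stops a traffic spike (or an attack) from turning into an unbounded cloud bill.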

Building something similar or have questions about the architecture? Drop a comment — happy to dig deeper on any of these systems.