A lecturer at my university gave the class an assignment to be submitted in three days. As expected, he warned against plagiarism and copying off others. He also strongly advised against using AI software like chat gpt to write the assignment.

"I know all your tricks because I was a student myself. If you lift this assignment from chat gpt or Meta, I will know."

For some reason I believed him —he's in his early thirties so he's very much in tune with modern technology. I decided to jettison my plans to be lazy and just go the hard way.

My friend, on the other hand, was still adamant. He had a foolproof masterplan to bypass the prying eyes of a man who, from all indications, had all the time in the world to go through each submission with a fine-toothed comb. You want to know what it was?

"I'll just ask chat gpt to make the story more human and less like AI."

(Cue the Tony Stark eye roll gif —please tell me you get the reference)

Never in my life had I witnessed such dumbassry. Over two decades spent on earth and the best his brain could conjure up was to essentially tell chat gpt to not be chat gpt?

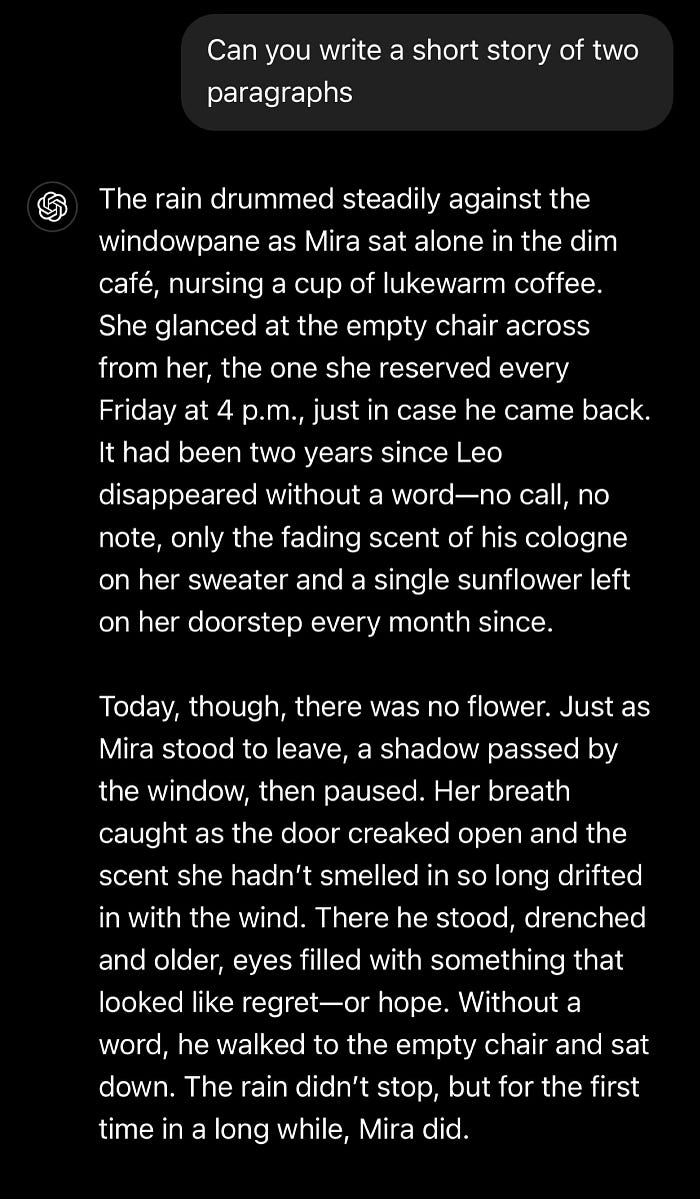

Okay, here's an example of chat gpt struggling to be a human being. I prompted it to write a two-paragraph story out of nothing, and this was the result.

Here we have the obvious idiosyncrasies of chat gpt at full blast. The wordiness, the creative elaboration, the futile attempts to be deep; I mean look at that last sentence.

"The rain didn't stop, but for the first time in a long while, Mira did." Ooohhh so deep, what the fuck does the sentence even mean?

If you have the slightest bit of experience with writing, reading, and generative AI, the first sentence of that story should be a dead giveaway that whoever, or whatever, is behind it may or may not pass a reCAPTCHA test. In other words, it's clear chat gpt wrote it.

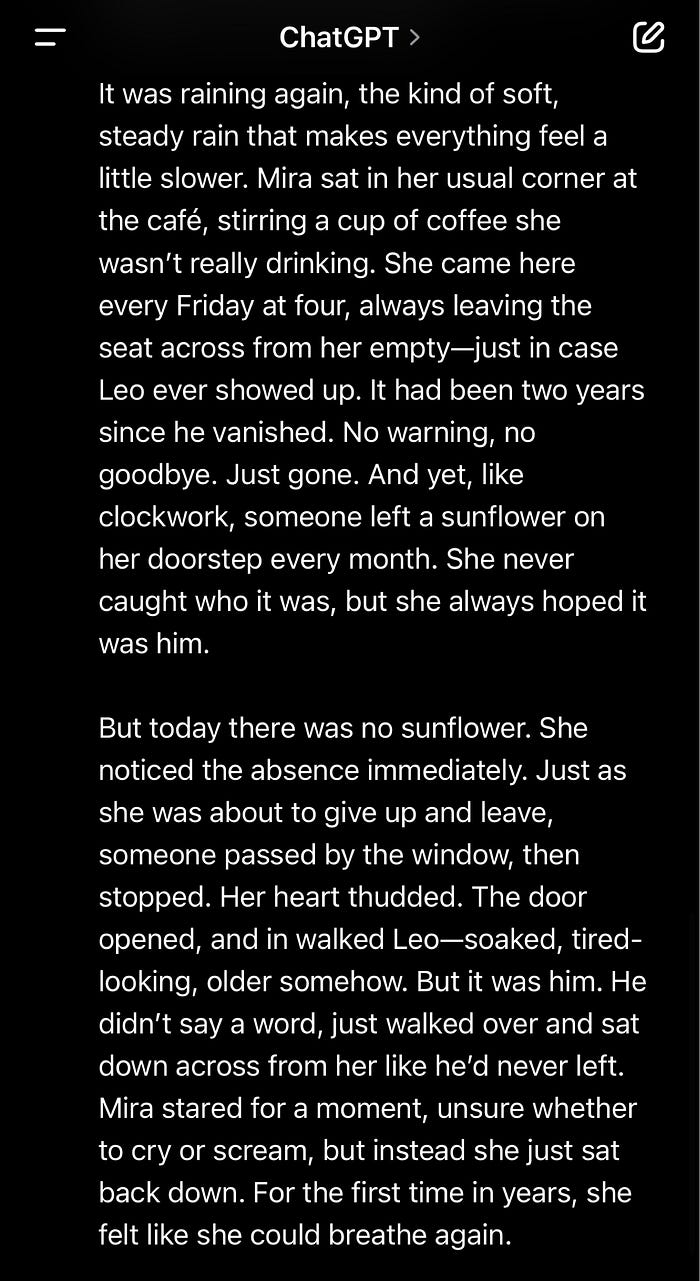

I decided to try my friend's idea and prompt it to be more human. It worked out as well as you could expect.

You ever watch those movies where the alien guy shape-shifts into a person and needs to blend in, after which he pretty much googles "human stuff" so he can understand how humans behave?

He then gets the keywords but, due to a lack of context and nuance, starts talking like he's got a stick up his ass.

This is exactly what you get when you try to make AI sound more human. You get overcompensation, like a guy puffing his chest, trying to walk through a rough neighborhood like a tough guy so he doesn't get robbed.

You don't get normal human behavior, you get what chat gpt thinks a human being acts like. You get cliches, you get attempts. I hope you get the point I'm trying to make. Look at the first sentence of the second story.

"It was raining again, the kind of soft steady rain that makes everything feel a little slower."

The part after the comma is already a dead giveaway for me. I see that and my alarm bells start ringing. If I were writing that, I'd just say "It was raining softly again." My readers don't need to be spoon-fed. They'll understand the point I'm trying to make.

You simply cannot prompt your way out of sounding artificial when you use chat gpt because the giveaways are numerous. There are the obvious ones most people can spot, and there are subtler ones that can only be detected by people with trained eyes, like writers, academics, journalists, and HR personnel.

I'm not kidding about the last part. Almost half of AI-generated resumes are dismissed by hiring managers. There are a few red flags that they're trained to look out for, and believe me when I say one of them is if your resume is

1. Too damn polished

It sounds weird, but it actually raises their eyebrows when you turn in a resume that's cleaner than an operating table. What's even weirder is that they've recently developed a preference for a poorly written human resume over a spotless one written by AI.

Minor grammatical errors and typos signify human authenticity. As a reader rightly pointed out, this isn't an encouragement to submit sloppy resumes because they make you sound human. I am not advising you to; I'm only pointing out what research carried out on hiring managers has found.

This also applies outside the corporate system and is one of the ways I, or pretty much anyone else with experience, can tell your story was written by chat gpt.

I maintain that unless you're in a formal setting, or English isn't your primary language, you don't have to overdose on Grammarly. Also, if you fall outside those two categories and Grammarly consistently scores your stories at an average of 65% whenever you paste them in, you don't necessarily have to go through the trouble every time.

65% and above means your core message is clear, but there's still room for improvement. You can choose to be a perfectionist if you want but I'm okay with a little bit of typos and errors here and there. It makes me human —it makes us humans.

We have our quirks and flaws in the way we write and express ourselves. Some of us misuse punctuation, some write longer paragraphs than necessary because they're trying to fit everything into one thought, and the list goes on.

Chat gpt isn't designed like this. It can never be designed like this because it would hurt its makers' reputation. As a result, stories written by chat gpt turn out oversanitized.

Note: some of these signs may not mean much in isolation, only in combination with others. For example, a clinically polished piece of content is not, on its own, a guarantee that it was written by AI. Some people are just perfectionists.

It needs to be combined with other factors, which I'll

2. Delve into

Chat gpt is notorious for always delving into things. I don't know who taught it that humans talk like that. I have literally never met anyone who said "let's delve into this…"

There are some words and phrases that chat gpt can't help but use whenever it's conjured. For example:

Delve, vibrant, comprehensive, pivotal, notably, realm, landscape, tapestry, embark.

The most notable phrases include:

Dive into

Delve into

Navigating the

Delving into the intricacies of, etc.

These are not complete giveaways but they do set off alarm bells.
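For anyone who wants to run this check on a draft, here is a rough Python sketch that counts how often the words and phrases listed above show up in a piece of text. The function name `count_tells` and the two list names are made up for illustration, the phrase list uses the shortened form "the intricacies of", and the matching is a plain case-insensitive whole-word search, so "realms" would not count as "realm". Treat the numbers as alarm bells, not a verdict.

```python
import re
from collections import Counter

# Words and phrases taken from the lists above (illustrative, not exhaustive).
TELL_WORDS = ["delve", "vibrant", "comprehensive", "pivotal", "notably",
              "realm", "landscape", "tapestry", "embark"]
TELL_PHRASES = ["dive into", "delve into", "navigating the", "the intricacies of"]


def count_tells(text: str) -> Counter:
    """Count whole-word, case-insensitive hits for each listed word or phrase."""
    lowered = text.lower()
    counts = Counter()
    for term in TELL_WORDS + TELL_PHRASES:
        # \b anchors the match at word boundaries on both sides.
        pattern = r"\b" + re.escape(term) + r"\b"
        hits = len(re.findall(pattern, lowered))
        if hits:
            counts[term] = hits
    return counts


if __name__ == "__main__":
    sample = "Let's delve into the vibrant landscape of modern AI."
    for term, hits in count_tells(sample).most_common():
        print(f"{term}: {hits}")
```

Note that a phrase and the word inside it are counted separately, so "delve into" adds one to both "delve" and "delve into".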

Chat gpt has gotten a lot smarter and you should see these tells fade away with subsequent updates.

3. Never in the first person

I started this story in the first person narrative. It's quite normal for people to switch to different narrative styles when they're trying to get their point across.

"Chat gpt has gotten a lot smarter and you should see these tells fade away with subsequent updates. I remember trying hard to keep up with their updates and innovations"

This statement flows from second person to first person. Chat gpt can barely do this. It maintains one perspective throughout. Take a look at the monstrosity that ensued when I asked it to seamlessly switch between first, second, and third person perspectives.

The transitions went as well as a toddler's attempt at frosting a birthday cake. There's no problem if you're writing a formal piece, but aside from that, humans typically don't maintain the same perspective throughout their stories because they'll branch off a bit.

Chat gpt doesn't do this, but there is something it does far too often, and that's the

4. Em dash

Aside from chat gpt, another serious and serial offender is Grok AI. I'm not kidding, I literally had to tell it to knock it off because the dashes were excessive.

I'm quite lenient with this because I happen to love em dashes so much. You can probably tell because I've used them several times in this story.

However, I do not use them incessantly like chat gpt and the like. There's nothing human about riddling your story with lots of dashes; there's only so many times you can use one before it loses its impact.

Also, if it's used too much, it disrupts the flow of reading and makes your story appear disjointed. Real writers know this and use it sparingly —chat gpt does not. See what I did there?
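If you'd rather measure this than eyeball it, a minimal Python sketch like the one below counts em dashes per 1,000 words. The function name `em_dashes_per_1000_words` is hypothetical, words are simply whatever `str.split()` returns, and no cutoff is suggested here: what counts as "too many" is still a judgment call for the reader.

```python
EM_DASH = "\u2014"  # the em dash character, written as an escape for clarity


def em_dashes_per_1000_words(text: str) -> float:
    """Return the number of em dashes per 1,000 whitespace-separated words."""
    word_count = len(text.split())
    if word_count == 0:
        return 0.0  # avoid dividing by zero on empty input
    return text.count(EM_DASH) * 1000 / word_count


if __name__ == "__main__":
    draft = "Real writers know this and use it sparingly \u2014chat gpt does not."
    print(round(em_dashes_per_1000_words(draft), 1))
```

Comparing the figure for your own draft against a suspect piece is more telling than either number on its own.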

5. Chat gpt is too anodyne

Chat gpt doesn't have a stance. It always tries to be diplomatic and placate both sides. If I walk the streets of the bluest town in New York and ask a hardcore Democrat about the scandals of the Republican Party in modern history, I'll predictably get the most partisan and biased response imaginable.

There's nothing wrong here. We have our biases and our leanings, which affect our judgement.

Once the Democrat is done with me, I'd think the Republican Party was responsible for the Holocaust if I didn't know any better. Okay, that was an exaggeration. This whole thing also applies vice versa.

And then there's chat gpt, too scared to step on anyone's toes. Here's what happened when I punched this same issue into the chat box.

Look at the summary. I never asked about the Democratic Party at all. I also already know that scandals are not exclusive to one party; I never asked for that info in the first place. Oh, and lastly, I didn't ask for a similar list for the Democratic Party.

It assumes this on-the-fence position in cases like this. I mean, it's only fair to learn about the scumminess of the Democratic Party while I'm learning about the Republican Party, right? The problem is that I didn't ask for it.

To be fair, it's ultimately working in the interest of its shareholders. It makes zero economic sense to alienate a section of its consumers by picking a side or even appearing to be biased.

This is why it strives to be nuanced. Humans, however, struggle to be nuanced because it's more mentally demanding. It also poses the risk of rupturing that echo chamber they've spent years, probably their whole lives, crafting.

This is why people avoid nuance; heck, the brain even actively fights it through confirmation bias.

TLDR: Stories written by chat gpt tend to not have a definite stance. They actually make attempts at appealing to the sensibilities of whoever is reading.

Conclusion

A few of the things I mentioned above may stop applying before long because the developers are constantly making tweaks to their systems in response to public feedback.

For example, as of writing this, I went back to Grok AI to check on the problem with the em dashes and noticed they had reduced a bit. Still there, but not as bad as it was about a week ago.

They can tweak all they like but there'll always be tells. Chat gpt is, at best, a brilliant mimic and not an artist.

While we write to persuade, entertain, provoke, educate, and heal, chat gpt writes strictly to follow orders. It doesn't have a dog in the fight, skin in the game, or a personal reputation to protect because it's not alive.

No matter how many updates or point xyz they attach to the end of their software version, it's still going to have that hollow and generic feel, especially on emotionally charged topics.

It so happens that I can spot all of this a mile away.

This article was published on May 16th, 2025 in Long. Sweet. Valuable. publication.