Over the past two years, a peculiar belief has quietly taken hold in the AI community: that the future of intelligence lies in building agents. Everywhere you look, engineers are creating RAG pipelines, chaining tools together, wrapping large language models with orchestration frameworks, and calling the result "AI systems."

It looks impressive. It feels productive. It satisfies the engineering instinct to build.

But here is the uncomfortable truth: most AI agents are not progress. They are decoration.

They do not meaningfully extend intelligence. They do not create new economic value. And they rarely survive the next generation of base models. In most cases, building AI agents is not a step toward the future of intelligence — it is a temporary distraction from understanding what intelligence actually is.

This is not a technical argument. It is a structural one.

A Simple Mathematical Reality

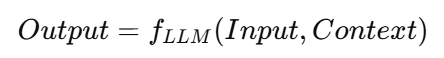

At its core, a large language model is a function approximator:
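One common way to write this, using notation assumed here for illustration (the symbols $f_\theta$, $x$, and $y$ are not the author's):

$$
y = f_\theta(x)
$$

where $x$ is the input (the prompt plus any supplied context), $\theta$ is the set of parameters fixed at training time, and $y$ is the generated output.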

Everything an LLM produces is a transformation of input signals and its learned internal representation. It does not invent information ex nihilo. It rearranges, compresses, and extrapolates.

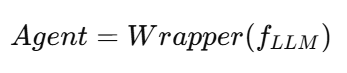

An AI agent is merely:
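As a sketch in assumed notation (the symbols below are illustrative, not the author's), with $f_\theta$ standing for the base model:

$$
\text{agent}(x) = f_\theta\big(g(x, m, r, t)\big)
$$

Here $g$ is ordinary program logic that assembles the model's input from the request $x$, stored memory $m$, retrieved documents $r$, and tool results $t$. A real agent may call $f_\theta$ several times in a loop, but every call goes through the same fixed $f_\theta$.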

You add memory. You add retrieval. You add tools. You add workflow logic.

But structurally, you have not created a new intelligence. You have created a wrapper around an existing one.
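To make the wrapper idea concrete, here is a minimal Python sketch. Every name in it (`make_agent`, `stub_llm`, the `tool: argument` request convention) is hypothetical and chosen for illustration; it is not any framework's API. The stub stands in for a real model so the example runs offline.

```python
from typing import Callable, Dict, List

# Hypothetical type alias: any function mapping a prompt string to a completion.
LLM = Callable[[str], str]


def make_agent(llm: LLM, tools: Dict[str, Callable[[str], str]]) -> Callable[[str], str]:
    """Return an 'agent': plain glue code around one fixed llm callable."""
    memory: List[str] = []  # conversation history kept outside the model

    def agent(request: str) -> str:
        # Workflow logic: run a tool when the request looks like "tool: argument".
        name, sep, arg = request.partition(":")
        tool_output = ""
        if sep and name.strip() in tools:
            tool_output = tools[name.strip()](arg.strip())

        # Assemble the prompt from memory, tool output, and the request.
        prompt = "\n".join(memory + [f"TOOL: {tool_output}", f"USER: {request}"])
        answer = llm(prompt)  # the only call into the model
        memory.append(f"USER: {request}")
        memory.append(f"ASSISTANT: {answer}")
        return answer

    return agent


def stub_llm(prompt: str) -> str:
    """Stand-in for a real model: reports how many prompt lines it received."""
    return f"[{len(prompt.splitlines())} prompt lines seen]"


agent = make_agent(stub_llm, {"upper": str.upper})
first = agent("upper: hello")
second = agent("what did I ask?")
```

Everything outside the single `llm(prompt)` call is string handling and bookkeeping. Passing a different `llm` into `make_agent` changes every answer without touching the glue code.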

This matters because wrappers are economically fragile. Any improvement in the base model instantly erodes their value. When the LLM becomes better at reasoning, memory, or long-context processing, most agent logic becomes redundant overnight.

If your system can be replaced by a larger context window and a better prompt, you do not own a product. You own a temporary inconvenience.

Agents Do Not Increase Information

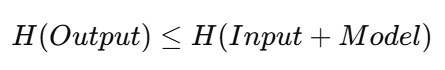

From an information-theoretic perspective, agents face an even harsher constraint.

A basic result of Shannon's information theory, the data processing inequality, says that processing a signal cannot increase the information it carries about its source. An agent does not add information to the world; it only redistributes it:
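The closest standard formal statement is the data processing inequality. As a sketch, where mapping $X$, $Y$, and $Z$ onto an agent is an assumption made for illustration: if $X \to Y \to Z$ form a Markov chain, meaning $Z$ depends on $X$ only through $Y$, then

$$
I(X; Z) \le I(X; Y)
$$

Read $X$ as the underlying facts, $Y$ as the documents and model weights that encode them, and $Z$ as the agent's output. No processing of $Y$ can make $Z$ more informative about $X$ than $Y$ already is.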

Your RAG pipeline does not introduce new truth. It only reshuffles existing information between documents, embeddings, prompts, and tokens.

This means agents are not intelligence multipliers. They are interface rearrangements.

They change ergonomics, not cognition.

They improve convenience, not understanding.

Why RAG Is a Transitional Technology

Retrieval-Augmented Generation feels powerful today because base models still have limits:

- Context length is finite

- Training data is frozen

- Hallucinations still occur

- Domain memory is imperfect

RAG compensates by injecting external memory.
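The injection step can be sketched in a few lines of Python. This is a toy under stated assumptions: word overlap is swapped in for the embedding similarity a real pipeline would use, the function names are hypothetical, and the two documents are made-up sample data.

```python
from typing import List


def tokenize(text: str) -> set:
    """Lowercase word set; a crude stand-in for an embedding."""
    return {word.strip(".,?!").lower() for word in text.split()}


def retrieve(query: str, documents: List[str], k: int = 1) -> List[str]:
    """Return the k documents sharing the most words with the query."""
    query_words = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, documents: List[str], k: int = 1) -> str:
    """Place retrieved text ahead of the question (the 'external memory')."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


# Made-up sample documents for illustration only.
docs = [
    "The warranty period for model X is 24 months.",
    "Office hours are 9 to 5 on weekdays.",
]
prompt = build_prompt("What is the warranty period for model X?", docs)
```

The model itself is untouched here. All the pipeline does is decide which existing text lands in the prompt.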

But this advantage is temporary.

As LLMs absorb:

- Larger contexts

- Live knowledge integration

- Better internal memory compression

RAG becomes what CD-ROMs became to cloud storage: a workaround that only made sense during a technical transition.

RAG is not the future. It is the scar tissue of current limitations.

Agents Reduce Intelligence for Experts

This is the most misunderstood part.

Agents appear helpful, but for professionals they are actually regressive. They trade freedom for structure.

An agent predefines:

- Which questions can be asked

- Which paths are allowed

- Which tools are permitted

- Which outputs are expected

That is useful for beginners. It is dangerous for experts.

A lawyer does not think in workflows. A mathematician does not reason in pipelines. A scientist does not explore truth through menu-driven cognition.

Great thinking is non-linear, improvisational, and context-sensitive. Agents freeze cognition into templates.

They convert intelligence into bureaucracy.

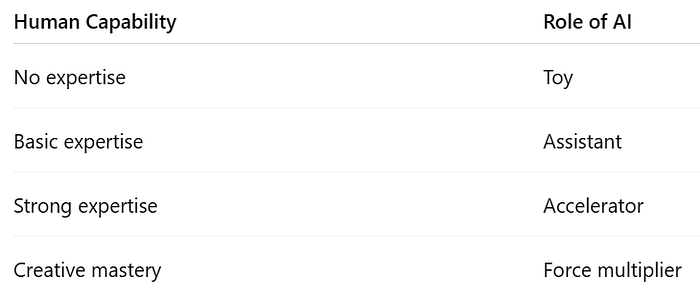

The Fundamental Value Equation

The true equation of AI is not:

It is:
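Taking the multiplication in the surrounding text literally, one way to write this, with symbols introduced here for illustration:

$$
V = A \times E
$$

where $V$ is the value produced, $A$ is AI capability, and $E$ is the user's domain expertise. Under an additive form such as $V = A + E$, AI would contribute value even when $E = 0$; under the product it does not.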

If domain expertise is zero, AI multiplies zero. If domain expertise is deep, AI becomes leverage.

This is why:

AI does not democratize intelligence. It polarizes it.

Why "Weekend Agents" Have No Economic Future

If an agent can be built in a weekend, it has no moat.

Its cost of replication is near zero. Its dependence on base models is absolute. Its lifespan is measured in API releases.

This violates every principle of durable business:

- No defensibility

- No proprietary cognition

- No compounding advantage

You are not building infrastructure. You are decorating someone else's.

The Education Crisis Nobody Wants to Admit

Parents now ask: "Should my child study AI?"

But they misunderstand what AI is.

There are only two real AI careers:

- Model builders — mathematicians, statisticians, and systems engineers

- AI-amplified professionals — doctors, lawyers, engineers, scientists, investors

The middle category — agent builders — is mostly an illusion.

It produces demos, not value.

We are training a generation to polish interfaces instead of mastering domains.

That is a catastrophic misallocation of talent.

Why People Love Agents Anyway

Because agents offer psychological comfort:

- They feel like ownership

- They feel like mastery

- They feel like progress

They give the illusion of control in a world where intelligence has become abstract and centralized.

But illusion is not leverage.

A More Honest Definition of AI

AI is not a profession. AI is not a product. AI is not a replacement for intelligence.

AI is a mirror.

It reflects what you already are:

- If you have no structure, it becomes entertainment

- If you have weak structure, it becomes assistance

- If you have strong structure, it becomes power

The Final Principle

AI does not reward engineering cosmetics. It rewards cognitive depth.

And more brutally:

If an AI agent can be built in a weekend, it is probably not a business. It is a toy.

The future of intelligence is not in chaining prompts together. It is in chaining human understanding with machine amplification.

Agents will fade. Expertise will compound.

AI will not equalize humanity. It will expose it.

About me

With over 20 years of experience in software and database management and 25 years teaching IT, math, and statistics, I am a Data Scientist with extensive expertise across multiple industries.
