Our team is responsible for reliably loading data from various production-grade databases into our Data Warehouse (BigQuery), enabling quick and easy access to data at the organization level.

Pouring all data into a single BigQuery DW has several advantages. Firstly, running analytical queries directly on production DBs can impact service traffic, so it usually goes without saying that analytic workload isolation is crucial. We can also leverage BigQuery's powerful distributed processing capabilities to unlock incredibly fast analysis of large-scale data. Finally, consolidating various data sources scattered across services into one place enables cross-service analysis.

Today, I want to share the challenges we faced, especially with MongoDB, and how we built MongoDB CDC to solve them.

Background

Karrot uses various DBMSs suited to each service's characteristics, including MySQL, PostgreSQL, DynamoDB, and MongoDB. We load data from all these DBs into BigQuery to establish an analytics foundation. For MongoDB specifically, we had been using the Spark Connector for dumps.

However, as our service grew, so did our data volume. For MongoDB, the existing approach couldn't satisfy both of the following requirements at once:

- Minimizing load on the source database

- Meeting our internal 2-hour data delivery SLO (which inevitably puts some load on the source database)

This trade-off became unsustainable. After evaluating several options, we decided to implement MongoDB CDC.

Why CDC?

Goals

- Improve the dump method for specific tables that are both large and frequently updated

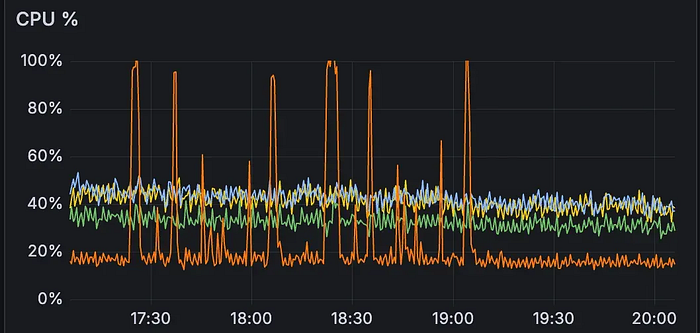

- Stabilize database CPU usage below 60%

- Enable existing dump jobs to complete within the 2-hour SLO

CDC (Change Data Capture) was the most efficient approach to achieve these goals.

CDC works by directly reading database change logs (binlog, oplog, etc.) to capture changed data. It can detect INSERT, UPDATE, and DELETE operations without requiring additional columns in the table. To construct the final table using captured change data, you first take a full snapshot, then merge subsequent changes.
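To make the "snapshot first, then merge changes" idea concrete, here is a minimal Python sketch. The event shape (`op`, `_id`, `doc`) and the function name are assumptions made for illustration, not the format any particular CDC tool emits.

```python
from typing import Any

def apply_changes(snapshot: dict[str, dict[str, Any]],
                  events: list[dict[str, Any]]) -> dict[str, dict[str, Any]]:
    """Replay change events, in order, on top of a full snapshot keyed by _id."""
    table = dict(snapshot)  # shallow copy so the snapshot itself stays untouched
    for event in events:
        key = event["_id"]
        if event["op"] == "delete":
            table.pop(key, None)
        else:  # insert or update: keep the latest full document
            table[key] = event["doc"]
    return table

# Hypothetical snapshot and the changes captured after it was taken.
snapshot = {"1": {"_id": "1", "v": "A"}, "2": {"_id": "2", "v": "X"}}
events = [
    {"op": "update", "_id": "1", "doc": {"_id": "1", "v": "B"}},
    {"op": "delete", "_id": "2"},
    {"op": "insert", "_id": "3", "doc": {"_id": "3", "v": "N"}},
]
final_table = apply_changes(snapshot, events)
```

Note that the delete is captured without any extra column on the source table, which is the property the paragraph above relies on.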

You might think, "Why not just query data incrementally based on timestamps?" However, incremental querying assumes that timestamp fields such as created_at or updated_at are maintained consistently and correctly, which unfortunately cannot be guaranteed for every table. To scale across many different tables, we needed an approach that doesn't depend on such assumptions about the table schema.
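A small Python sketch of where timestamp-based polling can go wrong. The rows, field names, and sync time are made up for illustration; the two failure cases shown (a writer that does not bump `updated_at`, and a hard delete) are examples of the broken assumptions, not an exhaustive list.

```python
def incremental_pull(rows, last_sync):
    """Timestamp-based polling: fetch rows whose updated_at is newer than last_sync."""
    return [row for row in rows if row["updated_at"] > last_sync]

# Replica state as of the previous sync (hypothetical time 100).
replica = {
    1: {"id": 1, "v": "A", "updated_at": 90},
    2: {"id": 2, "v": "X", "updated_at": 95},
}

# Since then, row 1 was changed by a writer that did not bump updated_at,
# and row 2 was hard-deleted from the source.
source_now = [{"id": 1, "v": "B", "updated_at": 90}]

changed = incremental_pull(source_now, last_sync=100)
for row in changed:
    replica[row["id"]] = row

stale_ids = sorted(replica)  # IDs the replica still believes exist
```

Here the poll returns nothing, so the replica keeps the old value of row 1 and never learns that row 2 is gone.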

We first selected the top 5 collections by data size and update volume as CDC migration candidates. This approach would allow stable data synchronization without bulk queries, significantly reducing DB load while meeting the SLO.

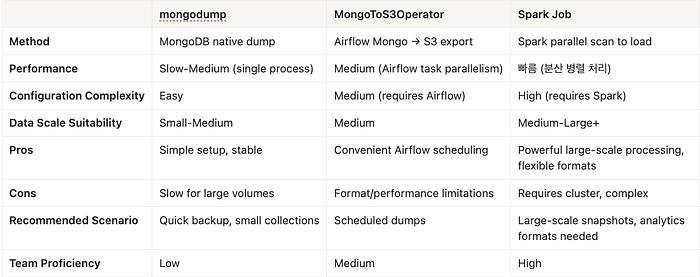

Comparing Technology Options

We evaluated several technology stacks capable of implementing CDC.

Final Choice: Flink CDC

After evaluating multiple candidates, we chose Flink CDC. Here's why:

1. Native MongoDB Change Stream Support

Flink CDC natively supports MongoDB's Change Stream.

We could reliably read change logs without developing custom connectors, and Change Stream's resume token (pointing to the last processed position) naturally integrates with Flink's checkpoint, enabling accurate resumption from the exact point after failures.
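The interplay between a resume token and a checkpoint can be sketched in plain Python. This is a toy model under stated assumptions: real resume tokens are opaque values rather than integers, and `Checkpoint`, `read_from`, and `run` are hypothetical names, not Flink or MongoDB APIs. The point is only that the saved position and the saved state are stored together, so a restart continues from a consistent pair.

```python
class Checkpoint:
    """Hypothetical checkpoint: stream position and job state are saved together."""
    def __init__(self):
        self.token = None
        self.count = 0

def read_from(stream, resume_token=None):
    """Yield (token, event) pairs that come strictly after resume_token."""
    for token, event in stream:
        if resume_token is None or token > resume_token:
            yield token, event

def run(stream, checkpoint, fail_at=None, every=2):
    """Count events, checkpointing every `every` events; optionally crash at a token."""
    token, count = checkpoint.token, checkpoint.count  # restore saved state
    for seen, (tok, _event) in enumerate(read_from(stream, token), start=1):
        if tok == fail_at:
            raise RuntimeError("simulated crash")
        token, count = tok, count + 1
        if seen % every == 0:  # periodic checkpoint
            checkpoint.token, checkpoint.count = token, count
    checkpoint.token, checkpoint.count = token, count  # final checkpoint
    return count

stream = [(1, "a"), (2, "b"), (3, "c"), (4, "d"), (5, "e")]
ckpt = Checkpoint()
try:
    run(stream, ckpt, fail_at=4)
except RuntimeError:
    pass  # event 3 was processed but never checkpointed
saved = (ckpt.token, ckpt.count)
total = run(stream, ckpt)  # restart: resumes right after the saved token
```

After the simulated crash, the work done on event 3 is discarded along with its position, so the restart replays it and every event ends up counted once in the final state.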

This allowed us to build a CDC-based pipeline without significantly increasing operational complexity.

2. Robust State Management and Stable Checkpoint Mechanism

Flink uses a checkpoint strategy that periodically saves state as snapshots to distributed file systems (HDFS/GCS/S3, etc.). Since state is preserved in external storage, we could safely restart from previous points while maintaining Exactly-Once processing guarantees even after failures.

This was particularly important for reliability in large-scale pipeline operations.

3. Integrated Pipeline: CDC → Transform → Sink in One

CDC tools like Debezium specialize in change data extraction, but require separate systems for post-processing or transformation. Flink CDC handles everything in a single Job:

- Extract change data via CDC

- Perform necessary data cleaning/transformation/filtering

- Reliably load to sinks like BigQuery

This end-to-end integrated configuration reduced the number of pipelines and lowered operational complexity. The ability to handle data model restructuring and format conversion on the MongoDB to BigQuery path without a separate transformation layer was particularly attractive.

4. Excellent Scalability Based on Parallel Processing

Flink can subdivide work into computational units and execute them in parallel. Increasing TaskManager count scales throughput nearly linearly, allowing stable response to CDC event spikes by scaling the entire pipeline.

Scalability was a critical evaluation criterion in our service environment with rapid data growth.

Implementation Process

Architecture Design

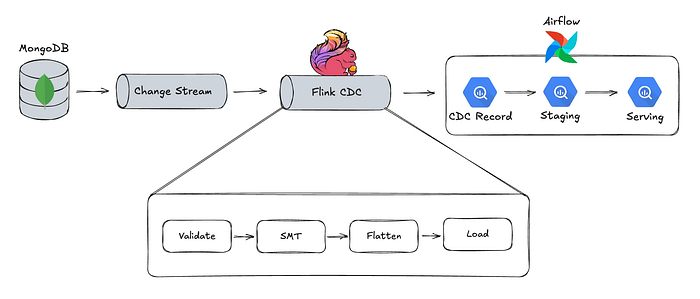

We designed the CDC data pipeline architecture to be relatively simple.

Our priority was quickly resolving the DB load issue, and we determined that current traffic levels could be handled without intermediate queues like Kafka.

MongoDB's CDC Mechanism

MongoDB has a special log called Oplog. All write operations (insert, update, delete) are recorded in this log. The Change Stream feature allows real-time subscription to this Oplog.

Change Stream is a high-level API that safely wraps Oplog, enabling applications to directly consume change events.

Flink CDC subscribes to this Change Stream to receive change events. The overall data flow is:

- Data change occurs in MongoDB (insert, update, delete)

- Change Stream generates change events

- Flink CDC subscribes to the Change Stream and receives events

- If necessary, Flink performs transformation and processing

- Transformed data is sent to BigQuery

- Data is now available for analysis in BigQuery

Batch Pipeline Structure

The backend batch pipeline runs hourly and consists of four stages:

- Schema Evolution: Compare schema repository with BigQuery tables and auto-add missing fields

- Extract CUD Latest: Extract recent change events from CDC source and deduplicate

- Merge to Raw: Merge into raw table in JSON format

- Materialize to Final: Apply schema to materialize final table

Why Hourly Batch?

We chose hourly batch over real-time streaming for several reasons. First, there was no real-time requirement — meeting the 2-hour delivery SLO was sufficient, so we chose a simpler design rather than investing resources in real-time pipelines.

Additionally, generous time windows allow stable recovery of late-arriving events, and clearly defined failure intervals make reprocessing easier during incidents. Batch processing also makes it easier to guarantee idempotency.
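The idempotency argument can be illustrated with a small Python sketch: if a batch run is defined purely by its time window and applies upserts and deletes by key, rerunning the same window leaves the table unchanged. The event shape, the millisecond values, and the function name are assumptions for illustration only.

```python
def run_hourly_batch(table, events, window_start, window_end):
    """Apply the events of one window by key; rerunning the same window is a no-op."""
    in_window = [e for e in events if window_start <= e["ts_ms"] < window_end]
    for event in sorted(in_window, key=lambda e: e["ts_ms"]):
        if event["op"] == "delete":
            table.pop(event["_id"], None)
        else:
            table[event["_id"]] = event["doc"]
    return table

events = [
    {"_id": "1", "op": "insert", "doc": {"v": "A"}, "ts_ms": 10},
    {"_id": "1", "op": "update", "doc": {"v": "B"}, "ts_ms": 20},
    {"_id": "2", "op": "insert", "doc": {"v": "X"}, "ts_ms": 30},
    {"_id": "2", "op": "delete", "ts_ms": 70},  # belongs to the next window
]
table = {}
first_run = dict(run_hourly_batch(table, events, 0, 60))
second_run = dict(run_hourly_batch(table, events, 0, 60))  # reprocessing after an incident
```

Because the window boundaries are explicit, a failed interval can simply be rerun without worrying about double-applied changes.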

Since it's a CDC-based architecture, we left room for future streaming conversion if real-time requirements emerge. However, at this point, we chose to avoid unnecessary complexity and achieve both SLO compliance and operational stability within given constraints.

Reaching this design involved four key considerations:

- How can we process transactions without gaps?

- How should the initial snapshot phase work?

- How do we handle schema evolution from NoSQL to SQL (MongoDB to BigQuery)?

- How do we verify CDC consistency, and what criteria determine production readiness?

1. MongoDB Transaction Processing

The first thing we needed to verify when introducing CDC was whether transaction order is guaranteed. Without an ordering guarantee, CDC itself becomes meaningless.

For example:

- If events occur as INSERT → UPDATE → DELETE, the row should be deleted in the final table

- If events occur as INSERT → UPDATE → UPDATE, the row should be upserted with the state of the last UPDATE

Change events for the same primary key must be collected in order to correctly reflect them in the final table.

We conducted a PoC to verify that Change Stream guarantees this. The results confirmed that Change Stream is based on the oplog and that each event carries a timestamp (ts_ms), so events can be collected in transaction order.

Here's an example of the change events produced for a transaction:

// 1. Insert

{

"_id": "1",

"fullDocument":"{\\"_id\\": \\"1\\", \\"v\\": \\"A\\"}",

"operationType":"insert",

"ts_ms":1,

...

}

// 2. Update (v: A → B)

{

"_id": "1",

"fullDocument":"{\\"_id\\": \\"1\\", \\"v\\": \\"C\\"}",

"operationType":"update",

"ts_ms":2,

"updateDescription":{

"updatedFields":"{\\"v\\": \\"B\\"}"

},

...

}

// 3. Update (v: B → C)

{

"_id": "1",

"fullDocument":"{\\"_id\\": \\"1\\", \\"v\\": \\"C\\"}",

"operationType":"update",

"ts_ms":3,

"updateDescription":{

"updatedFields":"{\\"v\\": \\"C\\"}"

},

...

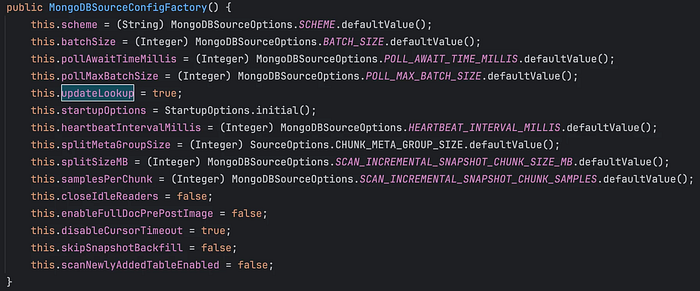

}When Change Stream's fullDocument option is set to "updateLookup", fullDocument contains the complete document data. When consecutive changes occur within the same transaction, all events' fullDocument are delivered with the same final state.

Since this option is enabled by default in the Flink MongoDB CDC connector, no additional configuration was needed. Ultimately, we only needed to process the fullDocument field of the last event per primary key, which simplified transaction handling.
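The "last event per primary key" rule can be sketched in Python as follows. The field names mirror the example events above (`_id`, `fullDocument` as a JSON string, `operationType`, `ts_ms`); the delete events for key "2" and the helper names are assumptions added for illustration, and the sketch assumes `ts_ms` is enough to order events for the same key.

```python
import json

def latest_per_key(events):
    """Keep only the newest event per _id, ordered by ts_ms."""
    latest = {}
    for event in sorted(events, key=lambda e: e["ts_ms"]):
        latest[event["_id"]] = event  # later events overwrite earlier ones
    return latest

def to_rows(latest):
    """Split the surviving events into documents to upsert and keys to delete."""
    upserts, deletes = {}, []
    for key, event in latest.items():
        if event["operationType"] == "delete":
            deletes.append(key)
        else:
            upserts[key] = json.loads(event["fullDocument"])
    return upserts, deletes

events = [
    {"_id": "1", "operationType": "insert", "ts_ms": 1,
     "fullDocument": '{"_id": "1", "v": "A"}'},
    {"_id": "1", "operationType": "update", "ts_ms": 2,
     "fullDocument": '{"_id": "1", "v": "C"}'},
    {"_id": "1", "operationType": "update", "ts_ms": 3,
     "fullDocument": '{"_id": "1", "v": "C"}'},
    {"_id": "2", "operationType": "insert", "ts_ms": 4,
     "fullDocument": '{"_id": "2", "v": "X"}'},
    {"_id": "2", "operationType": "delete", "ts_ms": 5},
]
upserts, deletes = to_rows(latest_per_key(events))
```

Only one row per key reaches the merge step: key "1" is upserted with its final state and key "2" is removed.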

2. Initial Snapshot Phase

CDC only captures changes after pipeline activation. Therefore, an initial snapshot phase is needed to retrieve data from the past.

Flink CDC offers an Initial Snapshot mode that automates this process. However, our testing showed that the default settings couldn't complete within the Oplog retention period. Even after scaling up resources and tuning, DB load only increased while still being slower than Spark.

Further tuning might have improved things, but given our limited timeline, we decided it was more practical to switch to tools we were already familiar with. The remaining options were:

We were already operating a Spark cluster environment, and after comparing methods, Spark's performance was significantly better for simple read operations, making Spark Job an easy choice for the initial snapshot phase.

3. Schema Evolution

The biggest challenge in building MongoDB CDC was schema management.

MongoDB has flexible schemas — developers can add new fields whenever they want. But BigQuery requires explicit schema definitions. Ensuring that MongoDB schema changes are stably reflected in BigQuery tables was our biggest concern.

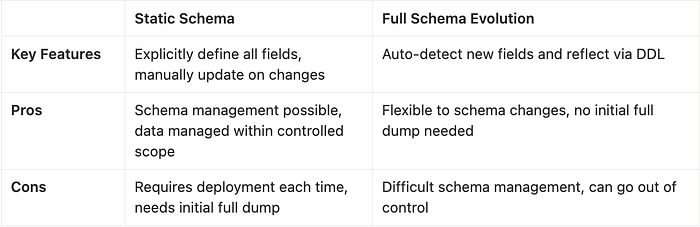

We considered two approaches for loading data into schema-based BigQuery:

Both methods had pros and cons, so we first organized our requirements:

[Data Team Requirements]

- Pipeline must operate stably

- Schema changes must not cause unexpected problems (e.g., BigQuery Breaking Changes)

[Service Team Requirements]

- Schema changes shouldn't take too long to reflect

- Schema changes should be easy to make

Based on this, we adopted Option 1 (Static Schema). We determined that managing schemas within a controlled scope was necessary to prevent unexpected problems. To also satisfy "quick and easy reflection," we designed the following structure:

1. Automation of the Request-Approve-Evolve Framework for Schema Changes

When service team members request schema additions, the central data team (that is, us) reviews and approves them. Approved changes are automatically detected and the new fields are added to BigQuery, so schema changes are reflected without manual deployment.
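The "detect and add" step could look roughly like the Python sketch below, which compares an approved schema with the columns a table already has and emits additive-only DDL. The table name, field names, and helper functions are hypothetical; the statement form (`ALTER TABLE ... ADD COLUMN IF NOT EXISTS`) is standard BigQuery DDL, but how the statements are executed is left out.

```python
def missing_columns(approved_schema, table_columns):
    """Return approved fields that the BigQuery table does not have yet."""
    return {name: typ for name, typ in approved_schema.items()
            if name not in table_columns}

def add_column_statements(table, approved_schema, table_columns):
    """Build additive-only DDL; columns are never dropped or retyped here."""
    return [
        f"ALTER TABLE `{table}` ADD COLUMN IF NOT EXISTS `{name}` {typ}"
        for name, typ in missing_columns(approved_schema, table_columns).items()
    ]

# Hypothetical approved schema and current table columns.
approved = {"_id": "STRING", "v": "STRING", "nickname": "STRING", "age": "INT64"}
existing = {"_id", "v"}
statements = add_column_statements("analytics.users_final", approved, existing)
```

Restricting the automation to adding columns is one way to keep approved changes from turning into breaking changes for downstream queries.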

2. Two-Stage Table Separation

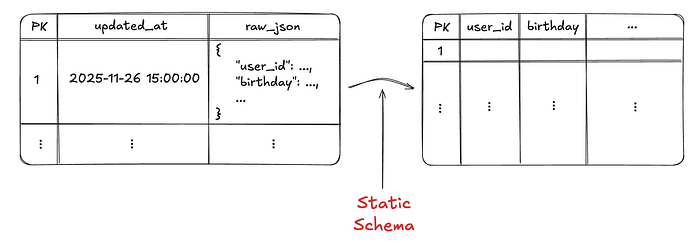

Even after a field is added to the schema, its values still need to be backfilled for existing data. To handle this efficiently, we separated tables into two stages:

Stage 1: JSON Raw Table

- Stores data in original JSON format

- Preserves raw data regardless of schema changes, enabling reprocessing without Full Dump when schemas change later

- Clustering applied by primary key — reduces scan range in join queries during CDC change updates, minimizing computation costs

Stage 2: Final Table

- Upon schema changes, the new schema is applied to the stage 1 raw data and the final table is overwritten by the result.

Now when schemas change, raw data is already preserved in Stage 1, so we only need to regenerate Stage 2 without an expensive re-run of the snapshot phase. The schema evolution process that previously took 2–3 hours was reduced to under 20 minutes.
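A minimal Python sketch of why the two-stage split makes schema changes cheap: the final table is just a projection of the raw JSON onto the current schema, so adding a field means re-projecting, not re-reading MongoDB. The documents, field names, and `materialize` function are illustrative assumptions.

```python
import json

def materialize(raw_rows, schema):
    """Project each raw JSON document onto the schema; absent fields become None."""
    final = []
    for raw in raw_rows:
        doc = json.loads(raw)
        final.append({field: doc.get(field) for field in schema})
    return final

# Stage 1: raw JSON strings, stored as-is regardless of the current schema.
raw_table = [
    '{"_id": "1", "v": "C", "nickname": "kim"}',
    '{"_id": "2", "v": "X"}',
]

before = materialize(raw_table, ["_id", "v"])
after = materialize(raw_table, ["_id", "v", "nickname"])  # schema gained a field
```

The `nickname` value for document "1" was already sitting in the raw table, so it shows up in the regenerated final table without another snapshot.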

4. Consistency Checks

More important than building the CDC system was whether we could trust the data.

Since we planned a zero-downtime migration from Full Dump to CDC for tables already in service, thorough consistency verification was essential. We first defined consistency criteria, then ran both the existing Full Dump and CDC pipelines simultaneously (dual write) to compare and verify results. We automated this process with alerts for continuous monitoring.

Check Items

- Record count match: Does the Full Dump record count match the CDC record count during dual write?

- Data freshness: Is CDC data arriving properly every hour?

- Duplicate ID check: Are there duplicate IDs in the ID column?

These items were used as ongoing monitoring metrics, and for CDC migration consistency verification, we conducted stricter validation. We compared checksums to confirm whether all fields have identical values for the same ID, verifying 100% consistency.
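The count, duplicate-ID, and checksum checks can be sketched in Python as below. This assumes both outputs fit in memory as lists of dicts, which is only for illustration; the hashing scheme (SHA-256 over canonical JSON) and the function names are assumptions rather than the exact method used in production.

```python
import hashlib
import json
from collections import Counter

def row_checksum(row):
    """Hash a canonical JSON encoding so that key order does not matter."""
    canonical = json.dumps(row, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

def compare(full_dump, cdc, id_field="_id"):
    """Report record-count match, duplicate IDs in CDC, and IDs whose rows differ."""
    id_counts = Counter(row[id_field] for row in cdc)
    duplicates = sorted(key for key, n in id_counts.items() if n > 1)
    dump_sums = {row[id_field]: row_checksum(row) for row in full_dump}
    cdc_sums = {row[id_field]: row_checksum(row) for row in cdc}
    mismatched = sorted(key for key in dump_sums.keys() | cdc_sums.keys()
                        if dump_sums.get(key) != cdc_sums.get(key))
    return {
        "count_match": len(full_dump) == len(cdc),
        "duplicate_ids": duplicates,
        "mismatched_ids": mismatched,
    }

full_dump = [{"_id": "1", "v": "C"}, {"_id": "2", "v": "X"}]
cdc = [{"v": "C", "_id": "1"}, {"_id": "2", "v": "Y"}]
report = compare(full_dump, cdc)
```

In this example the counts match and there are no duplicates, yet the checksum comparison still flags ID "2", which is why the stricter field-level check was needed before migration.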

Finally, we confirmed this consistency was maintained without issues for 2 weeks before determining migration readiness.

Operations: Learning from Experience

Building and deploying a system is just the beginning. What really matters is operating it stably.

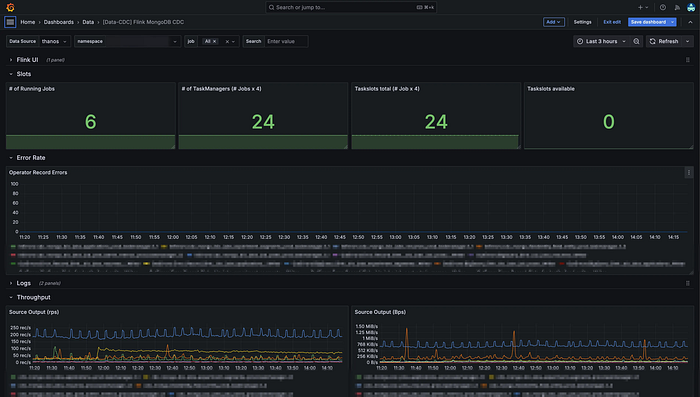

Monitoring System

We monitored the following metrics to check CDC system status:

Core Metrics

- Flink Job Status: Is the Job running normally? Are enough TaskManagers attached? Any restarts or failures?

- Data Throughput: How many records/bytes per second are being processed across the entire MongoDB → Flink → BigQuery pipeline?

- MongoDB Read Load: Is CDC causing excessive load on MongoDB? Check CPU, memory, and network usage.

- BigQuery Load Success Rate: Are records being loaded normally at the Sink stage? Any errors?

- Backpressure: Where in the pipeline are bottlenecks occurring? Any processing delays?

- Checkpoint Stability: Are Flink checkpoints completing stably? Is duration or size increasing abnormally?

Through actual operations and failure experiences, we set up automatic alerts for SLO violations on Flink Job Status and Backpressure.

Fault Tolerance

We experienced various failure scenarios during operations. Here's how Flink behaves in each:

Scenario 1: Flink Job Failure

Flink Kubernetes Operator automatically restarts the Job and resumes processing from the last checkpoint. Recovery typically takes under 3 minutes.

Scenario 2: MongoDB Connection Lost

Reconnection attempts use exponential backoff. Temporary network instability auto-recovers; alerts are sent if it persists over 10 minutes.
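Exponential backoff with an alert threshold can be sketched in Python like this. The base delay, cap, and function name are assumptions; only the 10-minute alert threshold comes from the description above. The `sleep` and `alert` callables are injected so the logic can be exercised without real waiting.

```python
import time

def connect_with_backoff(connect, base=1.0, cap=60.0, alert_after=600.0,
                         sleep=time.sleep, alert=print):
    """Retry connect() with exponentially growing waits; alert once if the outage persists."""
    waited, attempt, alerted = 0.0, 0, False
    while True:
        try:
            return connect()
        except ConnectionError:
            delay = min(cap, base * 2 ** attempt)  # 1s, 2s, 4s, ... capped at `cap`
            sleep(delay)
            waited += delay
            attempt += 1
            if waited >= alert_after and not alerted:
                alert(f"MongoDB unreachable for {waited:.0f}s")
                alerted = True

# Simulated flaky connection: fails three times, then succeeds.
attempts = {"n": 0}
def flaky_connect():
    attempts["n"] += 1
    if attempts["n"] <= 3:
        raise ConnectionError("connection lost")
    return "connected"

delays = []  # record the waits instead of actually sleeping
result = connect_with_backoff(flaky_connect, sleep=delays.append)
```

Short blips recover within a few seconds of waiting, while a persistent outage accumulates enough wait time to cross the alert threshold.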

Scenario 3: BigQuery Load Failure

Failed data is stored in BigQuery via a Dead Letter Queue defined in the Flink application, with alerts sent within 1 hour. Manual reprocessing follows problem resolution.
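The dead-letter pattern itself is simple enough to sketch in Python. Here the queue is a plain list and `load` is any callable that raises on failure; both are stand-ins for illustration, not the Flink or BigQuery APIs used in the actual application.

```python
def load_with_dlq(records, load, dead_letters):
    """Try to load each record; park failures with their error instead of stopping the job."""
    loaded = 0
    for record in records:
        try:
            load(record)
            loaded += 1
        except Exception as exc:  # broad on purpose: any load error goes to the DLQ
            dead_letters.append({"record": record, "error": str(exc)})
    return loaded

def reprocess(dead_letters, load):
    """Manual replay after the root cause is fixed; returns whatever still fails."""
    remaining = []
    for item in dead_letters:
        try:
            load(item["record"])
        except Exception as exc:
            remaining.append({"record": item["record"], "error": str(exc)})
    return remaining

sink = []
def strict_load(record):
    """Hypothetical sink that rejects records without an _id."""
    if "_id" not in record:
        raise ValueError("missing _id")
    sink.append(record)

dlq = []
loaded = load_with_dlq([{"_id": "1"}, {"v": "orphan"}], strict_load, dlq)
```

Keeping the failed record together with its error message is what makes the later manual reprocessing step practical.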

Conclusion

Through this project, we reaffirmed that "trust" is ultimately what matters most in data pipelines. Investing time in thorough PoC and consistency verification was the biggest factor in gaining team confidence, and choosing technology suited to our situation rather than the latest technology was also a good decision.

Going forward, we have the following goals for faster and more stable data delivery:

- Minimize end-to-end latency within available resources

- Increase throughput relative to cost through efficient resource utilization

This post's scope is limited to CDC with MongoDB, but there is so much more going on at Karrot to ensure consistency, stability, and performance in data delivery.

If you're interested in data pipelines and infrastructure, join the Data Team and help build data infrastructure for Karrot's users!

👉 Karrot Data Team Job Openings