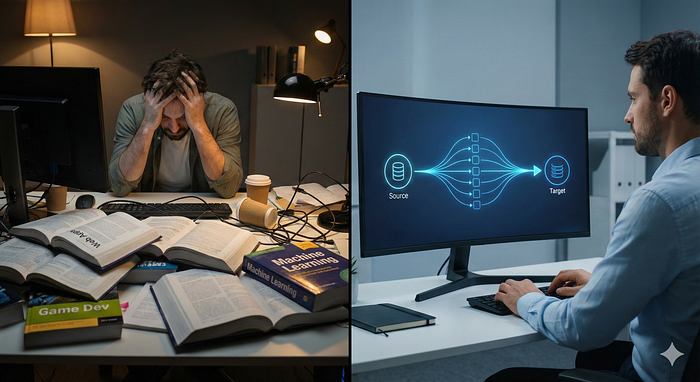

Last week, a friend called me in a panic.

"Abhishek," he said, "I've been learning Python for a year. I've done the courses. I've built the games. But when I try to do actual Data Engineering work? I feel completely lost."

I asked him to show me his curriculum. It was a disaster.

He wasn't learning too little. He was learning too much.

He was deep-diving into web development frameworks, complex machine learning algorithms, and advanced libraries that he would never touch as a Data Engineer. He was stuck in "tutorial hell," memorizing syntax for tools he didn't need, while his boss was breathing down his neck for a working ETL script.

This is the trap.

Most beginners start with a generic Python course and proceed topic by topic. By the time they reach chapter 10, they've forgotten chapter 1.

If you want to be a Data Engineer, you don't need to be a software developer. You don't need to build the next Instagram. You need to move data from point A to point B without it breaking.

Here is the "Pipeline-First" method for learning Python: a roadmap for learning only what you need, fast.

The Reality Check: You Are a Plumber, Not an Architect

At its core, Data Engineering is not about building software; it's about building pipelines.

A pipeline is simple:

- Extract: Pull data from a source.

- Transform: Clean and enhance it.

- Load: Put it somewhere useful.

Stop learning Python features in a void. Instead, map every Python concept to one of these three boxes.
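As a rough sketch of those three boxes, here is a tiny pipeline in plain Python. The function names, the sample records, and the `warehouse` list are all made up for illustration; a real pipeline would read from and write to actual systems.

```python
# Hypothetical three-step pipeline; the data and names are invented.

def extract():
    """Extract: pull raw records from a source (hard-coded here)."""
    return [
        {"name": "  Alice ", "amount": "10"},
        {"name": "BOB", "amount": "5"},
    ]


def transform(rows):
    """Transform: clean each record and fix its types."""
    return [
        {"name": row["name"].strip().lower(), "amount": int(row["amount"])}
        for row in rows
    ]


def load(rows, target):
    """Load: put the cleaned records somewhere useful (a list here)."""
    target.extend(rows)
    return len(rows)


warehouse = []  # stand-in for a real table or file
loaded = load(transform(extract()), warehouse)
print(f"Loaded {loaded} rows")
```

Every concept in the phases that follow slots into one of these three functions.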

Phase 1: The "Extract & Load" Box

Your first job is to get data out of a database, a CSV file, or an API, and dump it into your system. You aren't trying to be smart here; you are just moving boxes.

What you actually need to learn:

- Data Structures: Forget complex algorithms. Master Dictionaries (for config files and JSON) and Lists (to manage batches of files). That's 90% of your configuration work.

- Loops: You have 50 files? You need a `for` loop to iterate through them.

- File Handling: Learn to read and write CSV, JSON, and Parquet files.

- The Secret Weapon: PySpark. Plain Python on a single machine is often too slow for Big Data. You need to understand how to start a Spark session so a cluster can handle the heavy lifting.

If you are building a web scraper for a recipe site, you are wasting your time.
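To make the Phase 1 skills concrete, here is a minimal sketch that uses a dictionary for config, a list for the batch of files, a `for` loop, and the standard `csv` and `json` modules. The directory layout, file names, and sample rows are assumptions invented for the example. Parquet and Spark need extra libraries (for example `pyarrow` or `pyspark`), so they are left out here.

```python
import csv
import json
import tempfile
from pathlib import Path

# Dictionary as config: hypothetical settings for one pipeline run.
workdir = Path(tempfile.mkdtemp())
config = {
    "source_dir": workdir / "incoming",
    "target_file": workdir / "orders.json",
}
config["source_dir"].mkdir()

# Create two small sample CSV files so the example is self-contained.
samples = {
    "orders_1.csv": [["order_id", "amount"], ["1", "10.5"], ["2", "4.0"]],
    "orders_2.csv": [["order_id", "amount"], ["3", "7.25"]],
}
for filename, rows in samples.items():
    with open(config["source_dir"] / filename, "w", newline="") as f:
        csv.writer(f).writerows(rows)

# List as batch: every CSV file waiting in the source directory.
batch = sorted(config["source_dir"].glob("*.csv"))

# Loop + file handling: read each file and collect its rows.
records = []
for path in batch:
    with open(path, newline="") as f:
        for row in csv.DictReader(f):
            records.append(row)

# Load: dump everything into one JSON file.
with open(config["target_file"], "w") as f:
    json.dump(records, f)

print(f"Moved {len(records)} rows from {len(batch)} files")
```

Note that nothing here is clever: every value is still a string, which is exactly the mess Phase 2 deals with.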

Phase 2: The "Clean & Enhance" Filter

Now you have the data, but it's garbage. Dates are strings, names have weird spaces, and half the rows are null.

What you actually need to learn:

- Control Flow: This is where `if`/`else` shines. If the data type is string, convert it to lowercase.

- String & Date Functions: Master `.strip()`, `.lower()`, and `to_date()`. You will use these every single day.

- Functions: Stop copying and pasting code. Write a modular function for "Clean Text" and reuse it across your pipeline.
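Here is one way the "Clean Text" idea might look in plain Python. The helper names `clean_text` and `parse_date` and the sample rows are hypothetical. `to_date()` is a PySpark function, so this sketch uses `datetime.strptime` from the standard library to play the same role, and it assumes dates arrive as `YYYY-MM-DD`.

```python
from datetime import datetime


def clean_text(value):
    """Trim whitespace and lowercase strings; pass other values through."""
    if isinstance(value, str):
        return value.strip().lower()
    return value


def parse_date(value, fmt="%Y-%m-%d"):
    """Turn a date string into a date object, or None if it is missing."""
    if value is None or value.strip() == "":
        return None
    return datetime.strptime(value.strip(), fmt).date()


# Sample rows with the usual problems: stray spaces, mixed case, nulls.
raw_rows = [
    {"name": "  Alice ", "signup": "2024-01-15"},
    {"name": "BOB", "signup": ""},
    {"name": None, "signup": None},
]

# Reuse the same two functions for every row instead of copy-pasting logic.
clean_rows = [
    {"name": clean_text(row["name"]), "signup": parse_date(row["signup"])}
    for row in raw_rows
]
```

The `if` checks are what keep null and empty values from blowing up the string methods.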

Phase 3: The Business Logic

This is where the money is made. You take clean data and join it with other data to answer business questions.

What you actually need to learn:

- Aggregations: `.sum()`, `.count()`, `.avg()`.

- Joins: How to merge two DataFrames without crashing the server.

- SQL in Python: Here is a pro tip: sometimes the best Python code is actually SQL. Using `spark.sql()` allows you to write business logic in a language business people understand, right inside your Python script.
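To show the "SQL inside Python" idea without requiring a Spark installation, the sketch below swaps in the standard library's `sqlite3` module. The tables, columns, and values are invented. With PySpark, a query like this would typically be passed to `spark.sql()` after registering the DataFrames as temporary views, but the join and the aggregations read the same way.

```python
import sqlite3

# In-memory database standing in for cleaned pipeline tables.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (customer_id INTEGER, region TEXT)")
conn.execute(
    "CREATE TABLE orders (order_id INTEGER, customer_id INTEGER, amount REAL)"
)
conn.executemany(
    "INSERT INTO customers VALUES (?, ?)",
    [(1, "north"), (2, "south")],
)
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(10, 1, 20.0), (11, 1, 5.0), (12, 2, 7.5)],
)

# Business question: how much did each region order?
query = """
    SELECT c.region,
           COUNT(*)      AS order_count,
           SUM(o.amount) AS total_amount,
           AVG(o.amount) AS avg_amount
    FROM orders AS o
    JOIN customers AS c ON c.customer_id = o.customer_id
    GROUP BY c.region
    ORDER BY c.region
"""
summary = conn.execute(query).fetchall()
conn.close()

for region, order_count, total_amount, avg_amount in summary:
    print(region, order_count, total_amount, avg_amount)
```

The business logic lives entirely in the query string, which is the part a non-Python colleague can read and review.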

The "Boring" Stuff That Gets You Promoted

Finally, there are two things generic courses skip that are mandatory for Data Engineers:

- Logging: If your pipeline fails at 3 AM, you need a log file that tells you why. Learn the `logging` library.

- Error Handling: Wrap your dangerous code in `try`/`except` blocks. Never let a single bad row crash your entire pipeline.
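A minimal sketch of both habits together, using only the standard library. The logger name `pipeline`, the `to_amount` helper, and the sample rows are assumptions made up for the example. It logs to the console; passing `filename=` to `logging.basicConfig` would send the same messages to a log file instead.

```python
import logging

logging.basicConfig(
    level=logging.INFO,
    format="%(asctime)s %(levelname)s %(message)s",
)
logger = logging.getLogger("pipeline")  # hypothetical logger name


def to_amount(row):
    """Convert the 'amount' field to float; raises on bad input."""
    return float(row["amount"])


# Two good rows, one unparseable value, one row missing the field.
rows = [{"amount": "10.5"}, {"amount": "oops"}, {}, {"amount": "3"}]
good, bad = [], []

for index, row in enumerate(rows):
    try:
        good.append(to_amount(row))
    except (KeyError, ValueError) as exc:
        # Record why the row failed, then keep going with the next one.
        logger.warning("Skipping row %d (%r): %r", index, row, exc)
        bad.append(row)

logger.info("Processed %d rows, skipped %d", len(good), len(bad))
```

Catching only `KeyError` and `ValueError` is deliberate: the errors you expect from bad data get logged and skipped, while anything unexpected still stops the run so you notice it.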

Stop Learning Randomly

If you learn Python in this order, it stops feeling endless. You aren't just "learning to code"; you are building the exact toolkit you need to do the job.

Don't memorize the dictionary. Learn the words you need to speak the language.