"We're letting you go."

My manager's face on Zoom looked tired. Not angry. Just tired. Behind him, I could see the dashboard still showing red — our app had been down for three hours during Black Friday. Three hours of lost revenue because the server-side rendering kept timing out under load.

I built that. I championed it. I was so sure Next.js was the answer.

Six months earlier, I'd convinced our CTO to rewrite our customer portal. Our React SPA was "dated," I said. We needed SSR for SEO. We needed automatic code splitting. We needed the framework everyone was talking about on Twitter.

What we actually needed was someone who understood the difference between developer experience and production reliability.

The Siren Song

Next.js looked perfect on paper. Server-side rendering out of the box. Automatic static optimization. File-based routing that felt magical after years of wrestling with React Router configs. Vercel's marketing was flawless — they made every alternative look archaic.

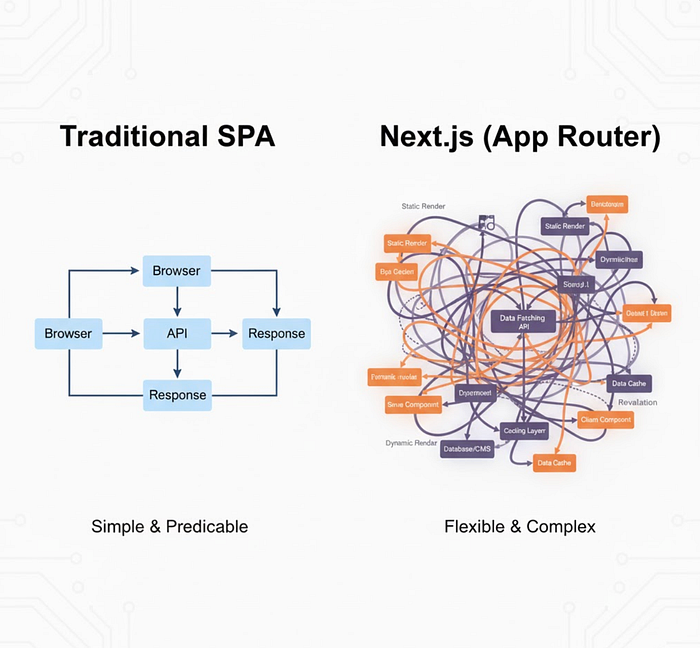

Our old app was simple: a Create React App that talked to our REST API. It worked. Users didn't complain. But it felt old. That's not a technical argument. That's ego.

I thought SSR would make our pages load faster. Turns out, when every page request hits your Node server, you're just moving the bottleneck. Our API was never designed for the thundering herd of concurrent requests that server-side rendering creates.

The First Cracks

Memory leaks appeared within two weeks of launch. Not obvious ones — the slow kind that creep up over hours. Our Next.js server would start at 400MB and balloon to 2GB before the health check finally killed it. Then a new container would spin up, and users would hit cold starts. Three seconds of blank screen while Node bootstrapped.

I dove into heap dumps. Most of the memory was tied to API response caching we'd implemented to avoid hammering our backend. Sounds smart, right? Cache responses to reduce load?

Except Next.js runs on a server that shares state between requests. Our cache kept growing. Every unique URL path created a new cache entry. We had thousands of product pages. Do the math.

// What I wrote (simplified):

const cache = new Map();

export async function getServerSideProps(context) {

const key = context.resolvedUrl;

if (cache.has(key)) {

return { props: cache.get(key) };

}

const data = await fetchAPI(key);

cache.set(key, data);

return { props: data };

}Classic. A global cache with no eviction strategy. In a Create React App, this doesn't matter — each user runs their own browser instance. But server-side? You're accumulating garbage from every visitor.

The fix seemed simple: use a proper cache with TTL and size limits. But by then, we'd discovered other problems.

When Your Framework Knows Too Much

Next.js does a lot of magic. Automatic static optimization decides at build time whether pages can be static or need server rendering. Great — until it guesses wrong.

We had a dashboard page with user-specific data. Obviously server-side, right? But we also had a banner component that showed the same marketing message to everyone. Next.js couldn't optimize that. The whole page rendered server-side because one component needed dynamic data.

The solution is getStaticProps with revalidate, but that creates its own nightmare. Now you're managing incremental static regeneration, stale-while-revalidate behavior, and CDN cache headers. The complexity compounds.

Short. Impact.

Our frontend developer — sharp with React but new to Node — couldn't debug production issues. When rendering failed, error messages were cryptic. Was it a build issue? Runtime? The middleware chain? Nobody knew. We'd traded React's client-side transparency for Next.js's server-side opacity.

Team friction escalated. Pull requests took longer. Everyone needed to understand server rendering semantics, data fetching patterns, and the Next.js compilation pipeline. Our velocity cratered.

The Breaking Point

Black Friday. Our biggest traffic day of the year.

Load increased 10x — normal for us. Our old SPA had handled it fine with a CDN and API rate limiting. But Next.js meant every page view hit our server first. The Node processes maxed out. Response times climbed from 200ms to 8 seconds.

Then the cascading failures started.

Slow responses meant users refreshed. More refreshes meant more server load. Our API started timing out because Next.js was hammering it with parallel requests during server rendering. We'd configured each page to prefetch multiple API endpoints simultaneously — good for user experience, terrible for backend load patterns.

We tried scaling horizontally. Spun up twenty more containers. Didn't help. The bottleneck was our API, now getting destroyed by the request fan-out from server-side rendering.

I'll never forget watching the error rate graph go vertical. Three years of reputation, gone in three hours.

What Actually Happened

The CTO pulled the plug. Rolled back to the old SPA we'd kept in a branch "just in case." Traffic normalized within minutes.

Monday morning, we had the post-mortem. The architecture review was brutal but fair. We'd chosen Next.js for developer experience and SEO without considering operational complexity. Our monitoring wasn't built for server-side rendering. Our team wasn't staffed for it. Our infrastructure wasn't designed for it.

The decision to let me go came two weeks later. They needed someone who made pragmatic technical choices, not someone chasing framework trends.

Fair.

The Rewrite (Again)

After I left, they rebuilt the portal as a traditional SPA with better lazy loading and route-based code splitting using React Router. Added a service worker for offline support. Implemented proper prefetching at the application level where they could control the request patterns.

I heard it shipped in four weeks with fewer bugs than our Next.js version had in six months.

The killer? Their SEO actually improved. They pre-rendered marketing pages at build time with a simple script — no framework needed. The actual user portal didn't need SEO anyway. It was behind authentication.

What I Learned (Expensively)

Next.js isn't bad. It's powerful and solves real problems for certain use cases. Vercel's own site runs on it beautifully. Content-heavy sites with mostly static pages and occasional dynamic content? Perfect fit.

But I picked it for the wrong reasons. I wanted the framework everyone was talking about. I wanted SSR because it felt advanced. I didn't ask: what problem are we actually solving?

Our real problems were slow initial JavaScript bundle size and suboptimal API queries. We could've fixed both without rewriting the entire frontend architecture.

The best technology choice isn't the newest or trendiest. It's the one your team can operate reliably at 3 AM when everything's on fire and you're two whiskeys deep into Thanksgiving dinner.

Here's what matters:

Team capability. Can your team debug it in production? Do they understand the operational model?

Infrastructure fit. Does it play nice with your existing systems or require rebuilding everything around it?

Complexity budget. Every framework costs complexity. Is the problem it solves worth what it costs to maintain?

I didn't ask these questions. I looked at Next.js through the lens of local development, where everything works perfectly. Production is different. Production doesn't care about your hot reload speed.

Where I Am Now

Took three months to find a new role. Used the time to actually understand the tools I'd been using. Read the entire Next.js source code. Learned how server-side rendering actually works at the HTTP level. Studied operational engineering — the unglamorous work of keeping systems running.

My new company runs a boring stack: React SPA, REST API, PostgreSQL. Nothing sexy. Rock solid. When they interview candidates who pitch rewriting everything in the hot new framework, they politely decline.

I get it now.

If you're considering Next.js today: run the numbers. Profile your current app. Identify actual bottlenecks. Talk to your ops team. Make sure you need server-side rendering before committing to the operational complexity it brings.

And if you're a hiring manager reading this: I learned more from getting fired than I did from four years of successful deployments. Sometimes the hard lessons are the only ones that stick.

Build for production. Not for Twitter.

Enjoyed the read? Let's stay connected!

- 🚀 Follow The Speed Engineer for more Rust, Go and high-performance engineering stories.

- 💡 Like this article? Follow for daily speed-engineering benchmarks and tactics.

- ⚡ Stay ahead in Rust and Go — follow for a fresh article every morning & night.

Your support means the world and helps me create more content you'll love. ❤️