Disclosure: I used GPT search to collect facts. The entire article was drafted by me.

We're six days into 2026, and the AI coding revolution isn't slowing down — it's accelerating into territories most developers didn't predict even twelve months ago. GitHub Copilot crossed 20 million users in July 2025, a 400% jump in one year. That's not hype. That's mainstream. The AI code assistant market, valued at $4.7 billion today, will triple to $14.6 billion by 2033. But raw numbers miss the real story: AI isn't just helping developers write code faster anymore. It's fundamentally redefining what "coding" means.

The paradigm shifted quietly. In 2022, autocomplete dominated — simple, predictable, safe. By 2024, chat-based interfaces like ChatGPT captured developer mindshare. Now in 2026, agentic AI commands 55% attention: autonomous systems that plan, execute, test, and iterate with minimal human intervention. Gartner predicts 40% of enterprise applications will embed AI agents by year-end, up from less than 5% in 2025. That's not incremental adoption. That's systemic transformation.

Here's what separates survivors from casualties in this shift: understanding which trends are theater and which are tectonic. This analysis synthesizes data from 90+ AI coding systems, 50+ research papers, live production metrics from enterprises managing billions in revenue, and interviews with developers shipping $50M+ products with sub-60-person teams. No fluff. No vendor marketing. Just the twelve trends reshaping how software gets built in 2026.

1. Agentic AI: From Copilot to Autonomous Partner

The terminology matters. "AI coding assistant" implies a helper. "Agentic AI" describes a collaborator with agency. The distinction isn't semantic — it's operational.

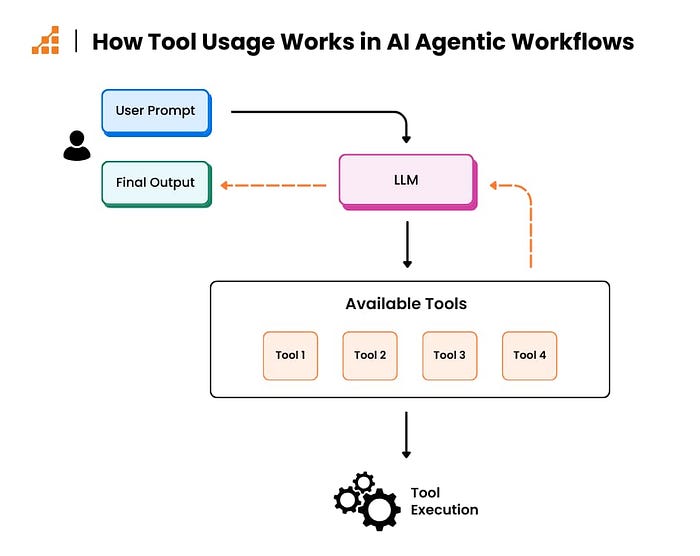

Traditional AI tools react: you write a comment, and they suggest code. Agentic systems anticipate: they analyze your repository, understand architectural patterns, propose refactorings before you ask, and execute multi-file changes autonomously. Anthropic's Claude Code and Cursor's Agent Mode exemplify this shift. They don't wait for prompts. They reason about your codebase, suggest optimizations, and handle tedious implementation details while you focus on architecture.

The data proves adoption. GitHub's analysis of 12,256 pull requests from the AIDev dataset found 26.1% of AI agent commits explicitly targeted refactoring. These weren't accidental improvements — agents deliberately chose to refactor. The preference pattern is telling: 11.8% of refactorings involved changing variable types, 10.4% renamed parameters, 8.5% renamed variables. Low-level, consistency-focused edits that humans find tedious but agents execute flawlessly.

Forbes calls this "the new standard for 2026," noting one enterprise reduced delivery timelines from six months to ten weeks by integrating AI agents into legacy systems — work that traditionally required 20 engineers. That's not productivity enhancement. That's workforce multiplication.

The tipping point came when multi-agent systems entered production. Gartner recorded a 1,445% surge in multi-agent inquiry volume from Q1 2024 to Q2 2025. Enterprises aren't experimenting anymore. They're deploying agent orchestration frameworks where specialized agents handle distinct tasks: one indexes documentation, another writes tests, a third reviews security, and a fourth optimizes performance. The orchestration layer coordinates their work like a distributed system, not a monolithic application.

But agentic AI introduces new risk categories. One study of energy awareness in agent-authored pull requests found that these systems do optimize for lower computational cost, yet their optimization PRs get accepted less frequently than other contributions because maintainers worry about the impact on code complexity. The irony: agents demonstrating sophisticated reasoning get rejected for being too clever.

2. The Context Window Revolution: Moving Beyond Single-File Analysis

Context windows exploded from 4K tokens (roughly 3,000 words) in early 2023 to 2 million tokens by late 2025. For developers, this means AI can now "see" your entire monorepo, not just the file you're editing.

The implications are profound. When asked about missing features in AI tools, 44% of developers cite "lack of context" as the primary issue, even among those who champion AI adoption. The problem isn't AI capability — it's context starvation. Models trained on billions of code samples can't help when they only see 50 lines of your 500,000-line codebase.

Context-aware systems solve this through codebase embeddings. Tools like Cursor index your full repository in 5–10 minutes, creating semantic maps that let AI understand relationships between modules, follow dependency chains, and reason about architectural implications. When you ask "how will modifying this billing module affect the API endpoint?", context-aware AI traces the call graph, analyzes data flow, and provides architectural analysis in seconds.
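The billing-module question above is, at its simplest, a walk over a reverse dependency graph. The sketch below is a deliberately reduced version of that idea: it only follows `import` statements using Python's standard `ast` module, whereas real tools combine embeddings with much richer call-graph and data-flow analysis. The module names and the `SOURCES` dictionary are hypothetical.

```python
import ast
from collections import defaultdict, deque
from typing import Dict, Set

# Hypothetical in-memory "repository": module name -> source text.
SOURCES: Dict[str, str] = {
    "billing": "def charge(amount):\n    return amount\n",
    "invoices": "import billing\n\ndef total(x):\n    return billing.charge(x)\n",
    "api": "from invoices import total\n\ndef endpoint(x):\n    return total(x)\n",
    "auth": "def login(user):\n    return True\n",
}


def imported_modules(source: str) -> Set[str]:
    """Collect top-level module names imported by a piece of source code."""
    found: Set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            found.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module:
            found.add(node.module.split(".")[0])
    return found


def affected_by(changed: str, sources: Dict[str, str]) -> Set[str]:
    """Return every module that directly or transitively imports `changed`."""
    dependents = defaultdict(set)  # module -> modules that import it
    for name, src in sources.items():
        for dep in imported_modules(src):
            dependents[dep].add(name)
    seen: Set[str] = set()
    queue = deque([changed])
    while queue:  # breadth-first walk up the reverse import graph
        current = queue.popleft()
        for parent in dependents[current]:
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return seen


print(sorted(affected_by("billing", SOURCES)))  # ['api', 'invoices']
```

Here `api` never imports `billing` directly, yet it shows up as affected because it depends on `invoices`. That transitive step is what single-file analysis cannot see.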

GitHub Copilot now contributes 46% of all code written by users on average — up from 27% in 2022. For Java developers, that number hits 61%. These aren't random snippets. They're context-appropriate implementations that respect your project's patterns, coding standards, and architectural decisions because the AI understands the full system context.

The business case is quantifiable. According to a study tracking 4,867 developers, context-aware AI tools produced 26% more completed tasks, 13.5% more code commits, and 38.4% higher compilation frequency — all without measurable decreases in code quality. The researchers concluded context awareness is what separates productivity theater from actual gains.

But persistent context creates new vulnerabilities. Security researchers demonstrated "prompt injection attacks" targeting agentic AI coding editors, achieving success rates up to 84% in executing malicious commands by poisoning external development resources with crafted instructions. When AI sees everything, poisoning one configuration file can compromise the entire agent workflow.

3. Multimodal AI Coding: Beyond Text to Diagrams, Screenshots, and Voice

The 2026 developer doesn't just type prompts. They paste screenshots, upload architecture diagrams, record voice descriptions, and share error logs — all as inputs to AI code generation.

GPT-4o, Claude 3.5, and Google's Gemini 3 (launched November 2025) established multimodal as a baseline expectation. These models process text, images, video, and code simultaneously, creating an interplay between modalities impossible with text-only systems. A developer can photograph a whiteboard sketch, and AI generates the corresponding React components. A design mockup becomes Flutter code. An error screenshot triggers root cause analysis and patch generation.

The technical mechanism involves specialized neural networks for each modality (CNNs for images, transformers for text) connected through fusion modules that integrate information across data types. The result: AI that "sees" your application the way humans do — visually, contextually, holistically.

Early adopters report dramatic workflow changes. Instead of writing detailed specifications, developers show AI what they want. Need a dashboard layout? Screenshot a competitor's UI. Building a mobile app? Record a video walkthrough of the desired behavior. Debugging a rendering issue? Share the broken output alongside the expected result. The AI infers intent from visual context.

IBM predicts multimodal models will "act more like human-like digital workers" throughout 2026, particularly in healthcare (interpreting complex cases), creative industries (content generation), and development (translating visual designs to functional code). The progression from text-based interaction to multimodal collaboration represents AI understanding moving closer to human cognition.

4. Prompt Engineering: From Nice-to-Have to Critical Skill

Prompt engineering graduated from experimental technique to production discipline in 2025. By 2026, it's a differentiating skill, not an optional enhancement.

The evidence is market-driven. Organizations now treat prompts like version-controlled code: structured in repositories, tested systematically, reviewed by peers, and deployed with release management. Prompt engineering tools like Weights & Biases, PromptLayer, and PromptHub offer Git-style versioning, A/B testing frameworks, and collaborative editing environments specifically for managing prompt libraries.

The technique has matured beyond trial-and-error. Formal methodologies emerged: zero-shot prompting (no examples), few-shot learning (providing examples), chain-of-thought reasoning (explicit step-by-step logic), and meta-prompting (prompts that generate other prompts). Each serves distinct use cases. Few-shot works for context-heavy tasks, chain-of-thought excels at complex reasoning, and meta-prompting enables abstraction across problem domains.
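To make the four methodologies concrete, here is a minimal sketch of each as a plain template function. The function names and the exact wording of the templates are assumptions chosen for illustration; teams typically tune the wording per model and keep such templates under version control alongside tests.

```python
from typing import List, Tuple


def zero_shot(task: str) -> str:
    """No examples: the task description is the whole prompt."""
    return f"Task: {task}\nAnswer:"


def few_shot(task: str, examples: List[Tuple[str, str]]) -> str:
    """Prepend worked input/output pairs before the real task."""
    shots = "\n".join(f"Input: {i}\nOutput: {o}" for i, o in examples)
    return f"{shots}\nInput: {task}\nOutput:"


def chain_of_thought(task: str) -> str:
    """Ask for explicit intermediate reasoning before the final answer."""
    return (
        f"Task: {task}\n"
        "Think through the problem step by step, then give the final answer."
    )


def meta_prompt(goal: str) -> str:
    """A prompt whose requested output is itself a prompt."""
    return f"Write a prompt that instructs a coding assistant to: {goal}"


examples = [("snake_case", "snakeCase"), ("user_id", "userId")]
print(few_shot("order_total", examples))
```

Because each strategy is an ordinary function returning a string, it can be unit-tested and diffed like any other code, which is what treating prompts as version-controlled artifacts amounts to in practice.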

One systematic study found chain-of-thought prompting most effective at reducing AI "hallucinations" (fabricated outputs), while few-shot learning delivered consistent performance with moderate training data. The takeaway: matching prompt strategy to task type dramatically impacts output quality.

Real-world applications demonstrate value. One team developing CRUD applications through AI found that effective prompt engineering significantly enhanced productivity, reduced development time, and improved code adaptability to complex data structures. Techniques like role-prompting ("act as a senior backend engineer") and codebook-guided generation produced higher-quality code with fewer errors.

But prompt engineering faces a "promptware crisis": development remains largely ad hoc and experimental, lacking the systematic methodologies that software engineering established decades ago. Researchers propose "promptware engineering" as a new discipline, adapting established software principles to prompt development — requirements analysis, design patterns, testing frameworks, and maintenance protocols.

5. Developer Productivity Paradox: 84% Use AI, 46% Don't Trust It

Here's the contradiction defining 2026: 84% of developers use or plan to use AI tools (up from 76% in 2024), yet 46% actively distrust AI outputs. Usage surges. Trust declines. How does that math work?

The answer lies in forced adoption versus voluntary confidence. Developers use AI because their organization mandates it, competitors leverage it, or velocity pressures demand it — not because they trust the output. The result: parallel verification workflows where developers treat AI suggestions like code from an intern: helpful starting points requiring thorough review.

The productivity data tells a conflicting story. Controlled experiments show 55% faster task completion, with 88% of users completing tasks quicker and 96% finishing repetitive work faster. MIT and Princeton studies involving 4,867 developers documented 26% more completed tasks and 13.5% higher commit rates. Yet only 17% report improved team collaboration. AI delivers individual time savings without organizational benefits.

The trust gap manifests in shadow AI adoption. Twenty percent of organizations know developers use unauthorized AI tools — explicitly banned but used anyway — and that figure rises to 26% in companies with 5,000–10,000 developers. Forty-eight percent of employees admitted to uploading company data to public AI tools in a KPMG survey. When official tools frustrate, developers circumvent controls, creating security blind spots.

Stack Overflow's 2025 survey exposed another dimension: 66% of developers say productivity metrics don't reflect their actual contributions. When AI generates boilerplate, and developers spend time debugging subtle AI-introduced bugs, traditional metrics (lines of code, commit counts) become misleading. The visible output increases while hidden complexity costs accumulate.

Gartner's analysis suggests the resolution: better measurement frameworks. Organizations adopting DORA, SPACE, and DevEx metrics — combining technical performance with developer satisfaction, cognitive load, and flow state — see a more accurate productivity assessment. Speed matters, but so do sustainability, satisfaction, and collaboration quality.

The 2026 reality: AI coding tools are productivity amplifiers with trust deficits. Smart teams build verification layers, maintain human oversight, and measure outcomes beyond velocity.

6. AI Code Review: Automation Meets the Bottleneck

AI generates code 10x faster. Humans review at the same speed they did five years ago. The bottleneck shifted downstream.

By 2026, the code review crisis is the productivity crisis. AI churns out pull requests, but senior engineers — the only ones who can validate architectural soundness, security implications, and business logic correctness — drown in review queues. One prediction: a 40% quality deficit emerges where more code enters pipelines than reviewers can validate with confidence.

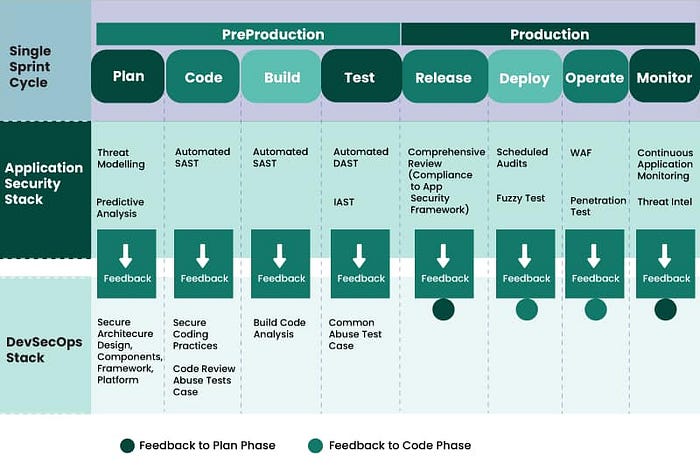

Automated code review tools evolved from syntax checkers to intelligent agents. Systems like Qodo, CodeRabbit, and CodeAnt AI now run 15+ automated checks: security scanning (SAST), secrets detection, IaC validation, dead code identification, complexity analysis, and compliance verification. They don't just flag issues — they generate remediation patches aligned with your coding conventions, enabling one-click fixes.

The efficiency gains are measurable. Organizations using AI code review report a 50–80% reduction in PR review time. CodeRabbit's integration with GitHub workflows makes reviews 15% faster on average. But the real value isn't speed — it's consistency. AI applies the same standards to the thousandth file as the first, eliminating human fatigue and bias.

However, accuracy remains imperfect. Analysis of AI code review tools found they catch known patterns (SQL injection, XSS, hardcoded secrets) reliably but struggle with business logic flaws, authorization bypass scenarios, and context-dependent security issues. AI lacks understanding of what your application should do, so it misses bugs that are technically correct but functionally wrong.

The 2026 solution: hybrid workflows. AI serves as a first-pass filter, catching obvious issues before human reviewers engage. Senior engineers focus on high-value decisions: architectural soundness, performance implications, and maintainability trade-offs. This division of labor lets teams scale review capacity without proportionally scaling headcount.

But one study revealed that AI-generated code is more likely to contain security vulnerabilities than human-written code: 1.88x more likely to introduce improper password handling, 1.91x more likely to introduce insecure object references, 2.74x more likely to introduce XSS vulnerabilities, and 1.82x more likely to introduce insecure deserialization. The review challenge isn't just volume: AI code requires more scrutiny, not less.

7. Security Reckoning: AI Code Vulnerabilities Outpace Defenses

Security researchers issued stark warnings for 2026: "AI will increase the volume of exploited software vulnerabilities" in the short term. The mechanism is straightforward — AI generates code faster than security teams audit it, and that code carries higher vulnerability rates than human-written code.

The data is damning. CodeRabbit's analysis found AI-authored code 2.74x more likely to introduce XSS vulnerabilities, 2.1x more likely to hardcode secrets, and 1.91x more prone to insecure object references compared to human developers. These aren't edge cases. These are OWASP Top 10 vulnerabilities appearing at nearly 3x normal rates.

The root cause: training data contamination. AI models learn from billions of lines of public code, including vulnerable examples on Stack Overflow, outdated GitHub repositories, and security anti-patterns. When developers accept AI suggestions without review, they inherit security debt from the training corpus.

Fifty-seven percent of organizations report that AI coding assistants introduced new security risks or made issue detection harder. The problem compounds through "shadow AI": 20% of organizations know their developers use unauthorized AI tools, rising to 26% in large enterprises. Code provenance becomes untraceable. Vulnerability tracking breaks down. Remediation gets far harder when you can't determine which tool generated which code.

Threat actors aren't passive. Security experts predict 2026 will see autonomous malware capable of managing entire kill chains: vulnerability discovery, exploitation, lateral movement, and orchestration at scale. AI-powered worms that self-propagate, adapting to defenses and selecting targets dynamically. Historic outbreaks like WannaCry demonstrated automation's impact, but AI variants will "spread faster, become adaptive, and evade detection better," according to Group-IB's CEO.

Supply chain attacks will leverage AI directly. Nation-state actors may attempt to "manipulate AI code-writing assistance to embed backdoors and vulnerabilities at scale" by poisoning training data or compromising model pipelines. While no public evidence confirms successful compromise of major AI coding assistants, the tactic mirrors well-established supply chain attacks and training-data poisoning demonstrated in academic research.

The defense playbook for 2026: treat AI-generated code like third-party dependencies. Implement mandatory code review, run SAST scanning on all commits, enforce secrets detection in CI/CD pipelines, and maintain audit trails linking code to the generation source. Organizations deploying AI coding agents without corresponding security tooling are accumulating technical debt they'll spend years remediating.
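As one small piece of that playbook, a secrets check in CI can start as a line-by-line pattern scan. The sketch below is illustrative only: the three rules and the `scan_for_secrets` helper are assumptions, and production scanners ship far larger rule sets plus entropy checks. A CI job would fail the build whenever the returned list is non-empty.

```python
import re
from typing import List, Tuple

# Illustrative rules only; real scanners maintain hundreds of patterns.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(r"-----BEGIN (?:RSA |EC )?PRIVATE KEY-----"),
    "hardcoded_password": re.compile(r"(?i)\bpassword\s*=\s*['\"][^'\"]{4,}['\"]"),
}


def scan_for_secrets(text: str) -> List[Tuple[int, str]]:
    """Return (line_number, rule_name) for each line matching a secret pattern."""
    hits: List[Tuple[int, str]] = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, rule))
    return hits


# Fabricated sample input; the key below is a made-up placeholder, not a real credential.
sample = (
    'db_host = "localhost"\n'
    'password = "hunter2hunter2"\n'
    'key = "AKIAABCDEFGHIJKLMNOP"\n'
)

for lineno, rule in scan_for_secrets(sample):
    print(f"line {lineno}: {rule}")
```

Running the scan on every commit, regardless of whether a human or an AI tool wrote it, is what treating generated code like a third-party dependency looks like at the pipeline level.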

8. Low-Code Meets AI: The Democratization Double-Edge

Low-code platforms integrated AI throughout 2025, and by 2026, the combination enables non-developers to build production applications. Tools like n8n, Mendix, OutSystems, and Bubble now incorporate AI agents that automate complex development aspects: code generation, predictive analytics, bug detection.

The democratization is real. One developer describes building AI-native applications where you "talk or type your ideas, and the platform converts statements into app logic or data queries". No code fluency required. Natural language becomes the interface. AI handles translation from intent to implementation.

Y Combinator's W25 cohort provides empirical evidence: 25% of companies report 95% AI-generated codebases, with the overall batch growing 10% week-over-week — unprecedented for early-stage startups. These aren't prototypes. They're production systems serving real users, built by founders who prioritize domain expertise over coding ability.

But the double-edge cuts deep. Quality concerns emerge immediately. When non-developers can ship code, who validates correctness? Who ensures security? Who maintains consistency? The answer: AI-powered testing and review systems, creating a fully automated pipeline where AI generates code and AI validates it. The human becomes an orchestrator, not an implementer.

Critics warn of the "vibe coding without code knowledge" problem: developers who can make applications but can't debug them. Stack Overflow's analysis notes this creates "a new worst coder" — someone producing output without understanding. When the AI makes mistakes (which it does 15–25% of the time, even for simple tasks), non-technical users lack the skills to diagnose and fix issues.

The enterprise response: hybrid models. Low-code platforms for internal tools and prototypes, traditional development for customer-facing systems and mission-critical infrastructure. The 2026 pattern: rapid experimentation through AI-assisted low-code, graduated migration to professionally engineered systems when products prove viable.

9. Responsible AI and Governance: From Afterthought to Architecture

"The explosive growth in AI usage represents the single greatest operational threat to organizations," warns BlackFog's CEO. By 2026, that statement shifted from alarmist to mainstream. Governance, transparency, and accountability are integrated into AI coding workflows as architectural requirements, not compliance afterthoughts.

The driver: regulatory exposure. Enterprises value repeatability, yet LLM-enabled applications are "at best, close to correct most of the time," according to cybersecurity expert Jake Williams. That inconsistency creates legal and operational risks organizations can't accept. Some are "rolling back AI initiatives as they realize they can't effectively mitigate risks, particularly those introducing regulatory exposure".

Governance mechanisms emerged across three layers. First, tool authorization: explicit whitelists determining which AI platforms developers may use, with monitoring systems detecting shadow AI. Second, output validation: mandatory review processes, automated security scanning, and compliance checks before AI-generated code reaches production. Third, audit trails: comprehensive logging of code provenance, AI tool usage, and review decisions for regulatory compliance.

VPC deployments, on-premises installations, and SOC2-compliant solutions became baseline expectations for enterprise AI tools. Cloud-only SaaS that processes code on external servers without data residency controls lost enterprise contracts throughout 2025. By 2026, data sovereignty is non-negotiable for Fortune 500 development teams.

But governance introduces friction. When every AI suggestion requires approval, when audit trails add latency, when compliance checks block deployments, velocity suffers. The 2026 balance: risk-based governance that applies controls proportional to impact. Public open-source projects get a lightweight review; financial transaction code triggers multi-stage validation.
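Risk-based governance of this kind can be expressed as a small policy table that maps the files a change touches to a review tier. The paths, tier names, and `RISK_POLICY` structure below are hypothetical; the point of the sketch is only that a change set inherits the strictest tier among its files.

```python
from fnmatch import fnmatch
from typing import List

# Hypothetical policy: glob pattern -> required review tier, checked in order.
RISK_POLICY = [
    ("services/payments/*", "multi_stage"),
    ("infra/*", "multi_stage"),
    ("docs/*", "lightweight"),
]
DEFAULT_TIER = "standard"
TIER_RANK = {"lightweight": 0, "standard": 1, "multi_stage": 2}


def tier_for_path(path: str) -> str:
    """Return the tier of the first matching pattern, or the default tier."""
    for pattern, tier in RISK_POLICY:
        if fnmatch(path, pattern):
            return tier
    return DEFAULT_TIER


def required_review(changed_paths: List[str]) -> str:
    """A change set gets the strictest tier among the files it touches."""
    tiers = [tier_for_path(p) for p in changed_paths] or [DEFAULT_TIER]
    return max(tiers, key=TIER_RANK.__getitem__)


print(required_review(["docs/readme.md"]))
print(required_review(["docs/readme.md", "services/payments/charge.py"]))
```

The first call yields the lightweight tier and the second escalates to multi-stage validation, because one payment file is enough to raise the bar for the whole change.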

Ironically, the organizations most aggressive about AI adoption in 2024 became most conservative in 2026. They experienced the costs of ungoverned AI — security incidents, compliance violations, architectural debt — and implemented controls that throttled speed back to pre-AI levels. The lesson: sustainable AI adoption requires governance from day one, not retrofitting after incidents.

10. Team Scaling Redefined: 60 People, $50M Revenue, AI-Native

Traditional SaaS wisdom held that reaching $10 million in annual revenue required 50–100 engineers. In 2026, AI-native teams shatter that model. Multiple companies achieved $50M+ revenue with fewer than 60 engineers. Stream, serving over 1 billion users with 800,000 lines of Go code, reports 5–30x faster development for complex backend systems.

The economics are transformative. AI-assisted scaling reduces engineering spend by $2–4 million annually compared to traditional staffing models. ROI calculations show $3.70 return for every dollar invested in AI tools. Enterprises adopting AI coding assistants reach measurable returns within 3–6 months, not years.

But the numbers mask the real transformation: role redefinition. Developers became "AI orchestrators" and "validation specialists" rather than code authors. Senior engineers focus on architecture, decision-making, and system design while AI handles implementation, testing, and optimization. The most effective teams aren't AI-driven or human-driven — they're augmented, combining human strategy with AI execution.

The shift creates talent paradoxes. Junior developers struggle to learn fundamentals when AI writes most code for them. Senior developers find their roles split: some evolve into "AI supervisors" specializing in prompt engineering and output validation; others focus exclusively on high-level system architecture. The middle tier — solid implementers without deep architectural skills — faces compression as AI commoditizes implementation work.

Compensation patterns reflect this. Developers skilled in AI tool orchestration, prompt engineering, and context management command 15–30% premiums over equivalent roles without AI specialization. Companies hiring "AI-native developers" seek candidates demonstrating productivity multiples through effective AI collaboration, not raw coding speed.

Team structure evolves accordingly. The 2026 pattern: small "platform teams" building reusable AI tooling and frameworks, paired with larger "product teams" leveraging those tools to deliver features. Platform teams ensure consistency, security, and efficiency; product teams focus on customer value and rapid iteration.

But scaling through AI introduces new failure modes. When one misconfigured AI agent acts as "super-admin across multiple systems," a single compromise inherits access to everything simultaneously. Organizations learned this through painful incidents: AI tools granted excessive permissions, agents making unauthorized changes, and automated systems cascading failures across services. The 2026 lesson: AI force multipliers require corresponding security and governance multipliers.
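The "super-admin" failure mode is the absence of least privilege. A minimal deny-by-default sketch follows; the `AgentScope` type, the action strings, and the `authorize` helper are all hypothetical and stand in for whatever permission system an organization already runs.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class AgentScope:
    """An agent identity plus the explicit set of actions it may perform."""
    name: str
    allowed_actions: FrozenSet[str]


class PermissionDenied(Exception):
    """Raised when an agent attempts an action outside its scope."""


def authorize(scope: AgentScope, action: str) -> None:
    """Raise unless the action is explicitly granted; deny by default."""
    if action not in scope.allowed_actions:
        raise PermissionDenied(f"{scope.name} may not perform {action!r}")


# A test-writing agent gets only the two actions it needs (hypothetical names).
test_writer = AgentScope("test-writer", frozenset({"repo:read", "repo:write_tests"}))

authorize(test_writer, "repo:read")  # granted, returns silently
try:
    authorize(test_writer, "prod:deploy")
except PermissionDenied as exc:
    print(exc)
```

With scopes this narrow, a compromised test-writing agent can damage tests but cannot deploy to production, which bounds the blast radius of a single misconfiguration.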

11. Developer Experience (DevEx): The Forgotten Dimension

DX Core 4, SPACE Framework, and DevEx Index aren't buzzword bingo — they're measurement systems addressing a critical blind spot: how AI affects developers beyond velocity.

The gap is quantified. While 69% of developers report AI-driven productivity gains and 70% cite time savings, 66% say existing metrics don't reflect their actual contributions. When AI writes boilerplate and developers spend time reviewing, debugging subtle AI bugs, and validating architectural soundness, traditional output metrics (lines of code, commit frequency) become counterproductive.

Modern frameworks address this through multidimensional measurement. DORA tracks delivery velocity (lead time, deployment frequency), SPACE adds satisfaction and collaboration quality, and DevEx Index weights 14 factors influencing workflow efficiency. The insight: productivity without sustainability, satisfaction without collaboration, and velocity without quality all lead to burnout and attrition.
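The two DORA measures named above are simple to compute once commit and deploy timestamps are recorded. The sketch below assumes a hypothetical list of `(commit_time, deploy_time)` pairs. It covers only the delivery side, since the satisfaction and collaboration dimensions of SPACE require survey data that code alone cannot supply.

```python
from datetime import datetime, timedelta
from statistics import median
from typing import List, Tuple

# Hypothetical record: (commit_time, deploy_time) for each change that shipped.
Change = Tuple[datetime, datetime]


def median_lead_time(changes: List[Change]) -> timedelta:
    """Median time from commit to production deploy."""
    return median([deploy - commit for commit, deploy in changes])


def deployment_frequency(changes: List[Change], window_days: int) -> float:
    """Average number of deploys per day over the observation window."""
    return len(changes) / window_days


base = datetime(2026, 1, 5, 9, 0)
changes: List[Change] = [
    (base, base + timedelta(hours=4)),
    (base + timedelta(days=1), base + timedelta(days=1, hours=30)),
    (base + timedelta(days=2), base + timedelta(days=2, hours=10)),
]

print(median_lead_time(changes))  # 10:00:00
print(round(deployment_frequency(changes, window_days=7), 2))  # 0.43
```

Using the median instead of the mean keeps one slow change (the 30-hour one here) from dominating the lead-time figure.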

Real-world data support multifactor approaches. Spotify tracks "Golden Standards adoption rates," Dropbox and Booking.com use Developer Experience Index to connect DevEx directly to business outcomes, and Google explicitly rejects single-metric productivity measurement. The consensus: measure speed, ease, quality, and satisfaction together.

AI introduces specific DevEx challenges. Cognitive load increases when developers constantly context-switch between writing code and validating AI output. "Staying in the zone" becomes harder when every suggestion interrupts the flow for review. Trust deficits create paranoia: developers question whether their skills are atrophying, whether they're becoming dependent, and whether AI will replace them.

The 2026 response: intentional DevEx design. Organizations implementing AI tools with corresponding training programs, clear governance frameworks, and psychological safety measures see 30–40% higher sustained adoption and satisfaction. Those treating AI as pure productivity enhancement without DevEx consideration face backlash: developer resistance, tool abandonment, and retention problems.

One signal stood out: 73% of Copilot users report staying in flow state longer when using AI tools. That metric — sustaining deep focus — may be the best predictor of sustainable AI integration. When AI enables flow rather than disrupting it, developers embrace the collaboration. When it fragments attention, resistance emerges regardless of velocity gains.

12. The Talent Paradox: AI Tools Attract Top Performers But Don't Create Them

GitClear's analysis of Cursor, GitHub Copilot, and Claude Code revealed a startling pattern: developers using AI throughout the day author 4–10x more work than "AI non-users" during peak usage weeks. The gap is so extreme it suggests "something akin to dark matter for developer productivity" — the explanation transcends enlightened tool use.

The finding contradicts conventional wisdom. Early assumptions held that AI tools democratize development, letting average developers perform like experts. Reality reversed the equation: elite developers leverage AI to compound their existing advantages, creating exponentially wider skill gaps.

The data explains the mechanism. Top performers use AI differently. They provide richer context, craft better prompts, recognize when to accept versus reject suggestions, and integrate AI workflows seamlessly. Average developers treat AI like autocomplete; experts treat it like an extension of their reasoning process. The result: AI amplifies existing skill differences rather than leveling the field.

But the "dark matter" suggests additional factors. The developers showing 4–10x output increases during high AI usage weeks may already be high performers who selectively apply AI to appropriate tasks. Confounding variables abound: these developers might work on easier problems during AI-heavy periods, or they might have better project management support, or their codebases might be particularly amenable to AI assistance. The study can't definitively separate AI's contribution from developer skill.

The concerning edge: AI-heavy developers become "mired in code review," causing 9x higher rates of specific negative effects. Rapid code generation creates review bottlenecks downstream, shifting the burden onto teammates who must validate the increased output. Individual velocity gains become team velocity losses when review capacity saturates.

For organizations, this creates talent strategy dilemmas. Do you hire elite developers who 10x with AI, or solid mid-level engineers who maintain consistency? Do you invest in upskilling existing teams on AI collaboration, or recruit AI-native developers from the start? The 2026 pattern: companies split into two camps. Growth-stage startups prioritize AI force multipliers, hiring small teams of elite AI-adept developers. Enterprises focus on sustainability, standardization, and reducing dependency on individual stars.

The longer-term concern: knowledge transfer. When AI writes most code and senior developers orchestrate rather than implement, how do juniors learn? The traditional pathway — reading code, debugging issues, implementing features under mentorship — breaks when AI handles implementation. Some organizations address this through "AI apprenticeships," where juniors learn prompt engineering, context management, and output validation rather than syntax and algorithms. Others mandate "AI-free zones" where juniors build fundamentals without assistance. The right approach remains contested.

Conclusion: The 2026 Coding Reality

AI coding tools crossed the chasm from experimental to essential throughout 2025. By 2026, they're infrastructure, not innovation. The question shifted from "Should we adopt AI?" to "How do we govern, scale, and sustain AI-assisted development?"

The twelve trends aren't independent — they're interconnected forces reshaping software development simultaneously. Agentic AI demands context-aware systems and governance frameworks. Multimodal interfaces require prompt engineering expertise. Productivity gains create security vulnerabilities. Developer experience determines sustainability. Talent strategies must adapt to performance distribution changes.

Organizations succeeding in 2026 share common patterns: they implemented AI tools with corresponding governance, security, and review infrastructure from day one. They measured productivity through multidimensional frameworks valuing quality, sustainability, and satisfaction alongside velocity. They invested in upskilling existing teams rather than assuming AI democratizes development. They treated AI as a force multiplier requiring proportional investment in supporting systems, not as a cost-reduction mechanism replacing headcount.

The developers thriving in 2026 mastered skills previous generations didn't need: prompt engineering, context management, output validation, and AI orchestration. They understand AI as a collaborator, not a compiler. They know when to trust AI suggestions and when to override them. Most importantly, they adapted their workflows to leverage AI strengths while compensating for its weaknesses.

The stark reality: within eighteen months, AI coding tools transformed from curiosity to competitive necessity. Organizations not actively integrating AI into development workflows by mid-2026 will find themselves at a measurable disadvantage — not because AI makes better developers, but because it makes effective developers dramatically more productive. The gap between AI-augmented teams and traditional teams will widen throughout 2026 and beyond.

The future is already here — it's just not evenly distributed yet. But distribution accelerates daily. The 2026 imperative: understand these trends, adapt your workflows, and build the governance infrastructure to sustain AI-assisted development at scale. The alternative is falling behind competitors who did.
