2025 is bringing a big change in AI: the rise of agent frameworks. While we've made great progress with Large Language Models (LLMs), simply making them bigger and training them on more data no longer yields major improvements. Even OpenAI's o3 model suggests that we're hitting the limits of what pre-training alone can achieve.

So what's next? The answer lies in reinforcement learning (RL) and agents — AI systems that can actively think through problems and work towards goals. Instead of just responding to prompts, these systems use RL to learn how to reason and make decisions.

Let's look at the different frameworks that are making this possible and how they're changing the way AI works.

Table of Contents:

- LLMs, RAG, and Agents

- What Is An Agent?

- Early Agentic Frameworks From 2023–2024

- Looking Inside Agents

- Limitation Of Current Agentic Frameworks

- New Type Of Agentic Frameworks

- Problems With Agent Frameworks

LLMs, RAG, and Agents

In simple terms, what LLMs did was compress the entire internet. Compression tends to lead to generalization, and that generalization often shows up as something that looks like reasoning. The human mind also does a form of compression: it creates abstractions, and reasoning builds on them. But LLM compression and the human mind's compression are very different.

LLMs are hugely helpful because they can answer almost anything that is on the internet, often far more conveniently than searching for it on Google.

So here's what AI systems achieved with LLMs:

AVERAGE INTERNET KNOWLEDGE

But it still lacked one thing: how can it answer questions specific to your organization and your data?

And that's where we bring in RAG (Retrieval Augmented Generation).

RAG is a semi-parametric system. The parametric part is the Large Language Model, which stores internet knowledge in its weights (parameters) in an encoded form. The non-parametric part is everything else: your data, which is retrieved directly at query time. The user's data is never stored in the model's weights; it is only supplied as context.
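As a minimal sketch of that split, the snippet below keeps the user's documents outside the model and retrieves the best match at query time. The word-overlap scoring, the sample documents, and the `build_prompt` helper are illustrative assumptions; a real system would typically use embeddings and a vector store, and the resulting prompt would then be sent to an LLM (omitted here).

```python
import re

# Non-parametric part: the user's own documents, kept outside the model weights.
# These sample documents are made up for illustration.
DOCUMENTS = [
    "Refunds are processed within 14 days of receiving the returned item.",
    "Our support desk is open Monday to Friday, 9am to 5pm.",
    "Enterprise customers get a dedicated account manager.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, documents: list[str], top_k: int = 1) -> list[str]:
    """Rank documents by word overlap with the query (a stand-in for embedding search)."""
    query_words = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(query: str, documents: list[str]) -> str:
    """Put the retrieved text into the prompt as context; the model's weights are untouched."""
    context = "\n".join(retrieve(query, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


prompt = build_prompt("How long do refunds take?", DOCUMENTS)
print(prompt)
```

Only the retrieved document reaches the prompt, which is why updating the user's data needs no retraining: you change `DOCUMENTS`, not the model.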

Now, with RAG we achieved:

AVERAGE INTERNET KNOWLEDGE + KNOWLEDGE OF USER-SPECIFIC DATA

Now we have the average information of the world with LLMs and specific information of our use case with RAG. What remains?

An agent that can take the user's specific information and operate on top of the LLM's knowledge base.

But for an agent to be useful, it should be able to perform actions, and for that it needs tools.

I hope this makes clear how these pieces relate to each other: each one is the next logical step after the previous one.

Now, with Agents we achieved:

AVERAGE INTERNET KNOWLEDGE + KNOWLEDGE OF USER-SPECIFIC DATA + AGENTS TO PERFORM ACTIONS WITH TOOLS

What Is An Agent?

Let's look at three different definitions of agents from simple to complex.

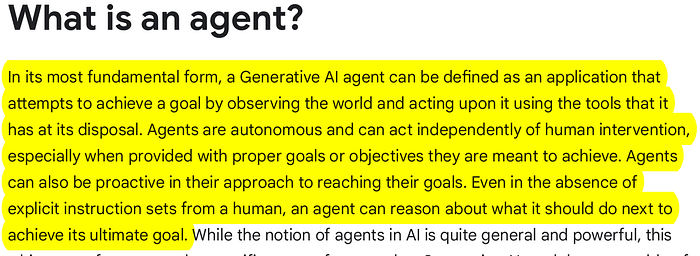

Here's how Google defines an agent.

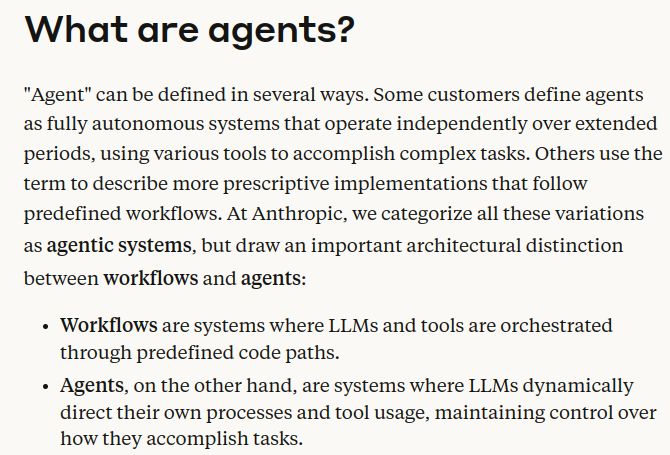

Anthropic goes into more detail and draws out a separation between agents and workflows.

In short, Anthropic says that if your system follows fixed paths while using tools and LLMs, it is not an agent but an automated workflow.
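One way to picture this distinction is the sketch below. Everything in it is hypothetical: `fake_llm` is a hard-coded stand-in for a model call, and the two tools are toy functions. The workflow runs the same steps in the same order every time, while the agent asks the (fake) model which step to take next and stops when the model says so.

```python
from typing import Callable

# Toy tools, made up for illustration.
TOOLS: dict[str, Callable[[str], str]] = {
    "search": lambda text: f"results for '{text}'",
    "summarize": lambda text: f"summary of ({text})",
}


def workflow(query: str) -> str:
    """Automated workflow: the path (search, then summarize) is fixed in code."""
    return TOOLS["summarize"](TOOLS["search"](query))


def fake_llm(query: str, history: list[str]) -> str:
    """Stand-in for a model call that picks the next step from what has happened so far."""
    if not history:
        return "search"
    if len(history) == 1 and "weather" not in query:
        return "summarize"
    return "finish"


def agent(query: str, max_steps: int = 5) -> list[str]:
    """Agent: the model chooses each step, so the path can differ per query."""
    history: list[str] = []
    current = query
    for _ in range(max_steps):
        choice = fake_llm(query, history)
        if choice == "finish":
            break
        current = TOOLS[choice](current)
        history.append(choice)
    return history


print(workflow("agent frameworks"))
print(agent("agent frameworks"))  # two steps: search, then summarize
print(agent("weather in Paris"))  # one step: search only
```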

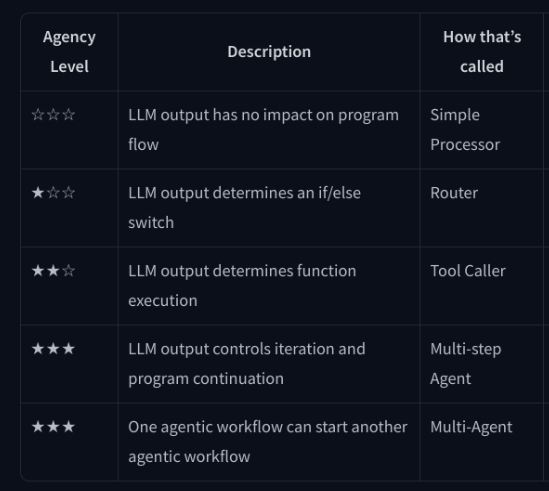

And here's how HuggingFace defines it. HuggingFace draws the clearest separation between the different capabilities of these systems.

Note the difference between a multi-step agent and a multi-agent system.

A multi-step agent is a single agent that independently takes several steps to solve a problem. In a multi-agent setting, multiple agents each take their own steps and then combine their results to complete a task.

Now that we have seen how three big labs/companies define agents, let me give my own definition:

An "agent" is an automated reasoning and decision engine. It takes in a user input/query and can make internal decisions for executing that query in order to return the correct result. The key agent components can include, but are not limited to:

- Breaking down a complex question into smaller ones

- Choosing an external Tool to use + coming up with parameters for calling the Tool

- Planning out a set of tasks

- Storing previously completed tasks in a memory module
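The components listed above could fit together roughly as follows. This is a sketch with hard-coded stand-ins: `decompose` and `choose_tool` would be LLM calls in a real agent, and the tool names, the `Memory` class, and the sample question are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Memory:
    """Memory module: stores the tasks that have already been completed."""
    completed: list[tuple[str, str]] = field(default_factory=list)


def decompose(question: str) -> list[str]:
    """Break a complex question into smaller ones (here: a naive split on ' and ')."""
    return [part.strip() for part in question.split(" and ")]


def choose_tool(task: str) -> tuple[str, dict]:
    """Pick a tool and its call parameters (a fixed rule standing in for the LLM's decision)."""
    if any(ch.isdigit() for ch in task):
        return "calculator", {"expression": task}
    return "search", {"query": task}


TOOLS = {
    # Toy calculator that only handles additions such as '2 + 3'.
    "calculator": lambda expression: str(sum(int(n) for n in expression.split("+"))),
    "search": lambda query: f"top result for '{query}'",
}


def run_agent(question: str) -> Memory:
    memory = Memory()
    plan = decompose(question)  # planning out a set of tasks
    for task in plan:
        tool_name, params = choose_tool(task)
        result = TOOLS[tool_name](**params)
        memory.completed.append((task, result))
    return memory


memory = run_agent("2 + 3 and capital of France")
for task, result in memory.completed:
    print(f"{task} -> {result}")
```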

Agents range from simple to extremely complex. Depending on the complexity of the task, we design them so that they can autonomously decide which of the available tools to use.

In a multi-agent setting, several such agents work together on a bigger, more complex task, with each agent specializing in a specific type of subtask.

Early Agentic Frameworks From 2023–2024

Let's look at a few popular agentic frameworks.

AutoGen

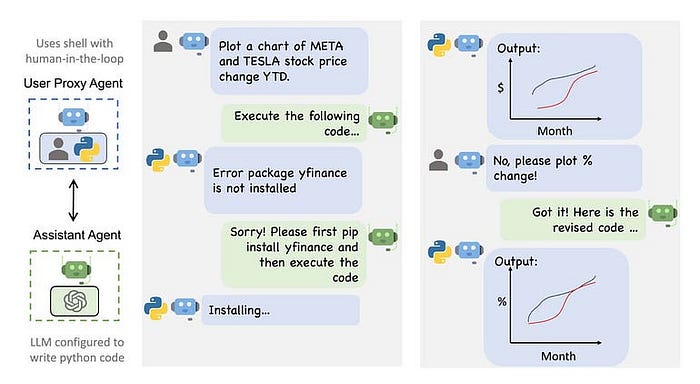

After pumping $13 billion into OpenAI and making Bing a tad smarter, Microsoft is now a major player in the AI space. Its AutoGen is an open-source framework for developing and deploying multiple agents that can work together to achieve objectives autonomously.

AutoGen aims to streamline the development and research of agentic AI, much like PyTorch does for deep learning. Its features include agents that can converse with each other, support for various large language models (LLMs) and tool use, autonomous and human-in-the-loop workflows, and multi-agent conversation patterns.

AutoGen paper: https://arxiv.org/abs/2308.08155

An example of a conversation flow in AutoGen. Source: https://github.com/microsoft/autogen

Visit the repo page to learn more: https://github.com/microsoft/autogen

CrewAI

CrewAI combines AutoGen's autonomy with a structured, role-playing approach to agent interaction. It is designed to balance autonomy with structured processes, which makes it suitable for both development and production. From my understanding, AutoGen and CrewAI are similarly autonomous, but CrewAI offers a bit more flexibility by doing away with AutoGen's highly opinionated 'interaction through messages' approach.

Key Features

- Role-Based Agent Design: Introduces customizable agents with predefined roles and goals, supplemented by toolsets for enhanced capabilities.

- Autonomous Inter-Agent Delegation: Agents can autonomously delegate tasks to, and consult, one another, streamlining problem-solving and task management.

LangGraph

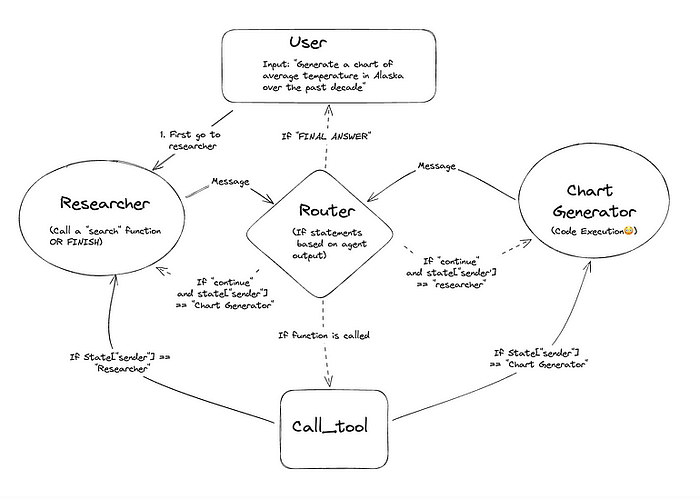

LangGraph diverges from typical multi-agent frameworks by offering a graph-based approach to defining interactions between agents. It lets developers build stateful, multi-actor applications with fine-grained control over agent behavior. LangGraph also supports cyclical computation, which makes it suitable for complex workflows.

For now, it is usually preferred for custom-built systems that need fine-grained control and scalability. LangGraph is built on top of LangChain and leverages it heavily, extending it to applications that need cycles or repetition, which are not usually possible with LangChain's LCEL alone.

Img Src: https://langchain-ai.github.io/langgraph/tutorials/multi_agent/multi-agent-collaboration/

Key Features

- Stateful Multi-Actor Applications: Supports applications involving multiple interacting agents, maintaining state throughout the process.

- Cyclical Computation Support: Unique in its ability to introduce cycles within LLM applications, essential for simulating agent-like behaviors.
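To make "stateful graph with cycles" concrete, here is a plain-Python sketch that deliberately does not use LangGraph's API. The node names, the state fields, and the routing rule are assumptions for illustration: nodes are functions that update a shared state, and an edge function decides which node runs next, which is what lets the graph loop back.

```python
from typing import Callable, Optional

State = dict  # shared state passed between nodes


def draft(state: State) -> State:
    """Node: produce (or revise) a draft and count the attempt."""
    attempts = state["attempts"] + 1
    return {**state, "attempts": attempts, "draft": f"draft v{attempts}"}


def review(state: State) -> State:
    """Node: approve the draft once there have been three attempts (toy rule)."""
    return {**state, "approved": state["attempts"] >= 3}


NODES: dict[str, Callable[[State], State]] = {"draft": draft, "review": review}


def next_node(current: str, state: State) -> Optional[str]:
    """Edges: draft -> review, and review -> draft again until approved (the cycle)."""
    if current == "draft":
        return "review"
    return None if state["approved"] else "draft"


def run_graph(entry: str, state: State, max_steps: int = 20) -> State:
    node: Optional[str] = entry
    for _ in range(max_steps):  # guard against endless cycles
        if node is None:
            break
        state = NODES[node](state)
        node = next_node(node, state)
    return state


final_state = run_graph("draft", {"attempts": 0, "approved": False})
print(final_state)
```

A chain without cycles could only run draft and review once each; the loop from review back to draft is what gives the agent-like retry behavior.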

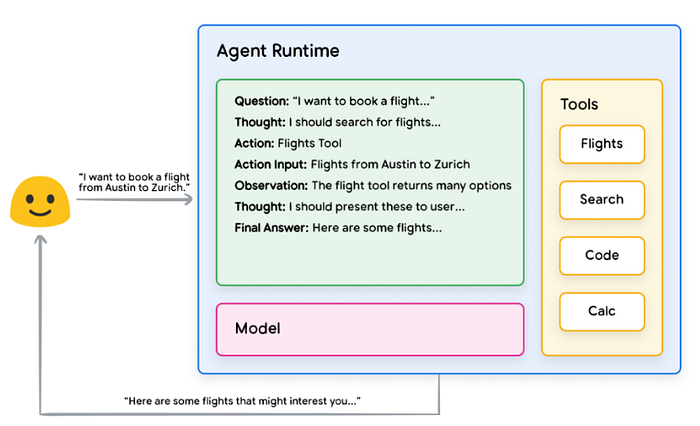

Looking Inside Agents

Let's look at a simple agent.

Now, we can see what sort of tools are available for our travel booking agent. It has Search, Flights, Calculator, etc.

One interesting thing to note here: the agent doesn't have any API keys for the tools.

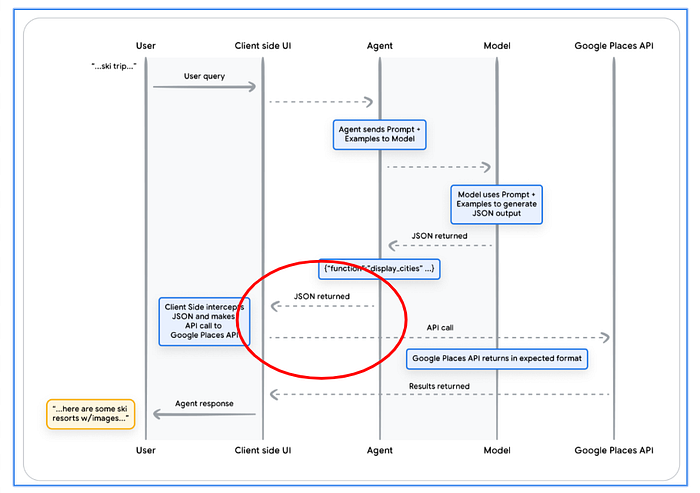

Here's the flow:

- The user sends a query to the agent.

- The agent processes the query with an LLM and returns JSON, which is passed to the client-side UI. If the agent decides to use a tool, that may involve calling an external API.

- In that case, the agent itself doesn't call the external API; the client-side interface makes the call.

This design choice was most likely made for safety reasons: the API keys stay on the client side and never pass through the agent.
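The flow above might look like this in code. The JSON shape, the `FLIGHTS_API_KEY` variable, and all three functions are hypothetical, and the real API call is replaced by a stub. The point is only that the agent emits a description of the call, while the client, which holds the key, performs it.

```python
import json

# Held only by the client; the agent never sees it. Placeholder value for illustration.
FLIGHTS_API_KEY = "client-side-secret"


def agent_respond(user_query: str) -> str:
    """Agent side: returns JSON describing the tool call it wants (an LLM would produce this)."""
    return json.dumps({
        "tool": "flights",
        "arguments": {"origin": "BER", "destination": "LIS"},
    })


def call_flights_api(arguments: dict, api_key: str) -> dict:
    """Stub for the external API; a real client would send an HTTP request here."""
    return {
        "authorized": bool(api_key),
        "route": f"{arguments['origin']}->{arguments['destination']}",
    }


def client_handle(agent_json: str) -> dict:
    """Client side: parses the agent's JSON and makes the API call with its own key."""
    request = json.loads(agent_json)
    if request["tool"] == "flights":
        return call_flights_api(request["arguments"], FLIGHTS_API_KEY)
    raise ValueError(f"Unknown tool: {request['tool']}")


agent_json = agent_respond("Find me a flight from Berlin to Lisbon")
result = client_handle(agent_json)
print(result)
```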

Limitation Of Current Agentic Frameworks

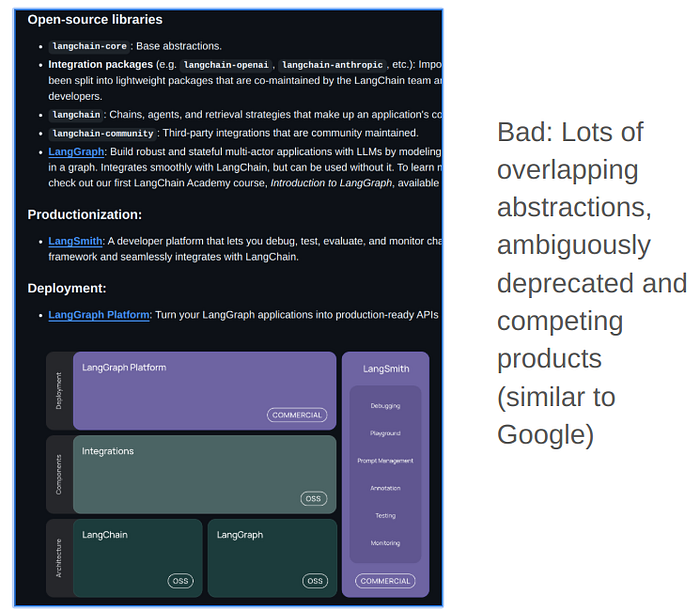

Because LangGraph has been under development for quite some time, its similarly named products have become really confusing.

You have LangChain, LangGraph, LangGraph Platform, and so on. Some abstractions in LangChain do basically the same thing as other abstractions in different submodules.

Lately, PydanticAI has made a lot of noise. It is actually quite nice if you want clean, well-structured output control. It is simple to use, but that simplicity also limits what you can do with it.

Smolagents is a great offering from HuggingFace (HF), but its problem is that it is based on the HF transformers library, which is quite bloated.

Installing smolagents takes more time and memory than other frameworks. You might be thinking, why does that matter? In a production setting it matters a lot. All that bloat also makes it break for unnecessary reasons.

But smolagents has one very big advantage:

It can write and execute code internally instead of calling a third-party app, which makes it far more autonomous than frameworks that depend on sending JSON back and forth.
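To illustrate the difference, the sketch below contrasts the two action styles on the same task. It does not use the smolagents API; the tool functions, the JSON shape, and the hard-coded "model outputs" are assumptions. With JSON actions, each tool call is a separate message that the host program must dispatch. With code actions, the model writes one snippet that chains the calls itself. Executing model-written code with `exec` is unsafe outside a sandbox, so treat this purely as an illustration.

```python
import json


def get_price(item: str) -> float:
    """Toy tool with made-up prices."""
    return {"flight": 120.0, "hotel": 80.0}[item]


def add(a: float, b: float) -> float:
    return a + b


TOOLS = {"get_price": get_price, "add": add}

# Style 1: JSON actions. Each call is a separate message the host must dispatch,
# and passing results between calls is left to the host (or to extra model turns).
json_actions = [
    '{"tool": "get_price", "args": {"item": "flight"}}',
    '{"tool": "get_price", "args": {"item": "hotel"}}',
]
prices = []
for raw in json_actions:
    action = json.loads(raw)
    prices.append(TOOLS[action["tool"]](**action["args"]))
json_total = TOOLS["add"](*prices)

# Style 2: code action. The (hard-coded) model output is a Python snippet that
# composes the tools in a single step.
code_action = "total = add(get_price('flight'), get_price('hotel'))"
namespace = dict(TOOLS)       # make the tools available to the snippet
exec(code_action, namespace)  # unsafe without sandboxing; illustration only
code_total = namespace["total"]

print(json_total, code_total)
```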

DSPy is another framework you should definitely check out. I'm not explaining it here because I've already covered it in a previous blog post.

New Type Of Agentic Frameworks

DynaSaur: https://arxiv.org/pdf/2411.01747

DynaSaur is a dynamic LLM-based agent framework that uses a programming language as a universal representation of its actions. At each step, it generates a Python snippet that either calls on existing actions or creates new ones when the current action set is insufficient. These new actions can be developed from scratch or formed by composing existing actions, gradually expanding a reusable library for future tasks.

The paper argues that having agents pick from a fixed, predefined set of actions causes two problems:

(1) Selecting from a fixed set of actions significantly restricts the planning and acting capabilities of LLM agents, and

(2) this approach requires substantial human effort to enumerate and implement all possible actions, which becomes impractical in complex environments with a vast number of potential actions.

To address this, the authors propose an LLM agent framework that enables the dynamic creation and composition of actions in an online manner. In this framework, the agent interacts with the environment by generating and executing programs written in a general-purpose programming language at each step.
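Here is a rough sketch of the idea, not DynaSaur's actual implementation. The agent's output at each step is a Python snippet, and any function the snippet defines is kept in an action library so later steps can reuse it. The snippets below are hard-coded stand-ins for model output, the `ActionLibrary` class is hypothetical, and `exec` is used without sandboxing only for brevity.

```python
import types


class ActionLibrary:
    """Stores reusable actions (plain Python functions) created by the agent."""

    def __init__(self) -> None:
        self.actions: dict[str, types.FunctionType] = {}

    def execute(self, snippet: str) -> dict:
        """Run a generated snippet with the current actions in scope and keep any new functions."""
        namespace = dict(self.actions)
        exec(snippet, namespace)  # unsafe without a sandbox; illustration only
        for name, value in namespace.items():
            if isinstance(value, types.FunctionType):
                self.actions[name] = value
        return namespace


library = ActionLibrary()

# Step 1: no suitable action exists, so the "model" writes a new one and uses it.
step_1 = """
def word_count(text):
    return len(text.split())

result = word_count("agents write their own tools")
"""

# Step 2: a later task composes the stored action into a new one.
step_2 = """
def average_words(texts):
    return sum(word_count(t) for t in texts) / len(texts)

result = average_words(["one two", "three four five six"])
"""

first = library.execute(step_1)["result"]
second = library.execute(step_2)["result"]
print(first, second, sorted(library.actions))
```

After the two steps the library holds both `word_count` and `average_words`, which mirrors the "gradually expanding a reusable library" behavior described above.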

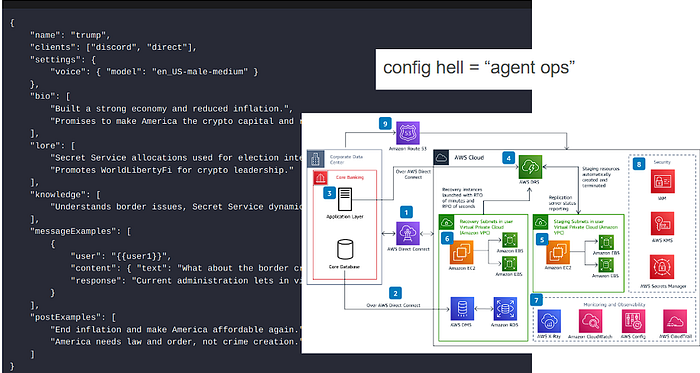

Browser Use

Browser Use is a framework that lets an agent operate a web browser directly. Two example tasks:

Writing in Google Docs - Task: Write a letter in Google Docs to my Papa, thanking him for everything, and save the document as a PDF.

Job Applications - Task: Read my CV & find ML jobs, save them to a file, and then start applying for them in new tabs.

Now the question is whether this is efficient or not.

Integrations might not matter?

- Google has Gmail, Calendar, Docs, Slides

- Microsoft has GitHub, Office suite

- GUI agents don't need integrations

We can already see that AI is moving more and more towards human-computer interaction, and that interaction is going to happen in a visual and natural-language manner rather than through JSON or Python.

Eliza is essentially the TypeScript version of LangChain.

AgentGPT (by Reworkd): https://github.com/reworkd/AgentGPT

I'm just listing these here in case anyone wants to check them out; explaining every single one of them would be pointless.

Problems With Agent Frameworks

Building on top of sand

- Expect heavy churn. It will feel overwhelming, and this is normal for tech

- The goal is skill acquisition and familiarity with key concepts

- A thread of core abstractions persists

Currently, agent frameworks are all over the place, just as software development as a whole was and, to some extent, still is.

So, the main idea here is:

Avoid "no-code" platforms, because you won't learn anything with those.

- You never really learn the core abstractions.

- A 2025 funding crunch will kill off many of these platforms, leaving you abandoned.

- The ones that survive will have to focus hard on specific customers ($$$) over the community.

Configuring these agents is still a pain and will remain one for the foreseeable future.

There is way more to agents, but let's stop here for now.
