There were reports of users getting flagged right from the first week of launch. And then it happened — Anthropic blocked Claude Max tokens from being used in OpenClaw altogether.

My other concern was cost.

Running Claude Sonnet on OpenClaw daily was eating through API credits faster than I expected. Every message sends the full conversation history to the model.
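To see why resending the history gets expensive, here is a rough sketch of the arithmetic in Python. It assumes every turn adds the same number of tokens and that the whole history goes out with each request; the function name and the 500-token figure are illustrative assumptions, not measurements from OpenClaw.

```python
def cumulative_input_tokens(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens sent when each request resends the full history.

    Turn k sends k * tokens_per_turn tokens, so the total over `turns`
    turns is tokens_per_turn * (1 + 2 + ... + turns).
    """
    return tokens_per_turn * turns * (turns + 1) // 2


# Illustrative figures only: 500 tokens added per turn.
short_session = cumulative_input_tokens(10, tokens_per_turn=500)
long_session = cumulative_input_tokens(100, tokens_per_turn=500)
```

Under these assumptions, ten turns add up to 27,500 input tokens, while a hundred turns add up to 2,525,000. The session is ten times longer, but the bill is roughly ninety times bigger.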

So I started looking for free alternatives.

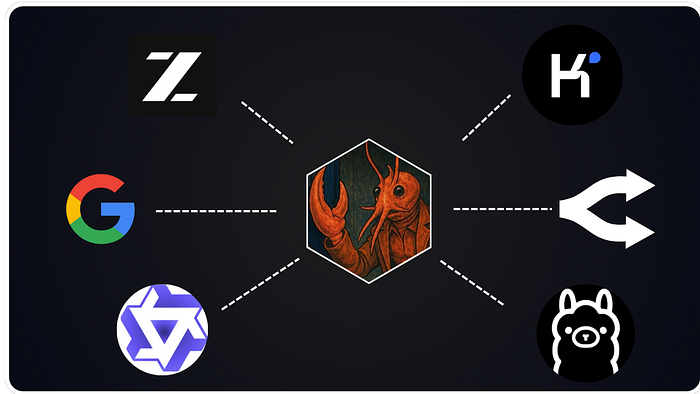

After weeks of testing different models, providers, and configurations, I found 5 models that work with OpenClaw.

Some run locally on your machine. Others use free cloud tiers that are good enough to get the work done.

What I learned is that "free" means different things depending on the route you take. Local models cost nothing per token but need decent hardware.

Free cloud tiers give you a quota that resets daily. And some models are so cheap they round to zero.

In this article, I'll break down the 5 models I use, why I use them, and how to set each one up in OpenClaw.

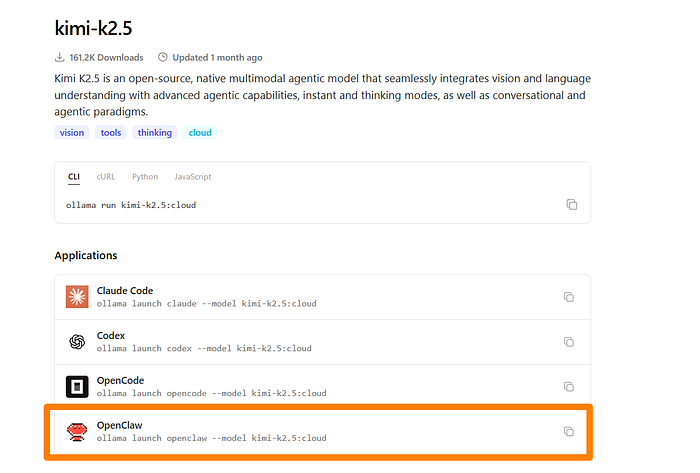

1. Kimi K2.5

Kimi K2.5 from Moonshot AI is the most popular free model on OpenRouter right now.

I started using it as a temporary fallback while I figured out my setup. It ended up becoming my primary model for OpenClaw.

- The reasoning quality is close to Claude Sonnet

- Tool calling works

- The 128K context window handles long OpenClaw sessions without losing track of earlier messages.

- It's ideal for an agent that reads emails, manages calendars, and runs automations

Moonshot has announced permanent free access on its platform. On OpenRouter, the free tier gives you roughly 20 requests per minute with daily resets.

Setting it up:

openclaw config set agents.defaults.model.primary "openrouter/moonshotai/kimi-k2.5"

If you use Ollama, there's an even faster route. Kimi K2.5 is available as a cloud model on Ollama, so you can launch it directly with OpenClaw in one line:

ollama launch openclaw --model kimi-k2.5:cloud

Best Use Case: General-purpose agent work, coding tasks, research, and content drafting. This is the model I recommend starting with if you want free cloud inference.

Limitation: Rate limits on the free tier mean heavy users will hit walls during busy sessions.
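If you script your own calls against a free tier, a small client-side throttle helps you stay under the limit instead of bouncing off it. The sketch below is a generic sliding-window limiter, using the rough 20 requests per minute figure mentioned above as its default. The class is hypothetical and not part of OpenClaw or OpenRouter, and the real limit is whatever the provider enforces on its side.

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Allow at most `max_requests` calls in any `window_seconds` span."""

    def __init__(self, max_requests: int = 20, window_seconds: float = 60.0,
                 clock=time.monotonic):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.clock = clock    # injectable so the limiter can be tested without sleeping
        self._sent = deque()  # timestamps of recent requests, oldest first

    def wait_time(self) -> float:
        """Seconds to wait before the next request is allowed (0.0 if none)."""
        now = self.clock()
        # Drop timestamps that have left the window.
        while self._sent and now - self._sent[0] >= self.window_seconds:
            self._sent.popleft()
        if len(self._sent) < self.max_requests:
            return 0.0
        # The window is full: wait until the oldest request ages out.
        return self.window_seconds - (now - self._sent[0])

    def record(self) -> None:
        """Call this right after sending a request."""
        self._sent.append(self.clock())
```

Before each request, call `wait_time()`, sleep for that long if it is non-zero, then send the request and call `record()`.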

2. Qwen via OAuth

Most people skip Qwen when looking for free OpenClaw models.

- OpenClaw has a built-in plugin for Qwen's OAuth flow

- You authenticate once through a device code, and you get access to Qwen Coder and Qwen Vision models with 2,000 free requests per day

- Qwen 3.5 has native agentic capabilities; it was built to think, search, use tools, and build.

Here's the setup:

openclaw plugins enable qwen-portal-auth

openclaw models auth login --provider qwen-portal --set-default

After login, your model references look like qwen-portal/coder-model or qwen-portal/vision-model.

The tokens auto-refresh, so you don't have to keep logging in.

I wouldn't run a whole team on this. But for a personal OpenClaw agent handling coding tasks, file reads, and daily automations, the Qwen free tier gets the job done.

Best Use Case: Coding tasks, vision-based workflows, and anyone who wants a reliable free tier without juggling multiple API keys.

Limitation: If the OAuth token expires or gets revoked, you need to re-run the login command.

3. Gemini Flash

Google's Gemini Flash is the best free cloud option if you want a big context window.

- The free tier gives you 15 requests per minute and up to 1 million tokens of context

- For OpenClaw sessions that grow long, that context length is a real advantage over most free alternatives.

I use Gemini Flash as my secondary model. When Kimi K2.5 hits its rate limit, Gemini picks up.

Add it to your OpenClaw config like this:

{
  "models": {
    "providers": {
      "google": {
        "models": [
          { "id": "gemini-3-flash", "name": "Gemini 3 Flash" }
        ]
      }
    }
  }
}

You'll need a GEMINI_API_KEY from Google's AI Studio. The free tier API key takes about two minutes to generate.
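Hand-edited config is easy to break with a stray brace or comma. As a quick sanity check, this Python sketch parses the same fragment and lists the model ids under each provider. It assumes the config is strict JSON (a file with comments or trailing commas would be rejected here), and the helper name is my own, not an OpenClaw API.

```python
import json

# The same fragment as above, held as a string for illustration.
CONFIG_TEXT = """
{
  "models": {
    "providers": {
      "google": {
        "models": [
          { "id": "gemini-3-flash", "name": "Gemini 3 Flash" }
        ]
      }
    }
  }
}
"""


def list_model_ids(config_text: str) -> dict[str, list[str]]:
    """Map each provider name to the model ids declared under it.

    Raises json.JSONDecodeError if the text is not valid JSON.
    """
    config = json.loads(config_text)
    providers = config.get("models", {}).get("providers", {})
    return {
        name: [model["id"] for model in provider.get("models", [])]
        for name, provider in providers.items()
    }
```

If the fragment has a syntax slip, `json.loads` raises an error that points at the offending line and column.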

Best Use Case: Long conversations, document summarization, research tasks, and as a reliable fallback when your primary free model hits its daily cap.

Limitation: Google's free tier may use your data for training. For side projects and learning, that's probably fine. For proprietary code or sensitive client work, use a paid tier or run local.

4. OpenRouter Free Models

OpenRouter isn't a model, but a gateway that gives you access to dozens of models from different providers through a single API key.

- What makes it useful for OpenClaw is the :free suffix. Append it to supported models, and you get zero-cost inference.

- The available free models rotate over time, but there's usually a solid selection available

- For example, you can configure Kimi K2.5 as your primary through OpenRouter, add a Llama model as your first fallback, and set Gemini as the last resort
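The model references in this article follow a provider/model pattern with an optional suffix after a colon. The Python sketch below splits a reference into those parts and appends :free when it is missing. It reflects my reading of the strings shown here, not OpenClaw's own parser, and adding :free only helps for models OpenRouter actually offers for free.

```python
from typing import NamedTuple, Optional


class ModelRef(NamedTuple):
    provider: str           # e.g. "openrouter"
    model: str              # e.g. "meta-llama/llama-3.2-3b-instruct"
    variant: Optional[str]  # e.g. "free", or None when there is no suffix


def parse_model_ref(ref: str) -> ModelRef:
    """Split 'provider/model[:variant]' into its parts."""
    provider, _, rest = ref.partition("/")
    model, sep, variant = rest.rpartition(":")
    if not sep:  # no ':' present, so the whole remainder is the model id
        return ModelRef(provider, rest, None)
    return ModelRef(provider, model, variant)


def with_free_suffix(ref: str) -> str:
    """Return the reference with ':free' appended unless it is already there."""
    return ref if ref.endswith(":free") else f"{ref}:free"
```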

A basic free setup looks like this:

{
  "agents": {
    "defaults": {
      "model": {
        "primary": "openrouter/meta-llama/llama-3.2-3b-instruct:free"
      }
    }
  }
}

OpenClaw also has a built-in scanner that checks which free models are currently available on OpenRouter and tests them for tool calling and image support:

openclaw models scan

This command ranks available free models by capability and lets you pick your primary and fallbacks interactively.

It saves you from manually checking which free models work with OpenClaw's tool calling requirements.

Best Use Case: Users who want maximum flexibility and automatic failover between free models. Also great for experimenting with different models without changing provider configs each time.

Limitation: Free models on OpenRouter change. A model that's free today might not be tomorrow. Check back and keep your fallbacks updated.

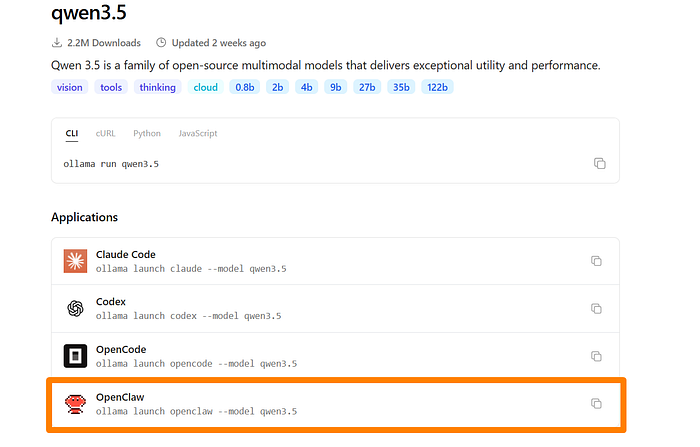

5. Qwen 3.5 via Ollama

Every model I've covered so far depends on someone else's server. Qwen 3.5 running locally through Ollama depends on your hardware.

- I picked Qwen 3.5 because Alibaba built it with agentic workflows in mind.

- It handles tool calling, multi-step reasoning and code generation better than most open-source models at similar sizes.

- The 9B version runs on most modern laptops with 16GB RAM. The 27B version needs 32GB+ but delivers better results on complex tasks.

- If you have an M-series Mac or a dedicated GPU, the experience is smooth.
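A back-of-the-envelope way to judge whether a model fits your machine is to estimate the memory its weights need. The Python sketch below assumes 4-bit quantization by default, a common choice for local models, and counts the weights only. It is a rough guide I am adding for illustration, not how the RAM figures above were derived.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int = 4) -> float:
    """Approximate memory for the model weights alone, in gigabytes.

    params * bits / 8 gives bytes; with params in billions the result is
    already in GB (10^9 bytes). KV cache, activations, and runtime
    overhead are not included, so real usage is higher.
    """
    return params_billions * bits_per_weight / 8


small = weight_memory_gb(9)        # 9B model at 4 bits per weight
large = weight_memory_gb(27)       # 27B model at 4 bits per weight
unquantized = weight_memory_gb(9, bits_per_weight=16)
```

Under these assumptions the 9B weights take about 4.5 GB and the 27B weights about 13.5 GB, which leaves room for the operating system, OpenClaw, and the context cache. The same 9B model at 16 bits per weight would need about 18 GB for the weights alone.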

Ollama recently added a one-command launch for OpenClaw.

ollama launch openclaw --model qwen3.5

That single command pulls the model, configures the provider, and connects it to OpenClaw.

If you prefer manual control, you can still install Ollama separately, pull the model with ollama pull qwen3.5, and wire up the provider config yourself. But for most people, the launch command is enough.

Best Use Case: Privacy-first setups, offline work, sensitive data you don't trust to any cloud, and developers who want to keep costs as close to zero as possible.

Limitation: You need decent hardware. The 9B model works on 16GB RAM but struggles with complex multi-step tasks.

Final Thoughts

After weeks of testing, this is the configuration I settled on:

- Primary: Kimi K2.5 — handles most daily tasks for free

- Fallback 1: Qwen 3.5 locally via Ollama — picks up when rate limits hit

- Fallback 2: Gemini Flash — ideal for heavy use with that 1M token context
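OpenClaw handles the failover itself once the models are configured, but the idea behind a chain like this is simple enough to sketch in Python. Everything here is hypothetical: the `RateLimited` error and the callables stand in for real model clients and are not OpenClaw APIs.

```python
from typing import Callable, Sequence


class RateLimited(Exception):
    """Hypothetical error a model call raises when its quota is exhausted."""


def ask_with_fallbacks(
    prompt: str,
    models: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each (name, call) pair in order.

    Returns (name, reply) from the first model that answers, and raises
    RuntimeError if every model in the chain is rate limited.
    """
    for name, call in models:
        try:
            return name, call(prompt)
        except RateLimited:
            continue  # this model is out of quota, move on to the next one
    raise RuntimeError("every model in the chain is rate limited")
```

The order of the list is the priority order, so the primary goes first and the last resort goes last.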

I stopped running OpenClaw with Claude models since there are several free options.

If you're experimenting with any of these models, leave a comment below. I'd love to hear what setup is working for you.

Let's Connect!

If you are new to my content, my name is Joe Njenga
