Many AI products today still operate on static datasets. They extract data (for example, from a database or a text file), send it to a model, receive a response, and their job is done. But what if the data never stops flowing, and actions need to be taken continuously in response to it?

Let's assume your AI needs to:

- Monitor live KPIs

- Analyze IoT sensor streams

- Detect anomalies in real time

- Trigger automated actions

This is where Apache Flink and AI Agents become very interesting.

Idea

I am a big fan of Flink, and like many other engineers I'm also very interested in AI. I wanted to test such a use case, and ideally to run it on my laptop without cloud costs.

The goal was simple:

Build a real-time Smart Home monitoring agent that consumes streaming sensor data and makes intelligent decisions.

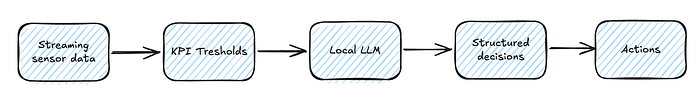

The flow looks like this:

And everything runs inside a Flink job.

The Agent

In this project, I mainly followed the documentation from the Flink community page. It provides practical examples in both Java and Python, and explains the integration with Large Language Models through the Ollama framework.

My implementation is written in Java, and the full source code is available here. I used IntelliJ IDEA for development, but any standard IDE would work.

To run the app locally, you first need to install Ollama and download the required model.

On macOS, this can be done with:

    curl -fsSL https://ollama.com/install.sh | sh
    ollama pull qwen3:8b

After installation, you can verify that everything is working correctly by running:

    curl http://localhost:11434/api/tags

If everything works fine, all available models should be returned in JSON format.
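The same check can also be done from Java, which may be handy if the application should fail fast when Ollama is not running. The snippet below is a minimal sketch that uses only the JDK's built-in `java.net.http` client. The class name `OllamaCheck`, the method `tagsRequest`, and the timeout values are illustrative choices and not part of the project; the URL is simply the one from the curl command above.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.time.Duration;

class OllamaCheck {

    // Default local Ollama address, taken from the curl command above.
    static final String BASE_URL = "http://localhost:11434";

    // Builds a GET request for the endpoint that lists locally available models.
    static HttpRequest tagsRequest(String baseUrl) {
        return HttpRequest.newBuilder()
                .uri(URI.create(baseUrl + "/api/tags"))
                .timeout(Duration.ofSeconds(5)) // illustrative timeout
                .GET()
                .build();
    }

    public static void main(String[] args) {
        HttpClient client = HttpClient.newBuilder()
                .connectTimeout(Duration.ofSeconds(2)) // illustrative timeout
                .build();
        try {
            HttpResponse<String> response =
                    client.send(tagsRequest(BASE_URL), HttpResponse.BodyHandlers.ofString());
            System.out.println("HTTP " + response.statusCode());
            System.out.println(response.body());
        } catch (IOException e) {
            // Most likely the Ollama server is not running on this machine.
            System.out.println("Ollama is not reachable: " + e);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }
}
```

If the server is up, the printed body should be the same JSON that the curl command returns.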

I selected the qwen3:8b model because, for this type of real-time classification and recommendation task, the model needs to consistently return well-structured JSON while reasoning over multiple sensor signals. In my case, qwen3:8b provided that stability without requiring extra GPUs or heavy computational resources.

That said, model choice is flexible. Depending on your hardware, latency requirements, and desired output quality, you can experiment with different models until you find the right fit.

What The Agent Actually Does

Let me explain what happens under the hood.

The agent receives messages from IoT devices in the following format:

    {
      "homeId": "h1",
      "tempC": 29.4,
      "humidityPct": 67,
      "co2ppm": 1650,
      "powerW": 780
    }

Then it sends the message to the LLM with a strict prompt that forces structured JSON output:

    Prompt.fromText(
        "Return ONLY valid JSON with these fields:\n" +
        "- comfort: OK | WARNING | CRITICAL\n" +
        "- issues: array of short strings\n" +
        "- energyAnomaly: boolean\n" +
        "- recommendedActions: array of short strings"
    );

The model then returns a message like this:

    {
      "comfort": "WARNING",
      "issues": ["High CO2 level", "Heat stress"],
      "energyAnomaly": false,
      "recommendedActions": ["Increase ventilation"]
    }

The output above is not just a simple LLM response.

Behind the scenes, the agent first evaluates the raw sensor values using deterministic Java logic. Thresholds such as high CO₂ levels or abnormal power usage are checked explicitly in code to ensure reliability and predictability.
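To make this concrete, here is a minimal sketch of what such deterministic checks could look like. It is not the project's actual code: the `SensorReading` record mirrors the message format shown earlier, while the class name, the method names, and all threshold values are assumptions picked purely for illustration.

```java
import java.util.ArrayList;
import java.util.List;

class ThresholdRules {

    // Mirrors the fields of the sensor message shown earlier.
    record SensorReading(String homeId, double tempC, double humidityPct,
                         double co2ppm, double powerW) {}

    // Hypothetical limits, chosen only for illustration.
    static final double MAX_TEMP_C = 28.0;
    static final double MAX_HUMIDITY_PCT = 65.0;
    static final double MAX_CO2_PPM = 1500.0;
    static final double MAX_POWER_W = 3000.0;

    // Returns the comfort-related issues found by plain threshold comparisons.
    static List<String> comfortIssues(SensorReading r) {
        List<String> issues = new ArrayList<>();
        if (r.tempC() > MAX_TEMP_C) issues.add("High temperature");
        if (r.humidityPct() > MAX_HUMIDITY_PCT) issues.add("High humidity");
        if (r.co2ppm() > MAX_CO2_PPM) issues.add("High CO2 level");
        return issues;
    }

    // Flags power draw above the fixed limit.
    static boolean energyAnomaly(SensorReading r) {
        return r.powerW() > MAX_POWER_W;
    }

    public static void main(String[] args) {
        SensorReading sample = new SensorReading("h1", 29.4, 67, 1650, 780);
        System.out.println(comfortIssues(sample)); // [High temperature, High humidity, High CO2 level]
        System.out.println(energyAnomaly(sample)); // false
    }
}
```

Because these checks are plain comparisons, the same reading always produces the same result, regardless of what the model later says.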

Once the signals are validated, the LLM interprets the overall situation. It classifies the comfort level, summarizes the issues, and generates human-readable recommendations.

In other words:

- The code guarantees safety and rule enforcement.

- The model provides reasoning and explanation.

- Flink orchestrates everything in real time.

Why Flink?

Flink is what turns this example from a simple demo into something closer to a real system.

When working with live data, we need something more than just a model call. We need continuous processing, state management, parallel execution, and fault tolerance. That is exactly where Flink fits in.

Any logic on the data streams runs continuously within the Flink job. Flink keeps state per key, which allows each entity (such as each home) to be evaluated independently and consistently. It executes in parallel, supports low-latency processing, and is designed to recover from failures.
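The idea of per-key state can be illustrated without Flink at all. The sketch below is a plain-Java analogy, not the Flink API: a `HashMap` keyed by `homeId` stands in for what Flink would manage after a `keyBy`, and each home keeps its own running mean of power readings. The class name, the factor of 2, and the minimum of 3 samples are hypothetical values chosen for illustration.

```java
import java.util.HashMap;
import java.util.Map;

class PerHomePowerMonitor {

    // Running count and mean of power readings for a single home.
    private static final class Stats {
        long count;
        double mean;
    }

    // Hypothetical rule: a reading above 2x the home's running mean is anomalous.
    private static final double FACTOR = 2.0;
    // Minimum history before judging, so the first readings are never flagged.
    private static final long MIN_SAMPLES = 3;

    // One Stats object per homeId, standing in for Flink's per-key state.
    private final Map<String, Stats> stateByHome = new HashMap<>();

    // Checks the reading against this home's history, then folds it into the mean.
    boolean isAnomaly(String homeId, double powerW) {
        Stats s = stateByHome.computeIfAbsent(homeId, k -> new Stats());
        boolean anomaly = s.count >= MIN_SAMPLES && powerW > FACTOR * s.mean;
        s.count++;
        s.mean += (powerW - s.mean) / s.count; // incremental mean update
        return anomaly;
    }

    public static void main(String[] args) {
        PerHomePowerMonitor monitor = new PerHomePowerMonitor();
        for (int i = 0; i < 3; i++) monitor.isAnomaly("h1", 700);
        System.out.println(monitor.isAnomaly("h1", 2000)); // true: h1 has history around 700 W
        System.out.println(monitor.isAnomaly("h2", 2000)); // false: h2 has no history yet
    }
}
```

The same reading is judged differently for the two homes because each key has its own history. In a real Flink job the framework, rather than a local map, would hold this state and restore it after a failure.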

We don't call an LLM on static data and return a one-time result. Instead, we embed the model directly into the streaming pipeline, which allows decisions to be made in real time. In this setup, the LLM becomes just another operator in the dataflow, orchestrated, managed, and scaled like any other processing step.

What's next?

The idea behind this example was to create something simple, but with an architecture that can evolve in many different directions. It can be extended with streaming ingestion from systems like Kafka, window-based anomaly detection, adaptive thresholds, or multi-agent coordination. Alerting can be integrated with production-grade notification systems.

Even in its current form, this prototype shows that real-time streaming agents can be built with relatively little effort. They can be embedded directly into a streaming architecture, working alongside traditional logic to create intelligent and responsive systems.