YouTube, the world's largest video-sharing platform, serves billions of hours of video content daily to over 2 billion users worldwide. This massive scale requires a sophisticated infrastructure to ensure videos load quickly and play seamlessly, regardless of location or device. At the core of this system is Google's Content Delivery Network (CDN), the same infrastructure that Google Cloud offers to customers as Media CDN. Key components like Google BigTable for metadata management and a robust video processing pipeline enable YouTube to handle the immense volume of uploads and streams. We'll explore how YouTube leverages Google's CDN, BigTable, and a multi-step video lifecycle to deliver billions of videos daily, with a focus on video processing, storage, and Adaptive Bitrate Streaming (ABR).

What is a Content Delivery Network (CDN)?

A Content Delivery Network (CDN) is a geographically distributed network of servers designed to deliver web content, such as videos, images, and web pages, to users with minimal latency.

The primary mechanism of a CDN is caching, where copies of content are stored on edge servers located closer to end-users. When a user requests content, the CDN routes the request to the nearest edge server, which serves the cached content if available. If the content isn't cached, the edge server fetches it from the origin server, caches it, and then delivers it to the user.
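The hit-or-miss flow described above can be sketched in a few lines. This is a minimal illustration, not Google's implementation: the `EdgeCache` class, the `fake_origin` function, and the key format are all hypothetical names invented for the example.

```python
from typing import Callable, Dict


class EdgeCache:
    """Toy pull-through cache: serve from memory, else fetch from origin and store."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes]) -> None:
        self._fetch_from_origin = fetch_from_origin
        self._store: Dict[str, bytes] = {}
        self.hits = 0
        self.misses = 0

    def get(self, key: str) -> bytes:
        if key in self._store:
            # Cache hit: the content is served without contacting the origin.
            self.hits += 1
            return self._store[key]
        # Cache miss: fetch from the origin, keep a copy, then deliver it.
        self.misses += 1
        content = self._fetch_from_origin(key)
        self._store[key] = content
        return content


def fake_origin(key: str) -> bytes:
    # Hypothetical origin server; a real one would read from durable storage.
    return f"video-bytes-for-{key}".encode()


edge = EdgeCache(fake_origin)
first = edge.get("video123/720p/seg1")   # miss: goes to the origin
second = edge.get("video123/720p/seg1")  # hit: served from the edge
print(f"hits={edge.hits} misses={edge.misses}")
```

Only the first request pays the cost of the trip to the origin; every later request for the same key is answered locally.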

Key reasons YouTube uses a CDN:

- Reduce buffering and load times

- Serve global users from local edge servers

- Handle billions of concurrent streams

- Improve reliability and availability

- Ensure a consistent experience for users worldwide

By caching popular videos on edge servers, YouTube can serve content faster than if every request had to travel to a central data center.

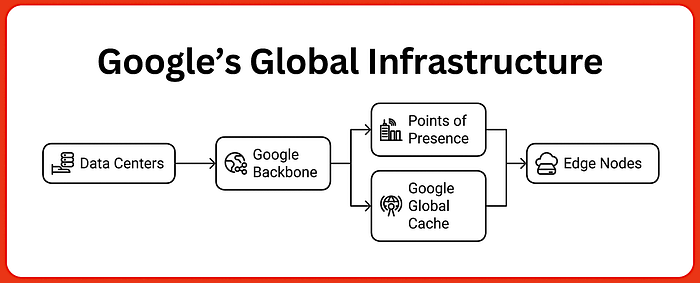

Google's Global Infrastructure:

Google's global infrastructure, one of the largest private networks, supports YouTube, Google Search, Gmail, and more. Key components include:

- Data Centers: Over 30 regions and 90 zones worldwide, hosting servers for video processing, storage, and distribution.

- Google Backbone Network: Spanning over 3.2 million kilometers of fiber optic cables, including underwater cables like Curie (US to Chile) and Equiano (Europe to Africa), ensuring high-speed data transfer.

- Points of Presence (PoPs): Located in over 200 cities across 90+ countries, PoPs reduce latency by connecting Google's network to the internet.

- Google Global Cache (GGC): Caching servers within ISP networks, pre-caching popular videos for local delivery, serving 70–90% of cacheable traffic.

- Edge Nodes: Advanced nodes handling load balancing, request routing, and caching decisions in real-time.

This infrastructure enables YouTube to handle massive traffic volumes with minimal latency. Media CDN offers over 100 terabits per second (Tbps) of egress capacity, enough to serve billions of video streams daily.

The Role of Google BigTable in YouTube's Infrastructure

Google BigTable is a distributed, scalable NoSQL database designed for high read/write throughput and low latency, handling petabytes of data across thousands of servers. Unlike relational databases, BigTable uses a sparse, multi-dimensional sorted map, organized by row key, column key, and timestamp, making it ideal for YouTube's metadata management needs.
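To make the "sparse, multi-dimensional sorted map" idea concrete, here is a small in-memory sketch. It only mimics the data model (row key, column key, timestamp) and is not the BigTable client API. The class name, the `video#...` row keys, and the `meta:title` column names are assumptions made for illustration.

```python
import bisect
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, str]  # (timestamp, value)


class SparseSortedTable:
    """Toy model of a map keyed by (row key, column key, timestamp)."""

    def __init__(self) -> None:
        self._rows: Dict[str, Dict[str, List[Cell]]] = {}
        self._sorted_keys: List[str] = []  # row keys kept in sorted order

    def put(self, row_key: str, column: str, timestamp: int, value: str) -> None:
        if row_key not in self._rows:
            bisect.insort(self._sorted_keys, row_key)
            self._rows[row_key] = {}
        # Sparse: a row only stores the columns that were actually written.
        cells = self._rows[row_key].setdefault(column, [])
        cells.append((timestamp, value))
        cells.sort(reverse=True)  # newest version first

    def get(self, row_key: str, column: str) -> Optional[str]:
        cells = self._rows.get(row_key, {}).get(column)
        return cells[0][1] if cells else None

    def scan(self, start_key: str, end_key: str) -> List[str]:
        """Return row keys in [start_key, end_key), using the sorted order."""
        lo = bisect.bisect_left(self._sorted_keys, start_key)
        hi = bisect.bisect_left(self._sorted_keys, end_key)
        return self._sorted_keys[lo:hi]


table = SparseSortedTable()
table.put("video#abc", "meta:title", 1, "Old title")
table.put("video#abc", "meta:title", 2, "New title")
table.put("video#abd", "stats:views", 1, "42")
print(table.get("video#abc", "meta:title"))
print(table.scan("video#abc", "video#abz"))
```

Because rows are kept sorted by key, a lookup by video ID and a range scan over neighbouring keys are both cheap, which is the property the metadata use case relies on.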

BigTable's Role in YouTube

BigTable manages video metadata (e.g., video ID, title, description, tags, streaming URLs), enabling rapid content retrieval. Key functions include:

- Metadata Storage: Stores video metadata indexed by video ID for fast lookup.

- Content Retrieval: Provides metadata to locate video files and cached copies.

- Server Selection: Informs CDN load-balancing decisions based on server availability and proximity.

- Dynamic Updates: Tracks view counts and caching decisions for popular videos.

BigTable's low-latency reads (milliseconds) and high throughput (millions of operations per second) ensure YouTube can handle concurrent requests from millions of users.

YouTube's Video Processing and Storage

When a video is uploaded to YouTube, a complex pipeline is triggered to process, store, and deliver it to users. This pipeline, known as the video lifecycle, consists of five key stages: Upload, Transcoding, Storage, Caching, and Streaming.

Step-by-Step Video Lifecycle

1. Upload: A user uploads a video via YouTube's web or mobile interface. The video is sent to one of Google's data centers, typically the nearest available, over a secure HTTPS connection.

- Uploads are handled by YouTube's front-end servers, which authenticate the user and validate the file format (e.g., MP4, AVI). Metadata such as title, description, and tags is submitted alongside the video and stored in BigTable.

- With over 500 hours of video uploaded per minute, the system uses load balancing to distribute uploads across data centers, ensuring no single server is overwhelmed.

2. Transcoding: The uploaded video is processed by Google's transcoding infrastructure, converting it into multiple formats and resolutions (e.g., 240p, 360p, 720p, 1080p, 4K) to support various devices and network conditions.

- Specialized hardware like the Video (trans)Coding Unit (VCU), introduced in 2021, delivers a 20–33x improvement in compute efficiency over software transcoding on conventional servers for codecs like VP9 and AV1. The VCU reduces processing time and supports modern codecs for better quality at lower bitrates.

- BigTable stores encoding details (e.g., available resolutions, bitrates), enabling the player to select the appropriate stream later.

3. Storage: Transcoded video files are stored in the Google File System (GFS), a distributed file system designed for large-scale data storage. Metadata, including pointers to video file locations, is stored in BigTable.

- GFS handles petabyte-scale storage, ensuring durability through replication across multiple data centers. BigTable's schema-less design accommodates diverse metadata, such as video duration, uploader, and view count.

- BigTable's horizontal scaling supports the metadata for billions of videos, while GFS manages the raw video files.

4. Caching: Popular videos are cached on Media CDN's edge servers and GGC nodes, which are deployed in over 3,000 locations worldwide. Less popular videos remain on YouTube's servers in data centers.

- BigTable metadata determines which videos to cache based on popularity metrics (e.g., view count, trending status). Predictive caching pre-loads trending content to edge servers, reducing latency for users.

- A viral video in Singapore might be cached on a GGC node within a local ISP, enabling near-instant playback.

5. Streaming:

- When a user requests a video, YouTube's front-end servers query BigTable for metadata, identifying the optimal server (edge, GGC, or origin) to stream from. The video is delivered to the user's device via the CDN.

- Load balancing, informed by BigTable metadata, selects servers based on performance metrics (e.g., latency, server load) and content availability. The video player uses protocols like DASH or HLS for streaming.

- GGC nodes serve 70–90% of cacheable traffic locally, minimizing network hops and ensuring fast delivery.
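The five stages above can be summarized as a simple ordered pipeline. The sketch below is only a mental model: the `Video` record, the `run_lifecycle` function, and the `popularity_threshold` value are hypothetical. The real system runs these stages as distributed services, not as one function call.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Stage(Enum):
    UPLOAD = 1
    TRANSCODING = 2
    STORAGE = 3
    CACHING = 4
    STREAMING = 5


# Resolutions named in the transcoding step above.
RENDITIONS = ["240p", "360p", "720p", "1080p", "4K"]


@dataclass
class Video:
    video_id: str
    view_count: int = 0
    renditions: List[str] = field(default_factory=list)
    cached_at_edge: bool = False
    history: List[Stage] = field(default_factory=list)


def run_lifecycle(video: Video, popularity_threshold: int = 1000) -> Video:
    """Walk one video through the five stages in order."""
    video.history.append(Stage.UPLOAD)

    # Transcoding produces one rendition per target resolution.
    video.renditions = list(RENDITIONS)
    video.history.append(Stage.TRANSCODING)

    # Storage: files go to the file system, pointers to the metadata store.
    video.history.append(Stage.STORAGE)

    # Caching: only sufficiently popular videos are pushed to the edge.
    # The threshold is an invented stand-in for real popularity metrics.
    video.cached_at_edge = video.view_count >= popularity_threshold
    video.history.append(Stage.CACHING)

    video.history.append(Stage.STREAMING)
    return video


popular = run_lifecycle(Video("abc", view_count=5000))
niche = run_lifecycle(Video("xyz", view_count=3))
print(popular.cached_at_edge, niche.cached_at_edge)
```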

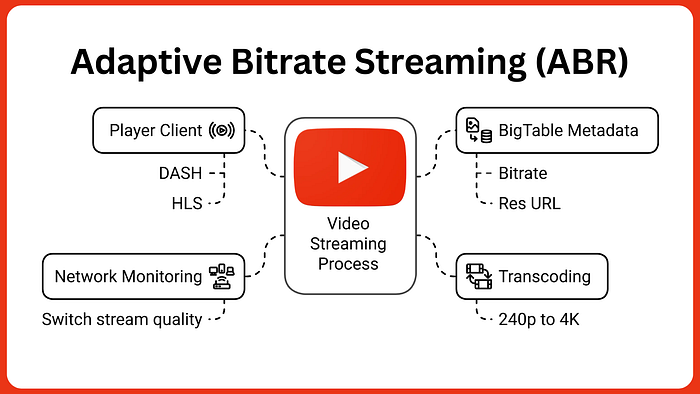

Adaptive Bitrate Streaming (ABR)

Adaptive Bitrate Streaming is a technique that adjusts video quality in real-time based on the user's network conditions, preventing buffering and ensuring smooth playback.

How ABR Works

- Encoding: Videos are transcoded into multiple resolutions and bitrates during the transcoding stage. For example, a video might have versions at 240p (low quality, low bitrate), 720p (HD), and 4K (ultra-high quality, high bitrate). BigTable stores metadata about these versions, including their URLs and bitrates.

- Dynamic Adjustment: The YouTube player, using protocols like Dynamic Adaptive Streaming over HTTP (DASH) or HTTP Live Streaming (HLS), monitors the user's network speed and device capabilities. If the connection slows, the player switches to a lower-resolution stream (e.g., from 1080p to 360p) without interrupting playback.

- Implementation: When a video is requested, BigTable provides metadata about available streams. The player selects the highest quality stream the network can support, fetching segments from the CDN. If network conditions change, the player switches to another stream from that list and requests subsequent segments at the new quality.

- Benefits: ABR ensures a buffer-free experience, even on unstable networks, and optimizes bandwidth usage. For example, a user on a slow mobile network in a rural area might stream at 240p, while a user on fiber optic broadband enjoys 4K.
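A simple throughput-based selection rule illustrates the idea. This is a sketch under stated assumptions, not YouTube's player logic: the bitrates in `LADDER` are illustrative round numbers, and the `safety_factor` of 0.8 is an arbitrary margin chosen for the example.

```python
from typing import List, NamedTuple


class Rendition(NamedTuple):
    label: str
    bitrate_kbps: int


# Illustrative bitrate ladder; real values depend on codec and content.
LADDER = [
    Rendition("240p", 400),
    Rendition("360p", 800),
    Rendition("720p", 2500),
    Rendition("1080p", 5000),
    Rendition("4K", 20000),
]


def select_rendition(
    ladder: List[Rendition], throughput_kbps: float, safety_factor: float = 0.8
) -> Rendition:
    """Pick the highest bitrate that fits within a fraction of measured throughput."""
    budget = throughput_kbps * safety_factor
    affordable = [r for r in ladder if r.bitrate_kbps <= budget]
    if not affordable:
        # Nothing fits: fall back to the lowest quality rather than stalling.
        return min(ladder, key=lambda r: r.bitrate_kbps)
    return max(affordable, key=lambda r: r.bitrate_kbps)


for measured in (100, 1200, 8000, 50000):
    print(measured, "kbps ->", select_rendition(LADDER, measured).label)
```

The player would re-run this choice as new throughput measurements arrive, so a drop in bandwidth moves playback down the ladder and a recovery moves it back up.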

Technical Details

- Protocols: DASH and HLS break videos into small chunks (e.g., 2–10 seconds), each available in multiple qualities. The player downloads chunks sequentially, switching quality as needed.

- BigTable Integration: BigTable's low-latency reads provide the player with real-time metadata about available chunks, enabling fast stream switching.

- CDN Support: Media CDN's cache stores these chunks, with cache fillers fetching missing segments from origin servers, guided by BigTable metadata.
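The chunking arithmetic is straightforward to work through. In the sketch below, the 4-second segment length falls inside the 2–10 second range mentioned above, while the URL template and the `cdn.example.com` host are purely hypothetical and do not reflect YouTube's actual URL scheme.

```python
import math


def segment_count(duration_s: float, segment_s: float) -> int:
    """Number of segments needed to cover the whole video."""
    return math.ceil(duration_s / segment_s)


def segment_index_at(position_s: float, segment_s: float) -> int:
    """Zero-based index of the segment containing a playback position."""
    return int(position_s // segment_s)


def segment_url(base_url: str, video_id: str, rendition: str, index: int) -> str:
    # Hypothetical URL layout: one path per rendition, one file per segment.
    return f"{base_url}/{video_id}/{rendition}/seg-{index:05d}.m4s"


SEGMENT_S = 4
print(segment_count(600, SEGMENT_S))        # a 10-minute video
print(segment_index_at(9.5, SEGMENT_S))     # segment playing at 9.5 s
print(segment_url("https://cdn.example.com", "abc", "720p", 2))
```

Since every rendition is cut at the same boundaries, the player can request segment 2 at 720p and segment 3 at 360p without any gap in playback.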

Network Optimization Technologies

QUIC Protocol

YouTube leverages Google's QUIC (Quick UDP Internet Connections) transport protocol to improve streaming performance. QUIC offers several advantages over traditional TCP:

- Reduced connection establishment time

- Improved congestion control

- Better performance on lossy networks

- Stream multiplexing without head-of-line blocking

This protocol is particularly valuable for mobile users who frequently switch between networks (e.g., from Wi-Fi to cellular).
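The connection-establishment advantage can be estimated with simple round-trip arithmetic. The figures below count the round trips before the client can send its first request: one for the TCP handshake plus one or two for TLS, versus one for a fresh QUIC connection and zero for a resumed one. The 100 ms round-trip time is an assumed example value.

```python
# Round trips needed before the first HTTP request can be sent.
HANDSHAKE_RTTS = {
    "tcp+tls1.2": 3,  # 1 RTT TCP handshake + 2 RTT TLS 1.2 handshake
    "tcp+tls1.3": 2,  # 1 RTT TCP handshake + 1 RTT TLS 1.3 handshake
    "quic": 1,        # transport and crypto handshakes are combined
    "quic-0rtt": 0,   # resumed connection sends data immediately
}


def setup_delay_ms(transport: str, rtt_ms: float) -> float:
    """Time spent on handshakes before the first request leaves the client."""
    return HANDSHAKE_RTTS[transport] * rtt_ms


RTT_MS = 100  # assumed mobile round-trip time
for transport in HANDSHAKE_RTTS:
    print(f"{transport:12s} {setup_delay_ms(transport, RTT_MS):6.0f} ms")
```

On a high-latency mobile link the saved round trips translate directly into a faster start to playback, which is why the gain is most visible for users who reconnect often.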

Google's Global Network Infrastructure

YouTube benefits from Google's private fiber optic network spanning over 1.5 million miles of cable. This dedicated infrastructure provides:

- Higher bandwidth capacity

- Lower latency

- Better reliability than public internet routes

Google's network includes strategically placed points of presence (PoPs) worldwide, ensuring content can be delivered efficiently from the nearest edge location.

Storage Infrastructure

1. Distributed File System

YouTube stores its massive video library (billions of terabytes) using Google's efficient distributed file system built with consumer- and business-grade hard drives. This approach provides:

- Massive scalability

- Cost-effective storage

- Built-in redundancy

Rather than relying on expensive enterprise storage solutions, Google's system distributes data across thousands of commodity servers, with automatic replication for fault tolerance.
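The replication idea can be sketched as follows. The source does not describe how Google's file system chooses replica locations, so this example substitutes rendezvous hashing as a stand-in technique. The server names, the chunk ID, and the replica count of 3 are assumptions for illustration.

```python
import hashlib
from typing import Iterable, List


def _score(chunk_id: str, server: str) -> int:
    """Deterministic pseudo-random score for a (chunk, server) pair."""
    digest = hashlib.sha256(f"{chunk_id}:{server}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


def place_replicas(chunk_id: str, servers: List[str], replicas: int = 3) -> List[str]:
    """Choose `replicas` distinct servers for a chunk (rendezvous hashing)."""
    if replicas > len(servers):
        raise ValueError("not enough servers for the requested replica count")
    ranked = sorted(servers, key=lambda s: _score(chunk_id, s), reverse=True)
    return ranked[:replicas]


def surviving_replicas(placement: List[str], failed: Iterable[str]) -> List[str]:
    """Replicas still readable after some servers fail."""
    failed_set = set(failed)
    return [s for s in placement if s not in failed_set]


servers = [f"server-{i}" for i in range(6)]
placement = place_replicas("chunk-42", servers)
print(placement)
print(surviving_replicas(placement, failed=[placement[0]]))
```

With three copies on three different machines, the loss of any single commodity server still leaves two readable replicas, which is what allows inexpensive hardware to provide durable storage.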

2. Modular Data Centers

Most YouTube data resides in Google's Modular Data Centers — portable units that can be deployed wherever additional storage capacity is needed. These self-contained facilities:

- Accelerate deployment in new regions

- Optimize cooling and power efficiency

- Scale incrementally based on demand

YouTube's CDN Operations

YouTube's CDN, built on Google's Media CDN, optimizes video delivery through:

1. Content Distribution

- Popular Videos: Cached on edge servers and GGC nodes, leveraging BigTable metadata to prioritize trending content. For example, a music video with millions of views is cached globally.

- Less Popular Videos: Served from YouTube's servers in data centers, with BigTable providing file locations to minimize retrieval time.

2. Load Balancing and Server Selection

Server selection, informed by BigTable metadata, considers:

- Performance Metrics: Fewest network hops, lowest latency, and server availability.

- Content Popularity: Prioritizes caching for high-demand videos.

- Load Distribution: Avoids server overload, even if serving from a farther server. A 2014 study showed users in Finland might be served from Switzerland or California.
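The selection criteria above can be combined into a small ranking function. This is a simplified sketch, not Google's load balancer: the `Server` fields, the `max_load` cutoff of 0.9, and the sample latencies are invented for the example.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Server:
    name: str
    latency_ms: float
    load: float        # fraction of capacity in use, 0.0 to 1.0
    has_content: bool  # whether the requested video is available here


def pick_server(servers: List[Server], max_load: float = 0.9) -> Optional[Server]:
    """Pick the lowest-latency server that has the content and is not overloaded."""
    candidates = [s for s in servers if s.has_content and s.load < max_load]
    if not candidates:
        return None
    # Latency is the primary criterion; load breaks ties.
    return min(candidates, key=lambda s: (s.latency_ms, s.load))


servers = [
    Server("helsinki-edge", latency_ms=10, load=0.97, has_content=True),
    Server("zurich", latency_ms=40, load=0.50, has_content=True),
    Server("california", latency_ms=160, load=0.20, has_content=True),
]
chosen = pick_server(servers)
print(chosen.name if chosen else "no server available")
```

In this example the nearest server is skipped because it is overloaded, so the request goes to a farther one, mirroring the Finland example in the list above.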

3. Content Propagation

New videos are propagated to main servers (e.g., California, Sweden), with BigTable updating metadata to track availability. Popular videos are then cached on edge servers as demand grows.

Technical Details of Media CDN

Media CDN's architecture includes:

- Router: Manages client requests, routing them to caches based on IP, protocol, and security policies.

- Cache: Stores content, managed by cache keys to reduce origin requests. BigTable metadata optimizes cache decisions.

- Cache Filler: Fetches content from origin servers, guided by BigTable metadata, and saves it to the cache.

- Caching Strategies: Configurable TTL and cache keys, informed by BigTable, optimize storage for popular content.

- Capacity: Over 100 Tbps of egress capacity supports YouTube's scale.
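Cache keys and TTLs are easier to reason about with a concrete example. The sketch below is an assumption-laden illustration rather than Media CDN's configuration API: the query parameter names (`quality`, `segment`, `session`), the `TtlCache` class, and the 30-second TTL are all hypothetical.

```python
import time
from typing import Callable, Dict, Optional, Tuple
from urllib.parse import parse_qsl, urlencode, urlsplit


def cache_key(url: str, include_params: Tuple[str, ...] = ("quality", "segment")) -> str:
    """Build a key from host, path, and only the parameters that change the content."""
    parts = urlsplit(url)
    kept = sorted((k, v) for k, v in parse_qsl(parts.query) if k in include_params)
    return f"{parts.netloc}{parts.path}?{urlencode(kept)}"


class TtlCache:
    """Cache whose entries expire a fixed number of seconds after insertion."""

    def __init__(self, ttl_s: float, clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl_s = ttl_s
        self._clock = clock
        self._store: Dict[str, Tuple[bytes, float]] = {}

    def put(self, key: str, value: bytes) -> None:
        self._store[key] = (value, self._clock() + self._ttl_s)

    def get(self, key: str) -> Optional[bytes]:
        entry = self._store.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._store[key]  # expired: the cache filler would refetch it
            return None
        return value


# Two URLs that differ only in a per-user parameter map to the same key.
key_a = cache_key("https://cdn.example.com/v/abc?session=1&quality=720p")
key_b = cache_key("https://cdn.example.com/v/abc?quality=720p&session=2")
print(key_a == key_b)

now = [0.0]  # controllable clock for the demonstration
cache = TtlCache(ttl_s=30, clock=lambda: now[0])
cache.put(key_a, b"segment-bytes")
fresh = cache.get(key_b)
now[0] = 31.0
stale = cache.get(key_b)
print(fresh, stale)
```

Dropping per-user parameters from the key lets many viewers share one cached copy, which is how a well-chosen key reduces requests to the origin.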

Advanced Techniques

1. Caching & Latency Reduction

Edge caching, facilitated by GGC nodes and local ISPs, is critical for latency reduction. Popular videos are replicated across edge PoPs based on access patterns, reducing the need to fetch from distant core data centers. Data replication across regional centers ensures redundancy, while Google's Media CDN optimizes cache eviction policies to prioritize high-demand content. For instance, a viral video might be cached in 90% of GGC nodes globally within hours, cutting latency to under 100ms for most users.

2. Fault Tolerance & Chaos Testing

YouTube maintains near-zero downtime, even during major events like the Super Bowl or live concerts, through fault-tolerant design. Redundant paths between data centers and edge nodes ensure failover if a server or network link fails. Google validates this resilience with deliberate failure testing, in the same spirit as Netflix's Chaos Monkey, simulating outages to confirm that systems recover. For example, if a GGC node in London goes offline, anycast routing redirects requests to a nearby node in Frankfurt, ensuring uninterrupted service. Global replication in Spanner, Google's distributed database, further guarantees metadata availability.
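The London-to-Frankfurt failover can be modelled as "try the nearest node first, then the next nearest". Real anycast failover happens at the network routing layer rather than in application code, so the `route` function below is only a conceptual sketch with invented node names.

```python
from typing import List, Set


def route(nodes_by_distance: List[str], healthy: Set[str]) -> str:
    """Return the nearest healthy node, given nodes ordered nearest first."""
    for node in nodes_by_distance:
        if node in healthy:
            return node
    raise RuntimeError("no healthy node available")


# Nodes as seen by a user in the UK, nearest first.
preference = ["London", "Frankfurt", "Paris"]

print(route(preference, healthy={"London", "Frankfurt", "Paris"}))
print(route(preference, healthy={"Frankfurt", "Paris"}))  # London is offline
```

The user keeps requesting the same address in both cases. Only the node that answers changes, which is why the failover is invisible to the player.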

3. Security & DDoS Protection

YouTube secures its platform with encryption (HTTPS for transit, AES for storage) and role-based access controls (RBAC). Google's Cloud Armor, integrated with the CDN, mitigates DDoS attacks by analyzing traffic patterns and blocking malicious requests. For example, during a potential attack, Cloud Armor can throttle suspicious IPs or redirect traffic to scrubbing centers. Redundant network paths and rate-limiting further enhance resilience, ensuring uninterrupted service.
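Rate limiting of the kind mentioned above is often implemented with a token bucket. The source does not say which algorithm Cloud Armor uses, so the sketch below is a generic stand-in. The `TokenBucket` class and its rate and capacity values are assumptions for illustration.

```python
class TokenBucket:
    """Allow short bursts up to `capacity`, then at most `rate_per_s` requests per second."""

    def __init__(self, rate_per_s: float, capacity: float, now: float = 0.0) -> None:
        self.rate_per_s = rate_per_s
        self.capacity = capacity
        self.tokens = capacity  # start with a full bucket
        self.last = now

    def allow(self, now: float) -> bool:
        # Refill in proportion to the time elapsed since the last request.
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate_per_s)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False  # over the limit: throttle this request


bucket = TokenBucket(rate_per_s=1, capacity=3)
burst = [bucket.allow(now=0.0) for _ in range(5)]  # five requests at once
later = bucket.allow(now=2.0)                      # two seconds later
print(burst, later)
```

A limiter like this would typically be kept per client IP, so that one abusive source is throttled while other users are unaffected.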

Conclusion

YouTube's ability to stream billions of videos daily relies on Google's CDN, a sophisticated system that combines a client-to-backend architecture, edge caching via GGC, adaptive streaming with DASH/HLS, and robust load balancing with anycast and Maglev. Fault tolerance, efficient upload pipelines, and strong security measures like Cloud Armor ensure reliability and protection. For engineers, YouTube's architecture offers a masterclass in building scalable, low-latency, and resilient systems for global content delivery.
