MimiClaw caught my attention — a full AI assistant running on a tiny ESP32 chip, written entirely in C. No Linux, no Node.js, no Python runtime. Just bare-metal firmware talking to LLM APIs over WiFi.

But there was a catch. MimiClaw only supported cloud APIs (Anthropic Claude, OpenAI GPT) and required an ESP32-S3 board with 16MB flash and 8MB PSRAM. That's a $5 board, and you're paying per API call.

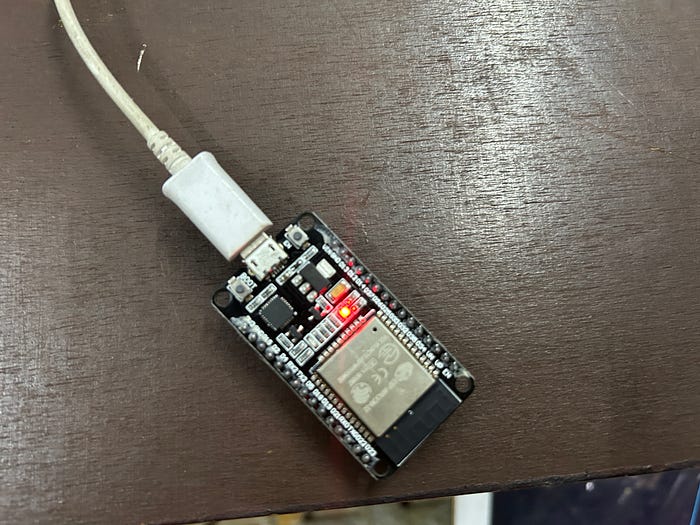

I wanted something different, using hardware I already had. I wanted to run it on my ESP-WROOM-32, a board you can buy for under $4, and connect it to Ollama running on my local machine. No cloud. No API keys. No monthly bills.

It took some real engineering to get there. This is what I learned.

What Is MimiClaw?

MimiClaw is an open-source AI assistant built by the memovai community. It turns a thumb-sized ESP32 microcontroller into a personal AI agent that you can talk to through Telegram.

What makes it interesting is the architecture. It implements a full ReAct agent loop — the same pattern used by ChatGPT plugins and LangChain agents — but in pure C, running on a dual-core processor with less RAM than a single Chrome tab.

The agent can:

- Call tools (web search, file management, scheduling)
- Maintain conversation history across sessions
- Remember things about you in persistent memory files
- Schedule autonomous tasks using a cron system
- Run 24/7 on 0.5W of power

The entire codebase is about 5,000 lines of C, built on the ESP-IDF framework. It's lean, focused, and surprisingly capable.
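To make the ReAct pattern concrete, here is a toy sketch of the reason, act, observe cycle in plain C. It is not MimiClaw's code: `fake_llm`, `run_tool`, `react_loop`, and the `get_time` tool are hypothetical stand-ins, and the "model" is a stub that asks for one tool call and then answers.

```c
#include <stdio.h>
#include <string.h>

/* Hypothetical stand-in for an LLM reply: either a tool request or a final answer. */
typedef struct {
    int  wants_tool;   /* 1 = the model asked for a tool call */
    char text[64];     /* tool name, or the final answer */
} llm_reply_t;

/* Stub model: requests a tool until it sees an observation in the context. */
static llm_reply_t fake_llm(const char *context)
{
    llm_reply_t r = {0};
    if (strstr(context, "observation:") == NULL) {
        r.wants_tool = 1;
        snprintf(r.text, sizeof(r.text), "get_time");
    } else {
        snprintf(r.text, sizeof(r.text), "It is 12:00.");
    }
    return r;
}

/* Hypothetical tool dispatcher with a single tool. */
static const char *run_tool(const char *name)
{
    return strcmp(name, "get_time") == 0 ? "12:00" : "unknown tool";
}

/* Reason -> act -> observe, bounded by max_steps.
 * Returns 0 when the model produced an answer, -1 if the step budget ran out. */
static int react_loop(const char *user_msg, char *answer, size_t answer_len,
                      int max_steps)
{
    char context[256];
    snprintf(context, sizeof(context), "user: %s\n", user_msg);

    for (int step = 0; step < max_steps; step++) {
        llm_reply_t r = fake_llm(context);
        if (!r.wants_tool) {
            snprintf(answer, answer_len, "%s", r.text);
            return 0;
        }
        /* Feed the tool result back into the context for the next turn. */
        size_t used = strlen(context);
        snprintf(context + used, sizeof(context) - used,
                 "observation: %s\n", run_tool(r.text));
    }
    return -1;
}
```

Calling `react_loop("what time is it?", out, sizeof(out), 4)` takes two turns: one tool call, then the answer. The step limit is the important part on a microcontroller, since it bounds both time and context growth.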

GitHub Repo

The Problem: Cloud-Only, Big-Board-Only

Out of the box, MimiClaw had two limitations that bugged me.

No local LLM support

The LLM proxy layer had hardcoded API URLs for Anthropic and OpenAI. If you wanted to use Ollama, LM Studio, or any local model server, there was simply no way to point the firmware at a custom endpoint. The API URLs were baked in at compile time.

For anyone running local models at home — and there are a lot of us now — this was a dealbreaker.

ESP32-S3 only

The firmware was built for the ESP32-S3 with 16 MB of flash and 8 MB of PSRAM. Every large buffer in the system was allocated from PSRAM using ESP-IDF's `heap_caps_calloc(…, MALLOC_CAP_SPIRAM)`. The partition table assumed 16MB of flash. The console driver assumed USB Serial/JTAG was available.

I had an ESP-WROOM-32 that I bought in 2018. It has 4MB of flash, no PSRAM, and about 300KB of internal RAM. I decided to try it.

Part 1: Adding Local LLM Support (Ollama / LM Studio)

This was the more straightforward problem to solve, but it required changes across multiple layers.

The API base URL

Ollama and LM Studio both expose OpenAI-compatible APIs. Ollama runs on port 11434, LM Studio on port 1234. The request format is identical to OpenAI's `/v1/chat/completions` — you just need to point at a different host.
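For reference, a minimal chat-completions request body needs only a `model` and a `messages` array. The sketch below builds one with `snprintf` on a fixed buffer, roughly how you might do it without a JSON library. The helper names `json_escape` and `build_chat_body` and the buffer sizes are my own assumptions for illustration, not functions from the MimiClaw source.

```c
#include <stdio.h>
#include <string.h>

/* Write src into dst as JSON string contents, escaping quotes, backslashes
 * and control characters. Returns 0 on success, -1 if dst is too small. */
static int json_escape(char *dst, size_t dst_len, const char *src)
{
    size_t o = 0;
    if (dst_len == 0) return -1;
    for (; *src; src++) {
        unsigned char c = (unsigned char)*src;
        char tmp[8];
        int n;
        if (c == '"' || c == '\\') {
            n = snprintf(tmp, sizeof(tmp), "\\%c", c);
        } else if (c == '\n') {
            n = snprintf(tmp, sizeof(tmp), "\\n");
        } else if (c < 0x20) {
            n = snprintf(tmp, sizeof(tmp), "\\u%04x", c);
        } else {
            n = snprintf(tmp, sizeof(tmp), "%c", c);
        }
        if (o + (size_t)n >= dst_len) return -1;   /* keep room for '\0' */
        memcpy(dst + o, tmp, (size_t)n);
        o += (size_t)n;
    }
    dst[o] = '\0';
    return 0;
}

/* Build a minimal OpenAI-style chat completion body with one user message.
 * Returns the body length, or -1 if any buffer is too small. */
static int build_chat_body(char *out, size_t out_len,
                           const char *model, const char *user_msg)
{
    char esc_model[64];
    char esc_msg[512];
    if (json_escape(esc_model, sizeof(esc_model), model) != 0) return -1;
    if (json_escape(esc_msg, sizeof(esc_msg), user_msg) != 0) return -1;

    int n = snprintf(out, out_len,
        "{\"model\":\"%s\",\"messages\":[{\"role\":\"user\",\"content\":\"%s\"}]}",
        esc_model, esc_msg);
    return (n < 0 || (size_t)n >= out_len) ? -1 : n;
}
```

The same body can be POSTed to `/v1/chat/completions` on a cloud endpoint or on a local Ollama or LM Studio server; only the host changes.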

I added a configurable base URL to the LLM proxy layer. When set, it overrides the default cloud URLs while preserving the correct API path for the selected provider.

    static const char *llm_api_url(void)
    {
        if (s_api_base_url[0]) {
            /* Custom endpoint: keep the provider-specific path. */
            const char *path = provider_is_openai()
                ? "/v1/chat/completions" : "/v1/messages";
            size_t blen = strlen(s_api_base_url);
            /* Drop one trailing slash so the joined URL has no "//". */
            if (blen > 0 && s_api_base_url[blen - 1] == '/') blen--;
            snprintf(s_url_buf, sizeof(s_url_buf), "%.*s%s",
                     (int)blen, s_api_base_url, path);
            return s_url_buf;
        }
        /* No base URL configured: fall back to the default cloud URLs. */
        return provider_is_openai() ? MIMI_OPENAI_API_URL : MIMI_LLM_API_URL;
    }

HTTP vs HTTPS auto-detection

Cloud APIs use HTTPS. Local servers typically use plain HTTP. The original code unconditionally attached the TLS certificate bundle to every connection. Connecting to `http://192.168.1.243:11434` with TLS enabled doesn't end well.

I added a simple scheme check — if the URL starts with `https://`, attach the cert bundle. If it's `http://`, skip it:

    bool is_https = (strncmp(url, "https://", 8) == 0);
    esp_http_client_config_t config = {
        .url = url,
        .crt_bundle_attach = is_https ? esp_crt_bundle_attach : NULL,
        // …
    };

No API key? No problem.

The original code refused to make any API call if `s_api_key` was empty. But local models don't need API keys. I relaxed the check to allow calls when a base URL is configured:

    if (s_api_key[0] == '\0' && s_api_base_url[0] == '\0')
        return ESP_ERR_INVALID_STATE;

Runtime configuration via CLI

I added two serial CLI commands so you can configure everything without recompiling:

    mimi> set_model_provider openai
    mimi> set_api_base_url http://192.168.1.243:11434
    mimi> set_model gpt-oss-20b

All settings are persisted to NVS (non-volatile storage) and survive reboots. You can also set them at build time in `mimi_secrets.h`:

    #define MIMI_SECRET_MODEL_PROVIDER "openai"
    #define MIMI_SECRET_API_BASE_URL "http://192.168.1.243:11434"
    #define MIMI_SECRET_MODEL "gpt-oss-20b"

With these changes, MimiClaw could talk to any OpenAI-compatible endpoint: Ollama, LM Studio, vLLM, text-generation-webui, you name it.

Part 2: Running on ESP-WROOM-32 (4MB Flash, No PSRAM)

This was the hard part. The ESP-WROOM-32 has a quarter of the flash of the ESP32-S3 board and no external RAM at all. Everything had to fit.

New partition table

The ESP32-S3 layout used dual OTA partitions (for safe firmware updates), which is great but takes 4MB just for two app slots. On a 4MB chip, that's the entire flash.

I created a new partition table with a single factory app partition and no OTA:

    # Name,    Type, SubType,  Offset,   Size
    nvs,       data, nvs,      0x9000,   0x6000
    phy_init,  data, phy,      0xF000,   0x1000
    factory,   app,  factory,  0x10000,  0x1C0000
    spiffs,    data, spiffs,   0x1D0000, 0x220000
    coredump,  data, coredump, 0x3F0000, 0x10000

The factory app partition is 1.75MB. The compiled binary came in at 1.16MB, leaving 36% headroom. SPIFFS gets 2.1MB, more than enough for personality files, memory, sessions, and skills.
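A partition table like this is easy to get subtly wrong by hand, so a quick host-side sanity check can help. The sketch below copies the offsets and sizes from the table and verifies that the partitions sit back to back and end within 4MB. The `part_t` type and the `layout_ok` and `headroom_pct` helpers are hypothetical names of my own, not part of ESP-IDF or MimiClaw.

```c
#include <stddef.h>
#include <stdint.h>

typedef struct {
    const char *name;
    uint32_t    offset;
    uint32_t    size;
} part_t;

/* Offsets and sizes copied from the partition table in the article. */
static const part_t k_parts[] = {
    { "nvs",      0x9000,   0x6000   },
    { "phy_init", 0xF000,   0x1000   },
    { "factory",  0x10000,  0x1C0000 },
    { "spiffs",   0x1D0000, 0x220000 },
    { "coredump", 0x3F0000, 0x10000  },
};

/* Returns 1 if every partition ends inside flash_size and each one starts
 * exactly where the previous one ends (no gaps, no overlaps), else 0. */
static int layout_ok(const part_t *p, size_t n, uint32_t flash_size)
{
    for (size_t i = 0; i < n; i++) {
        uint32_t end = p[i].offset + p[i].size;
        if (end > flash_size) return 0;
        if (i + 1 < n && end != p[i + 1].offset) return 0;
    }
    return 1;
}

/* Free space left in a partition, as a whole-number percentage (rounded down). */
static unsigned headroom_pct(uint32_t part_size, uint32_t image_size)
{
    if (image_size >= part_size) return 0;
    return (unsigned)(((uint64_t)(part_size - image_size) * 100u) / part_size);
}
```

With a 4MB flash size (`4 * 1024 * 1024`), `layout_ok` accepts this table: the coredump partition ends exactly at `0x400000`. The factory partition's `0x1C0000` is 1,835,008 bytes, which is where the 1.75MB figure comes from.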

PSRAM-conditional allocation

This was the critical change. The original code used `heap_caps_calloc(…, MALLOC_CAP_SPIRAM)` everywhere for large buffers. On a chip with no PSRAM, these calls return NULL immediately.

I added allocation macros to `mimi_config.h` that adapt at compile time:

    #if CONFIG_SPIRAM
    #define mimi_alloc(size)   heap_caps_calloc(1, (size), MALLOC_CAP_SPIRAM)
    #define mimi_realloc(p, s) heap_caps_realloc((p), (s), MALLOC_CAP_SPIRAM)
    #else
    #define mimi_alloc(size)   calloc(1, (size))
    #define mimi_realloc(p, s) realloc((p), (s))
    #endif

Then I replaced every `heap_caps_calloc`/`heap_caps_realloc` call in the LLM proxy, agent loop, and web search tool with `mimi_alloc`/`mimi_realloc`. The ESP32-S3 still uses PSRAM as before. The ESP32 uses internal RAM. Same code, different behaviour.
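Here is roughly what a call site looks like with these macros. The macros are the ones from `mimi_config.h` as shown in the article. The `copy_to_heap` helper is a hypothetical example of mine, not a function from the codebase. On a desktop compiler `CONFIG_SPIRAM` is undefined, so the sketch takes the plain `calloc` branch and can be tried without any hardware.

```c
#include <stdlib.h>
#include <string.h>

#if CONFIG_SPIRAM
#include "esp_heap_caps.h"
#define mimi_alloc(size)   heap_caps_calloc(1, (size), MALLOC_CAP_SPIRAM)
#define mimi_realloc(p, s) heap_caps_realloc((p), (s), MALLOC_CAP_SPIRAM)
#else
#define mimi_alloc(size)   calloc(1, (size))
#define mimi_realloc(p, s) realloc((p), (s))
#endif

/* Hypothetical call site: copy a string into a zeroed heap buffer of cap bytes.
 * Returns NULL if the string does not fit or the allocation fails.
 * The caller releases the buffer with free(). */
static char *copy_to_heap(const char *src, size_t cap)
{
    size_t len = strlen(src);
    if (len >= cap) return NULL;      /* no room for the terminator */

    char *buf = mimi_alloc(cap);
    if (!buf) return NULL;            /* always check: internal RAM is scarce */

    memcpy(buf, src, len);            /* buffer is zeroed, so it stays terminated */
    return buf;
}
```

The call site never mentions PSRAM, which is the point: the decision about where memory comes from lives in one header.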

The memory issues

Here's where things got interesting. The first build compiled fine. I flashed it, booted it, WiFi connected, Telegram connected. I sent `/start` and… `ESP_ERR_NO_MEM`.

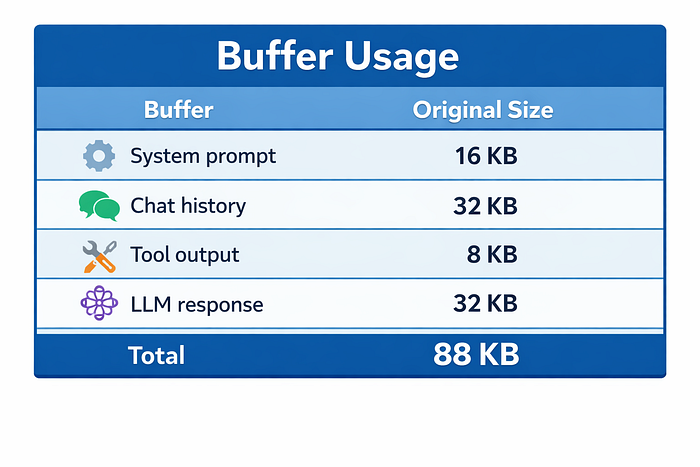

Only 60KB of internal RAM was free, and the agent was trying to allocate 88KB of buffers. The math doesn't work.

I checked the actual data sizes. The system prompt was ~3KB. Chat history for a fresh conversation is tiny. The LLM response buffer grows dynamically. There was no reason these needed to be so large on a memory-constrained chip.
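A response buffer that grows on demand is what makes a small starting size safe. The sketch below shows one common way to write such a buffer, with a hard ceiling so a single oversized response cannot exhaust the heap. It is an illustration under my own assumptions: `grow_buf_t`, `grow_buf_append`, and the 256-byte starting capacity are hypothetical and not taken from the MimiClaw source.

```c
#include <stdlib.h>
#include <string.h>

typedef struct {
    char  *data;
    size_t len;   /* bytes used, excluding the terminator */
    size_t cap;   /* bytes currently allocated */
    size_t max;   /* hard ceiling on cap */
} grow_buf_t;

/* Append n bytes, doubling the capacity as needed.
 * Returns 0 on success, -1 if the ceiling would be exceeded or realloc fails.
 * On failure the existing contents are left untouched. */
static int grow_buf_append(grow_buf_t *b, const char *src, size_t n)
{
    size_t need = b->len + n + 1;               /* +1 for '\0' */
    if (need > b->max) return -1;

    if (need > b->cap) {
        size_t new_cap = b->cap ? b->cap : 256; /* assumed starting size */
        while (new_cap < need) new_cap *= 2;
        if (new_cap > b->max) new_cap = b->max; /* still >= need, checked above */

        char *p = realloc(b->data, new_cap);    /* keep the old pointer on failure */
        if (!p) return -1;
        b->data = p;
        b->cap  = new_cap;
    }

    memcpy(b->data + b->len, src, n);
    b->len += n;
    b->data[b->len] = '\0';
    return 0;
}
```

Start with `grow_buf_t b = { NULL, 0, 0, max_bytes };` and call `grow_buf_append` from the HTTP data callback. A short reply then costs a few hundred bytes instead of a fixed worst-case allocation.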

I made buffer sizes conditional:

    #if CONFIG_SPIRAM
    #define MIMI_LLM_STREAM_BUF_SIZE (32 * 1024)
    #define MIMI_CONTEXT_BUF_SIZE    (16 * 1024)
    #else
    #define MIMI_LLM_STREAM_BUF_SIZE (8 * 1024)
    #define MIMI_CONTEXT_BUF_SIZE    (4 * 1024)
    #endif

New total: 24KB. After flashing, the LLM call succeeded with 100KB of internal RAM to spare. TLS handshakes stopped failing. Telegram messages went through.

Build scripts

The last piece was making it easy to switch targets:

    scripts/build_macos.sh esp32   # Build for ESP-WROOM-32
    scripts/build_macos.sh         # Build for ESP32-S3 (default)

ESP-IDF's `sdkconfig.defaults.<target>` mechanism handles the rest: it automatically picks up the right config file based on the target name.

The Result

MimiClaw running on my ESP-WROOM-32, connected to Ollama on my local network, chatting through Telegram. No cloud. No PSRAM. Under $4 in hardware.

Here's what the memory looks like after an LLM call:

    I (39081) agent: Free internal: 100324 bytes

100KB free out of ~300KB total. Comfortable headroom for WiFi, TLS, and FreeRTOS.

The binary is 1.16MB, fitting in the 1.75MB factory partition with 36% to spare. The ESP32-S3 build is completely unaffected — no regression, same buffer sizes, same PSRAM allocation.

How to Try This Yourself

If you want to run MimiClaw with a local LLM on your own ESP32/ESP32-S3, here's what you need.

Hardware

- ESP-WROOM-32 dev board (~$4) or ESP32-S3 board (~$10)
- USB cable
- A computer running Ollama or LM Studio on your local network

Software setup

1. Install ESP-IDF v5.5+ following the official guide: https://docs.espressif.com/projects/esp-idf/en/stable/esp32/get-started/

2. Clone the repo:

    git clone https://github.com/manjunathshiva/mimiclaw.git
    cd mimiclaw
    git checkout feat/esp32-wroom32-support

3. Configure secrets. Copy the example secrets file:

    cp main/mimi_secrets.h.example main/mimi_secrets.h

Then edit `main/mimi_secrets.h`:

    #define MIMI_SECRET_WIFI_SSID "your-wifi"
    #define MIMI_SECRET_WIFI_PASS "your-password"
    #define MIMI_SECRET_TG_TOKEN "your-telegram-bot-token"
    #define MIMI_SECRET_MODEL_PROVIDER "openai"
    #define MIMI_SECRET_API_BASE_URL "http://YOUR_PC_IP:11434"
    #define MIMI_SECRET_MODEL "your_ollama_model"

4. Start Ollama on your PC:

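    ollama pull your_ollama_model
    OLLAMA_HOST=0.0.0.0 ollama serve

`ollama serve` takes no model argument. The model is chosen per request from the name you configured in step 3, so pull it first. By default Ollama listens only on 127.0.0.1, so set `OLLAMA_HOST=0.0.0.0` to make it reachable from the ESP32 over your network.

5. Build and flash:

    scripts/build_macos.sh esp32   # ESP-WROOM-32
    # or: scripts/build_ubuntu.sh (for esp32-s3)
    # or: idf.py fullclean && idf.py build
    idf.py flash monitor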

6. Talk to your bot on Telegram. Send `/start` and you should get a response powered by your local model.

Runtime configuration (no reflashing needed)

You can change everything from the serial CLI:

    mimi> set_api_base_url http://192.168.1.100:11434
    mimi> set_model gpt-oss-20b
    mimi> set_model_provider openai
    mimi> config_show

Want to switch to LM Studio? Just change the URL and port:

    mimi> set_api_base_url http://192.168.1.100:1234
    mimi> set_model your-lm-studio-model

Want to go back to cloud? Clear the base URL and set your API key:

    mimi> clear_api_base_url
    mimi> set_model_provider anthropic
    mimi> set_api_key sk-ant-…
    mimi> set_model claude-sonnet-4-5-20250514

What I Learned

Embedded AI is a memory game. On a chip with 300KB of RAM, every kilobyte matters. The difference between 88KB and 24KB of buffer allocation was the difference between "doesn't work" and "works with 100KB to spare." Know your actual data sizes, not just your theoretical maximums.

Local LLMs change the economics. Running Ollama on a spare laptop means your $4 microcontroller has unlimited, free, private AI inference. No API keys, no rate limits, no data leaving your network. For home automation, personal assistants, and hobby projects, this is a much better model.

Compile-time adaptation beats runtime checks. Using `#if CONFIG_SPIRAM` to switch between PSRAM and internal RAM allocation means zero runtime overhead on either target. The compiler strips out the unused path entirely. Same source code, optimal binary for each chip.

The ESP32 ecosystem is underrated. A chip that costs less than a coffee can run WiFi, TLS, JSON parsing, HTTP clients, a full agent loop with tool calling, and persistent storage — all in C, all on bare metal. The ESP-IDF framework is remarkably complete.

What's Next

The PR is up on the MimiClaw repo. If you have an ESP-WROOM-32 lying around and a machine running Ollama, give it a try. The branch with all changes is here:

https://github.com/manjunathshiva/mimiclaw/tree/feat/esp32-wroom32-support

The upstream project is actively maintained:

I'm excited to see where embedded AI goes. When a $4 chip can run a full AI agent connected to local models, the barrier to entry for always-on, private AI assistants is basically zero.
