You've probably generated a few AI images by now. Maybe a headshot, a logo concept, or a weird cat in a spacesuit. But what if I told you there's an image model that lets you generate, edit, composite multiple images, render legible text, and even ground its output with real-time web search — all within a single conversational API?

That model is Nano Banana 2, Google's public-facing name for its Gemini-native image generation capability, and it's quietly becoming one of the most production-ready creative tools available to developers.

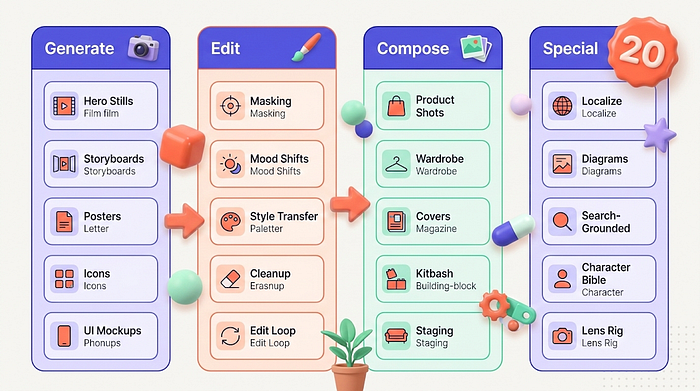

What You'll Learn in This Article:

- What Nano Banana 2 Actually Is: The model architecture, key settings, and what sets it apart from most other image generators

- 20 Production Workflows: Each with a cinematic prompt you can copy-paste and run today

- Multi-Image Composition: How to combine multiple reference images into coherent scenes without Photoshop

- Practical Pitfalls and Post Tips: The stuff nobody tells you about making AI-generated images actually usable

What Is Nano Banana 2 and Why Should You Care?

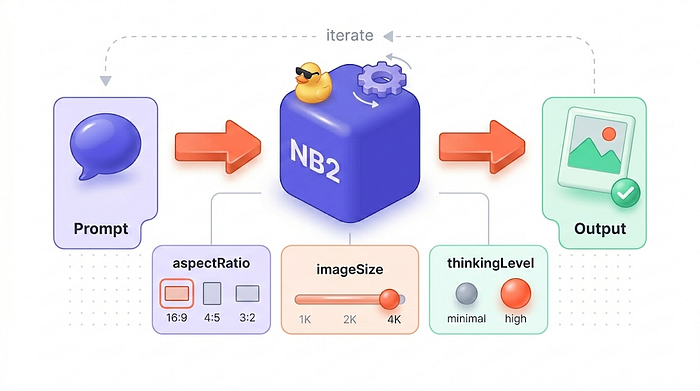

Nano Banana is the umbrella label for Gemini's native image generation. Nano Banana 2 specifically corresponds to the gemini-3.1-flash-image-preview model. It accepts text and image inputs and can output images alone or interleaved text and images, depending on how you configure responseModalities.

Here's what makes it interesting for creative professionals. Unlike most image generators that treat each prompt as an isolated event, Nano Banana 2 works conversationally. You generate an image, then tell it to change just the background fog. Then you ask it to swap the color palette. Then you upscale to final resolution. It's iterative art direction, not prompt roulette.

The key controls you need to know are imageConfig.aspectRatio for framing, imageConfig.imageSize (1K for drafts, 2K/4K for finals), and thinkingLevel (minimal for speed, high for complex multi-constraint scenes). Temperature defaults to 1.0. A seed parameter exists, but determinism is best-effort, so expect small variations between runs.
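To make those controls concrete, here is a minimal sketch that assembles them into a request-config dictionary. The helper name build_generation_config is hypothetical, and nesting thinkingLevel under a thinkingConfig object is an assumption about the request shape, so check the current API reference before relying on it.

```python
from typing import Any

# Values the article mentions; the API may accept others.
IMAGE_SIZES = {"1K", "2K", "4K"}
THINKING_LEVELS = {"minimal", "high"}


def build_generation_config(
    aspect_ratio: str = "16:9",
    image_size: str = "1K",
    thinking_level: str = "minimal",
    with_text: bool = False,
) -> dict[str, Any]:
    """Return a generationConfig-style dict for one image request."""
    if image_size not in IMAGE_SIZES:
        raise ValueError(f"unknown imageSize: {image_size!r}")
    if thinking_level not in THINKING_LEVELS:
        raise ValueError(f"unknown thinkingLevel: {thinking_level!r}")
    # Images alone, or interleaved text and images.
    modalities = ["TEXT", "IMAGE"] if with_text else ["IMAGE"]
    return {
        "responseModalities": modalities,
        "imageConfig": {"aspectRatio": aspect_ratio, "imageSize": image_size},
        # Assumption: thinkingLevel is nested under thinkingConfig.
        "thinkingConfig": {"thinkingLevel": thinking_level},
    }


draft = build_generation_config()                      # fast 1K draft
final = build_generation_config("16:9", "4K", "high")  # final render
```

Keeping the draft and final configs as two named values makes it harder to burn a 4K render on an unapproved composition.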

One quick note before we dive in: Nano Banana 2 does not expose a "diffusion steps" control like many open-source pipelines. Your quality and speed dials are imageSize and thinkingLevel. Treat them accordingly.

Now let's walk through 20 workflows that unlock the full range of what this model can do.

The 20 Workflows

1. Cinematic Hero Stills

This is your baseline. Prompt Nano Banana 2 like a cinematographer, not an illustrator. Use the film still formula: subject + action + environment + lighting + lens + film stock + grade + composition.

Prompt:

Cinematic film still, 35mm, low-angle medium shot of a street saxophonist under a neon sign in light rain, reflections on wet asphalt, shallow depth of field, soft bokeh, teal-orange color grade, subtle film grain, 16:9.

Settings: thinkingLevel: minimal | imageSize: 1K draft, 2K final | aspectRatio: 16:9

Pitfall: Contradictory lighting descriptions (like "noir hard light" plus "overcast soft light") produce muddy frames. Pick one direction.
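If you generate many hero stills, the film still formula can be treated as a small data structure. The sketch below is one way to do that; the FilmStill class and its field names are illustrative and not part of any SDK. Giving lighting a single field is a deliberate nudge toward one lighting direction per frame.

```python
from dataclasses import dataclass


@dataclass
class FilmStill:
    """One slot per element of the film still formula."""
    subject: str
    action: str
    environment: str
    lighting: str      # a single field: pick one lighting direction
    lens: str
    film_stock: str
    grade: str
    composition: str

    def to_prompt(self) -> str:
        scene = f"{self.subject} {self.action} {self.environment}"
        parts = [scene, self.lighting, self.lens, self.film_stock,
                 self.grade, self.composition]
        # Skip empty slots so optional elements can be left blank.
        body = ", ".join(p.strip() for p in parts if p.strip())
        return f"Cinematic film still, {body}."


still = FilmStill(
    subject="a street saxophonist",
    action="playing",
    environment="under a neon sign in light rain",
    lighting="neon key light with wet-asphalt reflections",
    lens="35mm, shallow depth of field",
    film_stock="subtle film grain",
    grade="teal-orange color grade",
    composition="low-angle medium shot, 16:9",
)
print(still.to_prompt())
```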

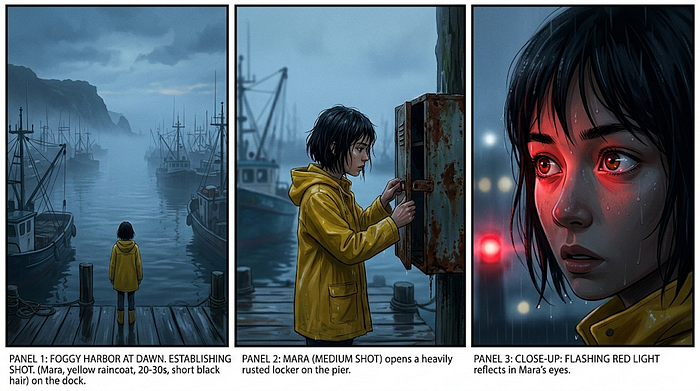

2. Three-Panel Storyboard Frames

Instant previsualization. Generate three beats that keep wardrobe, props, and location consistent by naming characters and reusing the same descriptors across panels.

Prompt:

Create a 3-panel storyboard (single image containing three frames). Same character throughout: "MARA" = adult woman, short black hair, yellow raincoat. Scene: foggy harbor at dawn. Panel 1: wide establishing shot. Panel 2: medium shot as she opens a rusted locker. Panel 3: close-up on her eyes reflecting flashing red light. Cinematic lighting, 16:9 overall layout.

Settings: thinkingLevel: high | imageSize: 2K | aspectRatio: 16:9

Pitfall: Small text inside panels drifts. Add panel labels only if necessary, and overlay clean vector borders in post.

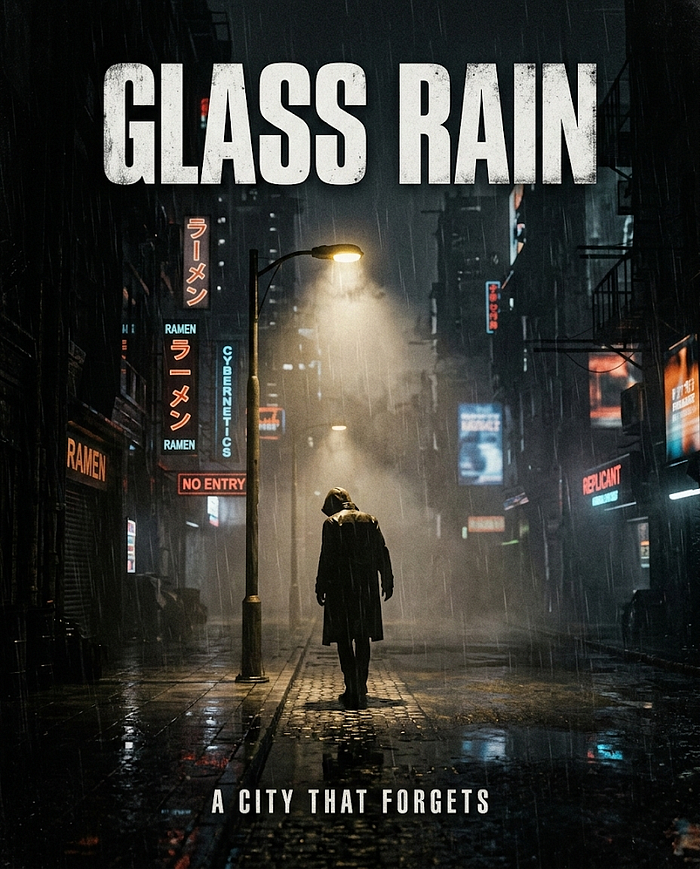

3. Title Cards and Posters with Typography

Nano Banana 2 handles in-image text better than most generators. Put exact words in quotes and describe typography style explicitly.

Prompt:

Cinematic poster design, gritty sci-fi noir. Central image: lone figure under a streetlamp in thick fog, 35mm, high contrast, film grain. Add the title text exactly: "GLASS RAIN" in bold condensed sans-serif, slightly distressed, top center. Add tagline: "A CITY THAT FORGETS" in small caps. 4:5.

Settings: thinkingLevel: high | imageSize: 2K+ | aspectRatio: 4:5

Pitfall: Expect occasional letterform errors at small sizes. Treat AI text as a draft and replace with real fonts for final print.

4. Editing Product Lifestyle Shots from Packshots

This is where multi-image composition shines. Combine a product packshot, a background plate, and optional props to create a lifestyle frame. The model matches lighting, shadows, and perspective automatically.

Prompt:

Using the provided images, create a premium lifestyle photo. Place the product from image 1 on the kitchen counter from image 2. Match the warm window light direction and add realistic contact shadows and subtle reflections. 35mm, shallow DOF, cinematic color grade, 3:2.

Settings: thinkingLevel: high | imageSize: 2K comp, 4K final | aspectRatio: 3:2

Pitfall: Inconsistent lighting between reference images breaks the composite. In post, apply a unified color grade and grain pass.
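For readers calling the API directly, a multi-image request is just several image parts followed by a text part. The sketch below builds that payload as plain dictionaries. The inlineData/mimeType field names follow the REST JSON shape as I understand it, and the idea that "image 1" and "image 2" map to part order is an assumption, so verify both against the current docs. The helper names are hypothetical.

```python
import base64


def image_part(data: bytes, mime_type: str = "image/png") -> dict:
    """Wrap raw image bytes as a base64 inline-data part."""
    encoded = base64.b64encode(data).decode("ascii")
    return {"inlineData": {"mimeType": mime_type, "data": encoded}}


def composite_contents(prompt: str, images: list[bytes]) -> list[dict]:
    """Build one user turn: images first, in the order the prompt refers to them."""
    if not images:
        raise ValueError("at least one reference image is required")
    parts = [image_part(img) for img in images]
    parts.append({"text": prompt})
    return [{"role": "user", "parts": parts}]


# Placeholder bytes stand in for real files read with open(path, "rb").read().
packshot = b"fake-packshot-bytes"
kitchen = b"fake-kitchen-bytes"
contents = composite_contents(
    "Place the product from image 1 on the kitchen counter from image 2.",
    [packshot, kitchen],
)
```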

5. Editing Wardrobe Swap for Fashion E-Commerce

Take a garment from one image and have a person from another image wear it, with lighting and shadows adjusted to match. This enables rapid virtual styling mockups for catalogs and lookbooks.

Prompt:

Create a professional e-commerce fashion photo. Take the jacket from image 1 and let the model from image 2 wear it. Keep the model's face unchanged, adjust lighting and shadows to match, realistic fabric folds, 4:5.

Settings: thinkingLevel: high | imageSize: 2K+ | aspectRatio: 4:5

Pitfall: Watch for hand artifacts and implausible hems. This is generative compositing, not cloth simulation — always do a realism check.

6. Editing Interior Redesign with Semantic Masking

No drawn mask needed. Just tell the model what to change conversationally — "only the sofa" — while preserving everything else. This is gold for interior design iteration.

Prompt:

Using the provided image, change only the rug to a vintage Persian rug in deep red tones. Keep all furniture, wall art, lighting, and camera angle exactly the same. Preserve shadows and perspective. 16:9.

Settings: thinkingLevel: high | imageSize: 2K | aspectRatio: 16:9

Pitfall: Vague targets like "make it nicer" cause global changes. Be surgically specific about what changes and what stays.
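One way to stay surgically specific is to template the edit so that the target and the keep-list are both mandatory. This is a small sketch with a hypothetical helper, scoped_edit_prompt; the wording mirrors the rug prompt above and is not an official prompt format.

```python
def join_items(items: list[str]) -> str:
    """Join a list into natural English: 'a', 'a and b', 'a, b, and c'."""
    if len(items) == 1:
        return items[0]
    if len(items) == 2:
        return f"{items[0]} and {items[1]}"
    return ", ".join(items[:-1]) + f", and {items[-1]}"


def scoped_edit_prompt(target: str, change: str, keep: list[str],
                       aspect_ratio: str) -> str:
    """Build an edit prompt that names one target and an explicit keep-list."""
    if not target.strip():
        raise ValueError("name the single element to change")
    if not keep:
        raise ValueError("list what must stay unchanged")
    return (
        f"Using the provided image, change only the {target} to {change}. "
        f"Keep {join_items(keep)} exactly the same. "
        f"Preserve shadows and perspective. {aspect_ratio}."
    )


print(scoped_edit_prompt(
    "rug",
    "a vintage Persian rug in deep red tones",
    ["all furniture", "wall art", "lighting", "camera angle"],
    "16:9",
))
```

Refusing to build a prompt without a keep-list is the point: it forces the "what stays" decision before the request is sent.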

7. Editing Day-to-Night and Mood Transforms

Use Nano Banana 2 as a digital gaffer. Turn noon into blue-hour, add fog, shift to tungsten interior lighting, or create a rain pass while preserving scene geometry.

Prompt:

Using the provided photo, transform the scene from sunny afternoon into rainy night. Keep the camera position and main subject unchanged. Add wet reflections, distant streetlight glow, subtle mist, cinematic contrast, 16:9.

Settings: thinkingLevel: high | imageSize: 2K | aspectRatio: 16:9

Pitfall: Over-adding atmospheric effects looks synthetic. In post, dial back saturation and add consistent grain.

8. Editing Style Transfer for Look Development

Take a location scout photo and re-render it in noir inks, watercolor, synthwave, or painterly impressionism without changing the blocking.

Prompt:

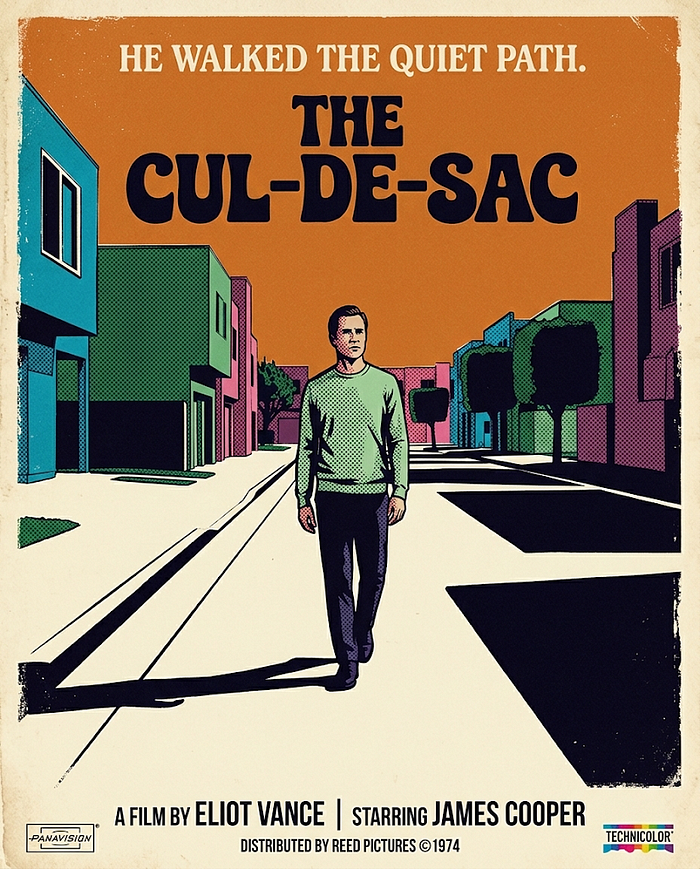

Transform the provided photograph into a 1970s film poster illustration style. Preserve the original composition, but render with bold halftone texture, limited color palette, and dramatic shadows. 4:5.

Settings: thinkingLevel: minimal | imageSize: 1K draft, 2K final | aspectRatio: 4:5

Pitfall: If you don't explicitly say "preserve composition," the model may reframe your image. Always include that constraint.

9. Lens and Camera-Blocking Exploration

Run the same scene prompt with different lens choices, camera heights, and framing styles. This is a director's sandbox for finding the right cinematography before committing to high-res renders.

Prompt:

Same scene, two-camera test: a detective entering a dim motel room. Render as a wide 24mm establishing shot, low angle, practical lamp + moonlight, film grain, 21:9.

Settings: thinkingLevel: minimal | imageSize: 1K | aspectRatio: 21:9

Pitfall: Changing too many variables at once makes it impossible to see what mattered. Sweep one parameter at a time and build contact sheets.
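A one-parameter sweep is easy to script. The sketch below holds a base shot fixed and varies a single key, which gives you prompts for a clean contact sheet. The shot fields and helper names are illustrative choices, not anything the API defines.

```python
BASE_SHOT = {
    "lens": "24mm",
    "angle": "low angle",
    "lighting": "practical lamp + moonlight",
    "aspect": "21:9",
}


def sweep(base: dict[str, str], param: str, values: list[str]) -> list[dict[str, str]]:
    """Return one shot per value, changing only `param`."""
    if param not in base:
        raise KeyError(f"unknown shot parameter: {param}")
    return [{**base, param: value} for value in values]


def render_prompt(scene: str, shot: dict[str, str]) -> str:
    """Turn a scene description plus shot settings into a prompt string."""
    return (f"{scene}. {shot['lens']} shot, {shot['angle']}, "
            f"{shot['lighting']}, film grain, {shot['aspect']}.")


scene = "A detective entering a dim motel room"
for shot in sweep(BASE_SHOT, "lens", ["24mm", "50mm", "85mm"]):
    print(render_prompt(scene, shot))
```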

10. Character Bible for Consistent Protagonists

Consistency is a multi-step workflow, not a single prompt. Generate a character reference sheet with multiple angles, then reuse that named character in future prompts as your identity anchor.

Prompt:

Create a character reference sheet (single image) for "MARA": adult woman, short black hair, yellow raincoat, calm expression. Include 3 views (front, side, 3/4) on a neutral background, consistent lighting, cinematic realism, 16:9.

Settings: thinkingLevel: high | imageSize: 2K | aspectRatio: 16:9

Pitfall: Drift happens the moment you stop naming the character. Keep one "canon" frame visible and reference it in every follow-up prompt.
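To avoid retyping (and accidentally changing) a character's descriptors, you can keep them in one place and inject them into every follow-up prompt. This is a minimal sketch; the CHARACTER_BIBLE dictionary and with_character helper are hypothetical names, and the phrasing of the injected sentence is just one option.

```python
# Canonical descriptors: edit here, never inline in individual prompts.
CHARACTER_BIBLE = {
    "MARA": "adult woman, short black hair, yellow raincoat",
}


def with_character(name: str, scene: str,
                   bible: dict[str, str] = CHARACTER_BIBLE) -> str:
    """Prefix a scene prompt with the named character's canonical descriptors."""
    if name not in bible:
        raise KeyError(f"no canon entry for character {name!r}")
    return (f'Same character as the reference sheet: "{name}" = {bible[name]}. '
            f"{scene}")


print(with_character("MARA", "She waits at a foggy harbor at dawn, wide shot, 16:9."))
```

Pair this with the canon reference image in the request so the text anchor and the visual anchor travel together.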

11. Localization and Ad Adaptation

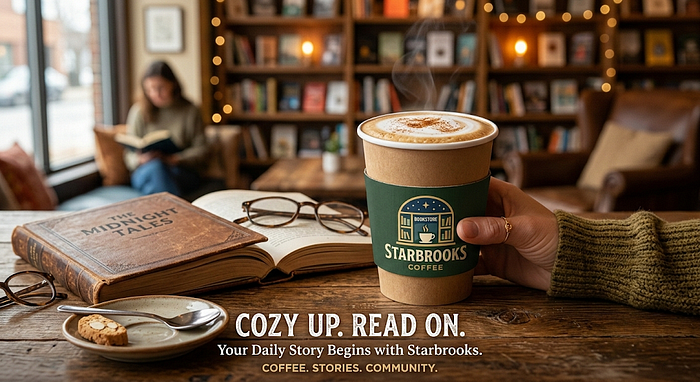

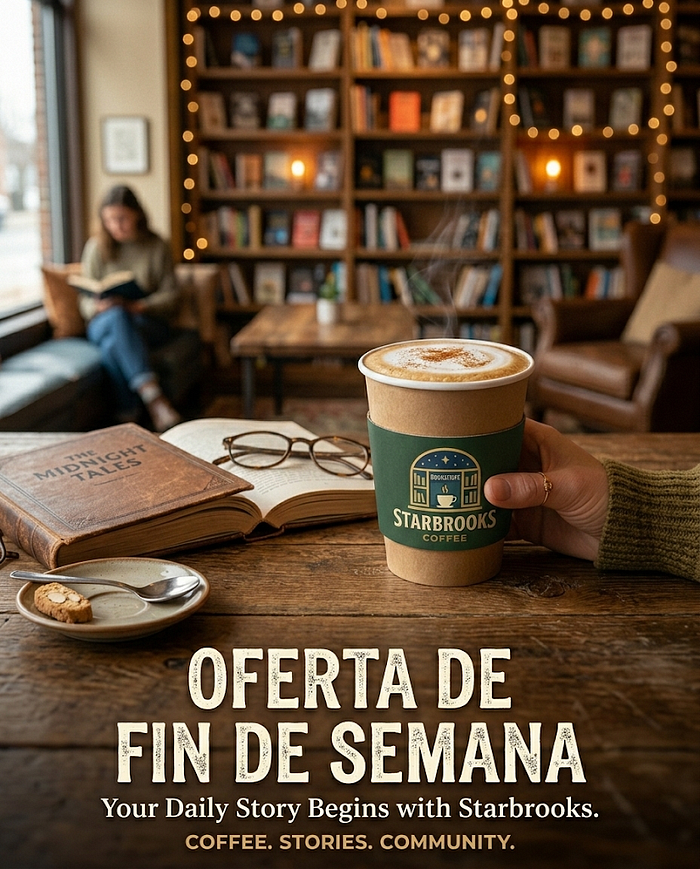

Translate or replace text in an image and adapt visuals for a new market. Keep the layout, swap the language, and adjust small cultural cues like color choices or symbols.

Prompt:

Using the provided ad image, translate only the headline into Spanish and set it exactly as: "OFERTA DE FIN DE SEMANA". Keep the layout, fonts, and all other elements unchanged. Preserve colors and framing. 4:5.

Settings: thinkingLevel: high | imageSize: 2K+ | aspectRatio: 4:5

Pitfall: Letter-by-letter errors still happen. Treat these as fast drafts and replace final typography in a design tool for client-facing work.

12. Notes-to-Diagram and Infographic Generation

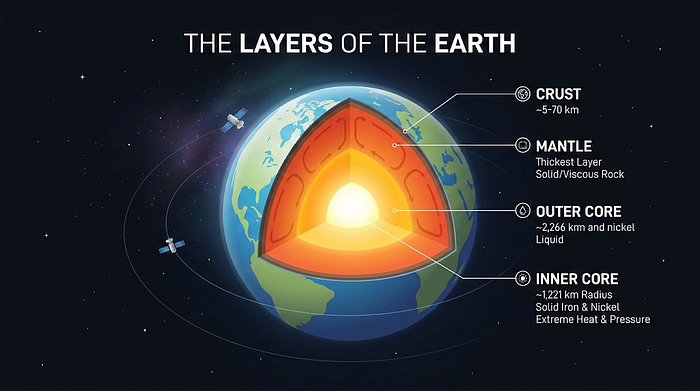

Nano Banana 2 can turn rough notes into clean diagrams and infographics, leveraging world knowledge and optionally web references. Unlike diffusion-only models, Gemini can produce text plus image outputs together.

Prompt:

Create a clean isometric infographic: "The Layers of the Earth" with labeled crust, mantle, outer core, inner core. Modern minimal style, crisp labels, subtle glow in the core, 16:9.

Settings: thinkingLevel: high | imageSize: 2K | aspectRatio: 16:9

Pitfall: Requesting too many labels causes spacing to collapse. Keep it clean and simple, then add detailed annotations in post if needed.

13. Search-Grounded Accurate Renderings

This is uniquely Gemini. Enable Google Search grounding to verify facts and pull real-time image references before generating. Perfect for travel posters, architecture studies, and "draw this specific thing in style X" requests.

Prompt:

Use web image search references first, then generate: a cinematic wide shot of Bletchley Park Mansion at sunrise, bright Synthetic Cubism style, no text, 16:9.

Settings: Enable google_search tool | thinkingLevel: high | imageSize: 2K+ | aspectRatio: 16:9

Pitfall: Search grounding biases toward common photos. Push originality in post with custom color grading and texture overlays.
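In request terms, enabling grounding means adding a tools entry next to the usual contents and config. The sketch below shows a plausible JSON body built as a Python dictionary. The google_search tool name comes from the settings line above; the surrounding field layout and the choice to request both text and image output are assumptions to confirm against the API reference. grounded_request is a hypothetical helper.

```python
import json


def grounded_request(prompt: str, aspect_ratio: str = "16:9",
                     image_size: str = "2K") -> dict:
    """Build a request body with the Google Search tool enabled."""
    return {
        "contents": [{"role": "user", "parts": [{"text": prompt}]}],
        # An empty object enables the tool with default behavior (assumed shape).
        "tools": [{"google_search": {}}],
        "generationConfig": {
            # Assumption: text is requested alongside the image output.
            "responseModalities": ["TEXT", "IMAGE"],
            "imageConfig": {"aspectRatio": aspect_ratio, "imageSize": image_size},
        },
    }


body = grounded_request(
    "A cinematic wide shot of Bletchley Park Mansion at sunrise, "
    "bright Synthetic Cubism style, no text, 16:9."
)
print(json.dumps(body, indent=2))
```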

14. Magazine Cover and Editorial Collage

Combine a portrait, a texture background, and graphic elements into a magazine cover. The recommended workflow is to generate the cover image without text first, then test typography placement in a second pass, then finalize type in a design tool.

Prompt:

Using the provided images, create a magazine cover mockup: place the subject from image 1 on the textured backdrop from image 2. Add masthead text exactly: "NATURE" in bold sans-serif at top. Add 3 short cover lines on the left. Editorial lighting, 4:5.

Settings: thinkingLevel: high | imageSize: 2K+ | aspectRatio: 4:5

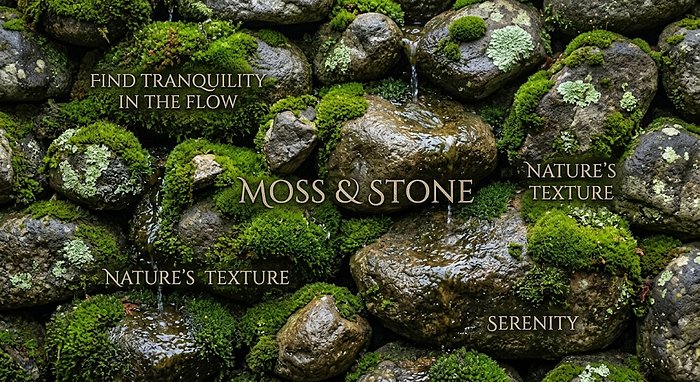

Pitfall: For print, you'll still need CMYK checks and true font control. Use the AI output as layout direction, not the final deliverable.

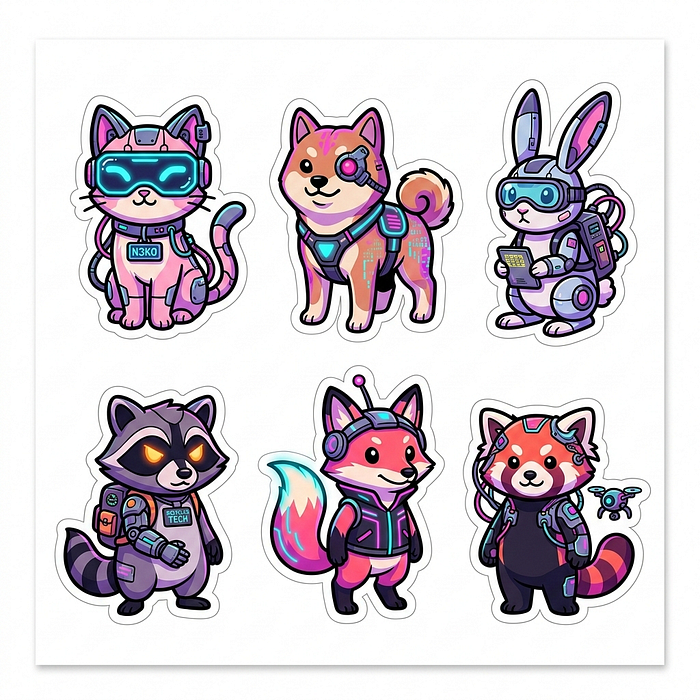

15. Sticker Packs and Icon Sheets

For consistent line weight and shading across many small assets, standardize your palette (3–5 colors), outline thickness, and shading style in the prompt.

Prompt:

Sticker sheet (single image with 6 stickers): cute cyberpunk animals, bold clean outlines, simple cel shading, limited palette, each sticker with a white border, clean white background, 1:1.

Settings: thinkingLevel: minimal | imageSize: 1K draft, 2K final | aspectRatio: 1:1

Pitfall: Tiny details blur at low resolution. Vector redraw or upscaling with sharpening is almost always needed for production assets.

16. UI Mockups and Device-Framed Product Shots

Nano Banana 2 can draft UI mockups and place them in photoreal device frames. Constrain your typography to a few words at large sizes and specify grid systems.

Prompt:

Photoreal product shot: a modern smartphone on a desk, soft window light, shallow DOF. On screen, show a minimalist music player UI with the exact title "MIDNIGHT LOOP" and a large play button. Clean typography, 3:2.

Settings: thinkingLevel: high | imageSize: 2K+ | aspectRatio: 3:2

Pitfall: Small UI text will be wrong. Replace microcopy with proper font rendering in Figma or your design tool of choice.

17. Environment Kitbash from Multiple References

Vertex AI supports up to 14 input images, enabling a kitbash workflow. Feed sky plates, architectural references, texture swatches, and a subject reference, and ask for a coherent scene with unified lighting.

Prompt:

Using the provided reference images, create a cohesive cinematic cityscape: take skyline shapes from image 1, street-level mood from image 2, and color palette from image 3. Enforce one light direction, realistic scale, atmospheric haze, 21:9.

Settings: thinkingLevel: high | imageSize: 2K+ | aspectRatio: 21:9

Pitfall: Too many references without a clear hierarchy produce a muddle. Assign each image a specific role and call it out in the prompt.
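Role assignment can be enforced before anything is sent. This sketch takes one role per reference image, checks the 14-image limit quoted above for Vertex AI, and writes the "take X from image N" clause for you. The function name and prompt wording are illustrative.

```python
MAX_REFERENCE_IMAGES = 14  # limit the article quotes for Vertex AI


def kitbash_prompt(roles: list[str], scene: str, aspect_ratio: str) -> str:
    """Build a kitbash prompt where image N contributes roles[N-1]."""
    if not roles:
        raise ValueError("provide at least one reference role")
    if len(roles) > MAX_REFERENCE_IMAGES:
        raise ValueError(f"too many references: {len(roles)} > {MAX_REFERENCE_IMAGES}")
    if any(not role.strip() for role in roles):
        raise ValueError("every reference image needs a named role")
    clauses = ", ".join(
        f"{role} from image {index}" for index, role in enumerate(roles, start=1)
    )
    return (f"Using the provided reference images, create {scene}: take {clauses}. "
            f"Enforce one light direction, realistic scale. {aspect_ratio}.")


print(kitbash_prompt(
    ["skyline shapes", "street-level mood", "color palette"],
    "a cohesive cinematic cityscape",
    "21:9",
))
```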

18. Real Estate Staging and Declutter

Virtual staging made simple. Remove clutter, add tasteful furniture, or modernize fixtures while keeping perspective intact. Always keep original photos and disclose AI modifications where policy requires it.

Prompt:

Using the provided room photo, remove only the clutter on the countertops and keep everything else unchanged. Preserve lighting, shadows, and camera angle. 16:9.

Settings: thinkingLevel: high | imageSize: 2K | aspectRatio: 16:9

Pitfall: Watch for warped straight lines. Apply lens correction and perspective straightening in post.

19. Continuity Cleanup for Photography

The AI cleanup brush. Make one change at a time — remove an object, fix reflections, adjust color — and preserve your conversation history so the model maintains continuity context.

Prompt:

Using the provided photo, remove only the distracting sign in the background. Keep the subject's face, hair, and clothing completely unchanged. Match lighting and grain. 3:2.

Settings: thinkingLevel: high | imageSize: 2K | aspectRatio: 3:2

Pitfall: Asking for a "cleaner image" triggers global smoothing. Be specific about what to remove, and add back micro-contrast and grain in post.

20. Rapid Multi-Turn Edit Loop

This is the meta-workflow. Generate a draft, request targeted edits, upscale to final resolution. Use the official SDK chat/history features so thought signatures are managed automatically.

Prompt (Turn 1):

Generate a cinematic still of a rainy neon alleyway with a lone cyclist, 16:9. Then I will ask for iterative edits - keep composition stable unless explicitly requested.

Prompt (Turn 2):

Using the provided image from the previous turn, change only the color palette to muted teal and amber, increase fog density slightly, and sharpen the cyclist silhouette. Keep all geometry unchanged. 16:9.

Settings: Start thinkingLevel: minimal for drafts, switch to high for final iteration | imageSize: 1K to 4K | aspectRatio: 16:9

Pitfall: Don't stack five edits into a single turn. One to two changes at a time keeps things stable.
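If you track the conversation yourself instead of using the SDK's chat helper, the loop looks roughly like the sketch below. The EditSession class is hypothetical. It stores model turns exactly as received, on the assumption that this is what lets thought signatures round-trip, and it rejects turns that stack more than two edits.

```python
MAX_EDITS_PER_TURN = 2  # the "one to two changes" rule of thumb


class EditSession:
    """Keeps an alternating user/model history for iterative image edits."""

    def __init__(self, first_prompt: str) -> None:
        self.history = [{"role": "user", "parts": [{"text": first_prompt}]}]

    def record_model_turn(self, parts: list[dict]) -> None:
        # Store the model's parts unmodified (assumption: any signature
        # fields inside them must be sent back untouched).
        self.history.append({"role": "model", "parts": parts})

    def request_edits(self, edits: list[str], aspect_ratio: str = "16:9") -> list[dict]:
        if not 1 <= len(edits) <= MAX_EDITS_PER_TURN:
            raise ValueError(f"send 1 to {MAX_EDITS_PER_TURN} edits per turn")
        if self.history[-1]["role"] != "model":
            raise RuntimeError("record the model's reply before the next edit")
        text = ("Using the image from the previous turn, "
                + " and ".join(edits)
                + f". Keep all geometry unchanged. {aspect_ratio}.")
        self.history.append({"role": "user", "parts": [{"text": text}]})
        return self.history  # send this full list as `contents`


session = EditSession("Generate a cinematic still of a rainy neon alleyway "
                      "with a lone cyclist, 16:9.")
session.record_model_turn([{"text": "(placeholder for the returned image part)"}])
contents = session.request_edits(
    ["change only the color palette to muted teal and amber",
     "increase fog density slightly"]
)
```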

The Multi-Image Composition Playbook

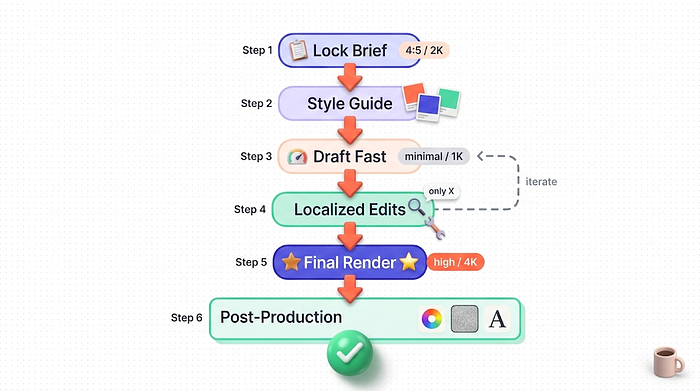

The workflows above cover individual techniques, but the real power of Nano Banana 2 shows up when you combine them into a production pipeline. Here's the pattern that consistently works.

Step 1: Lock your brief. Pick aspect ratio and resolution target before you start. The API supports explicit imageConfig controls, and starting without a spec leads to wasted iterations.

Step 2: Build a style guide. Assemble a palette reference, typography notes (if text is embedded), and 2–4 mood images. Use the style, subject, setting, action, and composition checklist as your rubric.

Step 3: Draft with minimal thinking. Generate a fast draft at thinkingLevel: minimal and imageSize: 1K to explore composition cheaply. Don't burn time on quality until framing is locked.

Step 4: Iterate with localized edits. Use semantic masking language — "change only the background fog density" — to avoid unintentional drift. One change per turn is the sweet spot.

Step 5: Promote to high thinking for the final. For complex constraints (text plus composition plus multi-subject), switch to thinkingLevel: high and bump to your target resolution tier.

Step 6: Post-production. Unify grain and contrast across elements, and replace AI-rendered typography with real fonts if you're shipping professionally. Nano Banana 2 is better at text than most, but production typography still benefits from manual control.
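Steps 3 through 5 boil down to a small mapping from pipeline stage to settings. Here is one way to encode it; the Stage enum and stage_settings helper are hypothetical, and using draft settings during the iterate stage is my reading of the playbook, not something it states outright.

```python
from enum import Enum


class Stage(Enum):
    DRAFT = "draft"      # Step 3: explore composition cheaply
    ITERATE = "iterate"  # Step 4: localized edits, one change per turn
    FINAL = "final"      # Step 5: high thinking at target resolution


def stage_settings(stage: Stage, aspect_ratio: str, final_size: str = "2K") -> dict[str, str]:
    """Return the settings to use at a given pipeline stage."""
    if final_size not in {"2K", "4K"}:
        raise ValueError("final renders use the 2K or 4K tier")
    if stage is Stage.FINAL:
        return {"thinkingLevel": "high", "imageSize": final_size,
                "aspectRatio": aspect_ratio}
    # Assumption: iteration stays at draft settings until framing is locked.
    return {"thinkingLevel": "minimal", "imageSize": "1K",
            "aspectRatio": aspect_ratio}


# The aspect ratio is fixed up front (Step 1) and carried through every stage.
for stage in Stage:
    print(stage.value, stage_settings(stage, "16:9", final_size="4K"))
```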

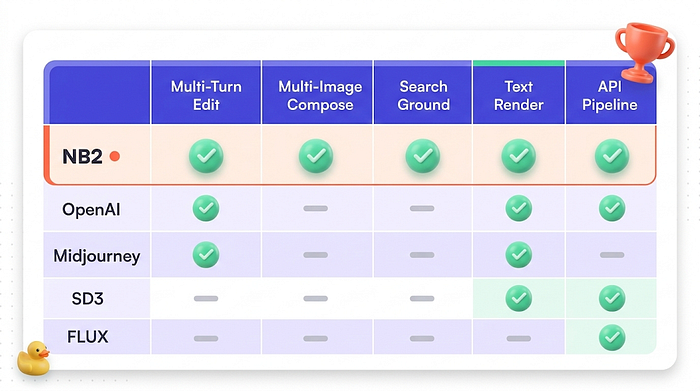

How Nano Banana 2 Stacks Up

For context, here's how it compares to the competition.

Against OpenAI's Image API, both ecosystems support text-to-image and inpainting-style edits. Where Nano Banana 2 pulls ahead is native search grounding and "explain plus render" interleaving for tutorials and infographics.

Against Midjourney, whose interactive "Vary Region" inpainting is excellent for artist-driven iteration, Gemini's advantage is tighter integration into programmatic pipelines and multi-image compositing via chat or API.

Against Stable Diffusion 3, SD3 offers deeper controllability through its open-weights ecosystem, but requires more pipeline engineering. Nano Banana 2 trades low-level knobs for faster end-to-end iteration.

Against FLUX, the open-weight tooling approach emphasizes self-hostable workflows, while Nano Banana 2 emphasizes integrated multimodal reasoning, multi-turn editing, and web-grounded generation.

The differentiator is the workflow, not just the output quality. You can do directive, iterative art direction within one conversation rather than rerolling prompts from scratch every time.

What To Do Next

You now have 20 ready-to-run workflows and a compositing pipeline you can adapt to almost any creative project. Here's how to put them to work.

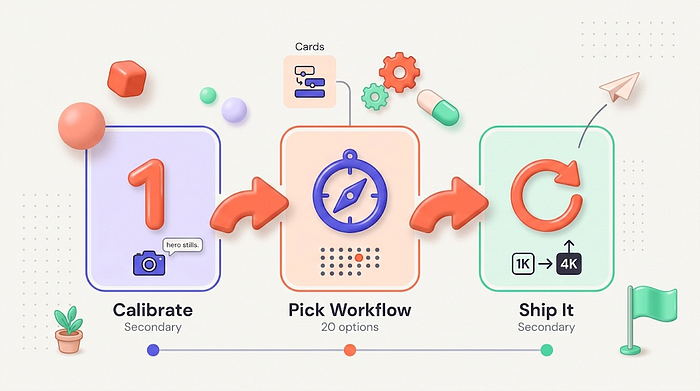

Start with workflow #1 (cinematic hero stills) to calibrate your prompt style and understand how the model responds to camera language. Then pick the workflow closest to your actual production need and run through the draft-to-final loop described in the composition playbook.

The model name is gemini-3.1-flash-image-preview, and you can reach it today through the Gemini API, Vertex AI, or the Gemini app. Start with 1K drafts, iterate cheaply, and save 4K output for your final renders.

If you've been stuck in "generate and pray" mode with other image tools, Nano Banana 2's conversational editing might be the thing that finally makes AI image generation feel like a real creative workflow.

Try a workflow this week and let me know what you build. I'm especially curious to see what people do with the search-grounded rendering — that's the one that feels genuinely new.

If you found this useful, follow me for more practical AI implementation guides. I write about making these tools actually work in production, not just in demos.