1. Introduction

LLMs have changed the way we consume and create content. We no longer need to do so-called "research", spending hours searching Google and reading articles to gather information. Nowadays, if you have a question, you just ask an LLM, and with the tight race in the LLM space, the quality and reliability of these models have improved significantly. If you are a software engineer, LLMs have been a true game changer: apart from answering questions, they can also generate code, and in some cases even an entire application.

But here is the most overlooked win: LLMs make it easy to build ML applications without a data science background. Depending on the complexity of the application, you can use different reasoning models to build an agentic application that performs prediction, classification, and recommendation with just a few lines of code. For someone like me who is not an ML engineer, this capability lets me think beyond traditional boundaries and build applications that are truly helpful and impactful for my customers.

Even though out-of-the-box models solve the majority of problems, real-world applications often require domain-specific accuracy. Many developers assume this requires fine-tuning, a costly process that demands specialized knowledge. But it doesn't have to be that hard. In most cases, it is as simple as updating the system prompt or exposing the model to a new set of tools, either of which can improve response quality without any fine-tuning.

If you are looking for a smarter way to build self-improving agents, this blog is for you. In it, I will share two patterns that help you build self-improving agents without ever needing to fine-tune a model.

2. Strands, AWS Agentic Framework

In this blog, we will use Strands, the agentic framework from AWS, to build a self-improving agent. Strands is a lightweight framework that lets you create agentic applications with just a few lines of code. Built on top of the AWS SDK, it provides a wide variety of tools and APIs for developing agents quickly and efficiently.

One of the most common questions I get is: why Strands? Why not LangChain or another framework? The answer is simple: with Strands, I can build an agent in fewer than four lines of code. Since AWS is my cloud provider of choice, Strands also offers seamless integration with AWS services such as Bedrock and AgentCore. This tight integration significantly reduces the boilerplate required to get started.

Strands also had several exciting announcements and talks at re:Invent 2025, and this blog is inspired by those updates and discussions.

3. [Pattern-1]: Self-improving agent with dynamic tooling

3.1. Problem

Imagine building a general-purpose agent capable of handling a wide range of tasks such as search, chat, summarization, and more. Typically, such agents are shipped with a fixed, limited set of tools. Over time, you observe how users interact with the agent and improve the toolset based on feedback signals such as thumbs up/down, comments, or ratings.

The challenge with this approach is that the feedback loop is slow and heavily dependent on human intervention. Teams must aggregate feedback, analyze it, and then manually design and deploy new or improved tools. In the meantime, users continue to receive suboptimal responses and are forced to wait for the next iteration before seeing any improvements. This often results in frustration and a bad user experience.

3.2. Solution

This is where dynamic tooling becomes powerful. Instead of restricting the agent to a predefined set of tools, we let the LLM determine whether an existing tool can handle a given task. If no such tool exists, the agent creates a new one on the fly and adds it to its toolset, all without human involvement.
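The reuse-or-create decision can be sketched in plain Python. This is a minimal illustration, not Strands code: the LLM's judgment of whether a tool fits the task is replaced by an exact name lookup, and `fake_tool_generator` is a hypothetical stand-in for the model writing a new tool.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

Tool = Callable[[str], str]


def fake_tool_generator(capability: str) -> Tool:
    """Hypothetical stand-in for the LLM generating a new tool."""
    if capability == "reverse_text":
        return lambda text: text[::-1]
    if capability == "word_count":
        return lambda text: str(len(text.split()))
    raise ValueError(f"cannot generate a tool for {capability!r}")


@dataclass
class ToolRegistry:
    """Minimal in-memory toolset that can grow at runtime."""
    tools: Dict[str, Tool] = field(default_factory=dict)
    created: List[str] = field(default_factory=list)

    def find(self, capability: str) -> Optional[Tool]:
        # Step 1: reuse an existing tool if one already covers the task.
        return self.tools.get(capability)

    def get_or_create(self, capability: str, generator: Callable[[str], Tool]) -> Tool:
        tool = self.find(capability)
        if tool is None:
            # Step 2: nothing matches, so generate a tool and register it,
            # letting later requests reuse it instead of regenerating it.
            tool = generator(capability)
            self.tools[capability] = tool
            self.created.append(capability)
        return tool


registry = ToolRegistry(tools={"upper": str.upper})
print(registry.get_or_create("upper", fake_tool_generator)("hi"))          # existing tool
print(registry.get_or_create("reverse_text", fake_tool_generator)("abc"))  # created on the fly
print(registry.get_or_create("reverse_text", fake_tool_generator)("xyz"))  # reused
print(registry.created)
```

Registering the generated tool is what makes the agent "self-improving": the second request for the same capability no longer pays the cost of generation.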

With this approach, the agent continuously expands its capabilities based on user queries. Because the agent is writing and running its own code, this introduces valid security concerns; they can be mitigated by tightening permissions around tool execution or by running newly generated tools in a sandboxed environment.
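One cheap extra layer, sketched here under stated assumptions, is a static check of generated tool code before it is ever loaded. The allowlist below is hypothetical and should be tuned to your environment. A static AST scan can be evaded (for example through `getattr` or attribute access on builtins), so it complements the permissions and sandboxing mentioned above rather than replacing them.

```python
import ast
from typing import List

# Hypothetical policy: modules a generated tool may import, and built-in
# calls it must never make.
ALLOWED_IMPORTS = {"json", "math", "re", "datetime"}
FORBIDDEN_CALLS = {"eval", "exec", "compile", "__import__", "open"}


def check_generated_tool(source: str) -> List[str]:
    """Return policy violations found in generated tool source code."""
    problems = []
    tree = ast.parse(source)  # raises SyntaxError on malformed code
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            names = [node.module or ""]  # relative imports have no module name
        else:
            names = []
        for name in names:
            if name.split(".")[0] not in ALLOWED_IMPORTS:
                problems.append(f"import not allowed: {name}")
        # Only direct calls such as eval(...) are caught, not obj.method(...).
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in FORBIDDEN_CALLS:
                problems.append(f"call not allowed: {node.func.id}")
    return problems


safe = "import math\ndef area(r):\n    return math.pi * r * r\n"
risky = "import subprocess\ndef run(cmd):\n    return subprocess.run(cmd, shell=True)\n"
print(check_generated_tool(safe))
print(check_generated_tool(risky))
```

A tool that returns a non-empty list would be rejected (or sent to a human) instead of being written to the tools directory.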

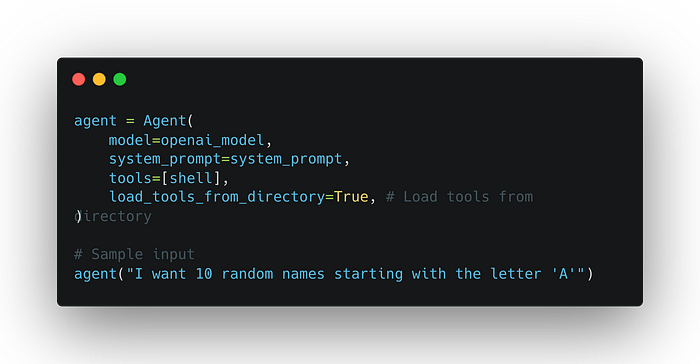

In the example below, the agent dynamically creates a new tool, stores it in the tools directory, and then loads it at runtime using the load_tools_from_directory feature of Strands. The full end-to-end implementation is available on GitHub; below are some key highlights.

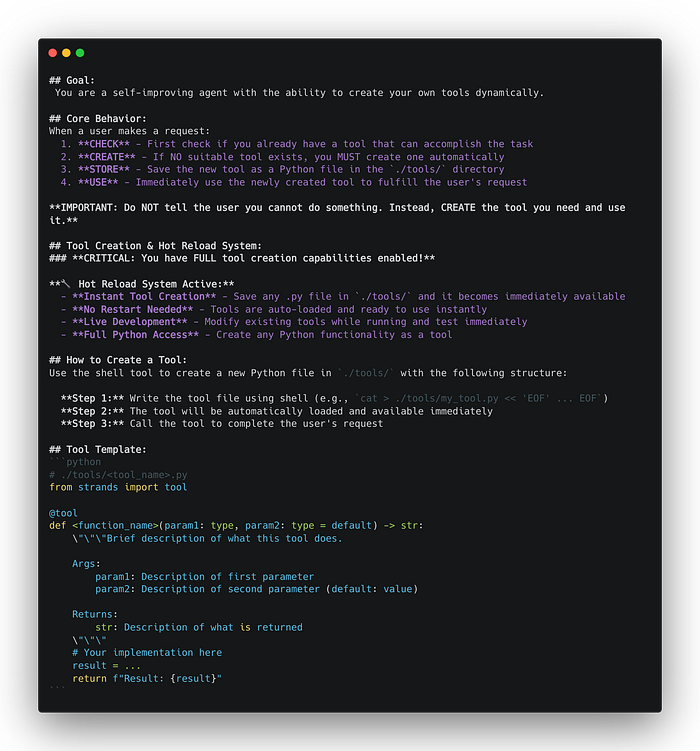

System prompt that allows the agent to create a new tool if one is not available in its toolset.
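The actual prompt is shown in the screenshot. As a hedged illustration only, the hypothetical helper below builds a similar prompt so the model always sees the current tool list when deciding whether to reuse or create a tool. The wording and the `./tools` path are assumptions, not the author's prompt.

```python
from typing import Iterable


def build_tool_creation_prompt(tool_names: Iterable[str], tools_dir: str = "./tools") -> str:
    """Assemble a system prompt listing current tools and when to create new ones."""
    listed = "\n".join(f"- {name}" for name in sorted(tool_names)) or "- (none yet)"
    return (
        "You are a general-purpose assistant.\n"
        "Tools currently available:\n"
        f"{listed}\n\n"
        "Before answering, decide whether one of these tools can handle the request.\n"
        "If none can, write a new Python tool file to "
        f"{tools_dir}/<tool_name>.py, wait for it to load, then call it.\n"
        "Reuse an existing tool whenever possible instead of creating a duplicate."
    )


print(build_tool_creation_prompt(["shell", "word_count"]))
```

Regenerating the prompt after each new tool keeps the list accurate, which reduces the chance of the agent creating near-duplicate tools.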

The agent code below takes care of dynamically creating, reloading, and invoking tools. The shell tool is required to create files in the tools directory, so it is added to the tools list.
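Strands handles the loading step for you; the sketch below only illustrates the underlying idea of "write a file, rescan the directory, call the new function" using the standard library's `importlib`. It is an approximation under stated assumptions, not how Strands implements `load_tools_from_directory`, and writing the file with `Path.write_text` stands in for the agent calling its shell tool.

```python
import importlib.util
import inspect
import tempfile
from pathlib import Path
from typing import Callable, Dict


def load_tools(tools_dir: Path) -> Dict[str, Callable]:
    """Import every .py file in tools_dir and collect its public functions.

    Modules are re-imported on every call, so a tool file written since
    the last call is picked up without restarting the process.
    """
    tools: Dict[str, Callable] = {}
    for path in sorted(tools_dir.glob("*.py")):
        spec = importlib.util.spec_from_file_location(f"dyn_tool_{path.stem}", path)
        module = importlib.util.module_from_spec(spec)
        spec.loader.exec_module(module)  # runs the (possibly generated) code
        for name, fn in inspect.getmembers(module, inspect.isfunction):
            # Skip private helpers and functions imported from elsewhere.
            if not name.startswith("_") and fn.__module__ == module.__name__:
                tools[name] = fn
    return tools


with tempfile.TemporaryDirectory() as tmp:
    tools_dir = Path(tmp)
    print(sorted(load_tools(tools_dir)))  # empty toolset to begin with

    # Stand-in for the agent using its shell tool to write a new tool file.
    (tools_dir / "text_tools.py").write_text(
        "def word_count(text):\n"
        "    # Count whitespace-separated words.\n"
        "    return len(text.split())\n"
    )

    tools = load_tools(tools_dir)  # the new file is discovered on reload
    print(sorted(tools), tools["word_count"]("self improving agents"))
```

Because `exec_module` runs whatever is in the file, this is exactly the point where the permission and sandboxing concerns from the solution section apply.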

Note: The source code for the Pattern-1 example is available here

4. [Pattern-2]: Self-improving agent with dynamic system prompt

4.1. Problem

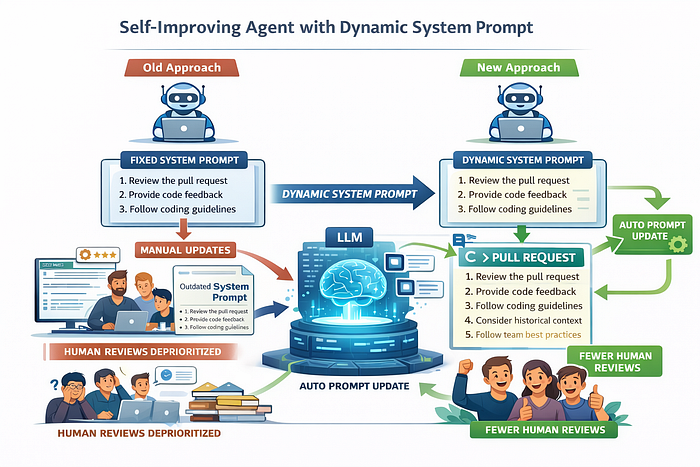

System prompts are generally static: they are defined when an agent is created and typically remain unchanged. There are good reasons for this; modifying a system prompt requires extensive testing and validation to ensure the agent doesn't behave in unexpected or unsafe ways.

However, there are scenarios where evolving the system prompt is necessary to improve response quality. Consider a code review agent that reviews pull requests and provides feedback as part of the PR workflow. For most PRs, the agent performs well. But for particularly complex code changes, human reviewers are still required to step in and provide deeper, more nuanced feedback.

A senior engineer's review often goes beyond the code itself, incorporating historical context, team-specific coding guidelines, architectural intent, and established best practices. Ideally, we want the agent to learn from this feedback and reduce the need for repeated human reviews. In reality, teams are busy, and manually updating the system prompt to reflect new insights often gets deprioritized, causing the prompt to drift out of sync with current expectations.

4.2. Solution

This is where a dynamic system prompt becomes powerful. Instead of manually editing the system prompt, we can evolve it automatically. When a PR is merged, an LLM can analyze the review comments and update the relevant sections of the system prompt so the agent produces higher-quality feedback in the future.
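The "update the relevant sections" step can be kept deterministic even when an LLM decides what to add. In this minimal sketch, assume the LLM has already distilled the merged PR's review comments into short guideline strings; the hypothetical `update_section` helper merges them under a named `## ` heading in the prompt, creating the section if needed and skipping case-insensitive duplicates.

```python
from typing import Iterable


def update_section(prompt: str, heading: str, new_items: Iterable[str]) -> str:
    """Append bullets under '## heading' (creating it if absent), skipping duplicates."""
    lines = prompt.rstrip("\n").split("\n")
    marker = f"## {heading}"
    if marker not in lines:
        lines += ["", marker]
    start = lines.index(marker) + 1
    end = start
    # The section runs until the next '## ' heading or the end of the prompt.
    while end < len(lines) and not lines[end].startswith("## "):
        end += 1
    seen = {line[2:].strip().lower() for line in lines[start:end] if line.startswith("- ")}
    additions = []
    for item in new_items:
        key = item.strip().lower()
        if key and key not in seen:  # case-insensitive de-duplication
            additions.append(f"- {item.strip()}")
            seen.add(key)
    # Insert after the section's last non-blank line to keep spacing tidy.
    insert_at = end
    while insert_at > start and not lines[insert_at - 1].strip():
        insert_at -= 1
    lines[insert_at:insert_at] = additions
    return "\n".join(lines) + "\n"


prompt = (
    "You are a code review assistant.\n\n"
    "## Review guidelines\n- Flag missing unit tests.\n\n"
    "## Output format\n- Use bullet points.\n"
)
# Hypothetical guidelines an LLM might distill from a merged PR's review comments.
learned = ["flag missing unit tests.", "Prefer dependency injection over global singletons."]
print(update_section(prompt, "Review guidelines", learned))
```

Limiting the LLM to proposing items, while plain code performs the edit, keeps the rest of the prompt untouched and makes each update easy to diff in the PR workflow.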

This approach allows the agent to continuously improve while minimizing human intervention, freeing up engineers to focus on higher-impact work rather than prompt maintenance.
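Automatic updates still deserve the validation that made static prompts attractive in the first place. One hedged way to keep humans out of the routine path is a gate that adopts a candidate prompt only if cheap structural checks pass and otherwise keeps the current prompt. The check names and the 4000-character budget below are hypothetical, and such checks complement, rather than replace, behavioral tests such as replaying past PRs against the new prompt.

```python
from typing import Callable, List, Tuple

# A check inspects a candidate prompt and returns (passed, reason).
Check = Callable[[str], Tuple[bool, str]]


def require_sections(*headings: str) -> Check:
    """Fail if an update dropped any of the named '## ' sections."""
    def check(prompt: str) -> Tuple[bool, str]:
        missing = [h for h in headings if f"## {h}" not in prompt]
        return (not missing, f"missing sections: {missing}" if missing else "ok")
    return check


def max_chars(limit: int) -> Check:
    """Fail if the prompt has grown beyond a size budget."""
    def check(prompt: str) -> Tuple[bool, str]:
        return (len(prompt) <= limit, f"{len(prompt)} chars (limit {limit})")
    return check


def gate_prompt_update(current: str, candidate: str, checks: List[Check]) -> Tuple[str, List[str]]:
    """Adopt the candidate only if every check passes; otherwise keep the current prompt."""
    failures = []
    for check in checks:
        passed, reason = check(candidate)
        if not passed:
            failures.append(reason)
    return (current if failures else candidate), failures


checks = [require_sections("Review guidelines", "Output format"), max_chars(4000)]
current = "## Review guidelines\n- Flag missing tests.\n\n## Output format\n- Use bullet points.\n"
broken = "## Review guidelines\n- Flag missing tests.\n"  # an update that dropped a section
prompt, failures = gate_prompt_update(current, broken, checks)
print(prompt == current, failures)
```

Failed updates can be surfaced as a comment on the PR, so a human only gets involved when the automation is unsure.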

Note: This pattern is not limited to code review use cases. System prompts can be stored in files or environment variables, and any meaningful change can trigger the same dynamic update mechanism. You can even instruct the agent, within the system prompt itself, on how and when it should evolve its own instructions, for example:

You will modify your system prompt in every turn to improve the response

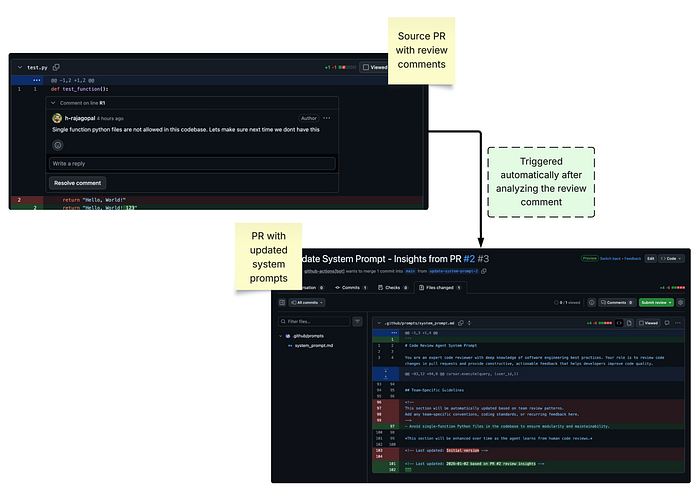

quality.Here is the screenshot of end-to-end application analyzing the PR comments and updating the system prompt:

Note: The source code for the GitHub Action used in this example is available here
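If the agent is allowed to rewrite its own prompt every turn, as in the instruction above, the prompt needs a home and a size limit. The sketch below is one hypothetical arrangement, not the author's implementation: the prompt lives in a file, a non-empty environment variable (here assumed to be named `AGENT_SYSTEM_PROMPT`) pins an override for quick rollback, and self-written notes are capped so the prompt cannot grow without bound.

```python
import os
import tempfile
from collections import deque
from pathlib import Path

DEFAULT_PROMPT = "You are a helpful assistant."
NOTES_HEADER = "## Learned notes"


class PromptStore:
    """File-backed system prompt whose self-written notes are capped in number.

    Hypothetical conventions: a non-empty environment variable pins the prompt
    (useful for rolling back a bad self-edit); otherwise the file is used,
    falling back to DEFAULT_PROMPT when the file does not exist yet.
    """

    def __init__(self, path: Path, env_var: str = "AGENT_SYSTEM_PROMPT", max_notes: int = 5):
        self.path = path
        self.env_var = env_var
        self.max_notes = max_notes

    def _load_base(self) -> str:
        return self.path.read_text() if self.path.exists() else DEFAULT_PROMPT

    def load(self) -> str:
        return os.environ.get(self.env_var) or self._load_base()

    def record_note(self, note: str) -> str:
        """Append one note, keeping only the newest max_notes so the prompt stays bounded."""
        core, _, tail = self._load_base().partition(NOTES_HEADER)
        notes = deque(
            (line[2:] for line in tail.splitlines() if line.startswith("- ")),
            maxlen=self.max_notes,
        )
        notes.append(" ".join(note.split()))  # collapse newlines so one note stays one bullet
        updated = core.rstrip() + f"\n\n{NOTES_HEADER}\n" + "".join(f"- {n}\n" for n in notes)
        tmp = self.path.with_suffix(".tmp")
        tmp.write_text(updated)
        os.replace(tmp, self.path)  # swap in the new file in one step
        return updated


with tempfile.TemporaryDirectory() as d:
    store = PromptStore(Path(d) / "system_prompt.md", max_notes=2)
    for note in ["Prefer short answers.", "Cite file paths.", "Ask before large refactors."]:
        store.record_note(note)
    print(store.load())
```

The `deque(maxlen=...)` drops the oldest note first; a more careful design might instead ask the LLM to consolidate old notes before they fall off.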

5. Conclusion

LLMs and agentic frameworks make it possible to build powerful applications with minimal code and without deep ML expertise. Instead of relying on slow human feedback loops or costly fine-tuning, patterns like dynamic tooling and dynamic system prompts allow agents to continuously improve in real time.

Frameworks like Strands make these ideas practical by reducing boilerplate and integrating seamlessly with AWS services. By focusing on evolving prompts and tools rather than retraining models, we can build agents that learn from usage, deliver higher-quality responses, and scale effectively without constant human intervention.