If you walk into most engineering organizations today and follow the "hot path" of a production request, you'll eventually notice something strange. Every component, no matter how unrelated to messaging it looks, behaves like a queue under load. Not intentionally. Not explicitly. But functionally.

It's queueing all the way down.

We keep telling ourselves we're building request-reply services, RESTful APIs, neat RPC layers, and tightly bounded microservices. But the moment production traffic arrives, the real system reveals itself: everything is buffering, batching, retrying, or deferring something.

You may not design a distributed queue, but the system will build one for you anyway.

This is the architectural tension of the modern web.

Even API Gateways Behave Like Queues

Engineers love to imagine API gateways as simple routers: take the request, forward the request, log the request. But that story only holds under ideal traffic patterns.

The moment real-world traffic hits (traffic that surges, stalls, retries, spikes, and fans out), API gateways turn into workload regulators. They hold back overflow. They absorb bursts. They retry calls to upstream services. They coalesce requests. They maintain internal buffers to avoid overwhelming the services behind them.

Nobody calls that "queueing," but that's exactly what it is.

In many organizations, the API gateway is the biggest queue in the system. Not because it was designed that way, but because it had no choice.

ORMs Quietly Buffer Underneath "Simple" Queries

Developers think of ORMs as a high-level abstraction over SQL. But ORMs are also:

- batching writes

- caching intermediate rows

- prefetching relations

- deferring flushes

- retrying transactions

- staging inserts until the transaction commits

A modern ORM typically sits on top of several in-memory queues: result batching queues, connection pool queues, deferred flush queues, and transaction retry loops.

Under pressure, these queues amplify latency instead of signaling backpressure.

A database query you thought was "just a query" can suddenly sit behind a dozen layers of hidden buffering — most of which your team never instrumented.

A database client library is a queue with SQL syntactic sugar on top.

Analytics Pipelines Are Built Entirely on Hidden Buffers

Analytics teams love to talk about streaming: real-time dashboards, live event ingestion, sub-second insights. But the reality is that analytics systems are a matryoshka doll of buffers: Kafka topics, consumer lag, snapshotting, query engines pulling segments into memory, compaction queues, aggregation windows.

Even "real-time" analytics is eventually buffered analytics.

Your event is rarely processed when you think it is. It's usually processed whenever the nearest buffer drains enough to let it through.

If you've ever seen an analytics dashboard "catch up" after a spike, you've seen a queue reconnecting with reality.
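That "catch up" period can be estimated with back-of-envelope arithmetic. The following is a minimal sketch, assuming constant arrival and drain rates; the function names and the example rates are hypothetical and only illustrate the shape of the calculation.

```python
def backlog_after_spike(spike_rate: float, drain_rate: float, spike_seconds: float) -> float:
    """Events left sitting in the buffer when the spike ends."""
    return max(0.0, float((spike_rate - drain_rate) * spike_seconds))


def catch_up_seconds(backlog: float, normal_rate: float, drain_rate: float) -> float:
    """Time for the buffer to empty once traffic returns to its normal rate."""
    spare_capacity = drain_rate - normal_rate
    if spare_capacity <= 0:
        return float("inf")  # no headroom: the consumer never catches up
    return backlog / spare_capacity


# Assumed numbers: a 60-second spike at 15,000 events/s against a consumer
# that drains 10,000 events/s, followed by normal traffic of 8,000 events/s.
backlog = backlog_after_spike(spike_rate=15_000, drain_rate=10_000, spike_seconds=60)
catch_up = catch_up_seconds(backlog, normal_rate=8_000, drain_rate=10_000)
print(backlog)   # 300000.0
print(catch_up)  # 150.0
```

Under these assumed rates, a one-minute spike leaves the dashboard two and a half minutes behind, because the consumer only has 2,000 events/s of spare capacity to work off the backlog.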

Retries Create Phantom Queues Everywhere

A retry looks harmless. One line of code. A simple idea:

"Try again if it fails."

But retries produce implicit queues. When retries align across services, usually after a brief glitch, they multiply load, amplify congestion, and duplicate work that was already in flight. Retries turn a transient slowdown in one dependency into a systemwide slowdown.

The retry mechanism is a global, invisible buffer that most organizations don't model until it's too late.

It's the hidden distributed queue nobody accounts for, but everyone pays for.
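The size of that invisible buffer can be bounded with simple arithmetic. This is a minimal sketch, assuming each layer in a call chain retries independently and that a failure at the bottom fails every attempt above it; the function name and the three-layer example are illustrative, not taken from any particular system.

```python
from typing import Sequence


def worst_case_attempts(retries_per_layer: Sequence[int]) -> int:
    """Upper bound on calls reaching the bottom service for one user request.

    Each layer makes 1 original attempt plus its retries, and every one of
    those attempts triggers the full attempt count of the layer below it.
    """
    attempts = 1
    for retries in retries_per_layer:
        attempts *= 1 + retries
    return attempts


# Assumed chain: gateway -> service -> database client, 3 retries at each layer.
print(worst_case_attempts([3, 3, 3]))  # 64
print(worst_case_attempts([3, 0, 0]))  # 4
```

Retrying at only one layer keeps the multiplier linear (4x in this example), while retrying at every layer makes it multiplicative (64x).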

Connection Pools Are Queues Wearing a Fake Mustache

Developers talk about connection pools as if they're simple resource limiters. In practice, they are wait queues for work: requests waiting for an available slot to talk to a database, cache, or downstream service.

If the pool is exhausted, requests wait.

If waiting takes too long, timeouts trigger.

If too many timeouts occur, the caller retries, which adds more requests to the queue.

Connection pools create second-order queueing effects that ripple through microservices.

A connection pool is a queue pretending to be an optimization.
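One way to make that queue visible is to count the callers waiting for a slot. The sketch below is a toy model, not any real driver's pool: `InstrumentedPool`, `PoolTimeout`, and the `waiting` and `timeouts` counters are hypothetical names, and the semaphore stands in for real connections. Note that a semaphore does not guarantee first-in, first-out wake-up order.

```python
import threading
from contextlib import contextmanager


class PoolTimeout(Exception):
    """Raised when no connection slot frees up within the timeout."""


class InstrumentedPool:
    def __init__(self, size: int, acquire_timeout: float):
        self._slots = threading.BoundedSemaphore(size)
        self._timeout = acquire_timeout
        self._lock = threading.Lock()
        self.waiting = 0   # callers currently queued for a slot
        self.timeouts = 0  # callers that gave up waiting

    @contextmanager
    def connection(self):
        with self._lock:
            self.waiting += 1
        try:
            # This blocking call is the hidden queue: callers wait here.
            acquired = self._slots.acquire(timeout=self._timeout)
        finally:
            with self._lock:
                self.waiting -= 1
        if not acquired:
            with self._lock:
                self.timeouts += 1
            raise PoolTimeout("pool exhausted")
        try:
            yield  # a real pool would hand out a connection object here
        finally:
            self._slots.release()


pool = InstrumentedPool(size=1, acquire_timeout=0.05)
with pool.connection():
    try:
        with pool.connection():  # the only slot is taken, so this waits and gives up
            pass
    except PoolTimeout:
        print("timed out; total timeouts:", pool.timeouts)
```

Exporting `waiting` and `timeouts` as metrics turns the pool from a silent buffer into one whose depth can be graphed and alerted on.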

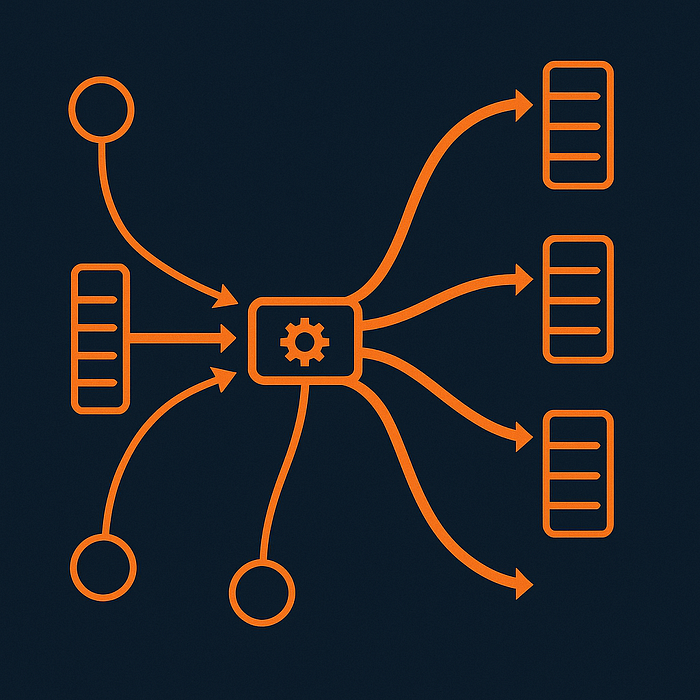

Distributed Systems Turn Everything Into a Queue Because the Network Forces Them To

Whenever your service calls another service, three forms of queueing appear automatically:

- Kernel-level network buffers

- Runtime-level scheduling queues (goroutine run queues, event loops, thread pools)

- Application-level request queues

Even if your service has no explicit queue in the code, it is still participating in multiple queueing systems that run beneath the language and the OS.

The network does not speak in crisp request/reply cycles. It speaks in partial deliveries, congestion windows, RTT variance, and retry storms. That language is fundamentally queue-shaped.

The further from the monolith you drift, the more the system behaves like a message pipeline.

You may want RPC, but you get queueing.

The Real Problem: Queueing Without Intent

Queueing is not bad. Hidden queueing is bad.

Explicit queues tell you their depth, their lag, their throughput. You can measure them. You can apply backpressure. You can see them filling.

Implicit queues — inside the gateway, inside the ORM, inside kernel buffers, inside retry loops — give you no such visibility. They fill silently. They collapse loudly.
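For contrast, here is roughly what an intentional queue looks like. This is a minimal sketch built on Python's standard `queue.Queue`; the `ObservableQueue` wrapper and its method names are hypothetical. It is bounded, it refuses work when full instead of growing silently, and it reports how long each item waited.

```python
import queue
import time


class ObservableQueue:
    """A bounded queue that reports its depth and per-item wait time."""

    def __init__(self, maxsize: int):
        self._q = queue.Queue(maxsize=maxsize)

    def offer(self, item) -> bool:
        """Enqueue without blocking; False tells the caller to shed or slow down."""
        try:
            self._q.put_nowait((time.monotonic(), item))
            return True
        except queue.Full:
            return False

    def take(self):
        """Dequeue the oldest item and return (item, seconds_it_waited).

        Raises queue.Empty if nothing is waiting.
        """
        enqueued_at, item = self._q.get_nowait()
        return item, time.monotonic() - enqueued_at

    def depth(self) -> int:
        return self._q.qsize()


q = ObservableQueue(maxsize=2)
print(q.offer("a"), q.offer("b"), q.offer("c"))  # True True False
print(q.depth())                                  # 2
item, waited = q.take()
print(item)                                       # a
```

The `False` returned by `offer` is the backpressure signal: the producer learns immediately that the buffer is full, rather than discovering it later as a timeout.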

Many outages in distributed systems aren't caused by a queue that was designed poorly.

They're caused by queues that nobody realized they created.

The Senior Engineer's Mindset: Assume Everything Buffers

Veteran engineers learn to visualize systems not as flows, but as reservoirs:

- Where does work sit when it can't move forward?

- What happens if upstream slows by 5%?

- What is the retry multiplier during an outage?

- Where does the system store "temporary" work?

- Which components hide backpressure behind helpful abstractions?
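The 5% question has a surprisingly sharp answer near saturation. As a rough illustration, the sketch below uses the textbook M/M/1 model (a single server with random arrivals and random service times), which is an idealizing assumption and not a description of any real service; the rates are made-up numbers.

```python
def mean_time_in_system(arrival_rate: float, service_rate: float) -> float:
    """Mean seconds a request spends waiting plus being served in an M/M/1 queue."""
    if arrival_rate >= service_rate:
        return float("inf")  # work arrives faster than it drains: the queue grows without bound
    return 1.0 / (service_rate - arrival_rate)


# Assumed load: 900 requests/s arriving at a dependency that can serve 1,000/s.
healthy = mean_time_in_system(arrival_rate=900, service_rate=1000)
# The same dependency after a 5% slowdown: capacity drops to 950/s.
slowed = mean_time_in_system(arrival_rate=900, service_rate=950)

print(round(healthy * 1000, 3))  # 10.0 (milliseconds)
print(round(slowed * 1000, 3))   # 20.0 (milliseconds)
```

In this model, a 5% loss of capacity at 90% utilization doubles mean latency, and a 10% loss makes the queue grow without bound. That is why small slowdowns in a dependency can look like sudden outages to the services waiting on it.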

The moment you realize your system is an interconnected network of explicit and implicit queues, latency patterns begin to make sense. Retry storms make sense. Tail latency spikes make sense. Traffic hotspots make sense. "Random" slowdowns stop looking random.

Everything becomes explainable.

Closing Perspective

The dirty secret of modern software architecture is that scaling turned everything into a buffer, and nobody updated the mental models. Services don't fail because they're too slow. They fail because their implicit queues fill faster than they can drain.

When you hear engineers say "we didn't expect that amount of traffic," what they really mean is: "We didn't know where the queues were."

The more distributed the system, the more true this becomes.

You can't stop systems from becoming distributed queues. But you can make sure they are intentional queues instead of accidental ones.

That's the difference between a system that scales and a system that breaks.