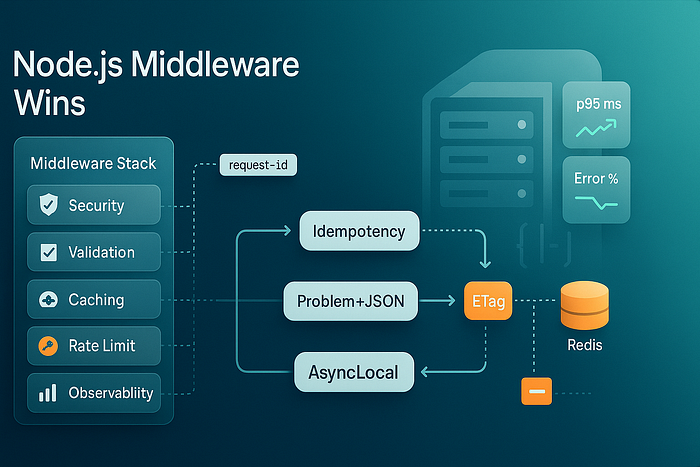

Eight Node.js middleware patterns — request IDs, typed validation, smart caching, rate limits, idempotency, and more — to simplify backends and reduce bugs.

You can't out-abstract messy request flows. But you can tame them.

These are the eight middleware tricks that took my Node.js services from "spaghetti with logs" to boring, dependable backends. They're small changes with outsized impact — easy to adopt whether you use Express or Fastify.

1) Correlate Everything with AsyncLocalStorage

Add a request ID once, use it everywhere — logs, metrics, downstream headers — without passing it through function arguments.

    // request-context.js
    import { AsyncLocalStorage } from 'node:async_hooks';
    import { randomUUID } from 'node:crypto';

    export const ctx = new AsyncLocalStorage();

    export function withRequestContext(req, res, next) {
      // Reuse the caller's ID when present so traces span services
      const requestId = req.get('x-request-id') || randomUUID();
      res.set('x-request-id', requestId);
      // Everything triggered from next() can read this store
      ctx.run({ requestId, start: Date.now() }, () => next());
    }

    // logger.js: usage in any file (no param threading!)
    import { ctx } from './request-context.js';

    export function log(msg, extra = {}) {
      const c = ctx.getStore() || {};
      console.log(JSON.stringify({ msg, rid: c.requestId, ...extra }));
    }

Why it simplifies: No more plumbing IDs through seven layers "just for logging." Everything is auto-correlated and searchable.
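The same store can feed outbound calls, which covers the "downstream headers" part. A minimal sketch, assuming a hypothetical `outboundHeaders` helper (not part of the code above) that copies the current request ID into the headers of a downstream request. The local `ctx` stands in for the one exported from `request-context.js`:

```javascript
import { AsyncLocalStorage } from 'node:async_hooks';

// Stand-in for the `ctx` exported by request-context.js
const ctx = new AsyncLocalStorage();

// Hypothetical helper: merge the current request ID into outbound headers.
// Outside a request (no store) it returns a plain copy of the headers.
function outboundHeaders(headers = {}) {
  const store = ctx.getStore();
  return store ? { ...headers, 'x-request-id': store.requestId } : { ...headers };
}

// Simulate one request: the store is still visible after an await
async function handler() {
  await new Promise((resolve) => setTimeout(resolve, 5));
  return outboundHeaders({ accept: 'application/json' });
}

ctx.run({ requestId: 'req-42' }, () => handler()).then((h) => console.log(h));
```

The logged headers should carry `x-request-id: req-42` even though `handler` never received the ID as an argument.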

2) Type-Safe Validation at the Edge (Zod + Inference)

Validate as middleware and ship typed data to handlers so your business logic never sees junk.

    import { z } from 'zod';

    const CreateUser = z.object({
      email: z.string().email(),
      plan: z.enum(['free', 'pro']).default('free'),
      tags: z.array(z.string()).max(5).optional()
    });

    export function validate(schema) {
      return (req, res, next) => {
        // Later spreads win: params override query, which overrides body
        const parsed = schema.safeParse({ ...req.body, ...req.query, ...req.params });
        if (!parsed.success) return res.status(400).json({ error: parsed.error.flatten() });
        req.valid = parsed.data; // parsed payload with defaults applied
        next();
      };
    }

    // route
    app.post('/users', validate(CreateUser), async (req, res) => {
      /** @type {z.infer<typeof CreateUser>} */
      const data = req.valid;
      // ... create user safely
      res.status(201).json({ ok: true });
    });

Why it simplifies: All routes share the same validation story; handlers are clean and autocompletion-friendly.
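Merging body, query, and params into one object means a query-string key can silently overwrite a body field with the same name. One way around that, sketched here, is to validate each source against its own schema. `validateParts` is a hypothetical name, and `fakeSchema` is only a stand-in exposing the `safeParse` shape that Zod schemas have, so the snippet runs without dependencies:

```javascript
// Stand-in for a Zod schema: anything with safeParse(input) -> { success, data | error }
const fakeSchema = (check) => ({
  safeParse: (input) =>
    check(input) ? { success: true, data: input } : { success: false, error: 'invalid' },
});

// Hypothetical variant of validate(): one schema per request part
function validateParts(schemas) {
  return (req, res, next) => {
    const valid = {};
    for (const part of Object.keys(schemas)) {
      const parsed = schemas[part].safeParse(req[part] ?? {});
      if (!parsed.success) {
        return res.status(400).json({ error: { part, issues: parsed.error } });
      }
      valid[part] = parsed.data;
    }
    req.valid = valid; // e.g. req.valid.body, req.valid.params
    next();
  };
}

// Exercise it with plain objects instead of a real Express req/res
const mw = validateParts({
  body: fakeSchema((b) => typeof b.email === 'string'),
  params: fakeSchema((p) => /^\d+$/.test(p.id)),
});
const res = { status(code) { this.code = code; return this; }, json(body) { this.body = body; } };
const req = { body: { email: 'a@example.com' }, params: { id: '7' } };
mw(req, res, () => console.log('valid:', req.valid));
```

With real Zod schemas in place of `fakeSchema`, the 400 response also tells the client which part of the request failed.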

3) Smart Caching: ETag, Cache-Control, and Tiny Server Cache

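Emit ETags for cacheable GET responses and add short-TTL server caching for bursty traffic. The cache below is keyed by URL only, so use it for responses that are identical for every caller.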

    import crypto from 'node:crypto';

    const serverCache = new Map(); // tiny in-memory cache; entries are overwritten, never evicted

    export function etagAndCache(ttl = 10_000) {
      // Keep the browser TTL in step with the server TTL
      const cacheControl = `public, max-age=${Math.floor(ttl / 1000)}`;
      return (req, res, next) => {
        if (req.method !== 'GET') return next(); // only cache safe reads
        const key = req.originalUrl;
        const entry = serverCache.get(key);
        if (entry && Date.now() - entry.t < ttl) {
          res.set('ETag', entry.tag).set('Cache-Control', cacheControl);
          if (req.get('If-None-Match') === entry.tag) return res.status(304).end();
          return res.json(entry.payload);
        }
        const original = res.json.bind(res);
        res.json = (payload) => {
          if (res.statusCode !== 200) return original(payload); // never cache errors
          const tag = `"${crypto.createHash('sha1').update(JSON.stringify(payload)).digest('hex')}"`;
          serverCache.set(key, { t: Date.now(), tag, payload });
          res.set('ETag', tag).set('Cache-Control', cacheControl);
          return original(payload);
        };
        next();
      };
    }

Why it simplifies: Clients stop redownloading unchanged data; your app avoids recomputing hot responses during spikes.
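The strict `===` comparison above covers the common case, but an `If-None-Match` header may also carry `*`, a comma-separated list of tags, or weak validators prefixed with `W/`. A small sketch of a more tolerant check follows. `etagMatches` is a hypothetical helper, not an Express API, and it assumes the tags themselves contain no commas:

```javascript
// Hypothetical helper: does an If-None-Match header value match our ETag?
// Uses weak comparison (the W/ prefix is ignored), which is the comparison
// conditional GET requests call for.
function etagMatches(ifNoneMatch, etag) {
  if (!ifNoneMatch || !etag) return false;
  if (ifNoneMatch.trim() === '*') return true;
  const strip = (tag) => tag.trim().replace(/^W\//, '');
  const wanted = strip(etag);
  return ifNoneMatch.split(',').some((candidate) => strip(candidate) === wanted);
}

console.log(etagMatches('"abc"', '"abc"'));          // true
console.log(etagMatches('W/"abc", "def"', '"def"')); // true
console.log(etagMatches('"abc"', '"xyz"'));          // false
```

Swapping this in for the equality check would let more revalidation requests end in a 304.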

4) Rate Limits with Sliding Window (and Friendly Errors)

Protect upstreams and give users clear feedback. Use Redis in prod; here's an in-memory version you can drop into a single-process service.

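    const buckets = new Map(); // ip -> timestamps of recent accepted hits

    export function rateLimit({ windowMs = 60_000, limit = 60 } = {}) {
      return (req, res, next) => {
        // Behind a proxy, req.ip is only the client address if 'trust proxy' is configured
        const now = Date.now(), key = req.ip;
        // Keep only hits inside the window (sliding-window log)
        const hits = (buckets.get(key) || []).filter(ts => now - ts < windowMs);
        if (hits.length >= limit) {
          buckets.set(key, hits); // rejected requests are not recorded, so the log stays bounded
          // Seconds until the oldest hit leaves the window
          res.set('Retry-After', String(Math.ceil((windowMs - (now - hits[0])) / 1000)));
          return res.status(429).json({ error: 'rate_limited', hint: 'Try again shortly.' });
        }
        hits.push(now); buckets.set(key, hits);
        next();
      };
    }

Why it simplifies: One guardrail saves you from a thousand "why is the DB melting?" postmortems.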
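The map above never forgets an IP, so a long-running process slowly accumulates keys. Here is a sketch of the same sliding-window log as a plain class, with a `prune()` step and an injectable clock so it can be tested without waiting. `SlidingWindowLimiter` and its method names are illustrative, not from a library:

```javascript
class SlidingWindowLimiter {
  constructor({ windowMs = 60_000, limit = 60, now = Date.now } = {}) {
    this.windowMs = windowMs;
    this.limit = limit;
    this.now = now;
    this.hits = new Map(); // key -> timestamps inside the window
  }

  // Returns { allowed, retryAfterSec } and records the hit when allowed
  take(key) {
    const now = this.now();
    const recent = (this.hits.get(key) || []).filter((ts) => now - ts < this.windowMs);
    if (recent.length >= this.limit) {
      this.hits.set(key, recent);
      const retryAfterSec = Math.ceil((this.windowMs - (now - recent[0])) / 1000);
      return { allowed: false, retryAfterSec };
    }
    recent.push(now);
    this.hits.set(key, recent);
    return { allowed: true, retryAfterSec: 0 };
  }

  // Drop keys whose hits have all expired; call this from a timer
  prune() {
    const now = this.now();
    for (const [key, stamps] of this.hits) {
      if (stamps.every((ts) => now - ts >= this.windowMs)) this.hits.delete(key);
    }
  }
}

// Fake clock so the example is deterministic
let t = 0;
const limiter = new SlidingWindowLimiter({ windowMs: 1000, limit: 2, now: () => t });
console.log(limiter.take('1.2.3.4').allowed); // true
console.log(limiter.take('1.2.3.4').allowed); // true
console.log(limiter.take('1.2.3.4'));         // { allowed: false, retryAfterSec: 1 }
t = 1000;
console.log(limiter.take('1.2.3.4').allowed); // true, the window has moved on
```

A middleware wrapper then only has to call `take(req.ip)` and translate the result into `next()` or a 429.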

5) Idempotency Keys for POST (Goodbye Duplicate Orders)

Double submits happen — mobile retries, browser back button, flaky networks. Make POSTs safe with an idempotency key.

    const idempotent = new Map(); // key -> { status, payload }

    export function idempotency() {
      return (req, res, next) => {
        const key = req.get('Idempotency-Key');
        if (!key) return next(); // enforce the header per route if you need it
        const hit = idempotent.get(key);
        // Replay the first response, including its status code
        if (hit) return res.status(hit.status).json(hit.payload);
        const original = res.json.bind(res);
        res.json = (payload) => {
          // Skip server errors so a failed attempt can be retried with the same key
          if (res.statusCode < 500) idempotent.set(key, { status: res.statusCode, payload });
          return original(payload);
        };
        next();
      };
    }

    // route
    app.post('/orders', idempotency(), async (req, res) => {
      // create order once; repeats return cached response
      res.status(201).json({ id: 'ord_123', status: 'created' });
    });

Why it simplifies: You stop writing "did we already do this?" logic in every handler.
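One gap in the map-based version: two requests with the same key that arrive at nearly the same time both miss the cache and both run the handler. A sketch of closing that gap by storing the in-flight promise rather than the finished payload follows. `runOnce` is a hypothetical helper name, and a real version would also expire old keys:

```javascript
const inFlight = new Map(); // idempotency key -> promise of the result

// Hypothetical helper: run `work` at most once per key and share its result
function runOnce(key, work) {
  if (inFlight.has(key)) return inFlight.get(key);
  const promise = Promise.resolve()
    .then(work)
    .catch((err) => {
      inFlight.delete(key); // a failed attempt may be retried with the same key
      throw err;
    });
  inFlight.set(key, promise);
  return promise;
}

// Two "simultaneous" submissions create a single order
let created = 0;
const createOrder = async () => ({ id: `ord_${++created}`, status: 'created' });

Promise.all([runOnce('key-1', createOrder), runOnce('key-1', createOrder)])
  .then(([a, b]) => console.log(a.id, b.id, created)); // ord_1 ord_1 1
```

Because the promise is stored before the work starts, the second caller never reaches `createOrder`.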

6) Structured Errors with RFC 9457 "Problem Details"

Normalize errors so clients (and humans) can rely on a single shape.

    export class Problem extends Error {
      constructor(status, title, detail, type = 'about:blank', extras = {}) {
        super(detail);
        Object.assign(this, { status, title, detail, type, ...extras });
      }
    }

    // Express recognizes error handlers by their four-argument signature
    export function problemHandler(err, req, res, next) {
      if (res.headersSent) return next(err); // response already started; let Express finish it
      if (!(err instanceof Problem)) console.error(err); // keep the real cause in the logs
      const p = err instanceof Problem
        ? err
        : new Problem(500, 'Internal Server Error', 'Unexpected failure');
      res.status(p.status).type('application/problem+json').json({
        type: p.type, title: p.title, status: p.status, detail: p.detail, ...p
      });
    }

    // use
    app.get('/payments/:id', async (req, res, next) => {
      try { throw new Problem(404, 'Not Found', 'Payment does not exist'); }
      catch (e) { next(e); }
    });

    app.use(problemHandler);

Why it simplifies: One error contract across your API; no more switch-cases for "which error shape is this?"
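RFC 9457 also allows extension members next to the standard fields, which is what the `extras` argument is for. A dependency-free sketch of the serialization step follows. `toProblemJson` is an illustrative name, the `errors` member is just an example extension, and the `example.com` type URL is a placeholder:

```javascript
class Problem extends Error {
  constructor(status, title, detail, type = 'about:blank', extras = {}) {
    super(detail);
    Object.assign(this, { status, title, detail, type, ...extras });
  }
}

// Illustrative helper: turn any thrown value into a problem-details body.
// Unknown errors collapse to a generic 500 so internals never leak.
function toProblemJson(err) {
  const p = err instanceof Problem
    ? err
    : new Problem(500, 'Internal Server Error', 'Unexpected failure');
  // Spreading copies own enumerable fields: the standard members and any extras.
  // `message` and `stack` are non-enumerable, so they stay out of the body.
  return { ...p };
}

const invalid = new Problem(422, 'Validation Failed', 'email is not valid',
  'https://example.com/problems/validation', { errors: [{ field: 'email' }] });

console.log(toProblemJson(invalid));
console.log(toProblemJson(new TypeError('db exploded')));
```

The second call shows why the fallback matters: the client sees a generic 500 body, not the text of the original `TypeError`.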

7) Security Defaults in One Place

Bundle the boring (and easy-to-forget) security headers and input hygiene into a single middleware.

    import helmet from 'helmet';
    import xssClean from 'xss-clean';

    export function security() {
      return [
        helmet({ contentSecurityPolicy: { useDefaults: true } }),
        xssClean(), // sanitize user input; mount after the body parser so req.body exists
        (req, res, next) => { // explicit baseline; set last, so these values win over helmet's
          res.set({
            'X-Content-Type-Options': 'nosniff',
            'Referrer-Policy': 'strict-origin-when-cross-origin',
            'Permissions-Policy': 'geolocation=(), microphone=()'
          });
          next();
        }
      ];
    }

Why it simplifies: You stop sprinkling headers across handlers and re-checking them in reviews; every response gets the same baseline. Check that xss-clean is still maintained before depending on it, and keep escaping output where it is rendered.

8) Observability Middleware: Latency, Payloads, Outcomes

Measure what matters with zero friction. Pair this with the request context from Trick #1 and you've got production-grade logs.

    // observe.js
    import { ctx } from './request-context.js';

    export function observe() {
      return (req, res, next) => {
        const start = process.hrtime.bigint();
        // Capture the ID now; 'finish' may fire outside the request's async context
        const rid = (ctx.getStore() || {}).requestId;
        res.on('finish', () => {
          const ms = Number(process.hrtime.bigint() - start) / 1e6;
          const payload = {
            rid,
            path: req.originalUrl, method: req.method,
            status: res.statusCode, ms,
            inBytes: +req.get('content-length') || 0,
            outBytes: +res.getHeader('content-length') || 0
          };
          console.log(JSON.stringify({ level: 'info', ...payload }));
          if (ms > 500) console.warn(JSON.stringify({ level: 'warn', msg: 'slow', ...payload }));
        });
        next();
      };
    }

Why it simplifies: Every request logs the same way; slow paths and big payloads become obvious without extra code.
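Once every request emits the same record, finding slow paths is a small aggregation. A sketch over records shaped like the log payload above follows. `slowestPaths` is an illustrative name, and in practice your log platform would usually do this for you:

```javascript
// Illustrative aggregation: group records by route and rank by worst latency
function slowestPaths(records, thresholdMs = 500) {
  const byPath = new Map();
  for (const { method, path, ms } of records) {
    const key = `${method} ${path}`;
    const stats = byPath.get(key) || { count: 0, slow: 0, maxMs: 0 };
    stats.count += 1;
    if (ms > thresholdMs) stats.slow += 1;
    stats.maxMs = Math.max(stats.maxMs, ms);
    byPath.set(key, stats);
  }
  // Worst offenders first
  return [...byPath.entries()]
    .map(([route, stats]) => ({ route, ...stats }))
    .sort((a, b) => b.maxMs - a.maxMs);
}

const sample = [
  { method: 'GET', path: '/pricing', ms: 12 },
  { method: 'GET', path: '/reports', ms: 840 },
  { method: 'GET', path: '/reports', ms: 95 },
];
console.log(slowestPaths(sample));
```

Here `GET /reports` comes out on top with two requests, one of them over the 500 ms threshold.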

A Minimal "Backbone" You Can Drop In

Here's how these pieces fit together in Express (swap equivalents for Fastify hooks):

    import express from 'express';
    import { withRequestContext } from './request-context.js';
    import { security } from './security.js';
    import { observe } from './observe.js';
    import { rateLimit } from './ratelimit.js';
    import { problemHandler } from './problem.js';
    import { etagAndCache } from './etag-cache.js';

    const app = express();

    app.use(express.json({ limit: '1mb' }));
    app.use(withRequestContext, ...security(), observe(), rateLimit({ limit: 120 }));

    app.get('/healthz', (req, res) => res.json({ ok: true }));
    app.get('/pricing', await etagAndCache(10_000), (req, res) => res.json({ plans: ['free','pro'] }));

    // ...other routes...

    app.use(problemHandler);
    app.listen(3000);

What you get: correlated logs, typed inputs, predictable errors, fair usage, and performance that "just works", with almost no code in your handlers.

Small Notes from the Field

- Express vs Fastify? Fastify is faster out of the box and has a nice schema-first approach; Express has a bigger ecosystem. These patterns work in both.

- Redis everywhere in prod. Use it for rate limits, idempotency, and short-TTL caches; in-memory maps are fine to prove the pattern, but they are per-process and vanish on restart.

- Keep middleware tiny. Each should do one thing. The moment it needs a README, it's probably two middlewares.
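To make the Redis note concrete, here is a rough sketch of a shared rate-limit counter. It uses a fixed-window counter, which is simpler than the sliding-window log shown earlier. The `client` is assumed to expose promise-returning `incr(key)` and `pexpire(key, ms)` methods in the style of ioredis; other clients may name them differently. `hitFixedWindow` and `fakeRedis` are hypothetical names, and the fake exists only so the snippet runs without a server:

```javascript
// Fixed-window counter: one Redis key per client per window.
// `client` is assumed to expose promise-returning incr(key) and pexpire(key, ms).
async function hitFixedWindow(client, id, { windowMs = 60_000, limit = 60, now = Date.now() } = {}) {
  const windowStart = Math.floor(now / windowMs) * windowMs;
  const key = `rl:${id}:${windowStart}`;
  const count = await client.incr(key);
  // First hit in this window: let Redis delete the key once the window ends
  if (count === 1) await client.pexpire(key, windowMs);
  return { allowed: count <= limit, remaining: Math.max(0, limit - count) };
}

// In-memory stand-in so the sketch runs without a Redis server
const fakeRedis = {
  counts: new Map(),
  async incr(key) {
    const next = (this.counts.get(key) || 0) + 1;
    this.counts.set(key, next);
    return next;
  },
  async pexpire() { return 1; },
};

(async () => {
  const opts = { windowMs: 1000, limit: 2, now: 5000 };
  console.log(await hitFixedWindow(fakeRedis, '1.2.3.4', opts)); // { allowed: true, remaining: 1 }
  console.log(await hitFixedWindow(fakeRedis, '1.2.3.4', opts)); // { allowed: true, remaining: 0 }
  console.log(await hitFixedWindow(fakeRedis, '1.2.3.4', opts)); // { allowed: false, remaining: 0 }
})();
```

The increment and the expiry are two separate calls here, so a crash between them could leave a key without a TTL; a production version would typically make the pair atomic.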

Wrap-Up

Middleware is where backends get their manners. Centralize cross-cutting concerns and your route handlers become delightfully boring — focused on the domain, not on plumbing. Start with request context, typed validation, and problem-details errors. Add caching, rate limits, and idempotency as your traffic grows. Round it off with security defaults and observability, and you'll ship faster with fewer surprises.

If this helped, drop a comment with your favorite middleware pattern — or the one that saved you from a 3 a.m. incident — and follow for more practical Node.js and backend engineering playbooks.