Boeing Associate Technical Fellow /Engineer /Scientist /Inventor /Cloud Solution Architect /Software Developer /@ Boeing Global Services

The ascent of Artificial Intelligence (AI) and Deep Learning (DL) has, in recent decades, sometimes been portrayed as a revolutionary break from traditional quantitative methods. Yet, to truly understand the mechanics of a multi-layered neural network is to encounter a modern engineering marvel built entirely upon two centuries of rigorous classical statistics.

This article aims to bridge that perceived divide, motivated by the fact that recognizing the statistical lineage of deep learning demystifies its processes, improves diagnostic skill (such as identifying overfitting or convergence issues), and ensures the rigour of its application. Far from inventing a new mathematical language, deep learning scales, accelerates, and integrates foundational statistical techniques to manage data, measure uncertainty, and ultimately, learn the complex patterns of the world. The seamless integration of classical statistical theory across the data lifecycle — from preprocessing to optimization — serves as the invisible, yet indispensable, scaffolding for modern AI.

Descriptive Statistics: Anchoring the Network

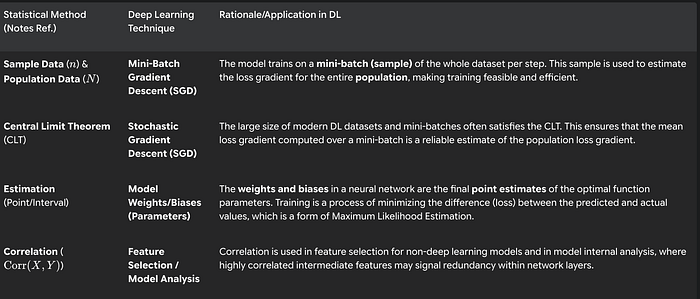

Training a deep neural network requires predictable, stable data. This need is met directly by Descriptive Statistics, which govern the initial transformation and conditioning of information before it enters the high-dimensional layers. Core measures of central tendency and dispersion, such as the Mean $(\mu)$ and Standard Deviation $(\sigma)$, are repurposed for dynamic network stabilization.

The powerful Batch Normalization technique performs a continuous Z-Score transformation within the network: the batch mean is subtracted, and the result is divided by the batch standard deviation. This data-centring speeds up convergence and dramatically stabilizes the training process. Similarly, the initial selection of the network's weights and biases is often distributed according to$\sigma$, adhering to the principle of ensuring unit variance across inputs to prevent signals from diminishing or exploding. While less dramatic, the Range is used in the basic Min-Max Normalization, which scales features like image pixel values into a uniform range, ensuring all inputs are treated equally.

Probability Distributions: Defining the Problem and Managing Uncertainty

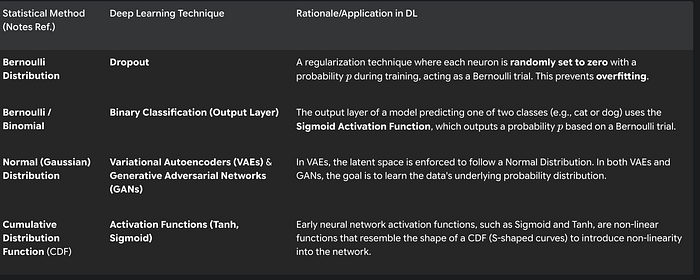

The success of a deep learning model is measured by its ability to model a target probability distribution. This places the theory of Probability Distributions directly at the heart of model design and regularization.

In classification, the output is inherently probabilistic. A Binary Classification model predicting one of two outcomes relies on activation functions like Sigmoid, which output a probability that estimates a single-trial Bernoulli Distribution. For more complex generative tasks, models like Variational Autoencoders (VAEs) explicitly enforce constraints on their latent representation space, compelling it to follow a known structure, typically a Normal (Gaussian) Distribution. The ultimate function of VAEs and GANs is to successfully learn and reproduce the underlying probability distribution of the input data, directly translating a core statistical goal into a complex AI application. Furthermore, the defensive technique against overfitting known as Dropout is a direct implementation of the Bernoulli Distribution, as it randomly sets a neuron to zero with probability $p$ during training, forcing the network to learn more robust, distributed representations.

Inferential Statistics and Sampling: Justifying the Learning Process

Finally, the entire mechanics of optimization and generalization are validated by Inferential Statistics. Deep learning is fundamentally an estimation problem: we use a finite Sample dataset to make reliable conclusions about an infinite population dataset $ (N)$.

The method of choice for nearly all deep learning tasks is Stochastic Gradient Descent (SGD), which adjusts parameters based on small batches of data. The mathematical feasibility of this approach is provided by the Central Limit Theorem (CLT). The CLT ensures that, when a sufficiently large mini-batch is used, the calculated mean loss gradient is a reliable, unbiased estimate of the population gradient. Without this statistical guarantee, training efficiency would collapse. The final result of this estimation process — the learned weights and biases — represents the network's optimized point estimates of the functional relationship between input and output, directly realizing the statistical objective of Maximum Likelihood Estimation.

In conclusion, the journey from the Gaussian curve to the deep neural network is a continuous, not fractured, history. The statistical foundations of Deep Learning ensure its rigour and explain its success. Far from being a "black box" of obscure algorithms, modern AI is a highly sophisticated, hyper-efficient machine for statistical inference. Its greatest challenge moving forward is not generating more parameters, but rather, using statistical principles — particularly around uncertainty quantification and interpretability — to ensure its results are not only accurate but also trustworthy and explainable. The future of AI is inseparable from the rigorous application of the same fundamental statistical methods that have guided scientific discovery for centuries.