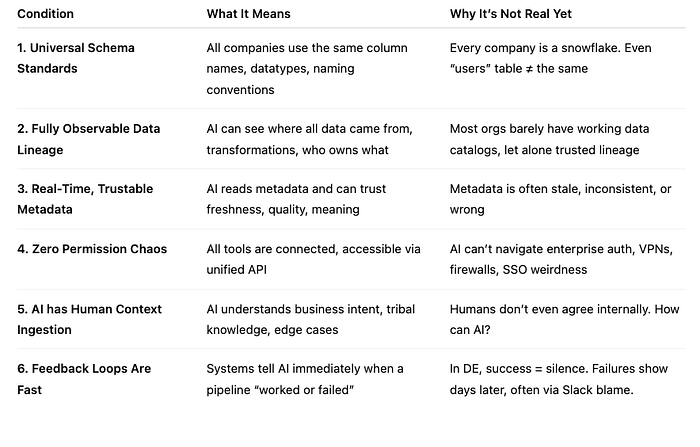

1. 🧼 Universal Schema Standards

In theory: All data looks the same — perfect, uniform, like IKEA furniture. In reality: Every company is a garage sale of chaos.

You think AI is ready to handle data from Company A's user_id, Company B's usr_id, and Company C's mysterious uid that actually means "unicorn identifier"?

Think again.

Even the AI's like:

"Wait… these are all USERS?? You guys need therapy, not SQL."
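In practice, making these all USERS usually comes down to a hand-maintained alias map. The sketch below is a minimal example. It assumes records arrive as Python dicts and that `user_id`, `usr_id`, and `uid` really do mean the same thing. The alias set and the function name are hypothetical, and deciding that `uid` isn't a unicorn is still a human call:

```python
# Hypothetical alias set: the column names each company uses for "the user".
# Someone has to confirm by hand that these really mean the same thing.
USER_ID_ALIASES = {"user_id", "usr_id", "uid"}


def normalize_user_key(record: dict) -> dict:
    """Return a copy of `record` with its user-key alias renamed to 'user_id'."""
    matches = [key for key in record if key in USER_ID_ALIASES]
    if len(matches) != 1:
        # Zero or several candidate keys: refuse to guess which one is the user.
        raise ValueError(f"expected exactly one user key, found {matches}")
    normalized = dict(record)
    normalized["user_id"] = normalized.pop(matches[0])
    return normalized


rows = [
    {"user_id": 1, "plan": "pro"},   # Company A
    {"usr_id": 2, "plan": "free"},   # Company B
    {"uid": 3, "plan": "pro"},       # Company C (assuming it's not a unicorn)
]
print([normalize_user_key(row)["user_id"] for row in rows])  # [1, 2, 3]
```

The code is trivial. The hard part is the alias set, and no model can reliably infer it from column names alone.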

2. 🕸️ Fully Observable Data Lineage

In theory: AI sees the full story of where data came from, what touched it, and where it ends up. In reality: Data lineage looks like spaghetti thrown at a whiteboard in the dark.

Data Engineer:

"Where does this number come from?"

Manager:

"Airtable > Google Sheets > exported to Excel > uploaded to S3 > loaded into BigQuery > renamed 'final_table2'."

AI:

"404: Emotional damage."
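At its core, lineage means recording what each table was built from and then walking that graph backwards. The sketch below is a minimal example that assumes each node has exactly one parent. The node names are hypothetical labels for the hops in the manager's answer, not output from any real lineage tool:

```python
# Hypothetical lineage map: each node points to the upstream node it came from.
UPSTREAM = {
    "bigquery.final_table2": "s3.export",
    "s3.export": "excel.export",
    "excel.export": "google_sheets.copy",
    "google_sheets.copy": "airtable.source",
}


def trace_lineage(node: str) -> list[str]:
    """Follow upstream links from `node` until reaching a node with no recorded parent."""
    path = [node]
    while path[-1] in UPSTREAM:
        parent = UPSTREAM[path[-1]]
        if parent in path:
            # A cycle means the recorded lineage itself is wrong.
            raise ValueError(f"lineage cycle at {parent}")
        path.append(parent)
    return path


print(" <- ".join(trace_lineage("bigquery.final_table2")))
# bigquery.final_table2 <- s3.export <- excel.export <- google_sheets.copy <- airtable.source
```

The walk only works if every hop was recorded. If one entry is missing (say, the manual Excel export nobody logged), the trace silently stops early. That is the spaghetti problem in one line.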

3. 🔍 Real-Time, Trustable Metadata

In theory: AI reads the labels and knows what's in the data. In reality: The label says "value"… and no one remembers what it means.

Some metadata hasn't been updated since Obama was in office. Some says "last updated: today" even though the data behind it hasn't changed in 2 years. AI shows up all eager and hopeful, then says:

"Sorry, I can't work under these conditions."
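Both failure modes above can at least be flagged automatically by comparing what the metadata claims with what the data shows. The sketch below is a minimal example. It assumes you can read the metadata's "last updated" timestamp and the newest row timestamp for a table. The function name and the one-year threshold are arbitrary assumptions:

```python
from datetime import datetime, timedelta


def metadata_warnings(
    claimed_last_updated: datetime,
    newest_row_at: datetime,
    now: datetime,
    max_gap: timedelta = timedelta(days=365),  # arbitrary threshold
) -> list[str]:
    """Return human-readable warnings about suspicious table metadata."""
    warnings = []
    if now - claimed_last_updated > max_gap:
        # The "hasn't been updated since Obama" case.
        warnings.append("metadata is stale")
    if claimed_last_updated - newest_row_at > max_gap:
        # The "last updated: today, unchanged for 2 years" case.
        warnings.append("metadata claims freshness the data doesn't show")
    return warnings


now = datetime(2025, 1, 1)
print(metadata_warnings(now, now - timedelta(days=730), now))
# ["metadata claims freshness the data doesn't show"]
print(metadata_warnings(datetime(2016, 12, 1), datetime(2016, 12, 1), now))
# ['metadata is stale']
```

Static lookup tables can go years without new rows for good reason, so the second warning is a prompt to ask someone, not a verdict.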

4. 🔐 Zero Permission Chaos

In theory: AI can access everything. Unified APIs. Fully open. A dream. In reality: One S3 bucket has 13 levels of permissions, 2 VPNs, and a retired IAM role still holding things hostage.

The AI tries to connect to a warehouse and hits:

- OAuth error

- MFA block

- Firewall timeout

- Slack ping from IT saying "You weren't supposed to touch that"

AI:

"I just wanted to read the orders table. Now I'm in Gitmo."

5. 🧠 AI Understands Human Context

In theory: AI knows what "churn" means to marketing, sales, finance, and your cat. In reality: Your team can't even agree whether churn means 30 days without a login, 60 days after a refund, or the moment Janet "just feels like they're gone."

AI:

"I found 4 definitions of churn in your Notion doc. Would you like me to panic now or later?"
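Two of the definitions above are easy to write down. The trouble is that they can disagree about the same customer on the same day. The sketch below is a minimal example. It assumes a customer record with hypothetical `last_login` and `refund_date` fields, and it reads "60 days after a refund" as "at least 60 days have passed since a refund":

```python
from datetime import date, timedelta

# Hypothetical customer snapshot; field names are illustrative only.
customer = {"last_login": date(2024, 3, 1), "refund_date": date(2024, 2, 20)}
as_of = date(2024, 4, 5)


def churned_by_inactivity(c: dict, as_of: date, days: int = 30) -> bool:
    """Definition 1: no login for at least `days` days."""
    return as_of - c["last_login"] >= timedelta(days=days)


def churned_after_refund(c: dict, as_of: date, days: int = 60) -> bool:
    """Definition 2: at least `days` days have passed since a refund."""
    refund = c.get("refund_date")
    return refund is not None and as_of - refund >= timedelta(days=days)


# Definition 3 (Janet's gut feeling) cannot be expressed in code.

print(churned_by_inactivity(customer, as_of))  # True  (35 days since last login)
print(churned_after_refund(customer, as_of))   # False (45 days since refund)
```

Both functions are correct as code, yet they return opposite answers. Choosing which one counts as "churn" is a business decision, and the AI can't make it for you.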

6. ⏱️ Feedback Loops Are Fast

In theory: AI makes a mistake, learns, and fixes itself. In DE? You push a pipeline on Monday. No one notices it's broken until Friday. Why? Because no one checked the dashboard until the board meeting.

Then suddenly:

"Why does our revenue chart look like it fell off a cliff!?"

And the AI's like:

"I was not trained for delayed judgment and passive-aggressive Slack threads."
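The usual fix for Monday-to-Friday feedback is unglamorous: run an automated check right after each load instead of waiting for someone to open the dashboard. The sketch below is a minimal example of one such check. It assumes daily revenue totals are available as plain numbers. The 50% threshold and the trailing-average baseline are arbitrary choices for illustration:

```python
from statistics import mean


def revenue_dropped(history: list[float], today: float, max_drop: float = 0.5) -> bool:
    """Flag if today's revenue is more than `max_drop` below the trailing average."""
    if not history:
        return False  # nothing to compare against yet
    baseline = mean(history)
    return baseline > 0 and today < baseline * (1 - max_drop)


last_week = [10_200.0, 9_800.0, 10_050.0, 9_950.0, 10_000.0]
print(revenue_dropped(last_week, 9_900.0))  # False
print(revenue_dropped(last_week, 120.0))    # True: the "fell off a cliff" case
```

A check like this would have fired on Monday. It still can't tell you why revenue dropped (a broken join, or just a bad day?), and that is where the data engineer comes back in.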

🎤 In Conclusion:

AI isn't replacing DEs, and it's not because the job is hard. It's because the job is weird.

- The tools lie

- The data gaslights

- The people disagree

- And the only sign you're doing it right is that… 📉 nothing breaks

So yeah, maybe the robots will catch up. But for now?

We build the pipelines. We fix the chaos. We make the dashboards not lie.

And when AI finally joins the team?

"Welcome to the mess, buddy. Grab a ticket. We've got some dbt tests to write."

🕰️ Why it's 5+ years away:

- Org-level chaos → Every team uses different tools, naming conventions, and logic

- No global standardization → DE is glue, and glue is unique to each company

- Low incentives to standardize → Nobody wants to pay the price to "clean it up"

- AI is great at syntax, not semantics → It can write PySpark, but it can't resolve the intent behind a flawed metric

- Feedback is non-deterministic → AI doesn't know whether it broke something, because… no one may notice until the end of the quarter