Introduction:

Pre-trained neural networks have already learned rich visual representations from millions of labeled images. Reusing them as feature extractors is a shortcut: instead of training a model from scratch, we pass our own images through an existing network and keep its internal activations as features. In this guide, we'll look at what feature extraction is, sketch the idea mathematically, and walk through a practical implementation in Python.

Understanding Feature Extraction:

Feature extraction is the process of turning raw data into compact, meaningful representations that a model can work with. In computer vision, this means converting the pixels of an image into numerical descriptors of its content. By taking these features from a pre-trained neural network, we reuse what the network learned from a large image dataset to improve performance on our own tasks.

The Power of Pre-trained Neural Networks:

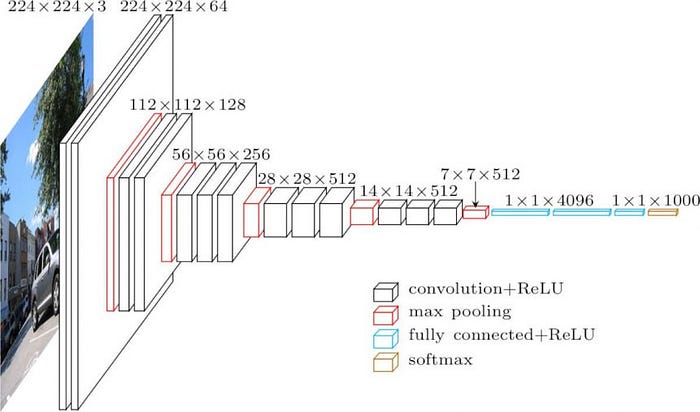

Pre-trained neural networks, such as VGG, ResNet, and Inception, have been trained on massive image datasets like ImageNet, learning to extract hierarchical features that capture rich visual semantics. These networks act as powerful feature extractors, transforming raw images into high-level representations that encode valuable information about the underlying visual content.
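To make "hierarchical" concrete, the sketch below taps a few intermediate layers of VGG16 and reports the shape of each feature map. It is a minimal illustration rather than a definitive recipe: the helper names `build_layer_extractor` and `feature_map_shapes` are assumptions made here, while the layer names (`block1_pool`, `block3_pool`, `block5_pool`) are those used by the Keras VGG16 implementation. Passing `weights="imagenet"` loads the pre-trained weights (downloaded on first use); `weights=None` builds the same architecture with random weights, which is enough to inspect shapes.

```python
import numpy as np
from tensorflow.keras import Model
from tensorflow.keras.applications import VGG16


def build_layer_extractor(layer_names, weights="imagenet"):
    """Return a model that outputs the activations of the named VGG16 layers.

    Hypothetical helper for illustration; it is not part of Keras.
    """
    base = VGG16(weights=weights, include_top=False, input_shape=(224, 224, 3))
    outputs = [base.get_layer(name).output for name in layer_names]
    return Model(inputs=base.input, outputs=outputs)


def feature_map_shapes(extractor, layer_names):
    """Run one dummy image through the extractor and collect output shapes."""
    # All-zero array standing in for a preprocessed 224x224 RGB image.
    dummy = np.zeros((1, 224, 224, 3), dtype="float32")
    activations = extractor.predict(dummy, verbose=0)
    # A single-output model may return a bare array instead of a list.
    if not isinstance(activations, (list, tuple)):
        activations = [activations]
    return {name: act.shape for name, act in zip(layer_names, activations)}
```

Calling `feature_map_shapes(build_layer_extractor(names), names)` with the three pooling layers named above shows the spatial resolution shrinking (112, then 28, then 7) while the channel depth grows (64, then 256, then 512). Earlier layers keep fine spatial detail, and later layers trade it for more abstract channels.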

Mathematical Details:

Let's look at feature extraction with pre-trained neural networks a little more formally. At its core, feature extraction means passing a raw image through the pre-trained network and reading out the activations of a chosen layer instead of the final class predictions. A network is a composition of layers, so the features are simply the output of that composition truncated at the chosen layer, with the pre-trained weights held fixed. These activations serve as the extracted features, capturing the visual semantics of the input image.
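One way to write this down is sketched below. The notation ($f_k$, $\theta_k$, $\phi_l$, $g$) is an assumption introduced here for illustration and is not taken from a specific paper or library.

```latex
% A network with L layers is a composition of layer functions f_k with parameters \theta_k
F(x) = f_L\bigl(f_{L-1}(\cdots f_1(x;\theta_1)\cdots;\theta_{L-1});\theta_L\bigr)

% Feature extractor: truncate after layer l and keep the pre-trained parameters \theta_k^{*} fixed
\phi_l(x) = f_l\bigl(f_{l-1}(\cdots f_1(x;\theta_1^{*})\cdots;\theta_{l-1}^{*});\theta_l^{*}\bigr),
\qquad 1 \le l \le L

% Optional: global average pooling of an H x W x C feature map into a C-dimensional vector
g\bigl(\phi_l(x)\bigr)_c = \frac{1}{HW}\sum_{i=1}^{H}\sum_{j=1}^{W}\phi_l(x)_{i,j,c}
```

For VGG16 with a 224×224 input and include_top=False, $\phi_l$ would be the output of the last pooling layer, with $H = W = 7$ and $C = 512$.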

Python Code Example:

Let's demonstrate how to perform feature extraction with a pre-trained neural network using Python and TensorFlow:

    import tensorflow as tf
    from tensorflow.keras.applications import VGG16
    from tensorflow.keras.preprocessing import image
    from tensorflow.keras.applications.vgg16 import preprocess_input
    import numpy as np

    # Load pre-trained VGG16 model without the fully connected layers
    model = VGG16(weights='imagenet', include_top=False)

    # Load the image and resize it to the 224x224 input size used by VGG16
    img_path = 'example_image.jpg'
    img = image.load_img(img_path, target_size=(224, 224))

    # Convert to an array and add a batch dimension: (224, 224, 3) -> (1, 224, 224, 3)
    x = image.img_to_array(img)
    x = np.expand_dims(x, axis=0)

    # Apply the same preprocessing that was used when VGG16 was trained
    x = preprocess_input(x)

    # Extract features
    features = model.predict(x)
    print(features.shape)

In this example, we load the pre-trained VGG16 model without its fully connected classification layers (include_top=False). We then load an example image, resize it to 224×224, and apply VGG16's preprocessing before passing it through the model. For a 224×224 input, the output has shape (1, 7, 7, 512): a 7×7 grid of 512-dimensional feature vectors that forms the network's high-level representation of the image.
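A common next step is to collapse the feature map into a single vector per image and compare images by similarity. For a 224×224 input, VGG16 without its top layers returns an array of shape (1, 7, 7, 512). The sketch below works with NumPy alone: the function names are hypothetical, and the random arrays merely stand in for the output of `model.predict`, so the similarity value it prints carries no meaning.

```python
import numpy as np


def global_average_pool(feature_maps):
    """Average over the spatial axes: (N, H, W, C) -> (N, C)."""
    return feature_maps.mean(axis=(1, 2))


def cosine_similarity(a, b):
    """Cosine similarity between two 1-D feature vectors."""
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    return float(np.dot(a, b) / denom) if denom > 0 else 0.0


# Stand-ins for model.predict(x) on two images (assumed shape: (1, 7, 7, 512)).
rng = np.random.default_rng(0)
features_a = rng.random((1, 7, 7, 512)).astype("float32")
features_b = rng.random((1, 7, 7, 512)).astype("float32")

# One 512-dimensional descriptor per image.
vec_a = global_average_pool(features_a)[0]
vec_b = global_average_pool(features_b)[0]

print(vec_a.shape)
print(cosine_similarity(vec_a, vec_b))
```

With real features, a higher cosine similarity would suggest that the network sees the two images as more alike. Averaging over the spatial grid is one simple pooling choice; flattening or max pooling are alternatives with different trade-offs.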

Conclusion:

Feature extraction with pre-trained neural networks is a practical way to analyze visual data without training a model from scratch. By reusing what these networks learned from large image datasets, we obtain rich representations of our own images with only a few lines of code. With the underlying idea and the implementation shown in this guide, you can start applying feature extraction to your own images.
