Introduction

Large Language Models (LLMs) have demonstrated remarkable capabilities across a wide range of tasks. Much attention has gone into optimizing user prompts tailored to specific tasks. System prompts, the task-agnostic instructions that guide model behavior, remain largely underexplored. The paper "System Prompt Optimization with Meta-Learning" by Yumin Choi, Jinheon Baek, and Sung Ju Hwang introduces an approach that optimizes these system prompts with meta-learning. Its goals are robustness across diverse user prompts and transferability to unseen tasks.

The Motivation

Optimizing system prompts presents unique challenges:

- Task-Agnostic Nature: System prompts must generalize across various tasks and domains.

- Interaction with User Prompts: They need to work well alongside many different user prompts.

- Lack of Prior Focus: Most existing research has concentrated on user prompt optimization, leaving system prompts underexplored.

Addressing these challenges calls for a method that learns from many tasks at once and transfers what it learns to new ones.

How It Works

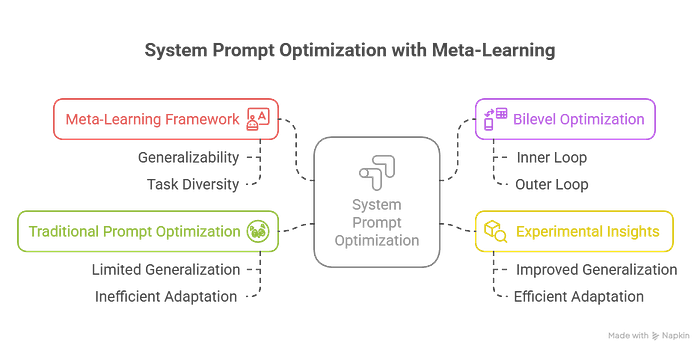

The proposed approach employs a bilevel optimization framework:

1. Meta-Learning Framework

System prompts are optimized with a meta-learning strategy. A single system prompt is trained across a variety of tasks, which pushes it toward generalizing rather than fitting any one task.

2. Bilevel Optimization

The optimization is structured in two levels:

- Inner Loop: For each task, the user prompt is optimized while the current system prompt is held fixed.

- Outer Loop: The system prompt is updated to perform well across all training tasks. It is evaluated together with the user prompts produced by the inner loop.
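The two levels can be written as one bilevel objective. This is a generic bilevel template in our own notation, not a transcription of the paper's equations. Here s is the system prompt and u_i is the user prompt for training task T_i. f_{T_i}(s, u) is the score an LLM reaches on T_i when given that prompt pair, and the expectation runs over the distribution of training tasks.

```latex
s^{*} = \arg\max_{s} \; \mathbb{E}_{T_i \sim \mathcal{T}} \Big[ f_{T_i}\big(s,\, u_i^{*}(s)\big) \Big]
\quad \text{with} \quad
u_i^{*}(s) = \arg\max_{u} \; f_{T_i}(s, u)
```

The inner arg max is the per-task user-prompt search. The outer arg max scores each candidate system prompt only through the user prompts adapted to it. Because prompts are discrete text, neither arg max can be solved by ordinary gradient descent. In practice, each is approximated by searching over candidate prompts.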

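The system prompt is always evaluated together with task-adapted user prompts. This structure therefore encourages it to capture behavior that helps across diverse tasks rather than on any single one.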
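The loop below is a minimal, runnable sketch of the bilevel structure, built on two stand-ins. A hypothetical keyword-matching `score` function replaces running an LLM on a task's data. Greedy selection from a fixed, hypothetical list of phrases (`PHRASES`) replaces the LLM-driven prompt rewriting a real system would use. `Task`, `meta_score`, `inner_loop`, and `outer_loop` are illustrative names, not the paper's code.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Task:
    name: str
    keywords: Tuple[str, ...]  # hypothetical: phrases the toy scorer rewards


# Hypothetical candidate edits; a real system would ask an LLM to propose rewrites.
PHRASES = ("state the final answer", "cite evidence", "be concise", "think step by step")


def score(system: str, user: str, task: Task) -> float:
    """Toy stand-in for LLM accuracy: share of task keywords found in the prompt pair."""
    text = f"{system} {user}".lower()
    return sum(k in text for k in task.keywords) / len(task.keywords)


def meta_score(system: str, tasks: List[Task], users: Dict[str, str]) -> float:
    """Average score of one shared system prompt across all training tasks."""
    return mean(score(system, users[t.name], t) for t in tasks)


def inner_loop(system: str, task: Task, user: str, steps: int) -> str:
    """Greedily refine one task's user prompt while the system prompt stays fixed."""
    for _ in range(steps):
        best = max((f"{user} {p.capitalize()}." for p in PHRASES),
                   key=lambda u: score(system, u, task))
        if score(system, best, task) <= score(system, user, task):
            break  # no candidate helps this task any further
        user = best
    return user


def outer_loop(tasks: List[Task], users: Dict[str, str],
               system: str = "You are a helpful assistant.",
               outer_steps: int = 3, inner_steps: int = 1) -> Tuple[str, Dict[str, str]]:
    """Alternate per-task user-prompt adaptation with a shared system-prompt update."""
    for _ in range(outer_steps):
        # Inner loop: adapt every task's user prompt to the current system prompt.
        users = {t.name: inner_loop(system, t, users[t.name], inner_steps) for t in tasks}
        # Outer loop: keep the system-prompt edit that best helps the adapted tasks on average.
        candidate = max((f"{system} {p.capitalize()}." for p in PHRASES),
                        key=lambda s: meta_score(s, tasks, users))
        if meta_score(candidate, tasks, users) > meta_score(system, tasks, users):
            system = candidate
    return system, users


tasks = [Task("math", ("step by step", "final answer")),
         Task("medqa", ("step by step", "cite evidence"))]
system, users = outer_loop(tasks, {"math": "Solve the problem.", "medqa": "Answer the question."})
print(system)  # You are a helpful assistant. Think step by step.
print(users)   # {'math': 'Solve the problem. State the final answer.', 'medqa': 'Answer the question. Cite evidence.'}
```

With a one-step inner budget, this toy splits the work cleanly. Each user prompt picks up its task-specific phrase, while the phrase every task rewards ends up in the shared system prompt. That is the division of labor the bilevel setup is meant to encourage.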

Experimental Insights

The method was evaluated on 14 unseen datasets spanning 5 different domains. Key findings include:

- Improved Generalization: The optimized system prompts performed robustly across the unseen tasks and domains.

- Efficient Adaptation: The approach required fewer optimization steps for test-time user prompts while achieving improved performance.

- Synergistic Interaction: The bilevel optimization facilitated effective synergy between system and user prompts.
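The "fewer optimization steps" finding concerns test-time adaptation. The meta-learned system prompt is frozen, and only the user prompt for a new task is optimized. The sketch below reproduces that setting in miniature under stated assumptions:

- `score` is a hypothetical keyword-matching stand-in for LLM evaluation.
- `PHRASES` is a hypothetical list of candidate edits.
- The "tuned" system prompt is hand-written to contain a behavior the unseen task rewards.

The step counts it prints are properties of this toy, not results from the paper.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Task:
    name: str
    keywords: Tuple[str, ...]  # hypothetical: phrases the toy scorer rewards


# Hypothetical candidate edits; a real system would ask an LLM to propose rewrites.
PHRASES = ("state the final answer", "cite evidence", "add unit tests",
           "be concise", "think step by step")


def score(system: str, user: str, task: Task) -> float:
    """Toy stand-in for LLM accuracy: share of task keywords found in the prompt pair."""
    text = f"{system} {user}".lower()
    return sum(k in text for k in task.keywords) / len(task.keywords)


def adapt_user_prompt(system: str, task: Task, user: str,
                      target: float = 1.0, max_steps: int = 5) -> Tuple[str, int]:
    """Test-time adaptation: only the user prompt is edited; the system prompt is frozen.

    Returns the adapted user prompt and the number of accepted edits (optimization steps).
    """
    steps = 0
    while steps < max_steps and score(system, user, task) < target:
        best = max((f"{user} {p.capitalize()}." for p in PHRASES),
                   key=lambda u: score(system, u, task))
        if score(system, best, task) <= score(system, user, task):
            break  # stuck: no single edit improves the score
        user, steps = best, steps + 1
    return user, steps


unseen = Task("code", ("step by step", "unit tests"))  # never seen during meta-training
_, generic_steps = adapt_user_prompt("You are a helpful assistant.", unseen, "Write the function.")
_, tuned_steps = adapt_user_prompt("You are a helpful assistant. Think step by step.",
                                   unseen, "Write the function.")
print(generic_steps, tuned_steps)  # 2 1
```

A system prompt that already encodes broadly useful behavior leaves less for the user-prompt search to fix. That is the intuition behind needing fewer test-time steps.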

How It Beats Traditional Prompt Optimization

Traditional prompt optimization methods often focus solely on user prompts, leading to:

- Limited Generalization: User prompts optimized for specific tasks may not perform well on others.

- Inefficient Adaptation: Each new task may require substantial re-optimization.

In contrast, this meta-learning approach offers:

- Robust System Prompts: Optimized to perform well across diverse tasks.

- Efficient User Prompt Adaptation: Requires fewer steps to adapt to new tasks.

- Enhanced Synergy: Improved interaction between system and user prompts leads to better overall performance.

Key Takeaways

- Novel Approach: Frames system prompt optimization as a bilevel optimization problem.

- Meta-Learning Framework: Encourages generalization across tasks.

- Improved Performance: Demonstrates enhanced performance on unseen datasets.

- Efficient Adaptation: Reduces the need for extensive re-optimization for new tasks.

Learn More

- 📄 Paper: arXiv:2505.09666

- 💻 Code: GitHub Repository

Follow me for more deep dives into the future of LLMs, AI agents, and efficient model training.