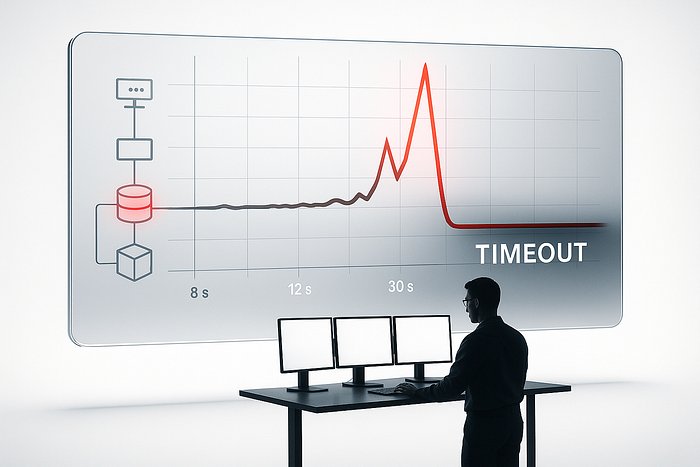

8 seconds. 12 seconds. 30 seconds. Timeout.

I watched our primary database lock up under 400,000 concurrent connections.

I was learning what happens when you scale faster than your architecture can handle.

Every shortcut we took at 100 users became a crisis at 100,000. Every "we'll fix it later" turned into "fix it now or the company dies."

Here is exactly how we screwed up and what actually saved us.

That One Python File

When we had 100 users, our entire backend was a single file called main.py.

Not joking. 4,832 lines of Python that handled everything from password resets to push notifications.

```
[nginx] --> [gunicorn with 4 workers] --> [main.py] --> [PostgreSQL]
                                              |
                                      (yes, all of it)
```

The function that killed us first looked innocent enough:

```python
def get_user_feed(user_id):
    # worked fine in dev
    posts = db.query("""
        SELECT * FROM posts p, users u, comments c
        WHERE p.user_id = u.id
        AND c.post_id = p.id
        AND u.id IN (
            SELECT followed_id FROM follows WHERE follower_id = %s
        )
    """, user_id)
    return posts
```

At 100 users: 38ms response time.

At 5,000 users: 2.4 seconds.

At 10,000 users: nginx timeout.

My junior developer fixed it in 20 minutes after I spent 6 hours trying complicated solutions:

```python
def get_user_feed(user_id):
    posts = db.query("""
        SELECT p.id, p.title, p.created_at
        FROM posts p
        JOIN follows f ON p.user_id = f.followed_id
        WHERE f.follower_id = %s
        ORDER BY p.created_at DESC
        LIMIT 25
    """, user_id)
    return posts
```

Plus this index that I should have created on day one:

```sql
CREATE INDEX idx_follows_lookup ON follows(follower_id, followed_id);
```

10,000 users: 67ms. Problem solved.

Lesson? Your database is not magic. It needs indexes like your car needs oil.
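A quick way to confirm that an index is actually being used is to ask the database for its query plan. The sketch below uses Python's built-in `sqlite3` as a stand-in for PostgreSQL, which is an assumption made for portability. On PostgreSQL you would run `EXPLAIN` or `EXPLAIN ANALYZE` instead. The `posts`/`follows` schema is a hypothetical minimal version of ours. The script prints the plan for the fixed feed query before and after `idx_follows_lookup` exists.

```python
import sqlite3

# In-memory SQLite stands in for PostgreSQL; the schema is a hypothetical
# minimal version of the posts/follows tables from the feed query.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE posts (id INTEGER PRIMARY KEY, user_id INTEGER,
                        title TEXT, created_at TEXT);
    CREATE TABLE follows (follower_id INTEGER, followed_id INTEGER);
""")

FEED_SQL = """
    SELECT p.id, p.title, p.created_at
    FROM posts p
    JOIN follows f ON p.user_id = f.followed_id
    WHERE f.follower_id = ?
    ORDER BY p.created_at DESC
    LIMIT 25
"""

def plan(sql, params):
    """Return the human-readable 'detail' column of each query-plan row."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return [row[-1] for row in rows]

before = plan(FEED_SQL, (1,))
conn.execute("CREATE INDEX idx_follows_lookup ON follows(follower_id, followed_id)")
after = plan(FEED_SQL, (1,))

print("before:", before)
print("after: ", after)
```

If the index name never shows up in the plan, the database is not using it, no matter how sensible it looked when you created it.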

Redis, My Complicated Ex

We added Redis at around 20,000 users because some blog post said it would solve everything.

Our implementation:

```python
def get_profile(user_id):
    cached = redis.get(f"user:{user_id}")
    if cached:
        return json.loads(cached)
    user = db.query("SELECT * FROM users WHERE id = %s", user_id)
    redis.set(f"user:{user_id}", json.dumps(user))
    return user
```

See the problem? No expiration.

We cached user profiles forever. A user changed their profile picture, but their ex still saw the old one for three weeks.

That was a fun support ticket.
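The missing pieces in `get_profile` were an expiry and deleting the key whenever the profile changes. Below is a minimal sketch of that cache-aside pattern, not our production code. `FakeRedis` is a hypothetical in-memory stand-in that mimics the small subset of the redis-py client used here (`get`, `set` with `ex=`, `delete`). `USERS`, `load_user`, and `save_user` are hypothetical stand-ins for the database. The 300-second TTL is an arbitrary example value.

```python
import json
import time

class FakeRedis:
    """Hypothetical in-memory stand-in for a subset of redis-py."""

    def __init__(self, clock=time.monotonic):
        self._data = {}  # key -> (value, expires_at or None)
        self._clock = clock

    def get(self, key):
        item = self._data.get(key)
        if item is None:
            return None
        value, expires_at = item
        if expires_at is not None and self._clock() >= expires_at:
            del self._data[key]  # lazily evict expired keys on read
            return None
        return value

    def set(self, key, value, ex=None):
        expires_at = self._clock() + ex if ex is not None else None
        self._data[key] = (value, expires_at)
        return True

    def delete(self, key):
        return 1 if self._data.pop(key, None) is not None else 0


PROFILE_TTL = 300  # seconds; example value, tune for your data

# Hypothetical stand-in for the users table.
USERS = {42: {"id": 42, "avatar": "old.png"}}

def load_user(user_id):
    return dict(USERS[user_id])

def save_user(cache, user):
    USERS[user["id"]] = dict(user)
    cache.delete(f"user:{user['id']}")  # invalidate on every write

def get_profile(cache, user_id):
    key = f"user:{user_id}"
    cached = cache.get(key)
    if cached is not None:
        return json.loads(cached)
    user = load_user(user_id)
    cache.set(key, json.dumps(user), ex=PROFILE_TTL)  # never cache without an expiry
    return user
```

Delete-on-write handles the normal case. The TTL is the safety net: if some code path forgets to invalidate, a stale profile lives for at most `PROFILE_TTL` seconds instead of three weeks.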

But the real disaster came when we tried to get clever:

```python
def get_trending_posts():
    # this seemed smart at midnight
    cache_key = f"trending:{date.today()}"
    cached = redis.get(cache_key)
    if cached:
        return cached
    # heavy query
    posts = calculate_trending()  # 8 second query
    redis.setex(cache_key, 3600, posts)
    return posts
```

When that cache expired at peak traffic, 2,000 concurrent requests all tried to calculate trending posts simultaneously.

The database server actually started smoking. Not metaphorically. The ops team sent me a photo.

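Fixed it with a mutex pattern:

```python
import json
from datetime import date
from random import randint
from time import sleep

def get_trending_posts():
    cache_key = f"trending:{date.today()}"
    cached = redis.get(cache_key)
    if cached:
        return json.loads(cached)
    # Only the request that wins this lock recomputes; the lock expires after 10s
    lock = redis.set(f"lock:{cache_key}", "1", nx=True, ex=10)
    if not lock:
        # Someone else is recomputing: wait briefly, then re-check the cache
        sleep(0.5)
        return get_trending_posts()
    posts = calculate_trending()
    # Store as JSON (Redis values must be strings or bytes) with a jittered TTL
    redis.setex(cache_key, 3600 + randint(0, 600), json.dumps(posts))
    return posts
```

Random expiration jitter. Stupid simple. Saved the company.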
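To see why the lock matters, here is a small, self-contained simulation of the lock-plus-jitter pattern. It is a sketch, not our production code. `FakeRedis` is a hypothetical thread-safe in-memory stand-in for the redis-py calls used (`get`, `set` with `nx=`/`ex=`, `delete`). `calculate_trending` is a fake that only counts how often it runs. The sketch also adds two things the version above leaves out: a second cache check after taking the lock, and an explicit lock release. Twenty threads hit a cold cache at the same moment, and the expensive function still runs once.

```python
import json
import random
import threading
import time

class FakeRedis:
    """Hypothetical thread-safe stand-in for a subset of redis-py."""

    def __init__(self):
        self._data = {}  # key -> (value, expires_at or None)
        self._mutex = threading.Lock()

    def _live(self, key):
        item = self._data.get(key)
        if item and (item[1] is None or time.monotonic() < item[1]):
            return item[0]
        return None

    def get(self, key):
        with self._mutex:
            return self._live(key)

    def set(self, key, value, nx=False, ex=None):
        with self._mutex:
            if nx and self._live(key) is not None:
                return None  # like redis-py: SET NX on an existing key returns None
            expires = time.monotonic() + ex if ex else None
            self._data[key] = (value, expires)
            return True

    def delete(self, key):
        with self._mutex:
            self._data.pop(key, None)

redis = FakeRedis()
compute_calls = 0

def calculate_trending():
    """Fake 'heavy query' that records how many times it runs."""
    global compute_calls
    compute_calls += 1
    time.sleep(0.2)
    return [{"id": 1}, {"id": 2}]

def get_trending_posts(key="trending:today"):
    lock_key = f"lock:{key}"
    while True:
        cached = redis.get(key)
        if cached is not None:
            return json.loads(cached)
        if redis.set(lock_key, "1", nx=True, ex=10):
            try:
                cached = redis.get(key)  # re-check: another worker may have filled it
                if cached is not None:
                    return json.loads(cached)
                posts = calculate_trending()
                ttl = 3600 + random.randint(0, 600)  # jitter spreads out expiries
                redis.set(key, json.dumps(posts), ex=ttl)
                return posts
            finally:
                redis.delete(lock_key)  # cache is written before the lock is released
        time.sleep(0.05)  # someone else is computing; wait and retry

results = []
threads = [threading.Thread(target=lambda: results.append(get_trending_posts()))
           for _ in range(20)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print("calculate_trending ran", compute_calls, "time(s)")
```

The re-check after acquiring the lock is what makes the explicit release safe. A request that missed the cache just before the winner finished will take the lock, find the fresh value, and return it instead of recomputing.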

Microservices: The Cure That Almost Killed the Patient

At 180,000 users, we took one look at main.py and nearly had a stroke. Within two months, we had split everything into containerized microservices.

Before (working):

```
[Big Ball of Mud]
        |
   [Database]
```

After (not working):

```
[auth-service]   [user-service]   [notification-service]
        \               |               /
         \              |              /
          [api-gateway]---[email-service]
           |                     |
   [order-service]       [analytics-service]
           |                     |
   [payment-service]      [search-service]
            \                   /
             \                 /
                [RDS cluster]
                      |
               [Read replicas]
```

First problem: We forgot to add health checks. The user service died, but the gateway kept sending it traffic for 15 minutes. Users thought we were hacked.
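The fix for that first problem is for the gateway to stop routing to instances that fail their health checks. Here is a minimal sketch of the idea under a few assumptions. Each instance exposes some probe, represented here as a plain callable standing in for a hypothetical HTTP `GET /health`. An instance leaves the rotation after three consecutive failed checks, which is an arbitrary threshold. Instance names are hypothetical.

```python
import itertools

class BackendPool:
    """Round-robin over instances, skipping ones that fail health checks."""

    def __init__(self, probes, max_failures=3):
        # probes: instance name -> zero-arg callable returning True if healthy
        self.probes = probes
        self.max_failures = max_failures
        self.failures = {name: 0 for name in probes}
        self._rr = itertools.cycle(sorted(probes))

    def run_health_checks(self):
        """Call periodically; counts consecutive failures per instance."""
        for name, probe in self.probes.items():
            try:
                ok = probe()
            except Exception:
                ok = False  # a probe that errors out counts as a failure
            self.failures[name] = 0 if ok else self.failures[name] + 1

    def healthy(self):
        return [n for n in sorted(self.probes) if self.failures[n] < self.max_failures]

    def pick(self):
        """Next healthy instance in round-robin order."""
        if not self.healthy():
            raise RuntimeError("no healthy instances")
        while True:
            name = next(self._rr)
            if self.failures[name] < self.max_failures:
                return name

# Simulate one of two user-service instances dying.
status = {"user-service-1": True, "user-service-2": True}
pool = BackendPool({name: (lambda n=name: status[n]) for name in status})
status["user-service-1"] = False
for _ in range(3):
    pool.run_health_checks()
print(pool.healthy())  # ['user-service-2']
```

Requiring several consecutive failures keeps one slow probe from ejecting a healthy instance. Resetting the counter on success lets a recovered instance rejoin on the next check.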

Second problem: No circuit breakers. When the email service got slow, it took down the notification service, which took down the order service, which took down everything. We called it "cascade Friday."
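A circuit breaker was the missing piece here. After enough consecutive failures, it stops calling the slow dependency for a while and fails fast, so callers do not pile up waiting on it. Below is a minimal single-threaded sketch of the classic closed/open/half-open breaker. The threshold and cooldown are arbitrary example values, and the clock is injectable only to make it testable. Libraries and service meshes provide this off the shelf, so treat this as an illustration of the mechanics.

```python
import time

class CircuitOpenError(Exception):
    """Raised instead of calling a dependency that is marked down."""

class CircuitBreaker:
    def __init__(self, failure_threshold=5, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    @property
    def state(self):
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout:
            return "half-open"  # cooldown over: let a trial call through
        return "open"

    def call(self, fn, *args, **kwargs):
        if self.state == "open":
            raise CircuitOpenError("failing fast; dependency marked down")
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.state == "half-open" or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # (re)open the circuit
            raise
        self.failures = 0
        self.opened_at = None  # any success closes the circuit
        return result
```

Wrapped around the email service (for example `email_breaker.call(send_email, msg)`, where both names are hypothetical), a slow dependency turns into fast `CircuitOpenError`s that the notification service can catch and handle, instead of a chain of blocked workers.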

Emergency band-aid that worked:

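```python
import time

import requests

def call_service(url, data, retries=3):
    for i in range(retries):
        try:
            # Short timeout so a slow service fails fast instead of hanging us
            r = requests.post(url, json=data, timeout=2)
            if r.status_code == 200:
                return r.json()
        except requests.RequestException:
            pass  # timeouts and connection errors count as a failed attempt
        if i < retries - 1:
            time.sleep(0.1 * (2 ** i))  # exponential backoff: 0.1s, 0.2s, ...
    return {"error": "service down"}
```

Not elegant. But it stopped the bleeding while we figured out a proper service mesh.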

The Stuff Nobody Tells You

After burning through three database administrators and two AWS bills that made my eyes water, here is what actually matters:

Your clever code is tomorrow's production incident. That beautiful abstraction you wrote? It is why the new engineer cannot figure out why requests randomly fail.

Database indexes are not optional. Every missing index is a future 3 AM phone call. Create them now or explain to investors later why the site is down.

Caching is not an architecture strategy. It is a band-aid. Fix your slow queries first, then add caching. Not the other way around.

Test with realistic data. Your local database with 10 users is not production with 500,000. We learned this when our "fast" query took 30 seconds on real data.
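One cheap way to get closer to production is to seed a local database with synthetic data at production-like volume and skew before timing anything. Below is a minimal sketch using Python's built-in `sqlite3` with a fixed random seed. The table shapes, the row counts, and the "a few accounts get most of the follows and posts" distribution are illustrative assumptions, not figures from our system.

```python
import random
import sqlite3
import time

def seed_database(conn, n_users=20_000, n_follows=200_000, n_posts=100_000, rng_seed=7):
    """Fill hypothetical users/follows/posts tables with skewed synthetic data."""
    rng = random.Random(rng_seed)  # fixed seed so every run is reproducible
    conn.executescript("""
        CREATE TABLE users (id INTEGER PRIMARY KEY);
        CREATE TABLE follows (follower_id INTEGER, followed_id INTEGER);
        CREATE TABLE posts (id INTEGER PRIMARY KEY, user_id INTEGER,
                            title TEXT, created_at INTEGER);
    """)
    conn.executemany("INSERT INTO users (id) VALUES (?)",
                     ((i,) for i in range(1, n_users + 1)))

    def popular_user():
        # Pareto draws are heavy-tailed: low ids become "celebrity" accounts
        return min(n_users, int(rng.paretovariate(1.2)))

    # Duplicate follow pairs are possible; acceptable for a load-testing sketch
    conn.executemany("INSERT INTO follows VALUES (?, ?)",
                     ((rng.randint(1, n_users), popular_user()) for _ in range(n_follows)))
    conn.executemany("INSERT INTO posts (user_id, title, created_at) VALUES (?, ?, ?)",
                     ((popular_user(), f"post {i}", i) for i in range(n_posts)))
    conn.commit()

def time_feed(conn, follower_id):
    """Run the feed query once and return (row_count, seconds)."""
    start = time.perf_counter()
    rows = conn.execute("""
        SELECT p.id, p.title, p.created_at
        FROM posts p JOIN follows f ON p.user_id = f.followed_id
        WHERE f.follower_id = ?
        ORDER BY p.created_at DESC LIMIT 25
    """, (follower_id,)).fetchall()
    return len(rows), time.perf_counter() - start

if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    seed_database(conn)
    print("no index:  ", time_feed(conn, 1))
    conn.execute("CREATE INDEX idx_follows_lookup ON follows(follower_id, followed_id)")
    print("with index:", time_feed(conn, 1))
```

The skew matters as much as the volume. Uniformly random test data hides the hot rows that real users create.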

Keep one foot in the old world. That legacy code you want to rewrite? It is the only thing working when your microservices mesh catches fire.

What Really Saved Us

You know what actually got us through the worst nights?

Engineers who cared more about fixing problems than blaming people.

We had a junior developer who stayed up for 32 hours straight debugging a memory leak.

A designer who learned SQL to help optimize queries. An intern who figured out our cache stampede problem before any senior engineer.

Tech stack decisions are reversible. Hiring people who give a damn is not.

We are at 1.3 million users now. Still running some of that original Python code. Still discovering indexes we should have created two years ago. Still shipping bugs on Fridays.

But now we have a team that knows how to fight fires together. That is worth more than any architecture diagram.