Hey, you all! Here is a TensorFlow implementation of the particle swarm optimization (PSO) algorithm, along with a brief explanation of how PSO works.

TL;DR: PSO implementation using TensorFlow. Link to colab.

There are plenty of resources on PSO, so I'll avoid explaining the algorithm in detail and leave here the links to the original paper and the Wikipedia article. For now, it is enough to say that PSO is a swarm-based metaheuristic optimization method. Each particle in the swarm uses its own information plus the swarm's information to search for the optimum of the function. Those pieces of information are called p-best and g-best, respectively: the best position visited by the particle itself and the best position seen by the whole swarm.

One of the advantages of using TensorFlow for PSO is that you can handle all the swarm information as tensors, so all the swarm's velocities and positions can be updated at once.

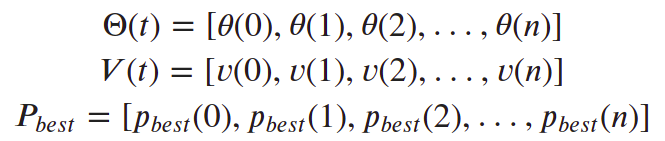

Each particle has a position (𝜃), a velocity (𝑣), and an individual best (𝑝𝑏𝑒𝑠𝑡), and the swarm has a global best (𝑔𝑏𝑒𝑠𝑡), which is the best position ever visited by any particle. Since we are using TensorFlow, we can keep this information in tensors and avoid looping over the swarm, so we'll have:

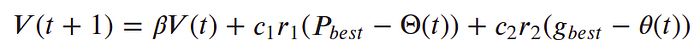
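To make the shapes concrete, here is a minimal sketch of the swarm state held as whole arrays. It uses NumPy as a lightweight stand-in for TensorFlow tensors (my substitution, not the post's code), and the sizes, the zero initial velocity, and the sum-of-squares placeholder objective are all assumptions for illustration.

```python
import numpy as np

n_particles, dim = 100, 2            # illustrative sizes (assumption)
rng = np.random.default_rng(0)

# One row per particle, one column per dimension.
theta = rng.uniform(-1.0, 1.0, size=(n_particles, dim))  # positions in [-1, 1)
v = np.zeros((n_particles, dim))     # velocities; starting at rest is an assumption
pbest = theta.copy()                 # each particle's best so far is its start point

# Placeholder objective (sum of squares, to be minimized) just to pick a gbest.
fitness = np.sum(pbest ** 2, axis=1)     # shape (n_particles,)
gbest = pbest[np.argmin(fitness)]        # shape (dim,), shared by the whole swarm
```

The per-particle quantities share the shape `(n_particles, dim)`, while `gbest` is a single row that broadcasts against them.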

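Then, we can update the whole swarm's velocities in just one operation:

$$v(t+1) = \beta\, v(t) + c_1 r_1 \left(\mathit{pbest} - \theta(t)\right) + c_2 r_2 \left(\mathit{gbest} - \theta(t)\right)$$

where 𝛽 is the inertia weight, 𝑐1 and 𝑐2 are the cognitive and social coefficients, respectively, and 𝑟1 and 𝑟2 are uniformly distributed random numbers in the range [-1,1). Then the particle position is updated as: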

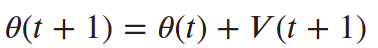
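The velocity and position updates described above can be sketched as a single array operation each. This is a NumPy illustration rather than the post's TensorFlow code; the function name `step`, the coefficient values, and the choice to draw a separate random number per particle and per dimension are assumptions. The random range follows the text; many PSO formulations draw 𝑟1 and 𝑟2 from [0, 1) instead.

```python
import numpy as np

def step(theta, v, pbest, gbest, rng, beta=0.9, c1=0.8, c2=0.5):
    """One swarm-wide update; beta, c1, c2 defaults are placeholder values."""
    # Random factors in [-1, 1), as stated in the text (assumed per particle and dimension).
    r1 = rng.uniform(-1.0, 1.0, size=theta.shape)
    r2 = rng.uniform(-1.0, 1.0, size=theta.shape)
    # Inertia + pull toward each particle's own best + pull toward the swarm's best.
    v_new = beta * v + c1 * r1 * (pbest - theta) + c2 * r2 * (gbest - theta)
    theta_new = theta + v_new
    return theta_new, v_new

rng = np.random.default_rng(0)
theta = rng.uniform(-1.0, 1.0, size=(100, 2))
v = np.zeros_like(theta)
pbest = theta.copy()
gbest = pbest[np.argmin(np.sum(pbest ** 2, axis=1))]  # placeholder objective
theta, v = step(theta, v, pbest, gbest, rng)
```

Because `gbest` has shape `(dim,)`, it broadcasts across every row, so no loop over particles is needed.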

Below, I'm putting all these steps together in the PSO algorithm, and the figure on the right helps us understand the behavior of a single particle. Notice how the resultant vector v(t+1) (in red) is composed of the three movements of the particle in a given iteration: the inertia (βv(t), a vector pointing in the same direction the particle was moving in the previous iteration), the instinctive movement (vp, a vector pointing to the pbest), and the collective movement (vg, a vector pointing to the gbest).

And here is the TensorFlow implementation of this algorithm (you can also check out the colab):

And did you notice? There is only one for loop, over the iterations. Since we are dealing with tensors, there is no need to loop over each particle in the swarm.

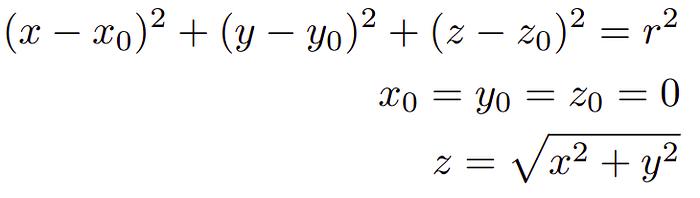
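To illustrate the single-loop structure, here is a compact sketch of the whole procedure. It is written with NumPy rather than TensorFlow and is not the post's class: the function name `pso`, its parameters and default values, and the minimization convention are assumptions. The random factors use the [-1, 1) range from the text; swap in [0, 1) for the more common formulation.

```python
import numpy as np

def pso(fitness_fn, n_particles=100, dim=2, n_iter=100,
        beta=0.9, c1=0.8, c2=0.5, x_min=-1.0, x_max=1.0, seed=0):
    """Minimize fitness_fn, which maps (n_particles, dim) -> (n_particles,)."""
    rng = np.random.default_rng(seed)
    theta = rng.uniform(x_min, x_max, size=(n_particles, dim))
    v = np.zeros_like(theta)
    pbest, pbest_fit = theta.copy(), fitness_fn(theta)
    best = np.argmin(pbest_fit)
    gbest, gbest_fit = pbest[best].copy(), pbest_fit[best]
    history = [gbest_fit]                      # best fitness after each iteration

    for _ in range(n_iter):                    # the only explicit loop
        r1 = rng.uniform(-1.0, 1.0, size=theta.shape)
        r2 = rng.uniform(-1.0, 1.0, size=theta.shape)
        v = beta * v + c1 * r1 * (pbest - theta) + c2 * r2 * (gbest - theta)
        theta = theta + v

        fit = fitness_fn(theta)
        improved = fit < pbest_fit             # boolean mask, one entry per particle
        pbest = np.where(improved[:, None], theta, pbest)
        pbest_fit = np.where(improved, fit, pbest_fit)

        best = np.argmin(pbest_fit)
        gbest, gbest_fit = pbest[best].copy(), pbest_fit[best]
        history.append(gbest_fit)
    return gbest, gbest_fit, history

# Stand-in objective: sum of squares per particle.
gbest, gbest_fit, history = pso(lambda x: np.sum(x ** 2, axis=1))
```

The personal bests are updated with a boolean mask instead of an `if` per particle, which is what keeps the loop count at one.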

Finally, let's go for an example. We can try to find the optimum of a sphere function; it is simple and helpful for visualizing the behavior of the swarm. So our fitness function will be defined as:
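One way such a fitness function could look, as a NumPy sketch (the post's own version uses TensorFlow; the name `sphere` and the row-per-particle layout are assumptions): it returns the sum of squared coordinates for every particle at once, so the minimum is 0 at the origin.

```python
import numpy as np

def sphere(x):
    """Sum of squares per row: f(x) = x_1^2 + ... + x_d^2."""
    x = np.atleast_2d(x)               # accept a single point or a whole swarm
    return np.sum(x ** 2, axis=1)      # one fitness value per particle

points = np.array([[0.0, 0.0], [1.0, -2.0], [0.5, 0.5]])
values = sphere(points)
```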

Then, we can instantiate the class defined above to find the center of this sphere:

This snippet of code creates a swarm of 100 particles uniformly distributed in [-1,1) (it builds the swarm with the default parameters of the class defined before). The optimization process can be visualized in the next animation:

Now, let's compare swarms of different sizes. Below, we have one swarm composed of only 2 particles and another composed of 100 (I'm leaving the trace in the top plot because it is hard to follow the movement of only 2 particles). Notice how hard it is for the smaller swarm to converge:

That was the so-called vanilla PSO, the basic and simplest version. It is a unimodal optimizer, which means it is suited to functions with a single optimum: since every particle is attracted to the same gbest, the swarm tends to converge to just one point. There are many different versions of PSO, and many of them propose changes to deal with this. But one straightforward solution would be multiple swarms. Below, in the left image, we have only one swarm of 100 individuals trying to find the multiple optimal points. On the right, we split this swarm into four swarms of 25 individuals, and it is easy to notice that they can find the multiple optima much more easily. Remember: that is only an example. There are better ways to deal with multimodal optimization.
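As a rough sketch of the multiple-swarm idea, the state can simply gain a leading "swarm" axis so that each sub-swarm keeps its own gbest. This is a NumPy illustration under my own assumptions, not the post's code: the sub-swarms are treated as fully independent, and `four_minima`, `multi_swarm_pso`, and all parameter values are hypothetical.

```python
import numpy as np

def four_minima(x):
    """Hypothetical test function with minima (value 0) at (+-0.5, +-0.5)."""
    return np.sum((x ** 2 - 0.25) ** 2, axis=-1)

def multi_swarm_pso(fitness_fn, n_swarms=4, n_particles=25, dim=2, n_iter=100,
                    beta=0.9, c1=0.8, c2=0.5, seed=0):
    rng = np.random.default_rng(seed)
    shape = (n_swarms, n_particles, dim)
    theta = rng.uniform(-1.0, 1.0, size=shape)
    v = np.zeros(shape)
    pbest, pbest_fit = theta.copy(), fitness_fn(theta)  # fit: (n_swarms, n_particles)
    rows = np.arange(n_swarms)

    for _ in range(n_iter):
        idx = np.argmin(pbest_fit, axis=1)              # best particle in each swarm
        gbest = pbest[rows, idx][:, None, :]            # (n_swarms, 1, dim), one per swarm
        r1 = rng.uniform(-1.0, 1.0, size=shape)
        r2 = rng.uniform(-1.0, 1.0, size=shape)
        v = beta * v + c1 * r1 * (pbest - theta) + c2 * r2 * (gbest - theta)
        theta = theta + v
        fit = fitness_fn(theta)
        improved = fit < pbest_fit
        pbest = np.where(improved[..., None], theta, pbest)
        pbest_fit = np.where(improved, fit, pbest_fit)

    idx = np.argmin(pbest_fit, axis=1)
    return pbest[rows, idx], pbest_fit[rows, idx]       # one result per swarm

best_pos, best_fit = multi_swarm_pso(four_minima)
```

Nothing in this sketch forces the sub-swarms toward different optima; each one just follows its own gbest, so two of them may still end up at the same point.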

And that is it! I hope you enjoyed the read. If you have any questions or suggestions, please contact me! Have a good one!