1. Deletion Methods

a. Listwise (Complete Case) Deletion

- How it works: Remove entire rows (cases) with any missing values.

- When to use:

Data is MCAR (Missing Completely at Random).

Missingness is minimal (a common rule of thumb is <5% of cases).

- Pros: Simple, no computational overhead.

- Cons:

Loss of sample size → reduced statistical power.

Biased results if data is not MCAR.
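A minimal sketch of listwise deletion in plain Python. It assumes each row is a dict and that missing values are stored as `None`. With pandas, `DataFrame.dropna()` performs the same filtering. The data are hypothetical.

```python
from typing import Optional

Row = dict[str, Optional[float]]


def listwise_delete(rows: list[Row]) -> list[Row]:
    """Keep only the rows in which every variable is observed (not None)."""
    return [row for row in rows if all(value is not None for value in row.values())]


# Hypothetical data: None marks a missing value.
data = [
    {"age": 34, "income": 52.0},
    {"age": None, "income": 48.0},
    {"age": 41, "income": None},
    {"age": 29, "income": 39.5},
]

complete = listwise_delete(data)  # keeps 2 of the 4 rows
```

Half the sample is lost here even though each variable is only 25% missing. This is the power cost listed under Cons.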

b. Pairwise Deletion

- How it works: Use all available data for each calculation (e.g., correlation uses all non-missing pairs).

- When to use:

Missingness is MCAR (under MAR, pairwise estimates are generally biased).

Different variables have different missing patterns.

- Pros: Retains more data than listwise deletion.

- Cons:

Sample sizes differ across analyses, and the resulting correlation or covariance matrix may not be positive definite.

Biased if data is not MCAR.
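A small sketch of pairwise deletion for a Pearson correlation. Each call uses only the rows where both variables are observed, and it returns the sample size it actually used. The column names and values are hypothetical. For comparison, pandas `DataFrame.corr()` also excludes missing values pairwise.

```python
import math
from typing import Optional


def pairwise_corr(x: list[Optional[float]], y: list[Optional[float]]) -> tuple[float, int]:
    """Pearson correlation over rows where both x and y are observed.
    Returns (r, n_used) so the analysis-specific sample size stays visible."""
    pairs = [(a, b) for a, b in zip(x, y) if a is not None and b is not None]
    n = len(pairs)
    if n < 2:
        raise ValueError("need at least two complete pairs")
    mx = sum(a for a, _ in pairs) / n
    my = sum(b for _, b in pairs) / n
    sxy = sum((a - mx) * (b - my) for a, b in pairs)
    sxx = sum((a - mx) ** 2 for a, _ in pairs)
    syy = sum((b - my) ** 2 for _, b in pairs)
    return sxy / math.sqrt(sxx * syy), n


# Hypothetical columns with different missing patterns
height = [170.0, 165.0, None, 180.0, 175.0]
weight = [68.0, None, 72.0, 85.0, 77.0]
age = [30.0, 25.0, 40.0, None, 35.0]

r_hw, n_hw = pairwise_corr(height, weight)  # uses rows 0, 3, 4
r_ha, n_ha = pairwise_corr(height, age)     # uses rows 0, 1, 4
```

The two correlations rest on different subsets of rows. This is why a matrix assembled from pairwise estimates can be internally inconsistent.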

2. Imputation Methods

a. Mean/Median/Mode Imputation

- How it works: Replace missing values with the mean (numeric), median (skewed data), or mode (categorical).

- When to use:

Small missingness in MCAR data.

Quick baseline approach.

- Pros: Simple, fast.

- Cons:

Underestimates variance.

Distorts relationships between variables (correlations are pulled toward zero).
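A minimal sketch of mean, median, and mode imputation using Python's `statistics` module. The data are hypothetical, and `None` marks a missing value. In practice, scikit-learn's `SimpleImputer` offers the same options via `strategy="mean"`, `"median"`, or `"most_frequent"`.

```python
import statistics
from typing import Any, Optional


def impute_numeric(values: list[Optional[float]], how: str = "mean") -> list[float]:
    """Replace None with the mean or median of the observed values."""
    observed = [v for v in values if v is not None]
    if how == "mean":
        fill = statistics.mean(observed)
    elif how == "median":
        fill = statistics.median(observed)  # more robust for skewed data
    else:
        raise ValueError("how must be 'mean' or 'median'")
    return [fill if v is None else v for v in values]


def impute_mode(values: list[Any]) -> list[Any]:
    """Replace None with the most common observed value (categorical data)."""
    observed = [v for v in values if v is not None]
    fill = statistics.mode(observed)  # Python 3.8+: first value seen wins a tie
    return [fill if v is None else v for v in values]


income = [30.0, 32.0, None, 35.0, 250.0]  # hypothetical, skewed by one large value
by_mean = impute_numeric(income, "mean")      # fills with 86.75
by_median = impute_numeric(income, "median")  # fills with 33.5
colors = impute_mode(["red", None, "blue", "red"])  # fills with "red"
```

The skewed example shows why the median is preferred for skewed data. Comparing the sample variance before and after filling also shows the variance shrinkage listed under Cons.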

b. Regression Imputation

- How it works: Predict missing values using linear/logistic regression on other variables.

- When to use:

Missingness is MAR (predictable from other variables).

Strong correlations exist between variables.

- Pros: More accurate than mean imputation.

- Cons:

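Overstates relationships and model fit: imputed values fall exactly on the regression line, which inflates correlations and R².

Ignores residual and parameter uncertainty, so standard errors are too small (stochastic regression imputation, which adds a random residual to each prediction, partly addresses this).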
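A sketch of regression imputation with a single predictor, assuming a simple linear model fitted by ordinary least squares on the complete cases. The `stochastic` flag switches to stochastic regression imputation, which adds a normal residual with the complete-case residual standard deviation. It does not address parameter uncertainty. The data are hypothetical.

```python
import random
from typing import Optional


def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least squares fit of y = a + b * x; returns (a, b)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    b = sxy / sxx
    return my - b * mx, b


def regression_impute(x: list[float], y: list[Optional[float]],
                      stochastic: bool = False, seed: int = 0) -> list[float]:
    """Impute missing y from a line fitted on the complete cases.

    stochastic=False puts every imputed value on the fitted line;
    stochastic=True adds a residual drawn from N(0, sd^2)."""
    xs = [xi for xi, yi in zip(x, y) if yi is not None]
    ys = [yi for yi in y if yi is not None]
    if len(xs) < 3:
        raise ValueError("need at least three complete cases")
    a, b = fit_line(xs, ys)
    # Residual standard deviation from the complete cases (n - 2 degrees of freedom)
    sse = sum((yi - (a + b * xi)) ** 2 for xi, yi in zip(xs, ys))
    sd = (sse / (len(xs) - 2)) ** 0.5
    rng = random.Random(seed)
    out = []
    for xi, yi in zip(x, y):
        if yi is not None:
            out.append(yi)
        else:
            pred = a + b * xi
            out.append(pred + rng.gauss(0.0, sd) if stochastic else pred)
    return out


x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.0, 4.1, None, 7.9, 10.0]  # hypothetical
det_filled = regression_impute(x, y)                   # imputes about 6.0
sto_filled = regression_impute(x, y, stochastic=True)  # about 6.0 plus random noise
```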

c. K-Nearest Neighbors (KNN) Imputation

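- How it works: Replace each missing value with the average (numeric) or most frequent value (categorical) of that variable among the *k* rows most similar on the observed variables (e.g., by Euclidean distance).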

- When to use:

Rows have a meaningful notion of similarity (e.g., the data have cluster structure).

Missingness is MAR.

- Pros: Captures local patterns.

- Cons:

Computationally expensive for large datasets.

Sensitive to the choice of k and to feature scaling.
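A plain-Python sketch of KNN imputation for numeric data. Distance is computed only over the columns both rows observe, then scaled up by the share of columns used. This is similar in spirit to the NaN-aware Euclidean distance that scikit-learn's `KNNImputer` uses by default. The sketch assumes the columns are already on comparable scales, and the data are hypothetical.

```python
import math
from typing import Optional

Row = list[Optional[float]]


def nan_distance(a: Row, b: Row) -> float:
    """Euclidean distance over the columns observed in both rows, scaled up
    by (total columns / shared columns) so rows with fewer overlaps are comparable."""
    shared = [(u, v) for u, v in zip(a, b) if u is not None and v is not None]
    if not shared:
        return math.inf
    squared = sum((u - v) ** 2 for u, v in shared)
    return math.sqrt(squared * len(a) / len(shared))


def knn_impute(rows: list[Row], k: int = 2) -> list[Row]:
    """Fill each missing entry with the mean of that column over the k nearest
    rows that observe the column."""
    result = []
    for i, row in enumerate(rows):
        filled = list(row)
        for j, value in enumerate(row):
            if value is not None:
                continue
            # Candidate donors must have column j observed
            donors = [r for idx, r in enumerate(rows) if idx != i and r[j] is not None]
            if not donors:
                raise ValueError(f"column {j} has no observed values to borrow")
            donors.sort(key=lambda r: nan_distance(row, r))
            nearest = donors[:k]
            filled[j] = sum(r[j] for r in nearest) / len(nearest)
        result.append(filled)
    return result


rows = [  # hypothetical, columns already on comparable scales
    [1.0, 2.0, 3.0],
    [1.1, 2.1, None],
    [5.0, 6.0, 7.0],
    [0.9, 1.9, 2.9],
    [5.2, 6.1, 7.2],
]
imputed = knn_impute(rows, k=2)  # row 1's gap becomes the mean of rows 0 and 3
```

Every missing cell triggers a distance computation against all other rows. This is why the method becomes expensive on large datasets.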

d. Multiple Imputation (MICE — Multiple Imputation by Chained Equations)

- How it works:

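- Impute each incomplete variable from a regression on the other variables (the "chained equations"), cycling through the variables until the imputations stabilize. Repeat to create several (m) imputed datasets, drawing imputations with random noise so the datasets differ.

- Analyze each dataset separately.

- Pool the m estimates and their variances using Rubin's rules.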

- When to use:

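Missingness is MAR (standard MICE assumes MAR; MNAR requires additional modeling, e.g., delta adjustment).

Valid standard errors and confidence intervals are needed.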

- Pros:

Accounts for imputation uncertainty.

Widely regarded as a standard approach for MAR data.

- Cons:

Complex to implement.

Computationally intensive.
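The pooling step can be sketched in a few lines. This covers only Rubin's rules. The imputation step itself would normally come from a library, for example the R package `mice` or scikit-learn's experimental `IterativeImputer` with `sample_posterior=True` so that repeated runs give distinct draws. The input numbers below are hypothetical.

```python
import math
import statistics


def pool_rubin(estimates: list[float], variances: list[float]) -> dict[str, float]:
    """Combine one parameter estimated on m imputed datasets (Rubin's rules).

    estimates[i] is the estimate from dataset i and variances[i] its squared
    standard error."""
    m = len(estimates)
    if m < 2 or len(variances) != m:
        raise ValueError("need at least two imputations with matching variances")
    q_bar = statistics.mean(estimates)   # pooled point estimate
    u_bar = statistics.mean(variances)   # average within-imputation variance
    b = statistics.variance(estimates)   # between-imputation variance (m - 1 denominator)
    total = u_bar + (1 + 1 / m) * b      # total variance
    return {"estimate": q_bar, "within": u_bar, "between": b,
            "total": total, "se": math.sqrt(total)}


# Hypothetical regression slopes and squared SEs from m = 3 imputed datasets
pooled = pool_rubin([1.0, 2.0, 3.0], [0.5, 0.5, 0.5])
```

The between-imputation term `b` is what single imputation omits. It is how multiple imputation carries imputation uncertainty into the final standard error.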

e. Time-Series Imputation (Forward/Backward Fill, Interpolation)

- How it works:

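Forward fill: Use the last observed value.

Backward fill: Use the next observed value.

Linear interpolation: Estimate missing values on the straight line between the nearest observed points before and after the gap.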

- When to use:

Time-series or longitudinal data.

Missingness is MAR.

- Pros: Respects temporal order; interpolation preserves local trends.

- Cons:

Can introduce artificial patterns (e.g., flat runs from forward fill) and understate variability.

Not suitable for non-sequential data.
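Forward fill, backward fill, and linear interpolation can be sketched on a list-based series as shown below. The sketch assumes equally spaced time points, and the readings are hypothetical. pandas provides the same operations as `Series.ffill()`, `Series.bfill()`, and `Series.interpolate()`.

```python
from typing import Optional

Series = list[Optional[float]]


def forward_fill(s: Series) -> Series:
    """Carry the last observed value forward; leading gaps stay None."""
    out: Series = []
    last: Optional[float] = None
    for v in s:
        if v is not None:
            last = v
        out.append(last)
    return out


def backward_fill(s: Series) -> Series:
    """Carry the next observed value backward; trailing gaps stay None."""
    return forward_fill(s[::-1])[::-1]


def linear_interpolate(s: Series) -> Series:
    """Fill interior gaps on the straight line between neighboring observations.
    Assumes equally spaced time points; gaps at either end stay None."""
    out = list(s)
    known = [i for i, v in enumerate(s) if v is not None]
    for left, right in zip(known, known[1:]):
        step = (s[right] - s[left]) / (right - left)
        for i in range(left + 1, right):
            out[i] = s[left] + step * (i - left)
    return out


temps = [10.0, None, None, 16.0, None]  # hypothetical hourly readings
ff = forward_fill(temps)        # [10.0, 10.0, 10.0, 16.0, 16.0]
bf = backward_fill(temps)       # [10.0, 16.0, 16.0, 16.0, None]
li = linear_interpolate(temps)  # [10.0, 12.0, 14.0, 16.0, None]
```

The flat run produced by forward fill, compared with the ramp from interpolation, illustrates the "artificial patterns" risk.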

3. Model-Based Methods

a. Maximum Likelihood (Expectation-Maximization — EM Algorithm)

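- How it works: Iteratively estimates model parameters (e.g., means and covariances) from the incomplete data. The E-step computes the expected values of the missing data's sufficient statistics given the current parameters. The M-step then re-estimates the parameters from those expectations, and the two steps repeat until convergence.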

- When to use:

Missingness is MAR.

An assumed distribution (e.g., multivariate normal) is plausible for the data.

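- Pros: Deterministic (the same data give the same estimates) and typically more efficient than multiple imputation with a finite number of imputations.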

- Cons: Requires distributional assumptions.
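A sketch of EM for the simplest useful case: a bivariate normal where `x` is fully observed and some `y` values are missing (MAR). The E-step fills in the expected `y` and `y²` for incomplete rows. The M-step recomputes the maximum-likelihood means and covariances. Data and tolerances are hypothetical. In this monotone case the ML estimate of the mean of `y` also has a closed form, the complete-case mean plus the complete-case slope times the shift in the mean of `x`, which makes a convenient check.

```python
from typing import Optional


def em_bivariate_normal(x: list[float], y: list[Optional[float]],
                        tol: float = 1e-12, max_iter: int = 10_000):
    """EM estimates (mu_x, mu_y, s_xx, s_xy, s_yy) of a bivariate normal when
    x is fully observed and some y values are None (MAR). Variances use the
    maximum-likelihood divisor n."""
    n = len(x)
    mu_x = sum(x) / n
    s_xx = sum((xi - mu_x) ** 2 for xi in x) / n  # x is complete, so this never changes
    # Starting values: complete-case estimates for the y parameters
    cc = [(xi, yi) for xi, yi in zip(x, y) if yi is not None]
    cx = sum(xi for xi, _ in cc) / len(cc)
    mu_y = sum(yi for _, yi in cc) / len(cc)
    s_xy = sum((xi - cx) * (yi - mu_y) for xi, yi in cc) / len(cc)
    s_yy = sum((yi - mu_y) ** 2 for _, yi in cc) / len(cc)

    for _ in range(max_iter):
        beta = s_xy / s_xx
        cond_var = s_yy - beta * s_xy  # Var(y | x) under the current parameters
        # E-step: expected y and y^2 for each row (observed rows use their data)
        ey, ey2 = [], []
        for xi, yi in zip(x, y):
            if yi is None:
                m = mu_y + beta * (xi - mu_x)
                ey.append(m)
                ey2.append(m * m + cond_var)
            else:
                ey.append(yi)
                ey2.append(yi * yi)
        # M-step: maximum-likelihood estimates from the completed statistics
        new_mu_y = sum(ey) / n
        new_s_xy = sum(xi * e for xi, e in zip(x, ey)) / n - mu_x * new_mu_y
        new_s_yy = sum(ey2) / n - new_mu_y ** 2
        change = max(abs(new_mu_y - mu_y), abs(new_s_xy - s_xy), abs(new_s_yy - s_yy))
        mu_y, s_xy, s_yy = new_mu_y, new_s_xy, new_s_yy
        if change < tol:
            break
    return mu_x, mu_y, s_xx, s_xy, s_yy


x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
y = [2.1, 3.9, None, 8.2, 9.8, None, 14.1, None]  # hypothetical
mu_x, mu_y, s_xx, s_xy, s_yy = em_bivariate_normal(x, y)
```

The estimates are parameters, not imputed values. A downstream analysis uses `mu_y` and the covariances directly rather than a filled-in dataset.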

b. Bayesian Imputation

- How it works: Treats missing values as unknown quantities and draws them, together with the model parameters, from their joint posterior distribution (e.g., via MCMC).

- When to use:

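Small datasets, or when informative prior knowledge is available. MNAR missingness can be handled only if the missingness mechanism is modeled explicitly.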

High uncertainty in missing values.

- Pros: Handles uncertainty rigorously.

- Cons: Computationally complex.
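A deliberately simple sketch of Bayesian imputation under MAR. It assumes a normal outcome with a known standard deviation `sigma` and a conjugate normal prior on the mean. Both assumptions keep the posterior in closed form, so no MCMC is needed. Each draw first samples the mean from its posterior, then samples the missing values from the predictive distribution. Realistic models usually need MCMC tools such as Stan or PyMC. The data and prior values are hypothetical.

```python
import math
import random
from typing import Optional


def bayes_normal_impute(y: list[Optional[float]], sigma: float, mu0: float,
                        tau0: float, n_draws: int, seed: int = 0):
    """Bayesian imputation for y ~ Normal(mu, sigma^2) with known sigma and a
    conjugate Normal(mu0, tau0^2) prior on mu (assumptions for this sketch).

    Each draw samples mu from its posterior, then samples every missing y
    from Normal(mu, sigma^2), giving one completed dataset per draw."""
    observed = [v for v in y if v is not None]
    precision = 1 / tau0 ** 2 + len(observed) / sigma ** 2
    post_mean = (mu0 / tau0 ** 2 + sum(observed) / sigma ** 2) / precision
    post_sd = math.sqrt(1 / precision)
    rng = random.Random(seed)
    completed = []
    for _ in range(n_draws):
        mu = rng.gauss(post_mean, post_sd)  # parameter uncertainty
        completed.append([v if v is not None else rng.gauss(mu, sigma) for v in y])
    return post_mean, post_sd, completed


y = [4.8, 5.1, None, 5.3, None, 4.9]  # hypothetical measurements
post_mean, post_sd, datasets = bayes_normal_impute(y, sigma=0.5, mu0=0.0, tau0=10.0, n_draws=20)
```

Drawing the mean first, rather than plugging in a point estimate, is what propagates parameter uncertainty into the imputations.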

4. Advanced Techniques for MNAR Data

a. Selection Models

- How it works: Jointly models the outcome and the probability that it is observed, making the missingness mechanism explicit (e.g., Heckman correction).

- When to use: When missingness depends on the missing values (MNAR).

- Pros: Corrects for bias.

- Cons: Requires strong, untestable assumptions (e.g., joint normality of errors), and results are sensitive to violations.
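For the Heckman correction, the textbook setup can be written as follows. The outcome y_i is observed only when a latent selection index is positive. Under joint normality of the two error terms, the mean of the observed outcomes gains an extra term, the inverse Mills ratio λ. The two-step estimator fits a probit for the selection equation, computes λ(z_iᵀγ̂), and adds it as a regressor in the outcome regression on observed cases. Identification is more credible when z_i contains at least one variable excluded from x_i.

```latex
\begin{aligned}
y_i &= x_i^\top \beta + \varepsilon_i \quad \text{(observed only if } s_i = 1\text{)} \\
s_i &= \mathbf{1}\!\left[ z_i^\top \gamma + u_i > 0 \right], \qquad
\begin{pmatrix} \varepsilon_i \\ u_i \end{pmatrix} \sim
\mathcal{N}\!\left( \mathbf{0}, \begin{pmatrix} \sigma^2 & \rho\sigma \\ \rho\sigma & 1 \end{pmatrix} \right) \\
\mathbb{E}[\, y_i \mid x_i, s_i = 1 \,] &= x_i^\top \beta + \rho\sigma\, \lambda(z_i^\top \gamma),
\qquad \lambda(c) = \frac{\phi(c)}{\Phi(c)}
\end{aligned}
```

When ρ = 0, the correction term vanishes and the observed cases give unbiased estimates of β. A nonzero ρ is the MNAR case that the model corrects for.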

b. Pattern-Mixture Models

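- How it works: Splits the data by missingness pattern, models each pattern separately, and combines the pattern-specific estimates weighted by each pattern's frequency.

- When to use: When the outcome distribution is expected to differ across missingness patterns (e.g., completers vs. dropouts in a longitudinal study).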

- Pros: Flexible for MNAR.

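- Cons: Parameters for incomplete patterns are not identified without extra assumptions, and pooled results can be hard to interpret.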
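A minimal pattern-mixture sketch for a two-visit study with two patterns, completers and dropouts. The dropouts' follow-up mean cannot be estimated from the data. This sketch therefore assumes, as a hypothetical identifying restriction, that it equals their baseline mean plus the completers' average change, shifted by an analyst-chosen `delta`. The pattern means are then weighted by pattern frequency. The trial data are hypothetical.

```python
from typing import Optional


def pattern_mixture_mean(data: list[tuple[float, Optional[float]]],
                         delta: float = 0.0) -> float:
    """Estimate the mean follow-up score from (baseline, follow_up) pairs,
    where follow_up is None for dropouts.

    Two patterns: completers and dropouts. The dropouts' follow-up mean is not
    identified by the data, so this sketch ASSUMES it equals their baseline mean
    plus the completers' average change, shifted by delta."""
    completers = [(b, f) for b, f in data if f is not None]
    dropout_baselines = [b for b, f in data if f is None]
    if not completers:
        raise ValueError("need at least one completer")
    mean_completers = sum(f for _, f in completers) / len(completers)
    if not dropout_baselines:
        return mean_completers
    mean_change = sum(f - b for b, f in completers) / len(completers)
    mean_dropouts = sum(dropout_baselines) / len(dropout_baselines) + mean_change + delta
    # Combine the pattern-specific means, weighted by pattern frequency
    w = len(completers) / len(data)
    return w * mean_completers + (1 - w) * mean_dropouts


trial = [(10.0, 14.0), (12.0, 15.0), (8.0, None), (11.0, None)]  # hypothetical
estimate_no_shift = pattern_mixture_mean(trial)           # 13.75
estimate_worse = pattern_mixture_mean(trial, delta=-2.0)  # 12.75
```

The restriction inside the function is the part the data cannot verify. Making it an explicit parameter keeps that assumption visible.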

c. Sensitivity Analysis

- How it works: Tests how results vary under different MNAR assumptions.

- When to use: When MNAR is suspected but unverifiable.

- Pros: Quantifies bias risk.

- Cons: Doesn't "fix" missingness.
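One common form of sensitivity analysis is delta adjustment with a tipping-point search. The sketch below fills missing values under a simple MAR-style baseline (the observed mean), shifts each imputed value by `delta` to mimic an MNAR departure, and reports the first `delta` at which the conclusion changes. The threshold, grid, and scores are hypothetical, and the mean-fill baseline is a simplification chosen for brevity.

```python
from typing import Optional


def delta_adjusted_mean(y: list[Optional[float]], delta: float) -> float:
    """Fill missing values with the observed mean (a simple MAR-style baseline),
    shift every imputed value by delta, and return the mean of the completed data."""
    observed = [v for v in y if v is not None]
    base = sum(observed) / len(observed)
    completed = [v if v is not None else base + delta for v in y]
    return sum(completed) / len(completed)


def tipping_point(y: list[Optional[float]], threshold: float,
                  deltas: list[float]) -> Optional[float]:
    """First delta (in the order given) whose adjusted mean falls below threshold."""
    for d in deltas:
        if delta_adjusted_mean(y, d) < threshold:
            return d
    return None


scores = [72.0, 68.0, None, 75.0, None, 70.0]  # hypothetical test scores
grid = [-float(d) for d in range(11)]          # delta = 0, -1, ..., -10
results = {d: delta_adjusted_mean(scores, d) for d in grid}
tip = tipping_point(scores, threshold=70.0, deltas=grid)  # -4.0
```

Reporting the tipping point lets readers judge whether a departure from MAR that large is plausible. It does not tell them which `delta` is true.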

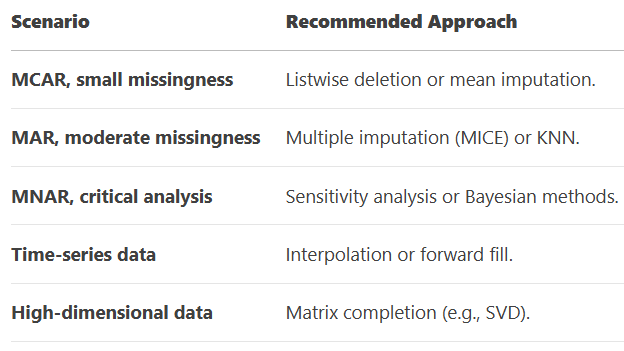

Note:

MCAR: Deletion or simple imputation is acceptable.

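MAR: Use MICE, maximum likelihood (EM), regression, or KNN imputation.

MNAR: Requires explicit modeling of the missingness (selection models, pattern-mixture models, or Bayesian models), combined with sensitivity analysis.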

Always report missingness patterns and justify your method.
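A small sketch for the reporting step. It computes the percentage missing per variable and counts each distinct missingness pattern. The survey rows are hypothetical, and `None` marks a missing value. With pandas, `df.isna().mean()` gives the per-column fraction missing.

```python
from collections import Counter
from typing import Optional

Row = dict[str, Optional[float]]


def missingness_report(rows: list[Row]) -> tuple[dict[str, float], Counter]:
    """Percent missing per column, plus a count of each missingness pattern.
    A pattern is a tuple with True where the value is missing."""
    columns = list(rows[0])
    n = len(rows)
    pct_missing = {c: 100.0 * sum(r[c] is None for r in rows) / n for c in columns}
    patterns = Counter(tuple(r[c] is None for c in columns) for r in rows)
    return pct_missing, patterns


survey = [  # hypothetical
    {"age": 34, "income": None},
    {"age": None, "income": 41.0},
    {"age": 29, "income": 39.5},
    {"age": 51, "income": None},
]
pct, patterns = missingness_report(survey)
# pct == {"age": 25.0, "income": 50.0}; the pattern (False, True), income only, occurs twice
```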