In today's fast-paced tech world, have you ever wondered how to boost the performance of large language model (LLM) inference when computational demands skyrocket? Imagine running your favorite LLMs faster and more efficiently by leveraging multiple GPUs — all while maintaining clarity and simplicity in your code.

In my experience, multi-GPU setups not only reduce inference time but also unlock new possibilities for real-time applications and research. In this article, we'll dive into using Hugging Face's 🤗 Accelerate package to perform inference across multiple GPUs, explore minimal working examples, and discuss performance benchmarks. So, grab your coffee ☕, and let's embark on this exciting journey!

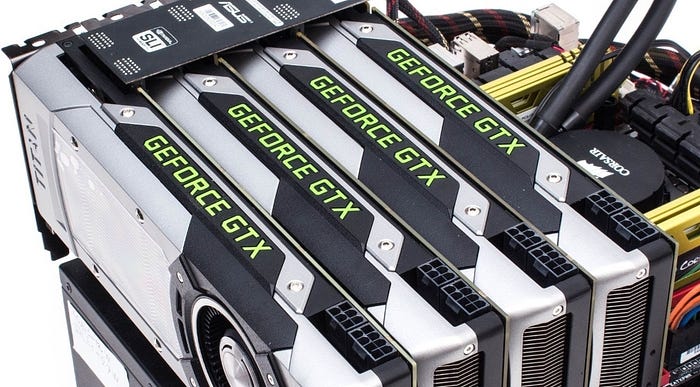

Large language models (LLMs) have reshaped the way we think about natural language processing. However, as these models become more complex and computationally intensive, inference times can grow exponentially. This is where the magic of multi-GPU setups comes into play. By distributing the workload across several GPUs, we can significantly reduce latency and enhance throughput.

Consider a scenario: You're tasked with processing thousands of text prompts for real-time chatbot responses during a major product launch. Relying on a single GPU might leave you bottlenecked, leading to delays and a poor user experience. By harnessing multiple GPUs, you not only accelerate inference but also ensure smooth scaling for production environments.

Did you know? According to recent benchmarks, leveraging up to 4 GPUs can result in nearly linear performance gains — until communication overhead begins to introduce a plateau. Let's delve into how you can achieve this using 🤗 Accelerate.

Meet 🤗 Accelerate: The Ultimate Multi-GPU Helper

The 🤗 Accelerate package by Hugging Face is designed to simplify training and inference across multiple devices — CPUs, GPUs, and even TPUs. Its intuitive API allows developers to write code that scales seamlessly with minimal modifications.

Key Benefits of 🤗 Accelerate:

- Ease of Use: Write code once and run it on different hardware configurations.

- Scalability: Efficiently distribute workloads across multiple GPUs.

- Flexibility: Supports both simple examples and complex production setups.

Accelerate abstracts away much of the boilerplate associated with device management, allowing you to focus on model development and performance tuning. Here's a quick glimpse into the world of multi-GPU "message passing" using Accelerate:

from accelerate import Accelerator

from accelerate.utils import gather_object

accelerator = Accelerator()

# Each GPU creates its own message.

message = [f"Hello this is GPU {accelerator.process_index}"]

# Gather messages from all GPUs.

messages = gather_object(message)

# Only the main process outputs the messages.

accelerator.print(messages)This snippet, often referred to as a "Hello World" for multi-GPU setups, demonstrates how each GPU can contribute to a collective task. The output showcases messages from each GPU, making it a great starting point to understand parallel processing with Accelerate.

Getting Started: A Simple "Hello World" Example

Starting with a basic example might seem trivial, but it lays the foundation for more complex tasks. Let's break down the basic steps:

- Initialize the Accelerator: The Accelerator object orchestrates the entire multi-GPU setup.

- Process Identification: Each GPU assigns itself a unique process index, which can be used to track and manage parallel tasks

- Gathering Information:

The

gather_objectutility collects messages or data from all GPUs and makes them available to the main process. - Output: Finally, only the main process prints the results, ensuring that the output is neat and not duplicated.

This minimalistic approach ensures you understand the core mechanics before diving into more complex inference tasks.

Diving Deeper: Single GPU vs. Multi-GPU Inference

Once comfortable with the basics, it's time to tackle a more advanced scenario: performing inference on multiple GPUs using a simple, non-batched approach. Here's an example using a popular LLM — the Llama-2–7b model:

Simple Multi-GPU Inference

from accelerate import Accelerator

from accelerate.utils import gather_object

from transformers import AutoModelForCausalLM, AutoTokenizer

from statistics import mean

import torch, time, json

accelerator = Accelerator()

# 10*10 Prompts from a diverse selection of famous first lines in books.

prompts_all = [

"The King is dead. Long live the Queen.",

"Once there were four children whose names were Peter, Susan, Edmund, and Lucy.",

"The story so far: in the beginning, the universe was created.",

"It was a bright cold day in April, and the clocks were striking thirteen.",

"It is a truth universally acknowledged, that a single man in possession of a good fortune, must be in want of a wife.",

"The sweat wis lashing oafay Sick Boy; he wis trembling.",

"124 was spiteful. Full of Baby's venom.",

"As Gregor Samsa awoke one morning from uneasy dreams he found himself transformed in his bed into a gigantic insect.",

"I write this sitting in the kitchen sink.",

"We were somewhere around Barstow on the edge of the desert when the drugs began to take hold.",

] * 10

# Load the model and tokenizer.

model_path = "meta-llama/Llama-2-7b-hf"

model = AutoModelForCausalLM.from_pretrained(

model_path,

device_map={"": accelerator.process_index},

torch_dtype=torch.bfloat16,

)

tokenizer = AutoTokenizer.from_pretrained(model_path)

# Synchronize GPUs and start the timer.

accelerator.wait_for_everyone()

start = time.time()

# Distribute prompts among available GPUs.

with accelerator.split_between_processes(prompts_all) as prompts:

results = dict(outputs=[], num_tokens=0)

# Each GPU processes its share of prompts.

for prompt in prompts:

prompt_tokenized = tokenizer(prompt, return_tensors="pt").to("cuda")

output_tokenized = model.generate(**prompt_tokenized, max_new_tokens=100)[0]

# Remove the prompt from the generated tokens.

output_tokenized = output_tokenized[len(prompt_tokenized["input_ids"][0]):]

# Record the outputs and count the tokens.

results["outputs"].append(tokenizer.decode(output_tokenized))

results["num_tokens"] += len(output_tokenized)

results = [results] # Transform to list for proper gathering.

# Gather results from all GPUs.

results_gathered = gather_object(results)

if accelerator.is_main_process:

timediff = time.time() - start

num_tokens = sum([r["num_tokens"] for r in results_gathered])

print(f"tokens/sec: {num_tokens//timediff}, time {timediff}, total tokens {num_tokens}, total prompts {len(prompts_all)}")- Initialization: The code begins by setting up the Accelerator, which ensures all GPUs are in sync.

- Prompt Distribution: The

accelerator.split_between_processes()function intelligently divides the list of prompts among available GPUs. - Inference Loop: Each GPU tokenizes its prompt, generates new tokens, and decodes the output. Notably, the prompt itself is removed from the final output.

- Result Gathering: Finally,

gather_objectconsolidates the results from all GPUs, and the main process calculates and prints performance metrics.

This example clearly shows the workflow for simple multi-GPU inference. However, for real-world applications, you might want to further optimize throughput using batching.

Batched Inference for Real-World Applications

While the simple inference example is great for understanding the basics, production systems often need to handle larger batches of prompts. Batched inference minimizes overhead by processing multiple prompts simultaneously, which can greatly improve performance.

from accelerate import Accelerator

from accelerate.utils import gather_object

from transformers import AutoModelForCausalLM, AutoTokenizer

from statistics import mean

import torch, time, json\

accelerator = Accelerator()

def write_pretty_json(file_path, data):

import json

with open(file_path, "w") as write_file:

json.dump(data, write_file, indent=4)

# 10*10 Prompts from popular literature.

prompts_all = [

"The King is dead. Long live the Queen.",

"Once there were four children whose names were Peter, Susan, Edmund, and Lucy.",

"The story so far: in the beginning, the universe was created.",

"It was a bright cold day in April, and the clocks were striking thirteen.",

"It is a truth universally acknowledged, that a single man in possession of a good fortune, must be in want of a wife.",

"The sweat wis lashing oafay Sick Boy; he wis trembling.",

"124 was spiteful. Full of Baby's venom.",

"As Gregor Samsa awoke one morning from uneasy dreams he found himself transformed in his bed into a gigantic insect.",

"I write this sitting in the kitchen sink.",

"We were somewhere around Barstow on the edge of the desert when the drugs began to take hold.",

] * 10

# Load the model and tokenizer.

model_path = "meta-llama/Llama-2-7b-hf"

model = AutoModelForCausalLM.from_pretrained(

model_path,

device_map={"": accelerator.process_index},

torch_dtype=torch.bfloat16,

)

tokenizer = AutoTokenizer.from_pretrained(model_path)

tokenizer.pad_token = tokenizer.eos_token

# Function to prepare prompts in batches.

def prepare_prompts(prompts, tokenizer, batch_size=16):

batches = [prompts[i:i + batch_size] for i in range(0, len(prompts), batch_size)]

batches_tok = []

tokenizer.padding_side = "left" # For inference, left-pad the sequences.

for prompt_batch in batches:

batches_tok.append(

tokenizer(

prompt_batch,

return_tensors="pt",

padding='longest',

truncation=False,

pad_to_multiple_of=8,

add_special_tokens=False

).to("cuda")

)

tokenizer.padding_side = "right" # Reset padding side after processing.

return batches_tok

# Synchronize GPUs and start the timer.

accelerator.wait_for_everyone()

start = time.time()

# Distribute prompts across GPUs.

with accelerator.split_between_processes(prompts_all) as prompts:

results = dict(outputs=[], num_tokens=0)

# Process the prompts in batches.

prompt_batches = prepare_prompts(prompts, tokenizer, batch_size=16)

for prompts_tokenized in prompt_batches:

outputs_tokenized = model.generate(**prompts_tokenized, max_new_tokens=100)

# Remove prompt tokens from the generated tokens.

outputs_tokenized = [

tok_out[len(tok_in):]

for tok_in, tok_out in zip(prompts_tokenized["input_ids"], outputs_tokenized)

]

# Count tokens and decode outputs.

num_tokens = sum([len(t) for t in outputs_tokenized])

outputs = tokenizer.batch_decode(outputs_tokenized)

# Store the outputs.

results["outputs"].extend(outputs)

results["num_tokens"] += num_tokens

results = [results] # Ensure proper gathering.

# Gather results from all GPUs.

results_gathered = gather_object(results)

if accelerator.is_main_process:

timediff = time.time() - start

num_tokens = sum([r["num_tokens"] for r in results_gathered])

print(f"tokens/sec: {num_tokens//timediff}, time elapsed: {timediff}, num_tokens {num_tokens}")- Batch Preparation: The

prepare_prompts()function splits prompts into batches and tokenizes them with left padding, ensuring that all sequences in a batch align correctly. - Batched Processing: In contrast to processing one prompt at a time, batches allow the model to utilize GPU resources more effectively, leading to a significant boost in throughput.

- Performance Impact: By batching, you might see an improvement from 44 tokens/sec on a single GPU (in the simple example) to over 520 tokens/sec, depending on your hardware setup. This can be a game-changer for applications requiring real-time performance.

Performance Benchmarks and What They Mean

Understanding the performance implications of using multiple GPUs is crucial. Here's a quick snapshot of what the benchmarks looked like for our experiments:

Non-Batched Inference Performance

- 1 GPU: 44 tokens/sec, time: 225.5s

- 2 GPUs: 88 tokens/sec, time: 112.9s

- 3 GPUs: 128 tokens/sec, time: 77.6s

- 4 GPUs: 137 tokens/sec, time: 72.7s

- 5 GPUs: 119 tokens/sec, time: 83.8s

Batched Inference Performance

- 1 GPU: 520 tokens/sec, time: 19.2s

- 2 GPUs: 900 tokens/sec, time: 11.1s

- 3 GPUs: 1205 tokens/sec, time: 8.2s

- 4 GPUs: 1655 tokens/sec, time: 6.0s

- 5 GPUs: 1658 tokens/sec, time: 6.0s

Key Observations:

- Linear Scaling to a Point: Performance increases almost linearly with up to 4 GPUs. However, after a certain number of GPUs, the benefits plateau due to communication overhead.

- Communication Overhead: As more GPUs are added, the time spent in synchronizing and gathering results increases, which limits the overall speed-up.

- Batching Advantage: Batched inference significantly improves throughput by reducing the per-prompt overhead and making better use of parallel processing capabilities.

These benchmarks demonstrate that while adding more GPUs can help, there is a balance between computational gains and the overhead introduced by inter-GPU communication.

So, where can this multi-GPU inference technique be applied in the real world? Let's explore some practical scenarios:

1. Real-Time Chatbots and Virtual Assistants

Imagine a virtual assistant that needs to handle thousands of requests per minute. By distributing the inference load across multiple GPUs, you ensure:

- Reduced Latency: Faster responses, leading to improved user satisfaction.

- Scalability: The system can handle sudden spikes in demand without compromising performance.

2. Content Generation for Media Platforms

For platforms that generate news articles, social media posts, or creative writing content, multi-GPU inference allows:

- High Throughput: Enabling content creation at scale.

- Efficient Resource Utilization: Optimal use of available GPU resources to maintain quality and speed.

3. Research and Experimentation

Academics and developers experimenting with new LLM architectures can benefit from:

- Faster Experimentation Cycles: Run multiple experiments concurrently on different GPUs.

- Enhanced Model Testing: Evaluate performance under varied batch sizes and configurations.

4. Interactive Applications in Gaming and VR

Gaming companies and VR developers often use LLMs to generate dynamic storylines or interactive dialogues. A multi-GPU setup can:

- Boost Real-Time Processing: Provide near-instantaneous responses.

- Enhance Immersion: Enable more natural interactions with game characters or virtual assistants.

In my experience, implementing these techniques has led to smoother deployments and improved end-user experiences, making them an essential tool for developers working on high-performance AI applications.

Best Practices and Optimization Tips

When diving into multi-GPU inference, keep these best practices in mind:

- Choose the Right Batch Size: Experiment with different batch sizes to find the sweet spot for your specific model and hardware.

- Monitor GPU Utilization: Use tools like NVIDIA's Nsight or built-in monitoring in Accelerate to track resource usage.

- Optimize Tokenization: Efficient tokenization can reduce overhead. Consider pre-tokenizing prompts if they are reused frequently.

- Leverage Mixed Precision: Utilize data types like

bfloat16to speed up computation without sacrificing accuracy. - Explore Alternative Tools: For production environments, consider complementary tools like VLLM, 🤗 Text Generation Inference, or FastChat for even more efficient deployments.

Remember, the ultimate goal is to balance speed with accuracy. By carefully tuning your multi-GPU setup, you can achieve impressive performance gains without compromising the quality of the generated text.

Frequently Asked Questions (FAQ)

Q1: What is the 🤗 Accelerate package?

🤗 Accelerate is a library by Hugging Face designed to simplify the process of training and running inference on multiple devices (GPUs, CPUs, TPUs) with minimal code changes. It abstracts away device management and synchronization, making it easier to scale your models. 🔍

Q2: Why does performance plateau after using 4 GPUs?

While adding more GPUs initially leads to near-linear improvements, the communication overhead between GPUs eventually limits further gains. This synchronization time, coupled with hardware constraints, leads to a performance plateau. 📊

Q3: How can batching improve inference performance?

Batching allows multiple prompts to be processed simultaneously, reducing per-prompt overhead. This leads to significantly higher throughput, as seen in our benchmarks where batched inference far outperformed the simple, non-batched approach. 💡

Q4: Are there any alternatives to 🤗 Accelerate for multi-GPU inference?

Yes, for production systems you might consider exploring tools like VLLM, 🤗 Text Generation Inference, or FastChat, which offer additional features and optimizations for large-scale deployments. 🔗

Q5: What are the key benefits of using multi-GPU setups for LLM inference?

Multi-GPU setups provide enhanced throughput, reduced latency, and the ability to scale applications in real-time. This is particularly important for applications like chatbots, content generation, and interactive AI systems. ⚡

Conclusion: Your Next Steps in Multi-GPU Inference

To recap, multi-GPU inference using Hugging Face's 🤗 Accelerate package represents a powerful tool for any developer working with large language models. Whether you're aiming to boost the speed of real-time chatbots or scaling up content generation pipelines, these techniques allow you to leverage hardware resources efficiently while minimizing latency.

In my experience, the journey from a simple "Hello World" example to a full-fledged batched inference system is both exciting and rewarding. By understanding the intricacies of GPU communication overhead, fine-tuning batch sizes, and exploring complementary tools, you can create robust, scalable systems capable of handling the most demanding AI tasks.

So, how do you see multi-GPU inference benefiting your projects? Whether you're a researcher, a developer, or a business looking to enhance your AI capabilities, now is the time to experiment and iterate. Try out these examples, tweak the parameters, and share your experiences with the community. After all, every improvement you make brings us one step closer to faster and more efficient AI systems.

Further Reading and References

For those interested in diving deeper into the topic, here are some additional resources and research papers that might help:

- Hugging Face Documentation: Detailed guides and API references for the 🤗 Accelerate package and transformers library.

- VLLM Project: An alternative approach for scalable LLM inference.

- 🤗 Text Generation Inference: Insights on efficient text generation in production. FastChat: Open-source framework for chatting with large language models in real-time.

Research Papers:

- "Efficient Inference for Large-Scale Language Models" — explores optimization strategies and benchmarks.

- "Scaling Neural Network Inference on Multi-GPU Systems" — a deep dive into the hardware considerations and communication overhead in multi-GPU setups.

Final Thoughts

Embracing multi-GPU inference with 🤗 Accelerate not only improves performance but also opens the door to innovative applications and new research opportunities. By following the examples and best practices discussed in this article, you can transform your approach to handling large language models, ensuring that your AI applications run smoother and faster than ever before.

Remember, the journey of optimization is continuous. As you experiment with your setup, keep track of performance metrics, stay updated with the latest research, and don't hesitate to explore new tools and frameworks. Every tweak and adjustment brings you closer to harnessing the full potential of your hardware and AI models.