After spending months working with large language models such as GPT-4 and Claude 3 Haiku, consulting for clients and building services on top of them, I have come to realize how essential it is to avoid using LLMs in isolation. What it means to use LLMs "in isolation" may seem abstract at first, but if you give me a few minutes of your time, it will all start to make sense.

The average person uses an LLM in a linear way, i.e., prompt, response, prompt, response. This usually involves a lot of trial and error and back-and-forth until you get the exact answer you are looking for. If you use LLMs long enough, you may grow frustrated with the generic responses you sometimes get from going around in these circles of inquiry.

The true power of LLMs lies in approaching them as you would when building application logic: with clear context boundaries, clear personas, and clear examples to guide the models effectively. You need to stop thinking of them as magic genies that know everything. Instead, focus on incorporating these models into broader workflows, where existing processes are combined with different AI models working in tandem to solve complex problems.

LLMs have had their crypto ICO moment. The cost of using them for high-volume transactional tasks has dropped considerably, making them more accessible. Now is a prime time to start looking for creative and innovative ways LLMs can complement your new or existing workflows.

I have compiled a highly opinionated list of out-of-the-box ideas that go beyond using LLMs as an alternative to Google lookups or document search, and that can become product ideas for your next LLM-powered project. If executed well, these ideas can drive real value, whether you incorporate them into your own business, build them for your employer, or pursue them as side gigs.

1. Chained RAG Conversational Chatbots with custom Knowledge

When building bots for real-world use cases, clients want their data to be secure and their costs to be predictable. Hence, for this segment, the way to go is to build something that does not depend on a paid service and can be self-hosted. This is becoming easier with new open-source models that are increasingly competitive with OpenAI's models.

The key here is to ensure this kind of ChatGPT-style bot can be deployed inside the collaboration tools people already use in-house, such as Slack, Microsoft Teams, or even WhatsApp groups.

Honestly, from my experience working for a large fintech organization, simply slapping documents into a bot that answers questions about them has so far led to stale bots that go unused. The reasons can be summed up as poorly implemented logic, or documentation that quickly becomes outdated, so it's just faster to DM you or the next colleague for information.

The drawback of LLM chatbots is that if your documentation is not regularly updated, the bot defeats its own purpose, and people will revert to their old habit of not using it. It's essential not to just throw random documents at your LLM behind a vector database lookup. Without a meticulous approach to organizing your knowledge base, the bot becomes just another unoptimized directory search engine full of hallucinations.

What you want to do is build lookup logic tailored to the conversation. Take, for example, a support chatbot in your Telegram or Slack group. The bot reacts in a workflow manner, where different workflows can be invoked based on the conversation or prompt. If the bot cannot answer a question, a feedback loop can help solve the problem. This can be as simple as prompting the user to choose from a predefined list of actions, for example:

- Look up a document repository, such as a wiki page or Google Docs, for information to solve your problem

- Look up Slack conversation history

- Connect to a support system such as Jira to raise a support issue, or tap into your incident response management service to invoke flows

- Tag whoever is on call to handle the issue

For those who code, this will look familiar, as it's a logical sequence of events.

The above example illustrates precisely what I mean about not building LLM bots in isolation but marrying them within existing workflows and systems to augment tasks.
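To make the feedback loop concrete, here is a minimal Python sketch. It assumes the LLM call has already happened and either produced an answer (`llm_answer`) or not. The handler functions (`search_wiki`, `raise_ticket`, and so on) and the numbered menu are hypothetical placeholders for real wiki, Slack, Jira, and on-call integrations.

```python
from typing import Callable, Dict, Optional


# Hypothetical handlers: real ones would call a wiki search, the Slack API,
# Jira, or your on-call scheduler.
def search_wiki(question: str) -> str:
    return f"Searched wiki for: {question}"


def search_slack_history(question: str) -> str:
    return f"Searched Slack history for: {question}"


def raise_ticket(question: str) -> str:
    return f"Raised support ticket: {question}"


def tag_on_call(question: str) -> str:
    return f"Tagged on-call engineer about: {question}"


FALLBACK_ACTIONS: Dict[str, Callable[[str], str]] = {
    "1": search_wiki,
    "2": search_slack_history,
    "3": raise_ticket,
    "4": tag_on_call,
}


def handle_message(question: str, llm_answer: Optional[str], choice: Optional[str] = None) -> str:
    """Return the LLM's answer if it has one; otherwise drive the fallback menu."""
    if llm_answer:
        return llm_answer
    if choice is None:
        # Feedback loop: ask the user to pick one of the predefined actions.
        return "I couldn't answer that. Reply 1 (wiki), 2 (Slack history), 3 (raise ticket) or 4 (tag on-call)."
    action = FALLBACK_ACTIONS.get(choice)
    if action is None:
        return "Unknown option, please reply with 1-4."
    return action(question)


print(handle_message("How do I rotate the DB password?", llm_answer=None))
print(handle_message("How do I rotate the DB password?", llm_answer=None, choice="3"))
```

Keeping the menu as a plain dictionary of callables means each new integration is just one more entry, while the LLM stays responsible only for the first attempt at an answer.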

This means you can design bots that interact with your applications to handle support inquiries or even raise issues, which is where the real value proposition lies. Productivity gains free up time you can spend making money elsewhere.

How can you achieve this kind of workflow-based agent communication? There are frameworks such as CrewAI and LangGraph that are tailored to handling cyclic, chained workflows with LLMs.

The basic idea is that agents receive other agents' previous outputs as feedback, which then serves as input for the next agent. With this concept, you can build better RAG chatbots that use tools to enrich data retrieval while retaining memory of past interactions.
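The feedback cycle can be sketched in plain Python without any framework. This is not the CrewAI or LangGraph API; the three agent functions are hypothetical stand-ins for LLM calls, and the critic's rule (request exactly one revision, then approve) exists only to show the loop terminating.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Memory:
    """Shared log of past interactions that every agent appends to."""
    history: List[str] = field(default_factory=list)


def retriever_agent(question: str, memory: Memory) -> str:
    # Hypothetical: a real agent would query a vector store or search tool here.
    context = f"docs about '{question}'"
    memory.history.append(f"retriever: {context}")
    return context


def answer_agent(question: str, context: str, feedback: str, memory: Memory) -> str:
    # Hypothetical stand-in for an LLM call that takes the critic's feedback as input.
    draft = f"Answer to '{question}' using {context}"
    if feedback:
        draft += f" (revised: {feedback})"
    memory.history.append(f"answerer: {draft}")
    return draft


def critic_agent(draft: str, memory: Memory) -> str:
    # Hypothetical rule standing in for an LLM reviewer: request one revision, then approve.
    feedback = "" if "(revised:" in draft else "cite the source document"
    memory.history.append(f"critic: {feedback or 'approved'}")
    return feedback


def run_cycle(question: str, max_rounds: int = 3) -> Tuple[str, List[str]]:
    """Loop answerer -> critic until the critic approves or max_rounds is reached."""
    memory = Memory()
    context = retriever_agent(question, memory)
    feedback, draft = "", ""
    for _ in range(max_rounds):
        draft = answer_agent(question, context, feedback, memory)
        feedback = critic_agent(draft, memory)
        if not feedback:  # approved: leave the cycle
            break
    return draft, memory.history


answer, log = run_cycle("How do I reset my VPN token?")
print(answer)
```

The `max_rounds` cap matters in practice: with real LLM critics there is no guarantee of approval, so a cyclic workflow needs an explicit exit.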

2. Automatic Flow Generation

Generative AI has unlocked exciting new possibilities, particularly in game design. Let's take a look at a hypothetical example for illustration purposes to get ideas flowing. Imagine a service where, through a simple interface, you input the name of the flow you want to create. This flow can define specific actions and behaviors, which can then be linked together to form more complex and refined in-game interactions.
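One way to make such a service safe is to have the LLM emit a flow as JSON and let the game engine run only actions it already knows. A minimal sketch, assuming a hypothetical `ACTIONS` registry and a hard-coded string standing in for the model's output:

```python
import json
from typing import Callable, Dict, List

# Hypothetical registry of in-game actions the engine already supports.
ACTIONS: Dict[str, Callable[[dict], str]] = {
    "walk_to": lambda p: f"NPC walks to {p['target']}",
    "say": lambda p: f"NPC says: {p['line']}",
    "give_item": lambda p: f"NPC gives {p['item']}",
}

# Example of JSON an LLM might return when asked for a flow named "greet_and_reward".
llm_output = """
{"flow": "greet_and_reward",
 "steps": [
   {"action": "walk_to", "params": {"target": "player"}},
   {"action": "say", "params": {"line": "You saved our village!"}},
   {"action": "give_item", "params": {"item": "silver sword"}}
 ]}
"""


def load_flow(raw: str) -> List[dict]:
    """Parse a generated flow and reject any step the engine cannot execute."""
    flow = json.loads(raw)
    for step in flow["steps"]:
        if step["action"] not in ACTIONS:
            raise ValueError(f"Unknown action: {step['action']}")
    return flow["steps"]


def run_flow(steps: List[dict]) -> List[str]:
    """Execute the linked steps in order."""
    return [ACTIONS[s["action"]](s["params"]) for s in steps]


for event in run_flow(load_flow(llm_output)):
    print(event)
```

Named flows validated this way can themselves be stored and linked together into larger interactions.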

Developers can build services tailored to these specific use cases by leveraging LLM chaining, as popularized by agent frameworks such as AutoGPT. One compelling example is automated flow creation in game design, which opens the door to innovative in-game workflows and conversational role-play scenarios.

Conversational NPCs (non-player characters) in games are generally flat and repetitive. With LLMs in the mix, we can now design NPCs that engage with players using natural language. By tapping into an LLM, NPCs can dynamically understand and respond to player input, creating more immersive and interactive gameplay.

In addition to dialogue, the AI can assist players with in-game tasks. For example, in-game workflow assistance can guide players through complex challenges like crafting or solving puzzles. This deepens immersion and integrates support into the narrative, making each task feel meaningful and interconnected.

LLMs offer vast potential for crafting realistic, dynamic conversations based on diverse NPC personalities. Imagine an NPC that remembers previous interactions and responds contextually, enhancing player immersion. Moreover, LLMs can generate dynamic narratives that evolve based on the player's choices, enabling personalized storytelling where decisions impact the game's progression and outcomes.
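Memory of previous interactions usually comes down to what you send back to the model on each turn. A minimal sketch of an NPC that keeps a persona plus a rolling window of past exchanges, assuming a chat API that takes a list of `role`/`content` messages (as OpenAI's Chat Completions API does). The model call itself is left out, and the NPC and its lines are made up.

```python
from collections import deque
from typing import Deque, Dict, List, Tuple


class NPC:
    """An NPC with a fixed persona and a rolling memory of recent exchanges."""

    def __init__(self, name: str, persona: str, memory_size: int = 4):
        self.name = name
        self.persona = persona
        # deque(maxlen=...) silently drops the oldest exchange, bounding prompt length.
        self.memory: Deque[Tuple[str, str]] = deque(maxlen=memory_size)

    def build_messages(self, player_input: str) -> List[Dict[str, str]]:
        """Assemble persona + remembered exchanges + the new input as chat messages."""
        messages = [{"role": "system",
                     "content": f"You are {self.name}. {self.persona} Stay in character."}]
        for player_line, npc_line in self.memory:
            messages.append({"role": "user", "content": player_line})
            messages.append({"role": "assistant", "content": npc_line})
        messages.append({"role": "user", "content": player_input})
        return messages

    def remember(self, player_input: str, npc_reply: str) -> None:
        self.memory.append((player_input, npc_reply))


blacksmith = NPC("Brom", "A gruff blacksmith who distrusts strangers.", memory_size=2)
blacksmith.remember("Can you fix my sword?", "Aye, for 20 gold.")
msgs = blacksmith.build_messages("I'm back with the gold.")
print(len(msgs))  # 4: system, remembered user turn, remembered reply, new user turn
```

A fixed-size window is the simplest option; longer-lived memories are often summarized or stored in a vector database and retrieved when relevant.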

Although LLMs like ChatGPT aren't yet true artificial general intelligence (AGI), we can enhance their capabilities to make NPCs appear more AGI-like by improving contextual understanding and knowledge.

You might be wondering about latency. It is an important consideration for in-game responsiveness, but options such as Azure OpenAI's provisioned (dedicated) capacity for your deployments can alleviate some of these concerns.

3. Payment and order placement Bots

Getting this to behave deterministically takes a lot of work: careful prompt engineering coupled with code integrations that perform validation checks and balances. It is, however, possible to design a reliable bot that can be embedded into any platform or mobile app, carry out predefined instructions, and provide a conversational interface for users.
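A common way to add those checks and balances is to let the LLM only extract the order into structured JSON and have ordinary code validate it and compute the amount to charge. A minimal sketch, assuming a hypothetical in-memory `CATALOG` and a JSON string standing in for the model's extraction:

```python
import json
from decimal import Decimal

# Hypothetical catalog: prices come from your system, never from the model.
CATALOG = {"latte": Decimal("4.50"), "croissant": Decimal("3.00")}
MAX_QTY = 10


def validate_order(llm_json: str) -> Decimal:
    """Check an LLM-extracted order against the catalog and return the total to charge."""
    order = json.loads(llm_json)
    total = Decimal("0")
    for item in order["items"]:
        name, qty = item["name"], item["quantity"]
        if name not in CATALOG:
            raise ValueError(f"Unknown item: {name}")
        if not isinstance(qty, int) or not 1 <= qty <= MAX_QTY:
            raise ValueError(f"Invalid quantity for {name}: {qty}")
        total += CATALOG[name] * qty
    return total


# e.g. what the LLM might extract from "two lattes and a croissant please"
print(validate_order('{"items": [{"name": "latte", "quantity": 2}, {"name": "croissant", "quantity": 1}]}'))
```

Only after this deterministic step succeeds would the bot ask the user to confirm and hand off to the payment provider.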

Natural language can be an opportunity to design minimalist interfaces, often called conversational interfaces, that give users a chat-like experience. Here is an oversimplified GitHub repo that gets the point across.

Do take note: as you head down the rabbit hole of applications that act with less human oversight, extensive testing will be required. You do not want to let unsupervised AI loose on your paying customers, which can lead to unintended consequences.

4. Data Visualisation Dashboard

One of the more interesting and underrated use cases of LLMs is their ability to interpret structured datasets, which can then be translated into dynamic dashboard visualizations through natural language queries. These interactive dashboards allow novice users to query and view data visually in real time without forking out a considerable upfront investment to build customized solutions or paying for commercial BI tools such as Power BI.

The value proposition lies in productivity and potential client cost savings, as data inquiry and insight generation can shift toward natural language. Using NLP means data can be analyzed from different perspectives more easily, without needing a developer to build custom static reports.

For instance, you can use the data visualization dashboard and ask ChatGPT to

"Provide the data for demographic audience X between 2022–2023 who had Diabetes".

You can refine this query by adding variables such as,

"Provide the data of demographic audience X women between 2022–2023 who had Diabetes".

In this way, you can analyze the same data with different combinations of variables while being able to visualize, compare, and contrast.
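Rather than asking the model for the numbers themselves, one approach is to have it translate the question into a structured filter that your own code applies, so the figures always come from your data. A minimal sketch with made-up records, assuming the LLM returns a spec shaped like `spec` below:

```python
from typing import Dict, List

# Hypothetical records standing in for a real table.
RECORDS = [
    {"audience": "X", "gender": "female", "year": 2022, "condition": "Diabetes"},
    {"audience": "X", "gender": "male", "year": 2023, "condition": "Diabetes"},
    {"audience": "X", "gender": "female", "year": 2021, "condition": "Diabetes"},
    {"audience": "Y", "gender": "female", "year": 2023, "condition": "Asthma"},
]


def apply_filter(records: List[dict], spec: Dict) -> List[dict]:
    """Apply a structured filter (as an LLM might produce it) to the records."""
    result = []
    for r in records:
        if not spec["year_from"] <= r["year"] <= spec["year_to"]:
            continue
        if any(r[key] != value for key, value in spec.get("equals", {}).items()):
            continue
        result.append(r)
    return result


# Spec the LLM could return for the refined query (women in audience X, 2022-2023, Diabetes).
spec = {"year_from": 2022, "year_to": 2023,
        "equals": {"audience": "X", "gender": "female", "condition": "Diabetes"}}
print(apply_filter(RECORDS, spec))
```

Refining the query then only changes the spec (for example, dropping `gender`), and the filtered rows can be handed to whatever charting library the dashboard uses.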

For certain complex calculations, instead of having a large language model (LLM) produce the answer directly, it can be more effective to have the LLM generate code that performs the calculation and then execute that code to obtain the result. For a quick example, you can refer to the LangChain framework documentation here.

To get started, refer to this article, which provides further insights and inspiration on querying your data using Langchain.
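As a sketch of the generate-then-execute idea, the snippet below evaluates a model-generated arithmetic expression. This is a swapped-in technique, not LangChain's: instead of running arbitrary generated code with `exec`, it walks the expression's syntax tree with Python's `ast` module and allows only numbers and basic arithmetic operators. The `generated` string is a hypothetical model output.

```python
import ast
import operator

# Only these operators are allowed in model-generated expressions.
_OPS = {
    ast.Add: operator.add, ast.Sub: operator.sub,
    ast.Mult: operator.mul, ast.Div: operator.truediv,
    ast.USub: operator.neg,
}


def safe_eval(expr: str) -> float:
    """Evaluate an arithmetic expression, rejecting names, calls, attributes, etc."""
    def _eval(node):
        if isinstance(node, ast.Expression):
            return _eval(node.body)
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](_eval(node.left), _eval(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](_eval(node.operand))
        raise ValueError(f"Disallowed expression: {ast.dump(node)}")
    return _eval(ast.parse(expr, mode="eval"))


# Hypothetical model output for "What is the average of 1200, 950 and 1430?"
generated = "(1200 + 950 + 1430) / 3"
print(safe_eval(generated))
```

For anything beyond arithmetic (for example, generated pandas code), you would need a proper sandbox rather than a whitelist like this one.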

5. Micro-Chatbots

I like to refer to this idea as micro-chatbots: chatbots treated as single-use utilities or small companions, each serving one specific use case, that are chained together to perform a task. When you use a framework such as LangChain, whose design pattern most similar frameworks have followed, this idea is referred to as agent chaining. If you have dabbled with LangChain, agent tools, or OpenAI function calling (tools), this should ring a bell.
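The chaining itself is simple composition: each micro-bot's output becomes the next one's input. A minimal sketch in which plain functions stand in for single-purpose LLM calls (the bots and the order data are hypothetical):

```python
from typing import Callable


# Each "micro-bot" does exactly one job; plain functions stand in for small LLM calls.
def extract_order_id(text: str) -> str:
    words = [w.strip(".,?!") for w in text.split()]
    return next((w for w in words if w.startswith("ORD-")), "")


def lookup_status(order_id: str) -> str:
    statuses = {"ORD-42": "shipped"}  # hypothetical data source
    return statuses.get(order_id, "not found")


def write_reply(status: str) -> str:
    return f"Your order is {status}."


def chain(*bots: Callable[[str], str]) -> Callable[[str], str]:
    """Compose micro-bots so each one's output becomes the next one's input."""
    def run(text: str) -> str:
        for bot in bots:
            text = bot(text)
        return text
    return run


support_flow = chain(extract_order_id, lookup_status, write_reply)
print(support_flow("Hi, where is ORD-42?"))
```

Because each bot has a single responsibility, any one of them can be swapped for an LLM call, a rule, or an API request without touching the others.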

Zapier Zaps and VectorShift, a SaaS workflow platform, are the examples that come closest to this idea. The content behind a disposable chatbot can be a PDF or another document type, a website, or a conversation transcript between a customer and a service representative.

Semantic search can be performed conversationally on this text data. There are two approaches to building this: provide a wizard-based user experience, where users select the components and model they want and then supply the system messages and guardrails for the bot in an assisted fashion; or give users the ability to tailor a fully custom bot to their needs.
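For the wizard-based approach, the wizard's job is essentially to fill in a bot specification. A minimal sketch of such a spec, where the field names, the placeholder model name, and the keyword-blocklist guardrail are all assumptions (real guardrails are usually far more involved):

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class BotSpec:
    """What a wizard-style builder might collect from a user (all fields hypothetical)."""
    name: str
    model: str
    system_message: str
    source_documents: List[str] = field(default_factory=list)
    blocked_topics: List[str] = field(default_factory=list)

    def guard(self, user_input: str) -> bool:
        """Crude guardrail: reject inputs that mention a blocked topic."""
        lowered = user_input.lower()
        return not any(topic.lower() in lowered for topic in self.blocked_topics)

    def to_messages(self, user_input: str) -> List[Dict[str, str]]:
        """Build the chat messages the bot would send to its model."""
        if not self.guard(user_input):
            raise ValueError("Input violates this bot's guardrails")
        context = "\n".join(self.source_documents)
        system = f"{self.system_message}\nAnswer only from this content:\n{context}"
        return [{"role": "system", "content": system},
                {"role": "user", "content": user_input}]


hr_bot = BotSpec(
    name="leave-policy-bot",
    model="gpt-4o-mini",  # placeholder model name
    system_message="You answer questions about the company leave policy.",
    source_documents=["Employees get 20 days of annual leave."],
    blocked_topics=["salary"],
)
print(hr_bot.to_messages("How many days of leave do I get?"))
```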

To better understand these concepts, check out Zapier workflows, which are the closest example I can think of that illustrates this point well.

If you are a backend developer, another approach is a conversational interface for power users, where they can speak a bot into existence using natural language. Users choose which API services the bot should interact with to achieve its goal, similar to AutoGPT.

6. Fraud Detection and Sentiment Analysis using LLM NLP Abilities

LLMs are pretty good at sentiment analysis and can therefore serve as one signal in detecting fraud. Scammers constantly find new ways to commit cyber fraud, often by bypassing the security protocols of software and applications, so software engineers must stay ahead of them to keep their clients secure.

LLMs cannot be used alone here: data preprocessing will be vital to getting the best outcomes, as will classifying text into sentiment categories (positive, negative, neutral). GPT-3.5 was geared more toward generating coherent and contextually relevant responses, but you are no longer limited to it. You now have GPT-4o, GPT-4, AWS Titan, Anthropic's Claude 3 Sonnet and Claude 3 Haiku, Cohere, and Mistral AI, many of which offer much larger context windows, meaning you can perform more complex tasks without the limitations we had in 2023.

In this regard, professionals can use ChatGPT to generate engineering project ideas. An LLM can analyze a message's content and wording to help assess whether it comes from a genuine authority or shows the hallmarks of a scam.

In this way, engineers can use ChatGPT to develop software and applications for fraud detection and incorporate other sophisticated technologies to enhance their results.

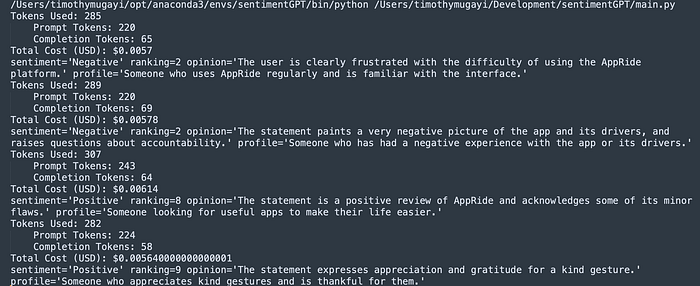

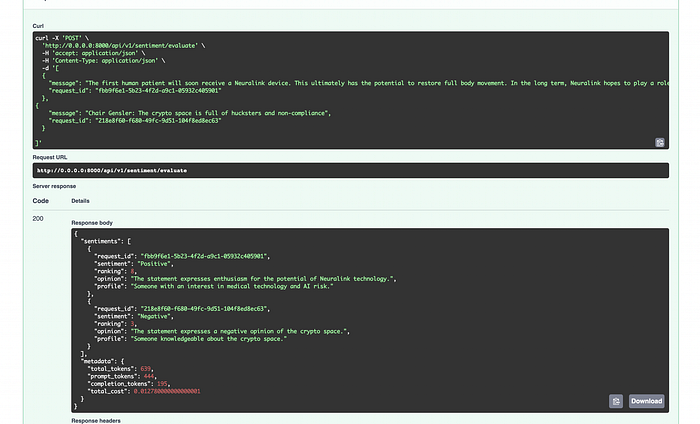

Let's look at a basic code example that illustrates this. Note that you need to set OPENAI_API_KEY as an environment variable to run the Python code. The link to the GitHub repo with the required dependencies is attached below.

SentimentGPT is a simple demo microservice I built for basic sentiment classification. The idea is to pipe in data from big data sources, such as Google BigQuery, and classify it.

```python
import json
import os
from json import JSONDecodeError
from typing import Any, List

from openai import OpenAI  # openai>=1.0
from pydantic import BaseModel, Field, ValidationError

from config import get_openai_token_cost_for_model  # helper from the repo linked below

PROMPT = """
Sentiment: What is the sentiment of this statement?
Ranking: On a scale of 1 to 10, how positive or negative is this review?
Opinion: What is your opinion of this statement?
Profile: What is the profile of the person who would write this review?
---------
{statement}
------
Format the response as a JSON object, for example:
{{
  "sentiment": "Neutral",
  "ranking": 5,
  "opinion": "The statement presents an interesting possibility that should be further investigated.",
  "profile": "Someone interested in global events and current affairs."
}}
"""


class SentimentResponseSchema(BaseModel):
    sentiment: str = Field(..., description="The sentiment associated with the response.")
    ranking: int = Field(..., description="From 1 (extremely negative) to 10 (extremely positive).")
    opinion: str = Field(..., description="Model opinion or feedback.")
    profile: str = Field(..., description="The user's profile classification.")


class OutputParserException(ValueError):
    """Raised when the model output cannot be parsed into the expected schema."""


class SentimentOutputParser(BaseModel):
    response_schemas: Any
    text: str

    def parse_json(self) -> SentimentResponseSchema:
        try:
            json_obj = json.loads(self.text.strip())
        except JSONDecodeError as e:
            raise OutputParserException(f"Got invalid JSON object. Error: {e}")
        # Every field declared on the schema must be present in the reply.
        for key in SentimentResponseSchema.model_fields:
            if key not in json_obj:
                raise OutputParserException(
                    f"Got invalid return object. Expected key `{key}` "
                    f"to be present, but got {json_obj}"
                )
        try:
            return SentimentResponseSchema.model_validate(json_obj)
        except ValidationError as e:
            raise OutputParserException(f"Response failed schema validation. Error: {e}")


class SentimentAgent:
    # text-davinci-003 and the legacy Completions endpoint have been retired, so a chat
    # model is used instead; the cost table in config must know this model name.
    model_name: str = "gpt-4o-mini"

    @classmethod
    def evaluate_sentiment(cls, prompt: str) -> SentimentResponseSchema:
        if prompt is None:
            raise ValueError("prompt required")
        client = OpenAI(api_key=os.environ.get("OPENAI_API_KEY"))
        response = client.chat.completions.create(
            model=cls.model_name,
            messages=[{"role": "user", "content": prompt}],
            response_format={"type": "json_object"},  # ask for a JSON-only reply
            temperature=0.7,
            max_tokens=256,
            top_p=1,
            frequency_penalty=0,
            presence_penalty=0,
        )
        # Token usage drives the cost estimate printed below.
        usage = response.usage
        prompt_tokens = usage.prompt_tokens if usage else 0
        completion_tokens = usage.completion_tokens if usage else 0
        total_tokens = usage.total_tokens if usage else 0
        prompt_cost = get_openai_token_cost_for_model(cls.model_name, prompt_tokens)
        completion_cost = get_openai_token_cost_for_model(
            cls.model_name, completion_tokens, is_completion=True
        )
        total_cost = prompt_cost + completion_cost
        print(
            f"Tokens Used: {total_tokens}\n"
            f"\tPrompt Tokens: {prompt_tokens}\n"
            f"\tCompletion Tokens: {completion_tokens}\n"
            f"Total Cost (USD): ${total_cost}"
        )
        return SentimentOutputParser(
            response_schemas=SentimentResponseSchema,
            text=response.choices[0].message.content or "",
        ).parse_json()


def get_google_play_store_app_reviews() -> List[str]:
    reviews = [
        "Booking a ride is is error prone and unintuitive. You cannot select your target destination directly on the map, you must choose from a list that AppRide knows. Once selected, the map opens asking you where you would like to be picked up. You cannot check your destination the map until after AppRide starts looking for a driver. Such a bad interface.",
        "Drivers tend to cancel last minute. The app is very bad at updating the location of the driver. ",
        "Excellent and must have app for living in Thailand and any other country that uses AppRide! Only negative is that when ordering food or groceries, there is a lot that is in Thai and/or another language. Thankfully, there are pictures for everything and you can always screenshot then use google translate, but it would be great if there was a way to have a built-in translator. That has caused very few issues for me, but it is an issue. However, NOT one that should scare someone away from using this.",
        "I would like to extend my thanks to the driver. He has been patient with us, and pleasant with a ready smile. He has been accommodating and did his best to help us fit the TV inside his cab, not a small feat and we manage to settle in. His service is exceptional and worth mentioning. It is rare to see such kindness. Thank you so much for all your help, your service is commendable and greatly appreciated",
    ]
    return reviews


if __name__ == "__main__":
    for review in get_google_play_store_app_reviews():
        sentiment = SentimentAgent.evaluate_sentiment(PROMPT.format(statement=review))
        print(sentiment)
```

For the full source code and PyPI dependencies, see the Git repo here. I have expanded on the above idea there to give you some clarity on how you can build this as a service.

Costs can quickly add up, so leveraging an open-source model could be an alternative approach when scaling your solution. Weigh the costs against the potential ROI before committing to paid models such as GPT. You may also want to try Llama 3 via Ollama, which you can run on your local machine for free.
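Before choosing between a paid API and a self-hosted model, a back-of-the-envelope estimate helps. A minimal sketch; the per-million-token prices in the example call are placeholders, not current list prices, so check your provider's pricing page:

```python
def estimate_batch_cost(
    num_items: int,
    avg_prompt_tokens: int,
    avg_completion_tokens: int,
    price_per_1m_prompt: float,
    price_per_1m_completion: float,
) -> float:
    """Estimated USD cost of classifying num_items texts with a per-token-priced model."""
    prompt_cost = num_items * avg_prompt_tokens * price_per_1m_prompt / 1_000_000
    completion_cost = num_items * avg_completion_tokens * price_per_1m_completion / 1_000_000
    return prompt_cost + completion_cost


# One million reviews, ~250 prompt and ~80 completion tokens each, placeholder prices.
cost = estimate_batch_cost(1_000_000, 250, 80, price_per_1m_prompt=0.15, price_per_1m_completion=0.60)
print(f"${cost:,.2f}")
```

Running the same numbers against the hardware cost of self-hosting gives a rough break-even point for switching to an open-source model.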

7. Personalized Recommendation Engine Using Machine Learning

Recommender systems have become an integral part of our daily lives, helping us navigate the sheer volume of online information. These systems curate personalized recommendations from e-commerce platforms to streaming services based on our preferences, browsing history, and other data points.

Imagine integrating a bot with N e-commerce sites and asking GPT to provide insights and the best prices or recommendations across multiple channels.

ChatGPT can serve as a helpful assistant for building applications that analyze users' behavior patterns.

For instance, analysis of a dataset may show that people in a certain age group like to listen to a particular genre of music. This knowledge can be used to tailor recommendations to the music an individual is likely to enjoy. The company reaps the benefits as a by-product, gaining a better understanding of its customers, while customers do not have to waste time searching for the services they prefer.
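The age-group example can be expressed as a simple popularity baseline. This sketch deliberately uses plain counting rather than an LLM, with made-up listening data; in an LLM-powered service, its output could be passed to the model as context for the final recommendation.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Tuple

# Hypothetical listening history: (user_age, genre)
PLAYS: List[Tuple[int, str]] = [
    (19, "pop"), (22, "hip-hop"), (24, "hip-hop"), (21, "pop"), (23, "hip-hop"),
    (45, "jazz"), (52, "classic rock"), (48, "jazz"),
]


def age_group(age: int) -> str:
    return "18-29" if age < 30 else "30-44" if age < 45 else "45+"


def top_genre_by_group(plays: List[Tuple[int, str]]) -> Dict[str, str]:
    """Most-played genre per age group: a simple popularity baseline."""
    counts: Dict[str, Counter] = defaultdict(Counter)
    for age, genre in plays:
        counts[age_group(age)][genre] += 1
    return {group: c.most_common(1)[0][0] for group, c in counts.items()}


print(top_genre_by_group(PLAYS))
```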

Recommendation engines come in all shapes and sizes. Areas I personally find interesting are food ordering and trip planning. Yes, you can build something very sophisticated that you can sell as a service if done correctly. Even if you are not very good at building beautiful user interfaces, frameworks similar to LangChain, such as CrewAI, allow you to achieve this to a degree.

An alternative is an API-based service that can provide a channel for users to easily integrate into your service. Think of ChatGPT plugins, where the underlying layer of the API is also a chain of ML models that provide customized recommendations based on previous preferences.

8. Synthetic Data generators

As organizations grow, data privacy and governance become crucially important. In software development, developers need access to data to test, maintain, and replicate environments that closely mimic production. Yet large organizations are plagued by compliance issues, and co-mingling production data in test environments is strictly a no-no. There are real use cases for LLMs here in synthetic data preparation.

There are many benefits that synthetic data provides, such as:

- It provides a cheaper way to prepare data in situations where you need to rebalance a dataset by distributing its classes more evenly

- It helps maintain PII data privacy, mitigates HIPAA risks, and encourages adherence to data regulations such as GDPR when testing, training, and replicating at scale.

- It helps promote fairness and inclusivity in AI models if data generation is prepared in such a way that it avoids biases.
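If you use an LLM to generate synthetic records, treat its output as untrusted and validate it before it reaches a test environment. A minimal sketch, assuming a hypothetical three-field schema and a hard-coded string standing in for the model's response:

```python
import json
from typing import Dict, List

# Hypothetical schema for the synthetic records.
SCHEMA: Dict[str, type] = {"patient_id": str, "age": int, "diagnosis": str}


def build_prompt(n_rows: int) -> str:
    """Prompt asking the model for fictional rows that follow the schema."""
    fields = ", ".join(f"{k} ({t.__name__})" for k, t in SCHEMA.items())
    return (f"Generate {n_rows} fictional patient records as a JSON array with fields: {fields}. "
            "Do not use real names or real identifiers.")


def validate_rows(raw: str) -> List[dict]:
    """Keep only rows whose keys and value types match the schema exactly."""
    rows = json.loads(raw)
    return [row for row in rows
            if set(row) == set(SCHEMA) and all(isinstance(row[k], t) for k, t in SCHEMA.items())]


# Stand-in for a model response; the second row has the wrong type for "age" and is dropped.
fake_response = ('[{"patient_id": "P-001", "age": 54, "diagnosis": "Type 2 diabetes"}, '
                 '{"patient_id": "P-002", "age": "forty", "diagnosis": "Asthma"}]')
print(build_prompt(2))
print(validate_rows(fake_response))
```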

One of the challenges startups face is not having enough data. Building a custom data generation solution could turn into a perpetual project that needs a long runway before it returns any ROI. Leveraging an LLM could hit the sweet spot between speed and cost.

How to incorporate billing into LLM-powered Applications

After all, when you build any software, the goal is usually to capitalize on an opportunity that can deliver ROI. How you build monetization into your LLM application needs to be planned before you launch, as each transaction has a cost, which can quickly rack up large bills. I want to take a moment to share some ideas on how you may approach monetization.

Bring your own key: With this model, consumers bring their own OpenAI API keys. You have no upfront cloud LLM costs and therefore no need to build anything extra to manage quotas; you only pay for the compute that hosts your application. This approach does mean building a feature that stores users' API keys securely. You should also get legal advice to make sure your privacy policies are clear and protect both you and your users.

Quota-based + server hosting costs: With this approach, you pay for LLM usage yourself, so whatever LLM idea you build will need a quota-based or subscription model as part of the application code.
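The quota system can start as a simple per-user token allowance. A minimal in-memory sketch with hypothetical plan sizes; a real service would persist usage and reconcile it against the token counts the API reports for each request.

```python
from collections import defaultdict
from typing import Dict


class TokenQuota:
    """Per-user token allowance (plan sizes are hypothetical)."""

    PLANS: Dict[str, int] = {"free": 50_000, "pro": 2_000_000}

    def __init__(self) -> None:
        self.used: Dict[str, int] = defaultdict(int)
        self.plan: Dict[str, str] = {}

    def subscribe(self, user: str, plan: str) -> None:
        self.plan[user] = plan

    def remaining(self, user: str) -> int:
        limit = self.PLANS[self.plan.get(user, "free")]
        return max(limit - self.used[user], 0)

    def charge(self, user: str, tokens: int) -> bool:
        """Record usage if it fits in the quota; return False to block the request."""
        if tokens > self.remaining(user):
            return False
        self.used[user] += tokens
        return True


quota = TokenQuota()
quota.subscribe("alice", "free")
print(quota.charge("alice", 40_000))  # True
print(quota.charge("alice", 20_000))  # False: only 10,000 tokens left
print(quota.remaining("alice"))       # 10000
```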

Lease your code for a yearly or monthly fee (B2B): If you are a freelance developer or just getting started, this could be a viable option with lower upfront costs. The main cost here is the time spent maintaining the code.

Tokenization by incorporating a cryptocurrency: With this strategy, you can broaden your reach. This approach offers several key benefits, including global accessibility without relying on traditional banking, frictionless and low-fee payments ideal for micro-transactions, and the ability to monetize via token economies where users purchase tokens to access the LLM's features.

This approach also ensures transparency and decentralization through blockchain while enabling a flexible, pay-as-you-go model where users pay only for the services they consume, as seen in projects like PAAL AI and ChainGPT.

Final Thoughts

AI can provide significant value to businesses in various industries. As we transition out of the LLM hype cycle, I think it is a good time to start thinking more seriously about use cases that can benefit from LLMs, which can drive real value and ROI in your existing workflows.

If you are looking for more innovative, out-of-the-box ideas to build with LLMs, an excellent place to start for inspiration is theresanaiforthat.com, which lists a large number of AI tools built on different models.

There is also an X thread highlighting top AI agents showcased at a hackathon hosted by MultiOn & AgentOp, which lists some interesting ideas to get you started here.

If this piece helped spark ideas, I would love to hear from you in the comments section.