Kubernetes powers modern cloud infrastructure, but uncontrolled clusters often waste 30–50% of spend on idle resources and misconfigurations. This guide delivers actionable FinOps strategies, code examples, and real-world use cases to reclaim that waste without sacrificing performance.

Understanding Kubernetes Cost Drivers

Kubernetes costs stem from compute nodes, storage, networking, and load balancers, amplified by overprovisioning and dynamic scaling. Nodes represent 60–70% of expenses, where idle capacity and oversized pods drive waste. Labels and namespaces enable cost allocation, but poor tagging obscures visibility into team or app-level spending.

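Resource requests guarantee a minimum allocation and drive scheduling, while limits cap maximum usage so that one pod cannot starve others. If a container sets a limit but no request, Kubernetes uses the limit as the request; if it sets neither, the pod runs in the BestEffort class and is among the first to be evicted under node pressure. Either way the scheduler works from inaccurate numbers, which leads to inefficient node packing and evictions during bursts. Common pitfalls include setting requests too high (overprovisioning) or omitting limits (noisy neighbor issues).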
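To make the defaulting rule concrete, here is a minimal sketch of a Pod whose container declares limits only. The pod name is hypothetical and the image is a placeholder. Because no request is given, Kubernetes copies each limit into the matching request, so the scheduler treats this container as requesting 500m CPU and 128Mi of memory.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: limits-only-demo   # hypothetical name, for illustration only
spec:
  containers:
    - name: app
      image: nginx:1.21    # placeholder image
      resources:
        # No requests block: each limit is copied into the matching
        # request, giving requests of cpu=500m and memory=128Mi.
        limits:
          cpu: "500m"
          memory: "128Mi"
```

Since requests now equal limits for both CPU and memory, the pod should land in the Guaranteed QoS class, which can be checked with kubectl get pod limits-only-demo -o jsonpath='{.status.qosClass}'.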

Key FinOps Principles for K8s

FinOps aligns engineering, finance, and business through visibility, forecasting, and optimization phases. In Kubernetes, start with tagging: apply labels like team: devops, env: prod, and app: ecommerce to every resource for accurate showback. Use namespace ResourceQuotas to enforce budgets per team.
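As a rough sketch of that tagging scheme, the manifest below applies the three labels to a namespace, a Deployment, and its pod template. The namespace name, workload name, image, and request values are assumptions made for illustration; only the label keys and values come from the text.

```yaml
apiVersion: v1
kind: Namespace
metadata:
  name: ecommerce-prod       # hypothetical namespace name
  labels:
    team: devops
    env: prod
    app: ecommerce
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: checkout             # hypothetical workload
  namespace: ecommerce-prod
  labels:
    team: devops
    env: prod
    app: ecommerce
spec:
  replicas: 2
  selector:
    matchLabels:
      app: ecommerce
  template:
    metadata:
      labels:                # template labels are copied onto every pod
        team: devops
        env: prod
        app: ecommerce
    spec:
      containers:
        - name: checkout
          image: nginx:1.21  # placeholder image
          resources:
            requests:
              cpu: "100m"
              memory: "128Mi"
```

Labels on the Deployment object alone do not reach the pods; repeating them in the pod template is what lets usage be grouped by team, env, or app at the pod level.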

Implement the "right-sizing, right-zone, right-time" framework. Right-sizing matches requests/limits to 95th percentile usage; right-zone selects cheaper regions; right-time schedules non-prod workloads on spot instances. Anomaly detection via alerts catches spikes early, preventing bill shocks.

Setting Resource Requests and Limits

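Proper requests/limits prevent overcommitment. Requests inform the scheduler; limits are enforced on the node through cgroups. A container that exceeds its CPU limit is throttled, while one that exceeds its memory limit is OOM-killed.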

Here's a YAML example for a Deployment:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx-app
    spec:
      replicas: 3
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
            - name: nginx
              image: nginx:1.21
              resources:
                # Requests: what the scheduler reserves on a node.
                requests:
                  memory: "64Mi"
                  cpu: "250m"
                # Limits: the hard cap enforced at runtime.
                limits:
                  memory: "128Mi"
                  cpu: "500m"

Apply it: kubectl apply -f deployment.yaml. Verify with kubectl describe pod <pod-name>, which shows the requests and limits of each container. Best practice: Set limits 10–30% above requests for bursty apps like Java; use Vertical Pod Autoscaler (VPA) to recommend values from metrics.
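For the VPA suggestion, a minimal sketch of a recommendation-only VerticalPodAutoscaler targeting the nginx-app Deployment could look like the following. It assumes the VPA components and CRDs are already installed in the cluster (they are not part of a default Kubernetes install), and the object name is hypothetical.

```yaml
apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: nginx-vpa            # hypothetical name
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: nginx-app
  updatePolicy:
    updateMode: "Off"        # recommend only; never evict or resize pods
```

With updateMode set to "Off", the recommender only publishes suggested requests, which can be read with kubectl describe vpa nginx-vpa and then applied to the Deployment by hand once they look reasonable.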

Use Case: E-commerce Platform Rightsizing

An e-commerce firm analyzed Prometheus metrics revealing pods requesting 2x actual CPU usage. Adjusting to p95 values cut CPU by 30% and memory by 25%, saving 20% on bills without downtime.

Implementing Autoscaling

Horizontal Pod Autoscaler (HPA) scales replicas based on CPU/memory targets; Cluster Autoscaler adds/removes nodes.

YAML for HPA:

    apiVersion: autoscaling/v2
    kind: HorizontalPodAutoscaler
    metadata:
      name: nginx-hpa
    spec:
      # The workload whose replica count is adjusted.
      scaleTargetRef:
        apiVersion: apps/v1
        kind: Deployment
        name: nginx-app
      minReplicas: 3
      maxReplicas: 10
      metrics:
        - type: Resource
          resource:
            name: cpu
            target:
              type: Utilization
              # Percentage of the pods' CPU requests, averaged across pods.
              averageUtilization: 70

Load test with hey or wrk to see scaling. Combine with Karpenter or Cluster Autoscaler for node efficiency.
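Because averageUtilization is measured against CPU requests, the HPA only behaves sensibly once requests are accurate. Under spiky load it can also help to slow down scale-in so replicas are not removed and re-added minute by minute. The sketch below repeats the nginx-hpa manifest with an added behavior block; the policy values are illustrative assumptions, not tuned recommendations.

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: nginx-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: nginx-app
  minReplicas: 3
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70
  behavior:
    scaleDown:
      # Act on the highest recommendation seen in the last 5 minutes
      # (300 is also the default for scale-down).
      stabilizationWindowSeconds: 300
      policies:
        # Remove at most half of the current replicas per minute.
        - type: Percent
          value: 50
          periodSeconds: 60
```

A shorter window releases capacity sooner but risks flapping; a longer one keeps idle replicas around. Which side to favor depends on how quickly the workload's traffic rebounds.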

Use Case: Batch Processing

A media company used HPA for video transcoding jobs, scaling from 5 to 50 pods during peaks and down to 2 off-peak. This reduced costs 40% while handling 30% more throughput.

Leveraging Spot Instances

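Spot instances are typically discounted 60–90% against on-demand pricing, but the provider can reclaim them at short notice. Isolate them in dedicated node pools and use taints and tolerations so that only interruption-tolerant workloads land there.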

Example NodeGroup config (EKS via Terraform snippet):

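    resource "aws_eks_node_group" "spot" {
      node_group_name = "spot-nodes"
      cluster_name    = "my-cluster"

      # node_role_arn and subnet_ids are required by this resource.
      # The variables are placeholders assumed to be defined elsewhere.
      node_role_arn = var.node_role_arn
      subnet_ids    = var.subnet_ids

      # Several instance types widen the spot capacity pools to draw from.
      instance_types = ["m5.large", "c5.large"]
      capacity_type  = "SPOT"

      scaling_config {
        desired_size = 2
        max_size     = 10
        min_size     = 0
      }

      # Keep pods off these nodes unless they explicitly tolerate the taint.
      taint {
        key    = "spot"
        value  = "true"
        effect = "NO_SCHEDULE"
      }
    }

Tolerate in pod spec: tolerations: [{key: "spot", operator: "Equal", value: "true", effect: "NoSchedule"}]. The EKS API spells the effect NO_SCHEDULE, which appears on the node as the Kubernetes taint effect NoSchedule. Tools like Karpenter automate instance-type diversification.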
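Written out in full, the toleration might sit in a manifest like the sketch below. The Job name, image, and command are hypothetical. The nodeSelector relies on the eks.amazonaws.com/capacityType label that EKS managed node groups set on their nodes, so it is an assumption that holds only for that setup.

```yaml
apiVersion: batch/v1
kind: Job
metadata:
  name: nightly-report       # hypothetical job
spec:
  backoffLimit: 4            # allow a few retries if a pod fails
  template:
    spec:
      restartPolicy: Never
      # Steer the pod onto spot capacity (label set by EKS managed node groups).
      nodeSelector:
        eks.amazonaws.com/capacityType: SPOT
      # Permit scheduling onto nodes carrying the spot=true:NoSchedule taint.
      tolerations:
        - key: "spot"
          operator: "Equal"
          value: "true"
          effect: "NoSchedule"
      containers:
        - name: report
          image: busybox:1.36
          command: ["sh", "-c", "echo generating report && sleep 30"]
          resources:
            requests:
              cpu: "250m"
              memory: "128Mi"
```

A toleration only permits placement on tainted nodes; it does not attract the pod to them. The nodeSelector (or a node affinity rule) is what actually pins the workload to spot capacity.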

Use Case: CI/CD Pipelines

A dev team ran Jenkins on spot nodes, tolerating interruptions via checkpoints. Savings hit 70% for non-urgent builds, freeing budget for prod.

Namespace Quotas and Multi-Tenancy

ResourceQuota limits total namespace usage:

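    apiVersion: v1
    kind: ResourceQuota
    metadata:
      name: team-dev-quota
      namespace: dev
    spec:
      hard:
        # Sum of requests across all pods in the namespace.
        requests.cpu: "4"
        requests.memory: "8Gi"
        # Sum of limits across all pods in the namespace.
        limits.cpu: "6"
        limits.memory: "12Gi"
        # Maximum number of pods in the namespace.
        pods: "20"

Apply per namespace for team isolation. LimitRange sets defaults: defaultRequest: {cpu: 100m}.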
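A fuller LimitRange for the same dev namespace might look like the sketch below. Only the 100m CPU default request comes from the text; the object name and the remaining values are illustrative assumptions.

```yaml
apiVersion: v1
kind: LimitRange
metadata:
  name: dev-defaults         # hypothetical name
  namespace: dev
spec:
  limits:
    - type: Container
      defaultRequest:        # applied when a container sets no request
        cpu: 100m
        memory: 128Mi
      default:               # applied when a container sets no limit
        cpu: 250m
        memory: 256Mi
      max:                   # containers with a higher limit are rejected
        cpu: "2"
        memory: 2Gi
```

The two objects work as a pair: once a ResourceQuota tracks requests and limits for CPU and memory, pods that omit those values can be rejected at admission, and the LimitRange defaults are what keep such pods deployable.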

Use Case: Multi-Team Cluster

A SaaS provider enforced quotas across 10 teams, preventing one from monopolizing nodes. This enabled safe multi-tenancy, cutting per-team costs 25% via shared infra.

Monitoring and Visibility Tools

Kubecost/OpenCost allocate costs to namespaces/pods using Prometheus. StormForge or Goldilocks suggest rightsizing.

Dashboards should surface cluster efficiency (target above 85%) and the top spenders. Integrate with cloud billing data for forecasts.

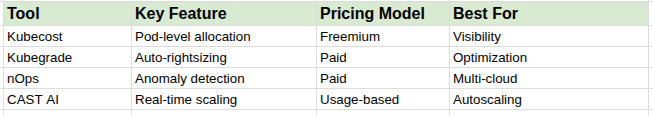

Top Tools Comparison

Advanced Optimization Strategies

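Node Affinity and Topology Spread: Schedule workloads to cheapest zones: nodeAffinity: {requiredDuringSchedulingIgnoredDuringExecution: {nodeSelectorTerms: [{matchExpressions: [{key: topology.kubernetes.io/zone, operator: In, values: [us-west-2a]}]}]}}. Pinning to a single zone trades availability for price, so reserve a hard requirement like this for workloads that can tolerate a zonal outage.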
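The inline snippet covers node affinity; the topology spread half can be sketched as follows. This example softens the zone rule to a preference and adds a spread constraint so replicas do not all pile into one zone. The workload name, weight, and maxSkew are illustrative assumptions, and us-west-2a is treated as the cheaper zone purely for the sake of the example.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: web                  # hypothetical workload
spec:
  replicas: 4
  selector:
    matchLabels:
      app: web
  template:
    metadata:
      labels:
        app: web
    spec:
      affinity:
        nodeAffinity:
          # Soft preference: favor the assumed-cheaper zone, allow others.
          preferredDuringSchedulingIgnoredDuringExecution:
            - weight: 80
              preference:
                matchExpressions:
                  - key: topology.kubernetes.io/zone
                    operator: In
                    values: ["us-west-2a"]
      topologySpreadConstraints:
        # Try to keep the per-zone replica counts within 2 of each other.
        - maxSkew: 2
          topologyKey: topology.kubernetes.io/zone
          whenUnsatisfiable: ScheduleAnyway
          labelSelector:
            matchLabels:
              app: web
      containers:
        - name: web
          image: nginx:1.21  # placeholder image
```

Using ScheduleAnyway keeps the constraint advisory, so a shortage in one zone does not leave pods pending. Switching to DoNotSchedule makes it strict, at the cost of possible scheduling failures.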

Cleanup Idle Resources: Run a scheduled CronJob to delete completed Jobs and unused PVCs, and cap the Job history that each CronJob retains: kubectl patch cronjob <name> -p '{"spec":{"successfulJobsHistoryLimit":3}}'.
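The same history cap can be set declaratively, and finished Jobs can be made to clean up after themselves with ttlSecondsAfterFinished. The manifest below is a sketch under assumed names; the schedule, image, command, and TTL are placeholders.

```yaml
apiVersion: batch/v1
kind: CronJob
metadata:
  name: nightly-export       # hypothetical name
spec:
  schedule: "0 2 * * *"      # every day at 02:00
  successfulJobsHistoryLimit: 3
  failedJobsHistoryLimit: 1
  jobTemplate:
    spec:
      # Delete each Job and its pods one hour after it finishes.
      ttlSecondsAfterFinished: 3600
      template:
        spec:
          restartPolicy: OnFailure
          containers:
            - name: export
              image: busybox:1.36
              command: ["sh", "-c", "echo exporting"]
```

Neither setting touches PersistentVolumeClaims. Finding claims that no pod mounts still needs a separate audit before anything is deleted.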

FinOps Workflow: Weekly reviews with engineering; chargeback via labels. AI tools like ScaleOps predict usage.

Use Case: Gaming Company

A gaming firm combined VPA, spot instances, and Kubecost. Idle pods dropped 60%, spot usage hit 50% of non-critical load, yielding 50% savings. Performance improved via precise scaling.

Measuring Success and Next Steps

Track metrics: cost per namespace, efficiency ratio (utilized/allocated), savings runway. Aim for 20–40% reduction in 90 days.
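One way to track the efficiency ratio is a set of Prometheus recording rules, sketched below for CPU. It assumes Prometheus already scrapes cAdvisor (for container_cpu_usage_seconds_total) and kube-state-metrics (for kube_pod_container_resource_requests). The group and rule names are made up for illustration, and "allocated" is interpreted here as requested CPU.

```yaml
groups:
  - name: finops-efficiency          # hypothetical rule group name
    rules:
      # CPU cores actually used per namespace, averaged over 5 minutes.
      - record: namespace:cpu_usage_cores:rate5m
        expr: |
          sum by (namespace) (
            rate(container_cpu_usage_seconds_total{container!=""}[5m])
          )
      # CPU cores requested per namespace.
      - record: namespace:cpu_requests_cores:sum
        expr: |
          sum by (namespace) (
            kube_pod_container_resource_requests{resource="cpu"}
          )
      # Efficiency ratio = utilized / allocated (usage divided by requests).
      - record: namespace:cpu_efficiency:ratio
        expr: |
          namespace:cpu_usage_cores:rate5m
            /
          namespace:cpu_requests_cores:sum
```

A ratio well below 1 suggests requests are padded; a ratio above 1 means pods are bursting past what they asked for. The request sum counts every pod that kube-state-metrics reports, so a production rule may need to filter down to running pods.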

Start small: Profile one namespace, apply requests/limits, add HPA. Scale to cluster-wide with tools. Collaborate via FinOps teams for sustained gains.