Part of my series on Automating Cybersecurity Metrics. GitHub. Also Deploying a Static Website. The Code.

In the last post I covered some of the security considerations and best practices if you choose to use GitHub Actions.

I also explained in the last two posts why I am apprehensive about using it, but if I did, I would host a private runner. That is more time and expense than I want to spend right now. If you host your own GitHub server in your own private network then the risk is lower, but access across the Internet is a bit too risky for me.

Triggering the build from the deployment environment and a nonce

So if we don't use GitHub Actions what other options do we have? Most of the time when you're dealing with network security you allow outbound access a bit more freely but you block inbound access as much as possible. When you allow inbound access typically that access has to go through layers of security to get to your data. This defense in depth model is a security best practice.

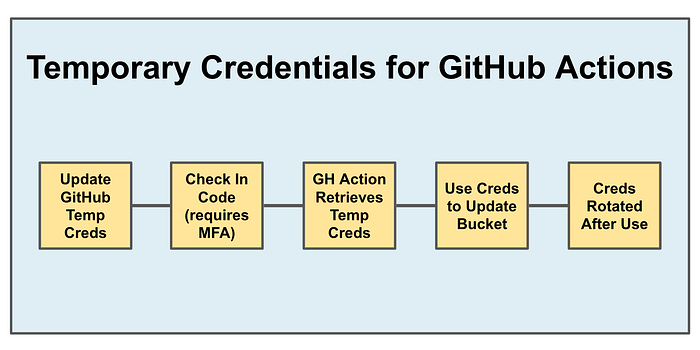

My first idea was something like this, where you trigger a build in your own environment. That generates temporary credentials that can be used one time. GitHub Actions can reach out to Secrets Manager with long-term credentials that can only read the secret. The GitHub action uses the temporary credentials to update the website, and then the credentials and related session are revoked.

That approach is similar to using OIDC where the cloud provider grants temporary credentials. In fact you might be able to emulate that with OIDC if you can terminate the credentials after the single action is complete, and you want to only allow the action if someone has triggered a push with MFA.

The benefit of the above is that the credentials to update the website S3 bucket do not live on GitHub. GitHub only has credentials that allow retrieval of those credentials.

If GitHub itself were somehow compromised and someone got access to the credentials in GitHub secrets or in a log somewhere or via code injection as explained in prior posts, they could reach out and get credentials out of AWS. But those AWS credentials used to update the S3 bucket should be stale if they were credentials that could only be used once, like a nonce. (A nonce is a token that can only be used once to prevent replay attacks.)
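To make the nonce idea concrete, here is a minimal sketch of a credential that works exactly once. It is not how AWS or Secrets Manager issues credentials. The `OneTimeCredentialStore` class and its method names are made up for illustration, and an in-memory dictionary stands in for whatever service would really track the tokens.

```python
import secrets
import time


class OneTimeCredentialStore:
    """In-memory stand-in for a service that issues single-use credentials."""

    def __init__(self, ttl_seconds=300):
        self.ttl_seconds = ttl_seconds
        self._issued = {}  # token -> expiry time (seconds since epoch)

    def issue(self, now=None):
        """Create a new random token that is valid once, until it expires."""
        now = time.time() if now is None else now
        token = secrets.token_urlsafe(32)
        self._issued[token] = now + self.ttl_seconds
        return token

    def redeem(self, token, now=None):
        """Return True the first time a valid token is presented, else False."""
        now = time.time() if now is None else now
        # pop() removes the token, so a replayed token is never found again.
        expiry = self._issued.pop(token, None)
        return expiry is not None and now <= expiry


store = OneTimeCredentialStore(ttl_seconds=60)
token = store.issue()
print(store.redeem(token))  # True: first use
print(store.redeem(token))  # False: replay of the same token
```

Anyone who copies the token out of a log after the build has used it gets nothing, which is the property I'm after.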

For the above with private IP address access such as I have set up, you would still need a private runner and that's all a bit much for a few static websites.

Pull versus Push, backups, multi-factor deployments, and integrity checks

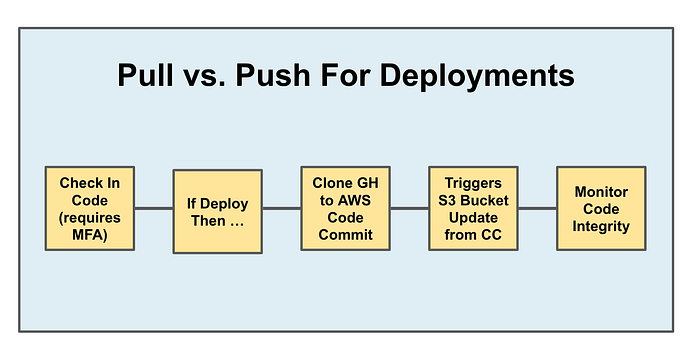

That could work but I also thought of another approach. What if I just write a script that I use to check in code (as I have done before) that handles other actions at the same time? The code check-in requires MFA. I don't store the credentials and the MFA. It requires a human to enter a password and check in code. Whether or not this is the case depends on how you configure GitHub, as I've explained in other posts.

That same script can ask the user if they also want to deploy the code they are checking in. In my experience, you might not want to deploy every single time you check in code, so I prefer having the option in a development environment.
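A rough sketch of what such a script might look like follows. The function names are mine, and `./deploy.sh` is a hypothetical placeholder for whatever actually kicks off the deployment. The git commands are the standard ones. Whether the push requires MFA depends on how GitHub and your credentials are configured.

```python
import subprocess


def wants_deploy(answer):
    """Treat only an explicit yes as consent to deploy."""
    return answer.strip().lower() in ("y", "yes")


def plan_commands(message, deploy):
    """Build the list of commands to run; nothing is executed here."""
    commands = [
        ["git", "add", "--all"],
        ["git", "commit", "-m", message],
        ["git", "push"],  # authentication happens here, per your GitHub setup
    ]
    if deploy:
        # Hypothetical placeholder for whatever starts the deployment.
        commands.append(["./deploy.sh"])
    return commands


def run(commands):
    """Run each command in order and stop at the first failure."""
    for command in commands:
        subprocess.run(command, check=True)


def check_in(input_fn=input, runner=run):
    """Ask for a commit message and whether to deploy, then run the plan."""
    message = input_fn("Commit message: ")
    deploy = wants_deploy(input_fn("Also deploy this code? [y/N] "))
    commands = plan_commands(message, deploy)
    runner(commands)
    return commands
```

Calling `check_in()` from a terminal prompts for both answers. The default is not to deploy, so an accidental Enter key only checks in the code.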

If the user wants to deploy the code, it is cloned from GitHub to AWS CodeCommit. AWS CodeCommit can reside in a private network. Perhaps the code is pulled down by some intermediary process locally and then checked into AWS CodeCommit somehow via a private network. In any case the process that clones from GitHub only gets read access, not write. So that process can't tamper with the code in GitHub.

Then the code is pushed to AWS CodeCommit. At some point an integrity check could exist that ensures the code that came from GitHub has not been altered. This integrity check needs to be handled by different credentials from those that had permission to push to CodeCommit.
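One way such an integrity check might work is to hash every file in two checkouts and compare the results. This is only a sketch using the Python standard library. The function names are mine, and it assumes the process running it has read access to a local copy of each repository.

```python
import hashlib
from pathlib import Path


def build_manifest(root):
    """Map each file's relative path to the SHA-256 digest of its contents."""
    root = Path(root)
    manifest = {}
    for path in sorted(root.rglob("*")):
        relative = path.relative_to(root)
        # Skip directories and git metadata, which differs between clones.
        if not path.is_file() or ".git" in relative.parts:
            continue
        digest = hashlib.sha256(path.read_bytes()).hexdigest()
        manifest[relative.as_posix()] = digest
    return manifest


def diff_manifests(expected, actual):
    """Return sorted paths that are missing, unexpected, or changed."""
    shared = set(expected) & set(actual)
    return {
        "missing": sorted(set(expected) - set(actual)),
        "unexpected": sorted(set(actual) - set(expected)),
        "changed": sorted(p for p in shared if expected[p] != actual[p]),
    }
```

If all three lists come back empty, the two copies match. Anything else is worth an alert.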

Once the code hits CodeCommit, that could trigger a Lambda function, or perhaps a batch job (where this series started out), to deploy the code to an S3 bucket. The process that deploys the code to the S3 bucket has no direct access to GitHub and can have read-only access to AWS CodeCommit.
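If I went the Lambda route, the function might start out something like the sketch below. It assumes the event shape AWS documents for CodeCommit triggers (a `Records` list, each record with an `eventSourceARN` and a `codecommit.references` list of `ref` and `commit` values). The `deploy_commit` function is a hypothetical placeholder I left unimplemented, because the actual copy to S3 is a separate design decision.

```python
def commits_to_deploy(event, branch="main"):
    """Return (repository, commit id) pairs for pushes to one branch."""
    wanted_ref = f"refs/heads/{branch}"
    found = []
    for record in event.get("Records", []):
        # The repository name is the last segment of the source ARN.
        repository = record.get("eventSourceARN", "").split(":")[-1]
        references = record.get("codecommit", {}).get("references", [])
        for reference in references:
            if reference.get("ref") == wanted_ref:
                found.append((repository, reference["commit"]))
    return found


def deploy_commit(repository, commit_id):
    """Hypothetical placeholder: read this commit and copy it to S3."""
    raise NotImplementedError("deployment step not written yet")


def handler(event, context):
    """Lambda entry point: deploy each new commit on the watched branch."""
    targets = commits_to_deploy(event)
    for repository, commit_id in targets:
        deploy_commit(repository, commit_id)
    return {"deployed": [commit_id for _, commit_id in targets]}
```

Filtering on the branch name means a push to a development branch does not change the live site.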

So maybe it looks something like this:

Why bother with all of that? Way back at the beginning of my first business, Radical Software, Inc., a guy helping me created a public FTP website with both read and write access. Shortly thereafter the guys I was working with called to ask why we were using up all the bandwidth on their T1 line. At the time, a T1 line was huge. "Um, I'm not?" Turns out someone was: the folks who found our FTP folder and were using it to serve up WAREZ content (pirated software, typically).

It was then that I learned that in general, you don't want to give both read and write access. For example, when you host a web site in your S3 bucket, you only want to give people browsing the ability to read the data but not write to it. Of course, looking back, our FTP folder should have been on a private network and used proper authentication — but way back then — I saw a lot of things you don't want to know about.

Let's say credentials get compromised. Let's say it's by attackers who want to deploy ransomware to your S3 bucket. They get the credentials used to deploy code to your S3 bucket. Well, in that case, they can encrypt all the data in your S3 bucket, but they only have read access to AWS CodeCommit so they can't encrypt that code, nor do they have access to GitHub.

The model above provides the following benefits:

- Backups

- Separation of duties for different sets of credentials.

- No single set of credentials can delete, encrypt, or tamper with all the code in the process.

- You can check the code integrity by comparing the S3 bucket to AWS CodeCommit and the GitHub repository.

- If someone at AWS tampered with your code during the deployment process, your code integrity checks would alert you.

- If someone at GitHub tampered with the code and you are monitoring for drift between your S3 bucket and the code in GitHub, you would get an alert.

- Multiple sets of logs in your deployment process help protect against log tampering at either cloud service.

- Versions in three separate places, including S3 bucket versioning that the role that deploys code to the S3 bucket cannot access or alter.
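The separation of duties in that list can be checked mechanically. The sketch below is a simplified model, not real IAM. The credential names and the read/write labels are hypothetical, and the rule it enforces (no credential may write to more than one copy of the code) is a bit stricter than the bullet above.

```python
# Hypothetical credential names mapped to what each may do in each store.
PERMISSIONS = {
    "developer": {"github": {"read", "write"}},
    "github-to-codecommit": {"github": {"read"}, "codecommit": {"write"}},
    "integrity-checker": {
        "github": {"read"},
        "codecommit": {"read"},
        "s3": {"read"},
    },
    "deployer": {"codecommit": {"read"}, "s3": {"write"}},
}


def writable_stores(grants):
    """Return the set of stores a credential is allowed to modify."""
    return {store for store, actions in grants.items() if "write" in actions}


def violations(permissions):
    """List credentials that can write to more than one copy of the code."""
    return sorted(
        name
        for name, grants in permissions.items()
        if len(writable_stores(grants)) > 1
    )


print(violations(PERMISSIONS))  # [] when duties are properly separated
```

If someone later grants the deployer write access to CodeCommit as well, `violations` returns its name, which is the kind of drift you want to catch before an attacker does.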

OK, all that could be overkill for a simple static website, but it's better than giving GitHub Actions direct access that could be used to deface your website, deploy cryptominers, or serve up malware!

Perhaps there's some better model and I'm not yet sure if I'm going to implement all of the above exactly as written. I may only implement a subset of that and reserve the right to change my mind as I go. :-) But this is the type of threat modeling and thought process you should be using when designing secure systems — and especially deployment systems.

Why? Read about the SolarWinds breach.

Teri Radichel | © 2nd Sight Lab 2023
