This article is about my bootstrapped startup journey so far. You will learn a lot about web AI agents and the importance of reaching users as early as possible, and see how and why I pivoted to doing things that won't scale.

Idea

I had a startup idea about 12 months ago, sometime around August 2023. I was working as a contract full-stack engineer at Learning Equality, a Google.org-funded non-profit.

I heard about AutoGPT in one of Sandeep Maheshwari's AI videos. Curious, I looked into AutoGPT's GitHub repository, cloned it and ran it locally using my OpenAI API key.

At that time, AutoGPT was an AI agent that would take a natural language instruction and execute it step by step.

For example, if you told AutoGPT to "search for flights from Mumbai to SF", it would open a browser, navigate to Google.com, search for flights by filling in the form, parse the results from the webpage and finally return them to the command line.

AutoGPT worked by prompting GPT-4. It would first make a step-by-step plan and then execute each step by giving GPT-4 a list of available commands it could run to complete that step.
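The plan-then-execute loop described above can be sketched roughly as follows. This is a minimal illustration and not AutoGPT's actual code: `fake_llm`, the command names and the prompt wording are all hypothetical, and the stub stands in for a real GPT-4 call so the sketch runs offline.

```python
from typing import Callable, Dict, List

# Hypothetical commands; each takes one string argument and returns a result.
def open_browser(arg: str) -> str:
    return f"opened {arg}"

def fill_form(arg: str) -> str:
    return f"filled form with {arg}"

def read_page(arg: str) -> str:
    return f"parsed {arg}"

COMMANDS: Dict[str, Callable[[str], str]] = {
    "open_browser": open_browser,
    "fill_form": fill_form,
    "read_page": read_page,
}

def fake_llm(prompt: str) -> str:
    """Stand-in for a GPT-4 call; returns canned text (an assumption)."""
    if prompt.startswith("PLAN"):
        return "open google.com\nsearch flights Mumbai to SF\nread the results"
    # For a step prompt, reply in the form "<command> <argument>".
    if "open google.com" in prompt:
        return "open_browser google.com"
    if "search flights" in prompt:
        return "fill_form Mumbai to SF"
    return "read_page results"

def run_agent(instruction: str, llm: Callable[[str], str]) -> List[str]:
    # 1. Ask the model for a step-by-step plan.
    plan = llm(f"PLAN: {instruction}").splitlines()
    outputs = []
    for step in plan:
        # 2. Show the model the available commands and let it pick one.
        prompt = f"STEP: {step}\nCOMMANDS: {', '.join(COMMANDS)}"
        name, _, arg = llm(prompt).partition(" ")
        # 3. Run the chosen command; unknown commands are reported, not run.
        handler = COMMANDS.get(name)
        outputs.append(handler(arg) if handler else f"unknown command: {name}")
    return outputs
```

Calling `run_agent("search for flights from Mumbai to SF", fake_llm)` walks through the three planned steps and returns one result string per step.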

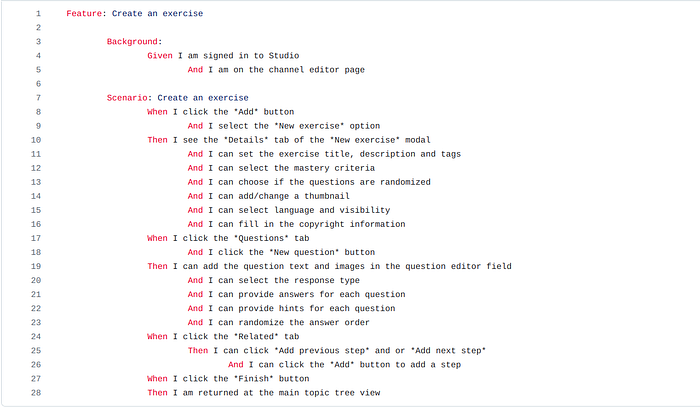

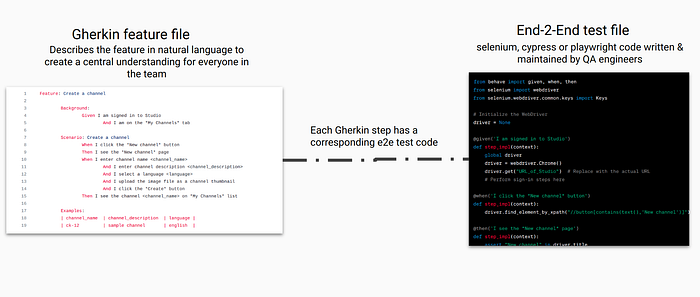

At Learning Equality, we were doing manual QA testing of our web app using Gherkin stories. A Gherkin story is a natural language description of how a feature should work.
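To make this concrete, here is a made-up Gherkin story (not one from Learning Equality) together with a minimal sketch of splitting it into the individual steps that a tester, or an agent, would perform one by one. The `parse_steps` helper is hypothetical and only handles the basic step keywords.

```python
from typing import List, Tuple

# Hypothetical story for illustration; not taken from a real test suite.
STORY = """\
Feature: Sign in
  Scenario: Learner signs in with valid credentials
    Given I am on the sign-in page
    When I enter a valid username and password
    And I click the "Sign in" button
    Then I should see my dashboard
"""

STEP_KEYWORDS = ("Given", "When", "Then", "And", "But")

def parse_steps(story: str) -> List[Tuple[str, str]]:
    """Return (keyword, text) pairs for every step line in a Gherkin story."""
    steps = []
    for line in story.splitlines():
        keyword, _, text = line.strip().partition(" ")
        # Skip "Feature:" and "Scenario:" lines; keep only real steps.
        if keyword in STEP_KEYWORDS and text:
            steps.append((keyword, text))
    return steps

for keyword, text in parse_steps(STORY):
    print(f"{keyword}: {text}")
```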

I thought to myself, an AI agent should be able to read this Gherkin story and intelligently perform the steps.

An idea for my startup was born.

An AI agent that can automate manual QA testing.

Digging into web AI agents

I knew nothing about AI. And I knew very little about QA testing.

So, the first thing I did was go deep into QA testing. I learned that Gherkin stories can already be automated using end-to-end (E2E) testing libraries like Playwright, Cypress or Selenium.

End-to-end testing simulates how a real user would use a feature in your app. These days, QA automation engineers usually write end-to-end test cases in Playwright.

For example, in the case of DoorDash, to make sure adding food to the cart works, there would be an end-to-end test that performs all the steps to add food to the cart, just like a user would.

I talked to a few of my developer friends, and I learned that end-to-end testing has two major challenges:

- E2E tests are flaky: they break when the app's code changes.

- Writing and maintaining E2E tests is no fun for a software team.

My mind ran fast.

I thought, even if the app's code changes, an AI agent should be able to figure out the right element to click on because the AI agent won't be dependent on DOM selectors to locate an element. The AI agent will look at the screen just like a human and figure out the right element to interact with.
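The brittleness I had in mind can be shown with a small sketch. It is only an analogy: matching on visible text is a crude stand-in for an agent that looks at the screen, and the HTML snippets, ids and helper names below are all made up for illustration.

```python
from html.parser import HTMLParser
from typing import List

class ButtonFinder(HTMLParser):
    """Collects [id, visible text] for every <button> in an HTML snippet."""

    def __init__(self) -> None:
        super().__init__()
        self.buttons: List[list] = []
        self._in_button = False

    def handle_starttag(self, tag, attrs):
        if tag == "button":
            self._in_button = True
            self.buttons.append([dict(attrs).get("id"), ""])

    def handle_endtag(self, tag):
        if tag == "button":
            self._in_button = False

    def handle_data(self, data):
        if self._in_button:
            self.buttons[-1][1] += data.strip()

def find_buttons(html: str) -> List[list]:
    finder = ButtonFinder()
    finder.feed(html)
    return finder.buttons

def has_id(html: str, element_id: str) -> bool:
    """Selector-style lookup: depends on an implementation detail (the id)."""
    return any(bid == element_id for bid, _ in find_buttons(html))

def has_text(html: str, text: str) -> bool:
    """User-style lookup: depends only on what is visible on the page."""
    return any(btext.lower() == text.lower() for _, btext in find_buttons(html))

# The same button before and after a refactor that renames its id.
BEFORE = '<div><button id="btn-add">Add to cart</button></div>'
AFTER = '<div><button id="cart-add-button">Add to cart</button></div>'

print(has_id(BEFORE, "btn-add"), has_id(AFTER, "btn-add"))
print(has_text(BEFORE, "Add to cart"), has_text(AFTER, "Add to cart"))
```

The id-based lookup stops matching after the refactor even though nothing changed for the user, while the lookup based on what the user sees keeps working.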

So, now it was time for me to develop the AI agent!

I thought I would need some sort of reinforcement learning to train an agent to perform browser tasks. I knew nothing about reinforcement learning, so I took an AI course taught by Arvind Nagaraj and Balaji Vishwanathan.

Through that course, together with two AI-enthusiast friends, I learned neural networks from scratch. I developed a convolutional neural network model that could recognize handwritten digits from the famous MNIST dataset.

I learned how LLMs are trained and the basics of how they work under the hood. I learned about vector databases, embeddings and multi-modality.

That course made me realize that reinforcement learning was not the right path forward. We already had intelligent models (GPT-3.5 & 4) that could be utilized.

I made the very first proof-of-concept: a GPT-3.5 powered AI agent that could execute only very simple steps, like clicking on primary buttons or typing into form fields. Upgrading to GPT-4 didn't yield satisfactory results either.

The GPT-4 powered agent was expensive and failed on the majority of steps. I felt demotivated. But deep down I knew there must be a way to make it work.

I kept researching web agents. During my research, I learned about Adept, a company building AI agents that can automate browser tasks.

I signed up for their Adept Experiments program, which was basically beta access to their AI agent via a Chrome extension.

I got invited to try out their Chrome extension. It was the most accurate AI web agent I had seen so far. I was blown away. I could see the huge potential of web AI agents!

I thought, what if I could use their internal models via an API?

I cold-emailed their founders and some senior employees. One of their employees replied saying that they weren't focused on providing third-party access to their in-house models.

Now, I thought, I would need to build things in-house. I started reading research papers on autonomous web UI agents and landed on a promising one: "You Only Look at Screens: Multimodal Chain-of-Action Agents".

The authors (Zhuosheng Zhang, Aston Zhang) developed a model called Auto-UI that used a vision encoder to look directly at the webpage, the way human eyes do.

While I was learning about neural nets and LLM internals, I had realised that the closer we get to how the human mind thinks, the closer we will get to human-like artificial intelligence.

The Auto-UI model worked just like how we humans interact with webpages — by looking at the screen and trying to figure out the right element to click on.

I thought, "yeah. I have found it! It's time to run the model".

For the next 20–25 days or so, my friend Akash and I tried to understand and run the model locally. But we were not able to.

Again, I was surrounded by the feeling of not knowing what to do next.

It was January 2024, time for CES 2024. Everyone who was interested in the AI space had their Twitter feed filled with videos and tweets about the Rabbit R1 and its Large Action Model.

I was not interested in the R1 device. But I was impressed with the idea of the Large Action Model.

I called my friend Akash and told him about the Large Action Model. I told him that either we needed to build our own Large Action Model or we needed API access to one somehow.

I messaged my teacher Arvind Nagaraj, asking if he had any advice on building something similar to Rabbit's Large Action Model.

We talked on Discord for the next week or so, and he told me about Multi-On and Open Interpreter, both of which had APIs available for use.

I tried Multi-On's Chrome extension. Back then, it failed on steps I expected it to handle.

Some days later we met on Google Meet and I told Arvind sir everything: my idea, the potential of Adept's model, the failed PoC and the failed Auto-UI attempt.

During the last 10–15 minutes of the call, he suggested that I try a vision model like GPT-4 Vision: prompt the model with a screenshot of the web page and figure out a way to use GPT-4 Vision's capabilities to act on web pages.

That thought stayed with me. A few days later, I saw the SeeAct paper on Twitter, retweeted by Arvind sir. I read the paper.

That paper gave me hope that a good-enough AI agent could be built to perform steps in a browser by following natural language instructions like "Click on the 'Search' button on the side of the input box".

Going full-time with my startup

I always wanted to work on my own thing full-time. And I had found it.

I met the founder of a software consultancy business. He had built and was maintaining several software products for his clients. I told him that I was building an AI agent to automate the QA testing of web apps.

I asked him if he would be interested in using my AI agent for testing the web apps he had built. He looked interested and told me that he might invest if the product worked well.

All these past months, I had been trying to balance my part-time job at Learning Equality with slowly working on my idea.

I assumed the worst case would be that I fail at building this startup. I was ready for that. But I could not stop myself from following my heart, because I was in love with the idea of helping software companies.

So, I decided to leave my part-time job and focus entirely on my startup.

I set a deadline for myself: 30 days to get the MVP ready by building an AI agent using GPT-4 Vision.

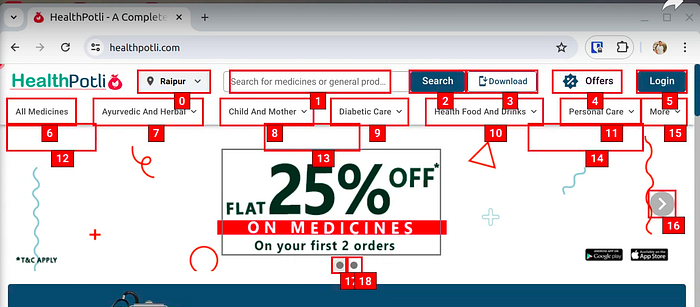

I completed my challenge and built the MVP in 30 days. It worked by labeling the interactive elements on the web page with numbers. Then, given a natural language description of the element to click on, it asked GPT-4 Vision to pick the number of the element that best matched.
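Here is a minimal sketch of that label-and-pick idea. It is not the MVP's code: the real version sent a labeled screenshot to GPT-4 Vision, whereas this sketch uses a plain-text listing in place of the image, and the function names, prompt wording and stub model are all assumptions made for illustration.

```python
import re
from typing import Callable, Dict, List, Optional

def label_elements(elements: List[str]) -> Dict[int, str]:
    """Assign a numeric label, starting at 1, to each interactive element."""
    return {i: el for i, el in enumerate(elements, start=1)}

def build_prompt(labels: Dict[int, str], description: str) -> str:
    # A text listing stands in for the numbered screenshot (an assumption).
    listing = "\n".join(f"[{n}] {el}" for n, el in labels.items())
    return (
        "The page has numbered labels on its interactive elements:\n"
        f"{listing}\n"
        f"Which number matches: {description!r}? Reply with the number only."
    )

def parse_choice(reply: str, labels: Dict[int, str]) -> Optional[int]:
    """Take the first integer in the reply; reject labels that don't exist."""
    match = re.search(r"\d+", reply)
    if match is None:
        return None
    choice = int(match.group())
    return choice if choice in labels else None

def pick_element(
    elements: List[str],
    description: str,
    vision_model: Callable[[str], str],
) -> Optional[str]:
    labels = label_elements(elements)
    reply = vision_model(build_prompt(labels, description))
    choice = parse_choice(reply, labels)
    return labels[choice] if choice is not None else None

# Stub standing in for the vision model so the sketch runs offline.
def stub_model(prompt: str) -> str:
    return "Element 2"

page = ["Search input", "Search button", "Login link"]
print(pick_element(page, "the 'Search' button next to the input box", stub_model))
```

Validating the reply against the known labels matters because the model can answer with a number that is not on the page; returning `None` in that case lets the caller retry or fail the step instead of clicking the wrong thing.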

It worked well in many situations. But I could see it failing in many more situations.

I decided to find a user and iterate on my MVP with the user's feedback.

Working with a YC-funded company's CTO

I first met Ujjwal, co-founder & CTO of GimBooks (YC W21), at a cafe. We discussed my idea and he asked me to show him the MVP. We planned a meeting.

I showed him the MVP and he played with it. He saw potential in the idea and connected me to his QA engineer.

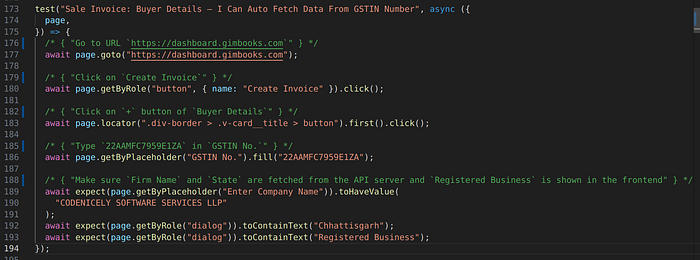

I met the QA engineer over Google Meet. I asked how they had been doing QA of their web app. I listened carefully to his answers and also looked at the end-to-end test code he had tried writing at some point in the past.

Then, I met the QA engineer in person to let him play with the MVP and to talk in more depth about the problems GimBooks had been facing with QA.

I realized that the QA engineer had no bandwidth to write end-to-end tests in the first place. He was already fully occupied with exploratory testing of their mobile apps.

So, I decided to write the end-to-end tests for GimBooks myself.

Doing the thing that won't scale

After deciding to write the end-to-end tests for them myself, I had two options: first, use my AI product to write the tests; second, code the tests by hand using Playwright.

My AI agent was not reliable enough to cover the test cases, so I decided to write them manually using Playwright. I also thought that writing the E2E tests in Playwright would let me experience the pains of a QA automation engineer.

I had read Paul Graham sir's most talked-about essay, Do Things That Don't Scale. I could see it happening: I was actually doing a thing that was not going to scale.

I spent most of my time learning how experienced QA automation engineers approach writing E2E tests for an application. Then, I wrote around 20–25 E2E tests and developed a new MVP for the GimBooks team. The new MVP was built for the CTO and their developers to look at each test case's steps and run them in real time.

Up until then, I had only had Paul Graham sir's words in my mind. But now I understood why a startup initially needs to do things that don't scale.

To provide the most value possible to its initial users as fast as it can, a startup needs to do things that won't scale.

How can a founder reach a position where they can do the thing that won't scale?

First, reach a user as quickly as you can and do everything to genuinely help them. If you take only one thing away from this story, take this: reach a user as early as possible.

Second, spend a lot of time with your user. Don't just listen to what they are saying. Ask them what they did to solve the problem in the past. Look at their past actions. Try to figure out why they still have not been able to solve it. Their past and current behavior matters more than the words they say.

In my case, the user had no bandwidth for writing E2E tests. So, I did.

Looking forward

GimBooks did not convert into a paying customer. Ujjwal (the co-founder) told me that they were more focused on their mobile apps, and that automated QA testing of their web app was not a priority at that time.

A failure and a success.

I failed at getting a paying customer but succeeded at experiencing what it means to do things that won't scale.

So, what's the path forward from here, if it's not going to scale?

Recently, I read parts of Sahil Lavingia's book, The Minimalist Entrepreneur. It suggests that freelancing for your first set of customers can be a great way to learn and to get positive cash flow, which gives some breathing room for future steps. I relate to that.

So currently, all my focus is on serving my initial customers by providing them with E2E testing as a service. Then, I will let my customers give direction to my journey. I will listen to them and observe them. They will take me to the right place, just as GimBooks led me to doing a thing that won't scale.

I would really love to hear about the things you did in your journey that didn't scale. Please share them with me on Twitter (DMs are open to all). You can also follow me there, as I will be sharing learnings and stories from my journey.