The first quarter of 2025 has been rife with outstanding announcements from the major players in quantum technology. The common theme that most companies are focusing on right now is making quantum computers more reliable by suppressing the errors that inevitably creep in when quantum information is stored in a noisy environment.

As 2024 came to a close, Google announced Willow — their first quantum chip operating below threshold, i.e. capable of better performance (due to better error correction) with increasing system size. A few weeks later, in January 2025, Xanadu unveiled Aurora, their first scalable, networked, and modular photonic quantum computer. While this technology does not address error correction explicitly, it does present a workable pathway towards scalable architectures by cleverly interconnecting chips with a network of optical fibers. In February, Microsoft followed suit with the hyped announcement of their Majorana-1 chip.

Microsoft is taking a completely orthogonal approach to quantum error correction by betting everything on the design of topological superconductors, a special type of material that is predicted to host Majorana quasiparticles. Majorana quasiparticles should be fault-tolerant by design due to their non-local information storage, but so far the results presented by Microsoft have been met with skepticism.

Bold promises of practical applications are being made left and right, sometimes on shaky technical grounds, but the momentum that the field is building up is undeniable.

In this frenzy, a new contender for the role of quantum trailblazer is Amazon. The e-commerce giant is not totally new to the quantum ecosystem. For example, in 2019 it launched Amazon Braket, a cloud-based service providing access to various quantum hardware platforms. However, its contributions towards proper universal quantum computing platforms had so far flown under the radar. Now, Amazon has made a bold entrance into the quantum "space race" by partnering with quantum scientists at Caltech led by Oskar Painter. Together, the team unveiled its newest Ocelot quantum chip in late February. And, as is customary, the press release follows the publication of a technical paper in Nature, where the performance of their latest architecture is presented. Let's dissect this new milestone in quantum computing together!

The Quest to Tame Errors

The approaches of the aforementioned companies might all seem different, but in reality their efforts are driven by the same fundamental challenge: mending the intrinsic fragility of quantum information.

Quantum computing promises paradigm shifts in all kinds of scientific sectors, from materials science, to quantum chemistry, to cryptography. What is often not advertised, though, is how crucially these breakthroughs depend on reliably controlling a very large number of qubits.

Let's take Shor's algorithm as an example. Devised by American mathematician Peter Shor in 1994, this algorithm uses quantum resources to rapidly decompose large numbers into their prime factors. Theoretically, Shor's algorithm offers an enormous computational speed-up over the best known classical algorithms, running in polynomial rather than sub-exponential time. This even poses a potential security threat, since widely used encryption schemes like RSA rely on the difficulty of factoring large integers. In fact, governments around the world are already preparing for a post-quantum era. Australia, for example, has announced plans to phase out RSA-based encryption by the end of the decade.

While governments are erring on the side of caution, there is still a huge technological gap to fill. Shor's algorithm was implemented by IBM as early as 2001 using NMR technology, but at the time the number of qubits available was only enough to factor 15 into 3 × 5, quite far from the integers of up to 4096 bits used in RSA encryption. Almost twenty years later, in 2019, IBM attempted to run the algorithm again on their latest IBM Q System One quantum computer to factor 35 into 5 × 7. Alas, the procedure failed because of too many accumulating errors. In fact, current estimates place the number of qubits needed to factor a 2048-bit RSA integer with current technology at a whopping 20 million, far exceeding what could be implemented in near-term architectures.
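To make the 15 = 3 × 5 example concrete, here is a minimal Python sketch of the classical number theory that Shor's algorithm relies on. It is an illustration only: the function names are hypothetical, and the order-finding step is done by brute force, whereas in the real algorithm that step is the part handed to the quantum computer.

```python
from math import gcd

def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1.

    Brute force stand-in for the quantum period-finding step.
    """
    if gcd(a, n) != 1:
        raise ValueError("a and n must be coprime")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_from_order(a: int, n: int):
    """Try to split n using the order of a; return None if this a is unlucky."""
    r = find_order(a, n)
    if r % 2 == 1:
        return None              # odd order: pick another a
    half = pow(a, r // 2, n)     # a square root of 1 modulo n
    if half == n - 1:
        return None              # trivial root: pick another a
    return tuple(sorted((gcd(half - 1, n), gcd(half + 1, n))))

print(factor_from_order(7, 15))  # (3, 5)
print(factor_from_order(2, 35))  # (5, 7)
```

The brute-force loop takes a number of steps that grows exponentially with the bit length of n, which is exactly the bottleneck the quantum period-finding routine is meant to remove.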

It is apparent that simply scaling up the number of qubits is pointless if they cannot be made reliable enough.

This is why companies are investing so heavily into error correction methods. To get there, each company is leveraging the strengths of its own technological platform: Google is implementing error correction by building logical qubits with redundant superconducting physical qubits; Microsoft is pursuing topological qubits that suppress errors at the hardware level; Xanadu is building networked photonic modules to scale up without relying on cryogenics. The common thread is clear — whoever can tame error correction while keeping the system scalable may well define the next era of computing.

With Ocelot, Amazon is taking yet another approach to error correction by employing different building blocks, in which some type of error correction is already embedded in the device from the ground up. In fact, the name Ocelot (after the elusive wild cat native to the Amazon jungle) is not just a catchy branding choice, but a very clever nod to the core feature at the heart of their quantum architecture: cat qubits.

Cat States

Cat qubits (or cat states) pay homage to Schrödinger's cat, a well-known thought experiment related to the quantum superposition of macroscopic objects. In Schrödinger's somewhat deranged scenario, a cat is placed in a sealed box together with a radioactive source and a vial of poison. The radioactive source is connected to a Geiger counter, which is assumed to be precise enough to detect single-atom decays. If the counter detects radioactivity, the poison is released from the vial, killing the cat. But as long as no atomic decay is detected, the vial will remain intact and the cat alive. Because the radioactive decay is a random, quantum mechanical phenomenon that directly impacts the cat's survival, the animal bizarrely inherits the quantum superposition property of being both alive and dead at the same time until the box is opened to reveal the answer.

In the creation of cat qubits, no animals are involved, and only the essence of quantum superposition is retained. Similar to the eponymous cat, these qubits exist in a superposition of two states, labelled ∣α⟩ and ∣−α⟩:

$$
|\mathcal{C}_\alpha^{\pm}\rangle = \mathcal{N}_{\pm}\left(|\alpha\rangle \pm |{-\alpha}\rangle\right),
$$

where $\mathcal{N}_{\pm}$ is a normalization constant and the sign distinguishes the "even" (+) cat state from the "odd" (−) one.

The two components ∣α⟩ and ∣−α⟩ are a special type of quantum state called coherent states. Coherent states are quantum states that closely resemble classical oscillations, like a swinging pendulum or a vibrating electromagnetic field. They are well-behaved, stable, and follow predictable paths in phase space, making them ideal building blocks for cat qubits.

In the Ocelot architecture, coherent states are generated by populating microscopic waveguide resonators with photons in a specific quantum configuration using precisely controlled electromagnetic pulses. The label α, in fact, refers to the complex amplitude describing the state of the resonator. The two coherent states have a phase-space separation proportional to |α|, which corresponds to the square root of the mean photon number that the resonator is hosting. Therefore, for large values of |α|, the two states have negligible overlap and can be considered macroscopically distinguishable. This is analogous to the two macroscopic states, "dead" and "alive", that the cat in Schrödinger's thought experiment can experience.
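How quickly the overlap becomes negligible can be checked numerically. The sketch below is an illustration, not anything taken from the Ocelot paper: it builds the two coherent states in a truncated photon-number (Fock) basis, with hypothetical function names and a truncation `n_max` chosen by assumption, and compares the overlap with the textbook result exp(−2|α|²).

```python
import math

def coherent_amplitudes(alpha: complex, n_max: int = 60):
    """Fock-basis amplitudes c_n = exp(-|alpha|^2 / 2) * alpha**n / sqrt(n!)."""
    prefactor = math.exp(-abs(alpha) ** 2 / 2)
    return [prefactor * alpha ** n / math.sqrt(math.factorial(n))
            for n in range(n_max)]

def overlap(alpha: complex, n_max: int = 60) -> float:
    """Magnitude of the overlap between |alpha> and |-alpha>."""
    plus = coherent_amplitudes(alpha, n_max)
    minus = coherent_amplitudes(-alpha, n_max)
    return abs(sum(p.conjugate() * m for p, m in zip(plus, minus)))

def mean_photon_number(alpha: complex, n_max: int = 60) -> float:
    """Average photon number, expected to equal |alpha|^2."""
    amplitudes = coherent_amplitudes(alpha, n_max)
    return sum(n * abs(c) ** 2 for n, c in enumerate(amplitudes))

for alpha in (0.5, 1.0, 2.0):
    print(alpha, mean_photon_number(alpha), overlap(alpha),
          math.exp(-2 * abs(alpha) ** 2))
```

The last two printed columns should agree, showing that a handful of photons is already enough to make the two states almost perfectly distinguishable.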

A Cat-egorically New Approach to Error Correcting Codes

A particular advantage offered by cat states is that their phase-space separation also delocalizes the quantum information they store. This provides natural protection against a class of errors known as bit-flip errors — random processes in which the state ∣α⟩ transitions to ∣−α⟩ or vice versa. These errors are exponentially suppressed as the separation between the coherent states increases, a feature ultimately enabled by the large number of quantum states the waveguide resonator can host. In other words, cat states provide passive protection by design against certain types of noise, and this protection improves dramatically as the number of photons in the resonator increases.

In the Ocelot chip, bit-flip errors occur on average once every 1 ms during standard operation, an interval hundreds of times longer than the 3 µs error correction cycle time.

However, the cat-state architecture doesn't provide everything for free. While it offers stronger protection against bit-flip errors with higher photon numbers, the system remains vulnerable to another common type of quantum error: phase-flip (or dephasing) errors. These introduce random shifts in the relative phase between ∣α⟩ and ∣−α⟩, progressively degrading the information stored in the superposition.

In the Ocelot, phase-flip errors are caused by single photons escaping from the resonator and by heating. Worse yet, the rate of these errors scales linearly with |α|². So, while operating at higher photon numbers suppresses bit-flip errors, it exacerbates phase-flip errors. Choosing the optimal number of photons is therefore a compromise, and some level of quantum error correction remains unavoidable. Luckily, phase-flip errors can also be mitigated before error correction is applied.
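The compromise can be summarized with the idealized scalings usually quoted for stabilized cat qubits. This is a sketch under assumptions: the symbol $\kappa_1$ (the single-photon loss rate) is introduced here for illustration, heating is ignored, and the exponent in the bit-flip scaling is the ideal theoretical value, which real devices may not reach.

```latex
\Gamma_{\text{bit-flip}} \;\propto\; e^{-2|\alpha|^{2}},
\qquad
\Gamma_{\text{phase-flip}} \;\approx\; \kappa_{1}\,|\alpha|^{2}
```

Under these assumptions, each added photon multiplies the bit-flip rate by a constant factor smaller than one, while only adding a fixed amount to the phase-flip rate, so the ratio between the two (the noise bias) grows roughly exponentially with the photon number.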

Stabilization by Loss

It turns out that while single-photon losses are bad for the cat state, two-photon losses are not. In fact, provided that the leaked energy is promptly pumped back in, they can even end up stabilizing the state over time! How is this possible?

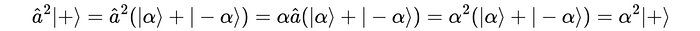

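The starting point is the realization that the coherent states ∣±α⟩ that the cat state is made out of are not fundamentally altered by single-photon loss, but they do acquire an extra factor. In quantum jargon, we say they are eigenstates of the annihilation operator â, and in mathematical terms we write:

$$
\hat{a}\,|{\pm\alpha}\rangle = \pm\alpha\,|{\pm\alpha}\rangle .
$$

Now, when we apply the same operation to the cat states, i.e. the superpositions of the two coherent states, we obtain (omitting normalization constants):

$$
\hat{a}\left(|\alpha\rangle \pm |{-\alpha}\rangle\right) = \alpha\left(|\alpha\rangle \mp |{-\alpha}\rangle\right).
$$

The cat states do not remain invariant: the even cat state

$$
|\alpha\rangle + |{-\alpha}\rangle
$$

is mapped to the odd one (and vice versa):

$$
|\alpha\rangle - |{-\alpha}\rangle .
$$

Thus, single-photon loss leaves the coherent states ∣±α⟩ themselves intact, but it flips the relative sign between them. It introduces phase-flip errors.

However, if we apply the same operation twice in a row, which describes a two-photon loss process, we recover a state with the same parity! For example:

$$
\hat{a}^{2}\left(|\alpha\rangle + |{-\alpha}\rangle\right) = \alpha^{2}\left(|\alpha\rangle + |{-\alpha}\rangle\right).
$$

This means that two-photon loss, in contrast to single-photon decay, is symmetry-preserving with respect to the logical encoding in the cat code.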
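The parity argument can also be verified numerically. The following is a minimal sketch in a truncated Fock basis, where the truncation `N_MAX`, the amplitude 1.5, and all function names are assumptions made for illustration: it applies the annihilation operator once and twice to an unnormalized even cat state and checks which photon-number parities are populated.

```python
import math

N_MAX = 40  # Fock-space truncation, assumed large enough for |alpha| = 1.5

def coherent(alpha, n_max=N_MAX):
    """Fock-basis amplitudes of the coherent state |alpha>."""
    pre = math.exp(-abs(alpha) ** 2 / 2)
    return [pre * alpha ** n / math.sqrt(math.factorial(n)) for n in range(n_max)]

def cat(alpha, sign, n_max=N_MAX):
    """Unnormalized cat state |alpha> + sign * |-alpha>."""
    return [p + sign * m
            for p, m in zip(coherent(alpha, n_max), coherent(-alpha, n_max))]

def annihilate(state):
    """Apply the annihilation operator: a|n> = sqrt(n)|n-1>."""
    lowered = [math.sqrt(n + 1) * state[n + 1] for n in range(len(state) - 1)]
    return lowered + [0.0]  # pad so the vector keeps its length

def parity_weights(state):
    """Return (weight on even photon numbers, weight on odd photon numbers)."""
    even = sum(abs(c) ** 2 for c in state[0::2])
    odd = sum(abs(c) ** 2 for c in state[1::2])
    return even, odd

alpha = 1.5
even_cat = cat(alpha, +1)
after_one_loss = annihilate(even_cat)
after_two_losses = annihilate(after_one_loss)

print("even cat:       ", parity_weights(even_cat))
print("one photon lost:", parity_weights(after_one_loss))
print("two photons lost:", parity_weights(after_two_losses))
```

The even cat state only contains even photon numbers, a single loss moves all the weight to odd photon numbers, and a second loss brings it back, which is the parity flip and restoration described above.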

If we take care of compensating for the overall amplitude loss by actively pumping energy back into the system, then two-photon loss can be exploited to passively stabilize the qubits against phase-flip errors.

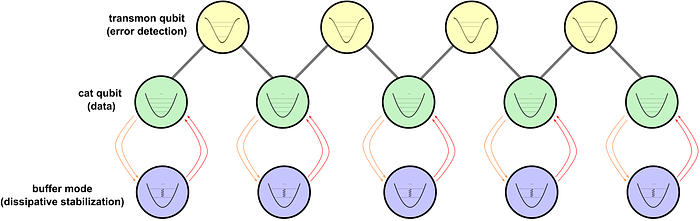

This is precisely what the Ocelot chip does. It engineers a special kind of dissipation that doesn't simply remove photon pairs at random, but does so in a tailored way anchored to the specific amplitude α of the coherent states ∣±α⟩. This is achieved by coupling each resonator hosting the cat qubits to another circuit element called a buffer mode. The buffer is driven in just the right way so that every time two photons are lost from the resonator, the system also gets a small energy boost, keeping the size of the cat qubits from shrinking. As a result, any drift away from these states is automatically suppressed by the dissipation itself.

The dissipative stabilization preserves the long 1-ms bit-flip times while pushing the phase-flip times to around 30 µs. This results in a strong imbalance, or noise bias, between bit-flip and phase-flip errors, which is exactly what additional error-correcting codes can take advantage of.

The Repetition Code

Despite the advantages of dissipative stabilization, a lifetime of 30 µs is still too short for reliable quantum information storage during computations. To address this, error correction is needed to reset the system when significant errors occur. The Ocelot uses a technique called bosonic error correction, named after the bosonic nature of the cat states (bosons are particles that can share the same quantum state, like photons).

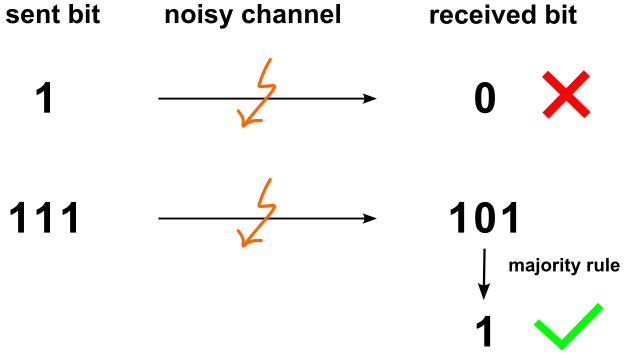

First of all, logical information is distributed across multiple physical cat states using a repetition code. The term "repetition" refers to encoding the same logical qubit multiple times across different resonators to enable error detection and correction. This principle of redundancy is already well known in classical telecommunications. There, instead of sending a single bit — e.g., encoded as "1" — the information is repeated multiple times, such as in the sequence "111".

The reason is simple: if only one bit is sent, and it gets corrupted during transmission, the information is lost. By repeating the bit, however, we can still recover the original message even if a single bit flip occurs. This is done using a simple majority vote: if more "1"s than "0"s are received (e.g., "111", "101", "110"), the signal is decoded as "1"; otherwise (e.g., "001", "100"), it's decoded as "0". The greater the repetition, the lower the chance of multiple simultaneous bit flips, and the better the protection against errors.
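The majority vote described above fits in a few lines of Python. This is a toy model of a classical noisy channel, with hypothetical function names and an assumed flip probability of 10% per bit, meant only to show how repetition lowers the failure rate.

```python
import random

def encode(bit: int, n: int = 3) -> list[int]:
    """Repeat the bit n times, e.g. 1 -> [1, 1, 1]."""
    return [bit] * n

def transmit(codeword, p_flip, rng):
    """Flip each bit independently with probability p_flip."""
    return [b ^ int(rng.random() < p_flip) for b in codeword]

def decode(received) -> int:
    """Majority vote: 1 if more than half of the bits are 1, else 0."""
    return int(sum(received) > len(received) / 2)

def failure_rate(n, p_flip, trials=20_000, seed=0):
    """Fraction of transmissions of the bit 1 that are decoded incorrectly."""
    rng = random.Random(seed)
    failures = sum(decode(transmit(encode(1, n), p_flip, rng)) != 1
                   for _ in range(trials))
    return failures / trials

for n in (1, 3, 5):
    print(n, failure_rate(n, p_flip=0.1))
```

With independent flips, decoding only fails when more than half of the copies are corrupted, so the printed failure rate should drop as the number of repetitions grows.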

The quantum repetition code follows the same logic: it protects information by spreading a logical qubit redundantly across multiple physical components, so that errors affecting individual parts can be identified and corrected based on their collective behavior.

The cat qubit repetition code helps preserve quantum information, but by itself it doesn't overcome a fundamental hurdle imposed by the laws of quantum mechanics: we cannot measure the state of the cat qubits directly, because doing so would collapse the quantum state, and with it the superposition that gives quantum computing its superpowers. Instead, we need to perform indirect measurements.

In practice, this is achieved by placing ancilla qubits between the data-storing cat qubits (technically, these are coupled via noise-biased controlled-X gates). The ancillas interact with the cat qubits to extract information about errors accumulating between them without disturbing their quantum state. Moreover, these gates are specifically engineered to minimize the risk of introducing bit-flip errors, allowing the error-correction code to focus primarily on correcting phase-flip errors.

While ancilla qubits could, in principle, also be bosonic cat qubits, they are more difficult to stabilize and control, requiring complex drive schemes. For this reason, transmon qubits are used as ancillas instead. Transmon qubits are a type of superconducting qubit known for their robustness and controllability, and they are widely used in other quantum processors, such as Google's.

Each ancilla acts as a parity-check detector: it couples to two neighboring cat qubits and detects differences between them, such as whether one has experienced photon loss relative to the other. This detection procedure, called syndrome measurement, proceeds in cycles. Each cycle comprises the initialization of the ancilla, two CX gates between the ancilla and its adjacent data qubits, and finally, the measurement and reset of the ancilla.
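The logic of these parity checks can be sketched classically. In the simplified Python model below, a list of 0s and 1s marks which cat qubits suffered a phase flip, each ancilla reports the parity of its two neighbors, and a decoder picks the most likely error. The function names are hypothetical, and the model assumes perfect ancilla measurements and a single round, unlike the real experiment, which repeats noisy syndrome measurements over many cycles.

```python
def syndrome(errors):
    """Parity of each neighboring pair of data qubits (one bit per ancilla)."""
    return [errors[i] ^ errors[i + 1] for i in range(len(errors) - 1)]

def decode(synd):
    """Lowest-weight error pattern consistent with the syndrome (odd distance)."""
    assert len(synd) % 2 == 0, "expects an odd number of data qubits"
    candidate = [0]
    for s in synd:
        candidate.append(candidate[-1] ^ s)
    complement = [1 - e for e in candidate]  # the only other consistent pattern
    return min(candidate, complement, key=sum)

def logical_failure(errors):
    """True if applying the decoder's correction leaves a logical flip behind."""
    correction = decode(syndrome(errors))
    residual = [e ^ c for e, c in zip(errors, correction)]
    return all(residual)  # residual is either all zeros or all ones

print(syndrome([0, 1, 0, 0, 0]))         # [1, 1, 0, 0]
print(logical_failure([0, 1, 0, 0, 0]))  # False: a single flip is corrected
print(logical_failure([1, 1, 1, 0, 0]))  # True: three flips fool a distance-5 code
```

Note that the syndrome never reveals the state of an individual data qubit, only whether neighbors agree, which is what allows errors to be located without collapsing the encoded information.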

Importantly, during the measurement process, the system continues to stabilize the cat qubits using two-photon dissipation, preserving their delicate noise bias.

A key achievement of the Ocelot architecture is that it maintains long logical bit-flip times (up to 1 ms) even during repeated syndrome measurements. This is crucial because even a single bit-flip in any of the constituent cat qubits would otherwise corrupt the entire logical state. Thanks to the bit-flip-suppressing design of the cat qubits and careful calibration of the CX gates, the system remains robust, enabling effective phase-flip correction with minimal bit-flip corruption.

Performance Below Threshold

So, how does the quantum chip fare after all this error correction is put in place? Quite well! The Amazon/Caltech team has demonstrated quantum error correction below threshold, a milestone in fault-tolerant quantum computing that Google's Willow chip had already reached a few months earlier.

What does this "below threshold" mean? In essence, it means that as the architecture grows larger, the error correcting code performs better.

In the specific case of the Ocelot, the scientists measured the logical qubit fidelity of a distance-3 repetition code (i.e. with 3 cat qubits and 2 ancillas) and compared it with the performance of a distance-5 repetition code (with 5 cat qubits and 4 ancillas). Their results demonstrate that the logical phase-flip probability per cycle is systematically lower in the distance-5 setup across the whole range of photon numbers tested, meaning that the larger system outperforms the smaller one.
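A toy calculation shows why a threshold exists at all. The sketch below assumes an idealized repetition code in which each qubit flips independently with probability `p` and the parity checks are perfect, so the numbers are not Ocelot's. In this simplified model the threshold sits at 50%, whereas realistic thresholds are far lower because gates and measurements are noisy too.

```python
from math import comb

def logical_error(p: float, d: int) -> float:
    """Probability that more than half of d qubits flip (majority vote fails)."""
    return sum(comb(d, k) * p ** k * (1 - p) ** (d - k)
               for k in range(d // 2 + 1, d + 1))

for p in (0.1, 0.6):
    print(p, logical_error(p, 3), logical_error(p, 5))
```

For p = 0.1 the distance-5 code fails less often than the distance-3 code, while for p = 0.6 adding qubits makes things worse: growing the code only helps when the physical error rate is below the threshold.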

Furthermore, when all types of errors are taken into account, the minimum logical error per cycle for the distance-5 code is 1.65%, slightly lower than the smaller distance-3 codes (whose best performance is around 1.67%), even though the former involves more qubits and gates.

This confirms that the physical error rates of the Ocelot are low enough for scaling to larger systems to be beneficial. Moreover, the better protection from phase-flip errors of the distance-5 code enables it to operate at higher photon number (i.e. higher value of ∣α∣²), exploiting the larger noise bias of the cat qubits in that regime.

The Future Looks Bright — Literally

With their Ocelot chip and innovative bosonic error correction scheme, Amazon firmly establishes itself as a serious contender for the title of quantum computing leader. It places the company squarely in the same conversation as Google and IBM. It is now not merely a cloud provider, but a front-line innovator shaping the future of quantum hardware and error correction.

So, what's in store for the future?

Amazon's immediate roadmap will likely focus on advancing bosonic error correction and scaling their system to include more cat qubits.

The authors of the Nature paper outline a clear near-term goal: reducing the overall logical error per cycle to 0.5%, achievable by further optimizing the current circuitry and shortening the error correction cycle time. However, a fundamental challenge remains: bit-flip errors cannot be corrected with the repetition code. While these errors are naturally suppressed by the structure of cat qubits, ensuring that they remain negligible as the system scales will be critical to maintaining low logical error rates.

Currently, the dominant errors stem from intrinsic decoherence in the data qubits. But as the system grows larger, correcting bit-flips induced by the transmon ancillas will become increasingly important. One potential path might involve concatenating cat qubits into surface codes, an approach reminiscent of strategies pioneered by Google. In the longer term, though, Amazon may transition toward fully cat-based architectures, provided they can reduce control errors in cat–cat CX gates. This would eliminate transmons entirely, enabling all logic and syndrome extraction to be carried out within a network of photonic resonators and pioneering a true "quantum computer of light."

The future of quantum technologies — and quantum computing in particular — looks bright. As we've seen with the intercoupling of photonic resonators and transmon qubits, different hardware approaches remain deeply interconnected. There is a lot that the leading companies can learn from one another, but there are also many hardware advantages that they might be reluctant to share. One can only hope that a spirit of knowledge exchange and collegiality will prevail in the quantum ecosystem in the years ahead, accelerating progress for all.