Our goal was simple: to understand what these AI agents can do and how they might change the way we work with information and solve problems. This blog post outlines our research, findings, and thoughts on the future of AI agents and their potential applications.

What are AI Agents?

AI agents are smart computer programs that can work on their own. Unlike regular software that just follows set rules, AI agents can understand what you want them to do, come up with their own plans, and change how they work if needed. They use large language models (such as GPT or Claude) to interpret information and decide what to do next. This makes AI agents well suited to handling different tasks without needing step-by-step instructions every time.

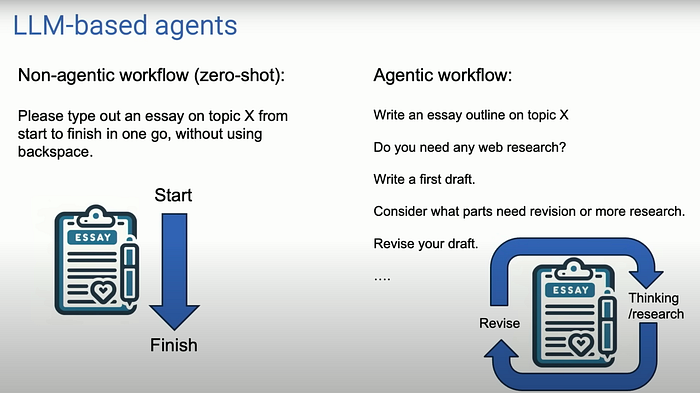

How AI agents differ from traditional LLMs:

- Workflow: Traditional LLMs typically follow a "non-agentic" workflow where you input a prompt and get a single response. AI agents use an "agentic" workflow that is more iterative and complex.

- Task Approach: LLMs generate responses in one go, while agents break down tasks into steps, plan, and can revise their work.

- Tool Use: Agents can interact with various tools and external resources, while a traditional LLM call is limited to its training data and whatever is supplied in the prompt.

- Reflection and Self-improvement: Agents can review and improve their own work, whereas LLMs typically don't have this capability.

- Collaboration: AI agents can work together in multi-agent systems, taking on different roles to solve complex problems.

- Adaptability: Agents can adjust their approach based on intermediate results or feedback, while LLMs generally provide static responses.

- Time Frame: LLMs provide instant responses, but agents may take longer to complete tasks as they go through multiple steps and iterations.

Initial Research

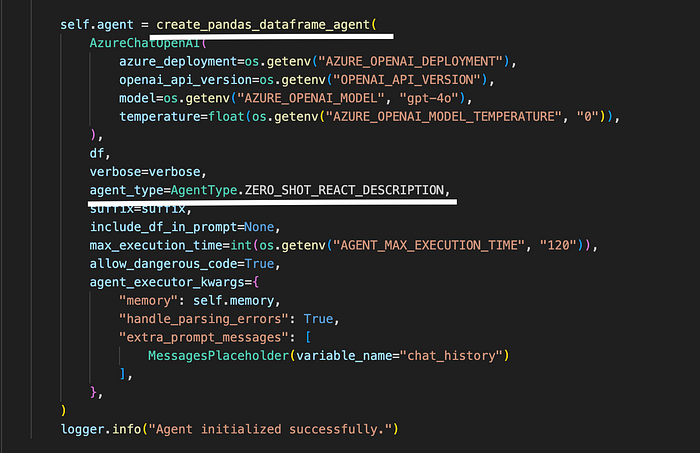

Our journey began with LangChain agents, which proved to be an accessible starting point. We first experimented with their Zero-Shot ReAct (Reasoning and Acting) agent, designed to break complex tasks into manageable steps using a cycle of thought, action, and observation. This approach showed promise in combining reasoning traces with task-specific actions.
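To make the thought, action, observation cycle concrete, here is a minimal sketch in plain Python. It is not LangChain's implementation: `scripted_llm` stands in for a real model call, and the tool names and the `Action: tool[input]` text format are assumptions chosen for illustration.

```python
import re
from typing import Callable, Dict

# Hypothetical tools; a real agent would wrap APIs, files, or databases.
def word_count(text: str) -> str:
    return str(len(text.split()))

TOOLS: Dict[str, Callable[[str], str]] = {"word_count": word_count}

def scripted_llm(transcript: str) -> str:
    """Stand-in for a real LLM: returns the next step given the transcript so far."""
    if "Observation:" not in transcript:
        return "Thought: I need to count the words.\nAction: word_count[agents plan act observe]"
    # Read the most recent observation back out of the transcript.
    last_observation = transcript.rsplit("Observation:", 1)[1].strip()
    return f"Thought: The tool gave me the count.\nFinal Answer: {last_observation}"

ACTION_RE = re.compile(r"Action:\s*(\w+)\[(.*)\]")

def react(question: str, llm: Callable[[str], str], max_steps: int = 5) -> str:
    """Alternate between model steps and tool calls until a final answer appears."""
    transcript = f"Question: {question}"
    for _ in range(max_steps):
        step = llm(transcript)
        transcript += "\n" + step
        if "Final Answer:" in step:
            return step.split("Final Answer:", 1)[1].strip()
        match = ACTION_RE.search(step)
        if match is None:
            transcript += "\nObservation: no valid action found"
            continue
        name, arg = match.groups()
        tool = TOOLS.get(name)
        observation = tool(arg) if tool else f"unknown tool {name}"
        transcript += f"\nObservation: {observation}"
    return "No answer within step limit"

answer = react("How many words are in 'agents plan act observe'?", scripted_llm)
print(answer)
```

The step limit matters in practice: without it, a model that never produces a final answer would loop indefinitely.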

We created an Excel agent by calling LangChain's Create Pandas DataFrame agent.

Note: The agent type could be ReAct, OpenAI Functions, OpenAI Tools, or Tool Calling; each agent type comes with its own pros and cons.

LangChain is well-suited for building complex AI applications that require multiple tools and decision-making capabilities.

Key features of using LangChain:

- Can use multiple LLMs and tools in a single application

- Provides a wide range of pre-built tools and integrations

- Allows for the creation of complex, multi-step workflows

- Available for more than one language (Python and JavaScript/TypeScript)

CrewAI

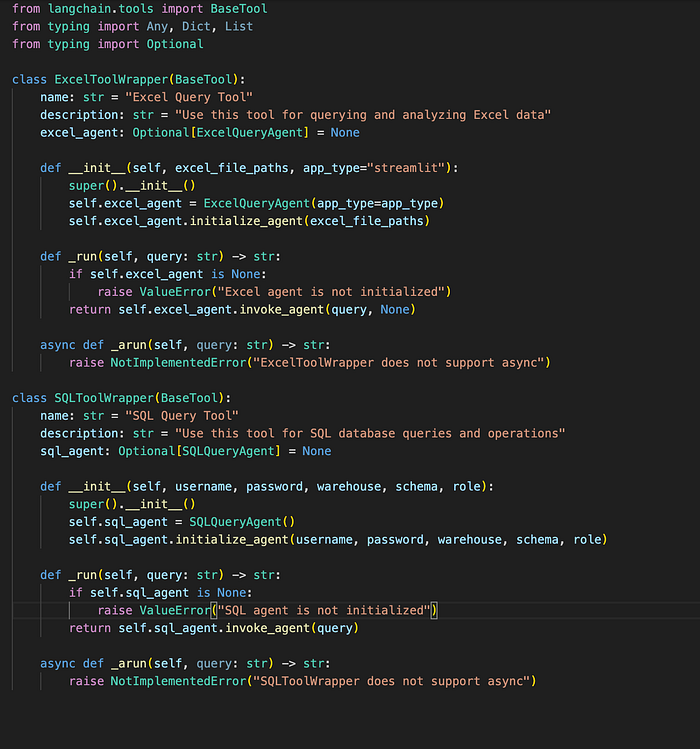

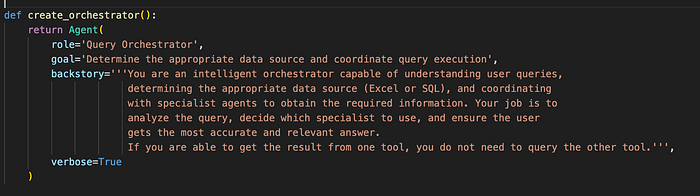

Additionally, we evaluated CrewAI, a framework for creating AI agents that work together as a team to accomplish tasks. It's designed to simulate collaborative environments where multiple AI agents with different roles work together.

Since CrewAI understands LangChain tools, we repurposed our LangChain tools within CrewAI by creating wrappers that expose them as CrewAI tools.

We then created an orchestrator that could navigate between these two tools based on the user's query.

Each tool was assigned its own specific task, so the orchestrator was able to understand which tool had the relevant information.
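The routing idea can be sketched without any framework. The example below is an illustration only and does not use the CrewAI API: the `Tool` class, the two tool names, and their descriptions are hypothetical, and a simple word-overlap score stands in for the LLM's decision about which tool matches the query.

```python
import re
from dataclasses import dataclass
from typing import Callable, List, Set

@dataclass
class Tool:
    name: str
    description: str           # what the orchestrator reads to decide relevance
    run: Callable[[str], str]  # the wrapped function that does the work

def tokens(text: str) -> Set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))

def pick_tool(query: str, tools: List[Tool]) -> Tool:
    """Stand-in for the LLM routing step: choose the description sharing the most words."""
    query_words = tokens(query)
    return max(tools, key=lambda tool: len(query_words & tokens(tool.description)))

def orchestrate(query: str, tools: List[Tool]) -> str:
    tool = pick_tool(query, tools)
    return f"[{tool.name}] {tool.run(query)}"

# Hypothetical tools with stubbed behavior.
TOOLS = [
    Tool("excel_tool",
         "answers questions about sales figures stored in the excel spreadsheet",
         lambda q: "looked up the spreadsheet"),
    Tool("docs_tool",
         "answers questions about the company policy documents",
         lambda q: "searched the documents"),
]

print(orchestrate("What were the sales figures last quarter?", TOOLS))
print(orchestrate("Summarize the travel policy", TOOLS))
```

Whatever does the routing, the quality of each tool's description is what makes the right choice possible, which is why each tool was given a clearly scoped task.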

Key features of CrewAI:

- Focuses on task-oriented, collaborative AI workflows

- Simulates human-like team structures with roles and hierarchies

- Simplifies the process of creating multi-agent systems

CrewAI has a unique approach to simulating a team among AI agents which makes it particularly useful for tasks that benefit from collaborative problem-solving or role-based task allocation.

Tool Calling Agent

Throughout our experiments, we discovered that while these open-source frameworks are excellent for quickly building applications, they may not always align with the rapidly evolving landscape of LLMs.

We wanted an approach that could give us a little more flexibility and control over the LLM calls.

The tool-calling functionality emerged as the most flexible and powerful option, offering greater control over LLM calls with less complexity. This approach allows for the integration of multiple tools, such as database connectors, API integrations, data visualization tools (Matplotlib or Seaborn for creating charts and graphs), and file manipulation tools (PDF, CSV, Excel).

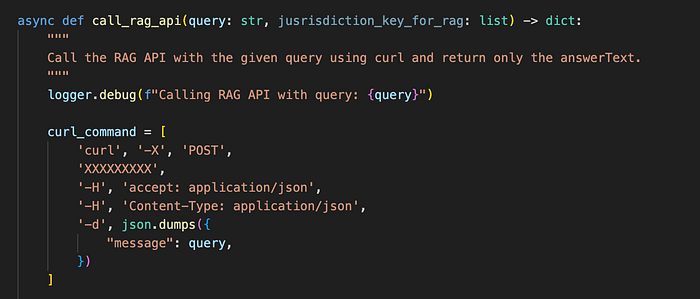

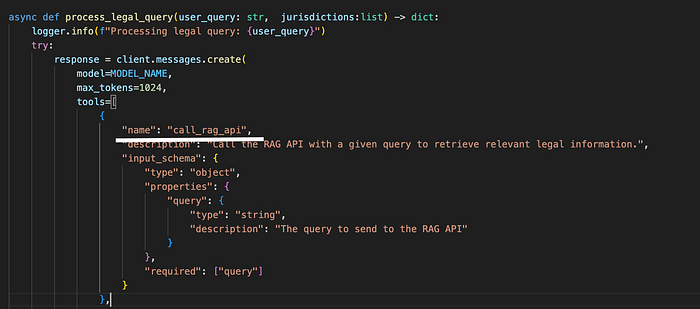

Here is an example of a tool that calls an API to get the answer.
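A minimal sketch of such a tool, assuming the name, description, and input_schema layout used by Anthropic's tool-calling API. The description text, the `query` field, and the stubbed response are assumptions for illustration; a real `call_rag_api` would send the query to the RAG service over HTTP.

```python
import json
from typing import Any, Dict

# Tool definition handed to the LLM: name, description, and a JSON Schema for inputs.
call_rag_api_tool: Dict[str, Any] = {
    "name": "call_rag_api",
    "description": (
        "Answer a question using documents retrieved from the knowledge base. "
        "Use this whenever the user asks about internal documents."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "query": {"type": "string", "description": "The user's question."}
        },
        "required": ["query"],
    },
}

def call_rag_api(query: str) -> str:
    """Stub: a real implementation would POST the query to the RAG endpoint."""
    return json.dumps({"answer": f"(stubbed answer for: {query})"})

TOOL_DEFS = {call_rag_api_tool["name"]: call_rag_api_tool}
HANDLERS = {"call_rag_api": call_rag_api}

def dispatch(tool_use: Dict[str, Any]) -> str:
    """Run the tool the model requested, after checking the required inputs."""
    name, args = tool_use["name"], tool_use["input"]
    if name not in HANDLERS:
        raise ValueError(f"unknown tool: {name}")
    required = TOOL_DEFS[name]["input_schema"]["required"]
    missing = [key for key in required if key not in args]
    if missing:
        raise ValueError(f"missing inputs for {name}: {missing}")
    return HANDLERS[name](**args)

# Shape of a tool request as it might come back from the model.
result = dispatch({"name": "call_rag_api", "input": {"query": "What is our refund policy?"}})
print(result)
```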

The tool, 'call_rag_api', is passed to the LLM with a defined description and input schema. A clearly defined tool structure is the key to ensuring the LLM understands the tool and can call it when needed.

Key features:

- Can interact with a wide range of external tools and APIs

- Enhances LLM capabilities with real-world data and actions

- Implementation can vary widely depending on the specific LLM and tools

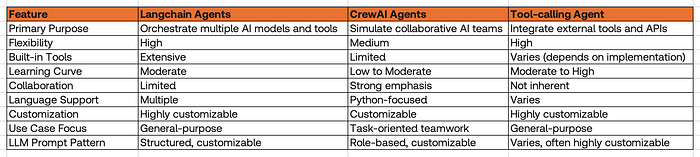

To provide a clearer comparison, we've summarized the key features of the different agents we explored:

Implementing AI Data Agents: A Practical Example

With nearly 15 years in the data industry, I've worked on building data pipelines, designing data architectures, and developing solutions for data lakes. This background has shaped my perspective on how AI agents could change data management and analysis.

My journey with AI agents began with a Data Analyst Agent: an agent that could seamlessly connect to our data lake in Snowflake. This initial project has since evolved into a more comprehensive solution — a data lake agent that's changing how we interact with our data.

Our data lake agent exemplifies how AI can enhance data accessibility and analysis. Here's what it can do:

- Connect directly to our Snowflake data lake

- Interpret natural language questions from users

- Generate appropriate SQL queries based on these questions

- Execute the queries on Snowflake

- Return the results in a user-friendly format
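The steps above can be sketched end to end. This is an illustration under stated assumptions, not our production agent: an in-memory SQLite database stands in for Snowflake, `fake_llm_to_sql` stands in for the LLM that writes the query, and the `orders` table is made up.

```python
import sqlite3
from typing import Callable, List, Sequence

def fake_llm_to_sql(question: str, schema: str) -> str:
    """Stand-in for the LLM; a real agent would prompt a model with the schema and question."""
    return ("SELECT region, SUM(amount) AS total FROM orders "
            "GROUP BY region ORDER BY total DESC")

def format_rows(columns: List[str], rows: Sequence[Sequence]) -> str:
    """Render the result set as simple pipe-separated text."""
    lines = [" | ".join(columns)]
    lines += [" | ".join(str(value) for value in row) for row in rows]
    return "\n".join(lines)

def answer(conn: sqlite3.Connection, question: str,
           to_sql: Callable[[str, str], str]) -> str:
    # 1. Collect the schema so the model knows which tables and columns exist.
    schema = "\n".join(
        row[0] for row in conn.execute("SELECT sql FROM sqlite_master WHERE type='table'")
    )
    # 2. Turn the natural language question into SQL.
    sql = to_sql(question, schema)
    # 3. Execute the query and 4. format the result for the user.
    cursor = conn.execute(sql)
    columns = [description[0] for description in cursor.description]
    return format_rows(columns, cursor.fetchall())

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region TEXT, amount REAL)")
conn.executemany("INSERT INTO orders VALUES (?, ?)",
                 [("EMEA", 120.0), ("APAC", 80.0), ("EMEA", 30.0)])

report = answer(conn, "Which region has the highest sales?", fake_llm_to_sql)
print(report)
```

Passing the schema to the model is the step that lets it write queries against tables it has never seen.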

This agent effectively bridges the gap between complex data structures and non-technical users, democratizing data access within our organization.

Looking ahead, we're excited about leveraging Snowpark to take our data lake agent to the next level. Snowpark, Snowflake's developer framework, allows us to write and execute Python code directly within the Snowflake ecosystem. This means we can:

- Perform complex data transformations and analytics without moving data out of Snowflake

- Utilize Snowpark's DataFrame API for more intuitive data manipulation

- Create and use User-Defined Functions (UDFs) in Python

- Integrate machine learning workflows directly into our data pipeline

- Optimize performance by leveraging Snowflake's powerful compute resources

By combining our AI agent with Snowpark's capabilities, we're opening up new possibilities for advanced analytics and machine learning operations right where our data resides. This integration will allow our agent to not only query data but also perform complex computations and apply machine learning models in a highly efficient, secure, and scalable manner.

The agents have the potential to not only streamline our current processes but also to open up entirely new avenues for data exploration and insight generation, all while maintaining the security and performance benefits of cloud-native data platforms.

From Data Lakes to Intelligent Ecosystems: The Promise of AI Agents

My take on the future of AI agents is optimistic. I believe that these agents are going to grow significantly in capability and utility. The power of creating tools and passing them to agents has shown remarkable improvement in reducing hallucinations and increasing accuracy. For instance, if you ask an LLM to perform a complex calculation like 79793+34235, it might give the correct answer, but there's also a chance of hallucination. However, if we create a calculator tool and instruct the LLM to always use this tool for mathematical questions, we can ensure accurate results and verify whether the tool was used.
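As a rough sketch of the calculator idea, the tool below evaluates basic arithmetic and records every call, so we can check afterwards that the tool was actually used. The function name and the call log are assumptions for illustration; wiring the tool into an LLM is left out.

```python
import ast
import operator
from typing import List, Union

Number = Union[int, float]

_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}

tool_calls: List[str] = []  # record of every expression sent to the tool

def calculator(expression: str) -> Number:
    """Evaluate +, -, *, / on numbers by walking the syntax tree (no eval())."""
    def evaluate(node: ast.AST) -> Number:
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](evaluate(node.left), evaluate(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -evaluate(node.operand)
        raise ValueError("unsupported expression")

    tool_calls.append(expression)
    return evaluate(ast.parse(expression, mode="eval").body)

result = calculator("79793+34235")
print(result)       # 114028
print(tool_calls)   # ['79793+34235']
```

The answer now comes from deterministic code rather than from the model's token predictions, and the log gives us the audit trail.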

This is just one example, but imagine the potential of agents that can not only think and answer but also act on the user's behalf. We envision a future where we could ask an agent to create or modify database tables, add new rows or columns based on our analysis, or even connect to an API to fetch data, load it into a database, and build dashboards. The potential of these agents is immense.

However, it's important to acknowledge the risks associated with AI agents, particularly the possibility of making decisions based on incorrect information. If an LLM can hallucinate, it can also act inappropriately on the user's behalf. To protect users from such risks, it is crucial to implement robust validation mechanisms, ensure transparency in agent actions, and establish safeguards that limit the scope of autonomous actions. This includes continuous monitoring, human oversight, and the ability to override or correct the agent's decisions when necessary.
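One way to picture such safeguards is a thin guard between the agent and the database. The sketch below is a simplified assumption, not a complete security control: read-only statements run automatically, anything else needs a human to approve it, and every decision is logged. The names `guarded_execute`, `run`, and `approve` are hypothetical.

```python
from typing import Callable, List

READ_ONLY_PREFIXES = ("select", "show", "describe")

audit_log: List[str] = []  # transparent record of what the agent tried to do

def guarded_execute(sql: str,
                    run: Callable[[str], str],
                    approve: Callable[[str], bool]) -> str:
    """Run read-only SQL directly; route everything else through human approval.

    Note: a prefix check is naive (it ignores multi-statement input, for example);
    a real guard would parse the statement and rely on database permissions too.
    """
    statement = sql.strip().lower()
    if statement.startswith(READ_ONLY_PREFIXES):
        audit_log.append(f"auto-approved: {sql}")
        return run(sql)
    if approve(sql):
        audit_log.append(f"human-approved: {sql}")
        return run(sql)
    audit_log.append(f"blocked: {sql}")
    return "blocked: needs human approval"

# Stubs for illustration: the database call and a reviewer who rejects everything.
run_stub = lambda sql: "ok"
reject_all = lambda sql: False

print(guarded_execute("SELECT * FROM orders", run_stub, reject_all))
print(guarded_execute("DROP TABLE orders", run_stub, reject_all))
print(audit_log)
```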

Conclusion

It's worth noting that open-source frameworks like LangChain and CrewAI offer significant benefits in rapidly developing agentic applications. These frameworks provide pre-built components and abstractions that can dramatically reduce development time and complexity. For researchers or developers looking to quickly prototype or experiment with AI agents, these tools can be invaluable.

However, creating custom tools and agents, while potentially overwhelming, offers greater flexibility and control. The choice between using open-source frameworks or building custom solutions often depends on the specific research approach and project requirements. For instance, projects requiring fine-grained control over agent behavior or integration with specialized tools might benefit more from a custom approach.

During our research, we also explored Anthropic's tool-calling capabilities, which showed promising results. The Anthropic approach offers a balance between flexibility and ease of use, making it an attractive option for many applications.

As AI continues to advance, we're likely to see a shift towards more sophisticated, flexible AI agents capable of performing a complex task or a set of tasks. These agents could potentially revolutionize how businesses handle data, making advanced analytics more accessible and efficient. However, it's crucial to consider the ethical implications and potential limitations of relying too heavily on AI.

We believe that AI agents could revolutionize how we build orchestration workflows. We could develop specialized agents for various roles, such as data analyst agents, data engineering agents, and even data scientist agents. Each of these could handle complex tasks in their domain, collaborating seamlessly to provide comprehensive data solutions.

The rapid evolution of LLM agents presents exciting opportunities. Whether using open-source frameworks for quick prototyping or developing custom solutions for specific needs, the key is to choose the approach that best fits the project's goals and constraints. As these technologies continue to advance, staying informed and adaptable will be crucial for leveraging their full potential in real-world applications. The future of AI agents is not just about automating tasks; it's about augmenting human capabilities and opening up new possibilities in how we interact with and derive insights from data.