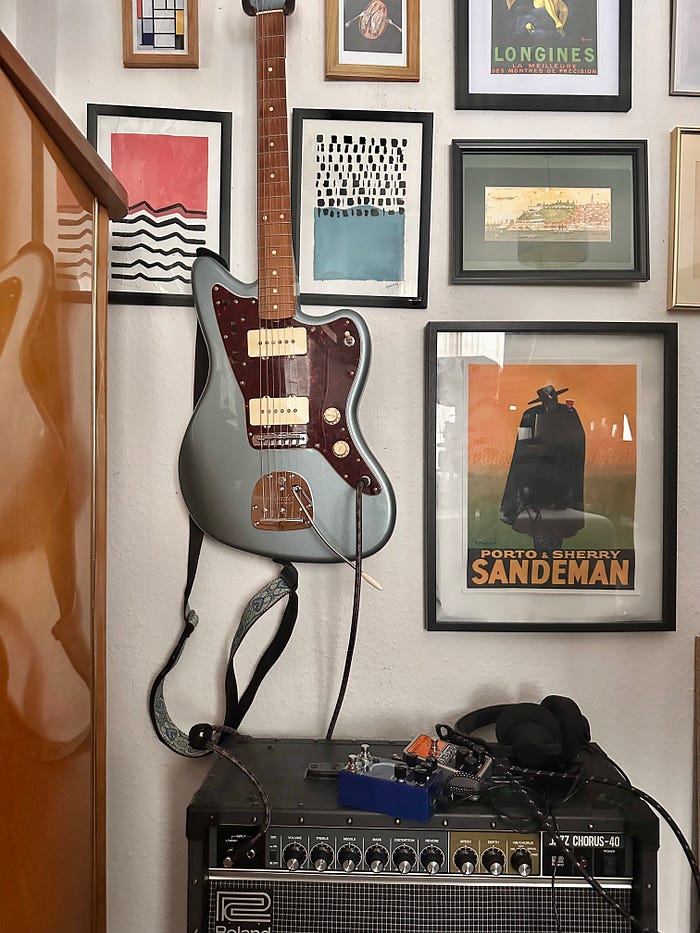

It's been a while since my last post, and I know I promised a part 2. But guess what? Summer finally arrived in Germany, and I got a bit distracted. I also bought the beauty below and decided to revive my inner shoegazer. Between practicing and soaking up the sun, I've been living the good life. But now, it's time to get back on track and dive into the next phase of our nutritional co-pilot project!

Quick recap

Before we build further, let's reflect on the foundation we've laid. In Part 1, we created a first draft for extracting recipes from YouTube videos using a local AI model. This groundwork set the stage for what comes next. For a detailed look at how this was implemented, check out Part 1 of this series.

Enhancing Our Workflow with LangChain and Pydantic

As we move forward with our nutritional co-pilot project, let's highlight two key tools that boost our workflow: LangChain prompt templates and Pydantic parsing.

Prompt templates are a game-changer for generating consistent and efficient responses from our AI models. They give our prompts a uniform structure, making outputs more predictable and reliable. Plus, they let us reuse and customize prompts easily, saving time and reducing redundant code.

Pydantic parsing, on the other hand, checks that the data we extract matches the structure and types we expect, and raises a validation error when it doesn't. Using prompt templates and Pydantic parsing together creates a solid framework for our application, making it easier to manage and scale our solution.
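
As a rough illustration of what that validation looks like in practice, the sketch below feeds one good and one bad payload to a cut-down model. `MiniRecipe` and both payloads are hypothetical and exist only for this example.

```python
import json
from typing import Optional

from pydantic import BaseModel, ValidationError


class MiniRecipe(BaseModel):
    """Cut-down, hypothetical stand-in for the Recipe model."""

    name: str
    calories: Optional[int] = None


good = json.loads('{"name": "Pancakes", "calories": 350}')
bad = json.loads('{"calories": "a lot"}')  # name missing, calories not a number

recipe = MiniRecipe(**good)
print(recipe.name, recipe.calories)

try:
    MiniRecipe(**bad)
    failed_fields = []
except ValidationError as err:
    # Each error entry records the location of the offending field.
    failed_fields = sorted(str(e["loc"][0]) for e in err.errors())
    print(failed_fields)
```

The bad payload never becomes a `MiniRecipe` object, so malformed model output is caught before it reaches the rest of the application.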

Enhanced Data Structure for Improved AI Interactions

We'll take the prompt a step further by adding field descriptions and Docstrings. These enhancements not only make our schema more robust but also improve interactions with LLMs.

Here's how I adjusted the Pydantic model:

Old:

    import json
    from typing import List, Optional

    from pydantic import BaseModel, Field

    class Recipe(BaseModel):
        name: str
        ingredients: List[str]
        protein: Optional[float] = None
        carbs: Optional[float] = None
        fat: Optional[float] = None
        calories: Optional[int] = None
        preparation_steps: str
        preparation_time: Optional[int] = None

New:

    from typing import List, Optional

    from pydantic import BaseModel, Field

    class Ingredient(BaseModel):
        name: str
        quantity: str

    class Recipe(BaseModel):
        """Schema representing a recipe with ingredients (as strings) and their quantities."""

        name: str = Field(description="The name of the recipe.")
        ingredients: List[Ingredient] = Field(description="List of ingredients.")
        # default=None keeps the Optional fields optional; Pydantic v2 would otherwise require them.
        protein: Optional[float] = Field(default=None, description="The amount of protein contained in the recipe.")
        carbs: Optional[float] = Field(default=None, description="The amount of carbs contained in the recipe.")
        fat: Optional[float] = Field(default=None, description="The amount of fat contained in the recipe.")
        calories: Optional[int] = Field(default=None, description="The calories which the recipe contains.")
        preparation_steps: str = Field(description="The preparation steps.")
        preparation_time: Optional[int] = Field(default=None, description="The preparation time in minutes.")
        additional_notes: Optional[str] = Field(default=None, description="Any additional notes.")

Adding field descriptions and a Docstring to our Pydantic model isn't just about aesthetics. There are several practical benefits. LLMs understand and process data more effectively when they know the purpose and context of each field. By providing descriptions, we clarify what each piece of data represents, leading to more accurate responses. Descriptions also guide the generation process.

Clear, simple code improves readability for both human developers and AI assistants. As the schema evolves, descriptions maintain clarity about each field's purpose, making updates easier. They add semantic information to type hints, providing a full picture of each field. They are also included in the JSON schema that Pydantic generates from the model, which is how they reach the LLM through the parser's format instructions.
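
To see where the descriptions end up, we can print the JSON schema Pydantic generates. This is a small sketch with a hypothetical two-field `MiniRecipe` model rather than the full schema; the `hasattr` check is only there so it runs on either Pydantic v1 or v2.

```python
from typing import Optional

from pydantic import BaseModel, Field


class MiniRecipe(BaseModel):
    """Hypothetical two-field recipe used only for this illustration."""

    name: str = Field(description="The name of the recipe.")
    preparation_time: Optional[int] = Field(
        default=None, description="The preparation time in minutes."
    )


# model_json_schema() is the Pydantic v2 spelling; schema() is the v1 one.
if hasattr(MiniRecipe, "model_json_schema"):
    schema = MiniRecipe.model_json_schema()
else:
    schema = MiniRecipe.schema()

# The docstring becomes the schema-level description,
# and each Field description is attached to its property.
print(schema["description"])
for field_name, field_schema in schema["properties"].items():
    print(field_name, "->", field_schema["description"])
```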

Integrating the Enhanced Schema with LangChain

Now that we've enhanced our Pydantic model with field descriptions and a Docstring, let's integrate this schema into our processing pipeline using LangChain's PydanticOutputParser. This integration ensures our outputs are structured and validated according to our schema.

    # Import path for recent LangChain releases
    from langchain_core.output_parsers import PydanticOutputParser

    pydantic_parser = PydanticOutputParser(pydantic_object=Recipe)
    format_instructions = pydantic_parser.get_format_instructions()

The PydanticOutputParser checks that the output generated by the LLM conforms to a predefined schema. By passing our Recipe model to the PydanticOutputParser, we tell the parser to validate the LLM's output against that schema, while get_format_instructions() produces a text description of the schema that we can place in the prompt. This integration ensures the data adheres to the expected structure and types, which makes it easier to process further, whether for storage, analysis, or display.

    # Import paths for recent LangChain releases
    from langchain_core.output_parsers import JsonOutputParser
    from langchain_core.prompts import ChatPromptTemplate, HumanMessagePromptTemplate

    structured_text = "{instruction}\n{format_instructions}"
    message = HumanMessagePromptTemplate.from_template(structured_text)
    prompt = ChatPromptTemplate.from_messages([message])
    output_parser = JsonOutputParser(pydantic_object=Recipe)

In this code snippet, we define a structured text template with placeholders for dynamic content, wrap it in a human message template to keep the instructions consistent, and combine it into a chat prompt template for clear and structured interactions with the language model. The JsonOutputParser then parses the model's reply into a Python dictionary. It uses the Recipe schema only to build its format instructions and does not validate the result, so when validation matters, the pydantic_parser defined earlier can take its place in the chain.

    chain = prompt | llm | output_parser

In the last snippet, we use the LangChain Expression Language (LCEL) to create a processing pipeline using the | operator, which connects different components called "runnables." The chain combines our structured prompt, the language model (Mistral 7B), and the output_parser. The prompt provides clear instructions to the language model, which processes the input and generates a response. This response is then parsed by the output_parser into structured data that follows our Recipe schema. We run the chain with the invoke method, passing in the instruction to extract recipes, the transcript of the video, and the format instructions.

Conclusion

In this post, we enhanced our data model and used LangChain's tools to build a pipeline that produces structured, schema-based outputs from our local AI implementation. These improvements make the output of our nutritional co-pilot more predictable and easier to process downstream.

Stay tuned for Part 3!

References:

- LangChain. (n.d.). https://www.langchain.com/

- Pydantic. (n.d.). GitHub — pydantic/pydantic: Data validation using Python type hints. GitHub. https://github.com/pydantic/pydantic

- TheBloke/Mistral-7B-Instruct-v0.2-GGUF · Hugging Face. (n.d.). https://huggingface.co/TheBloke/Mistral-7B-Instruct-v0.2-GGUF (available under the Apache 2.0 license)