We've all been there. You get a suspicious email, hear a questionable story, or meet someone new. Your first move? You open a browser and start digging. That gut instinct to investigate? That's OSINT in its most basic form. Whether we realize it or not, most of us are already practicing amateur open-source intelligence every day.

OSINT, or Open-Source Intelligence, sounds like jargon fit for a spy movie. But at its heart, it's just the formal name for finding and connecting the dots of information people leave in public view. For cybersecurity pros, journalists, and researchers, it's an indispensable tool. But this power to uncover comes with a deep responsibility not to invade.

So, how do you navigate this digital landscape without crossing lines? How do you play detective without becoming a stalker? This isn't just about the tools you use; it's about the ethical compass you follow.

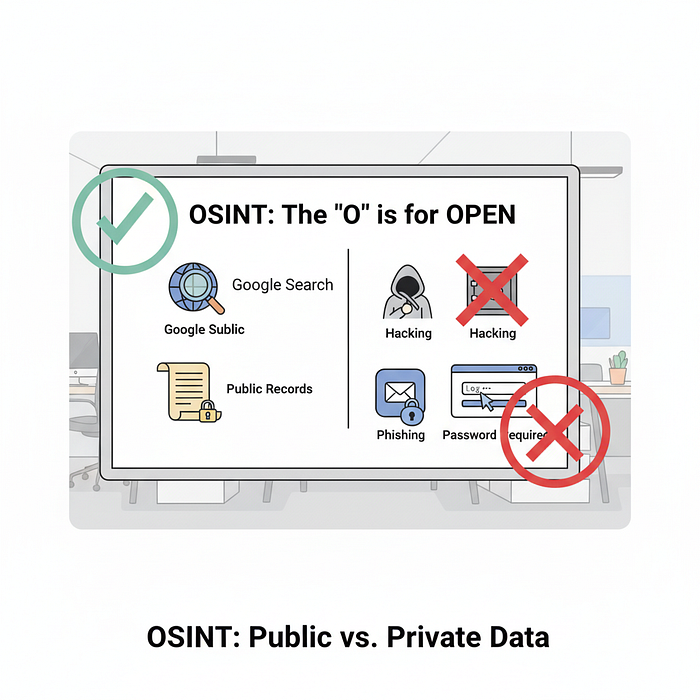

What OSINT Really Means (It's Not Hacking)

I like to think of OSINT as the art of being a digital magpie, but one with a very specific shopping list. You're only picking up the shiny pieces of information that are already out in the open, free for the taking. We're talking about:

- Stuff you can find on Google (the surface web), plus a little bit of the deep web: public databases and records that search engines don't index.

- Public government records — like business filings and property deeds.

- Social media profiles set to "public."

- News archives and academic papers.

- The metadata hidden in photos and documents.

The crucial thing to remember is the "O" for "Open." If you have to hack, phish, or use a password to get in, it's not OSINT. It's that simple. In cybersecurity, this is the foundation of so much good work. It's how an analyst finds a trove of a company's internal documents accidentally left public on a cloud server, or how a researcher tracks a disinformation campaign by tracing fake accounts back to their source. You're working with the digital footprint we all, to some degree, willingly or unwillingly, leave behind.

The Thin Line: Investigation vs. Invasion

This is the heart of the matter. The line between smart investigation and creepy invasion isn't always a bright, clear one. It often comes down to your intent and the context.

Let me give you a couple of real-world contrasts.

The Ethical Investigator: Imagine you're a security professional. You get a phishing email targeting your company. The "From" address looks suspicious. So, you do some digging. You look up the domain's registration details (using a public WHOIS database). You see it was registered just 24 hours ago with fake information. You then search for that domain on threat intelligence platforms and find others have flagged it for malware. You've just used OSINT to protect your organization. Your intent was defense. Your impact was positive.

The Unethical Invader: Now, picture an online argument that gets heated. One person decides to "win" by gathering ammunition. They take the other person's username, find their public social media profiles, cross-reference an old blog post to find their hometown, and then pull a property record to find a relative's name. They then bundle this all up and post it online to shame and intimidate the person. This is doxxing. The information was public, but the intent was malicious, turning a collection of harmless data points into a weapon.

See the difference? One is about protection and truth. The other is about power and harm. It's the difference between being a guardian and being a troll with a research skillset.
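
For the defensive scenario, the "registered just 24 hours ago" check is easy to make repeatable. Here's a minimal sketch, assuming you've already copied the creation date out of a public WHOIS record by hand. The function names and the 30-day threshold are my own illustrative choices, not an industry standard.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Assumed threshold: treat anything younger than 30 days as "newly registered".
NEW_DOMAIN_THRESHOLD = timedelta(days=30)


def domain_age(created_at: datetime, now: Optional[datetime] = None) -> timedelta:
    """Return how long ago the domain was registered."""
    if now is None:
        now = datetime.now(timezone.utc)
    return now - created_at


def is_newly_registered(
    created_at: datetime,
    now: Optional[datetime] = None,
    threshold: timedelta = NEW_DOMAIN_THRESHOLD,
) -> bool:
    """True if the domain is younger than the threshold."""
    return domain_age(created_at, now) < threshold


# Hypothetical dates: the domain was created one day before we checked it.
created = datetime(2024, 5, 1, 9, 0, tzinfo=timezone.utc)
checked = datetime(2024, 5, 2, 9, 0, tzinfo=timezone.utc)
print(is_newly_registered(created, checked))  # True
```

A young domain isn't proof of anything on its own. It's one signal to corroborate against other sources, like the threat intelligence lookup in the example above.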

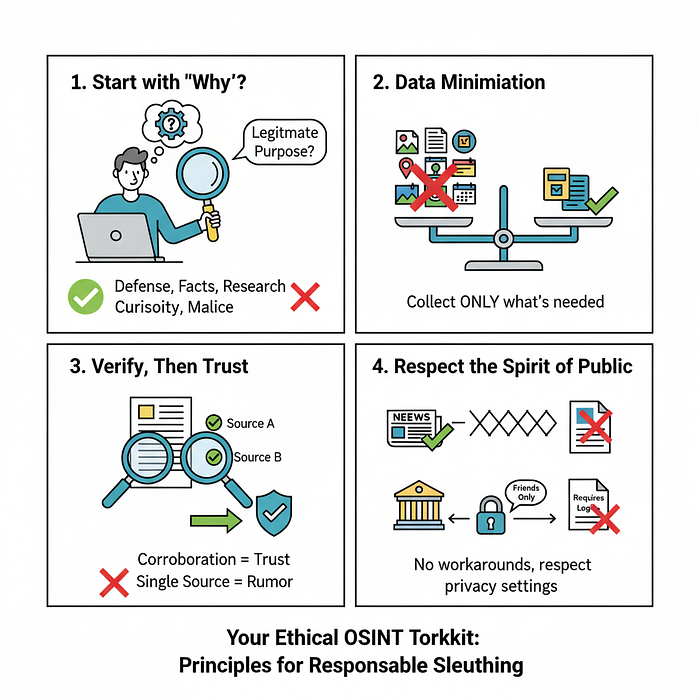

Your Ethical OSINT Toolkit: Best Practices for the Responsible Sleuth

Knowing the line exists is one thing; knowing how to stay on the right side of it is another. Here's a practical toolkit of principles I try to live by.

- Start with "Why?": Before you fall down the rabbit hole, pause and ask yourself: "What is my legitimate purpose here?" If the answer is "I'm just curious" or "I don't like this person," close the tabs. Your purpose should be defensible — like improving security, verifying facts, or conducting authorized research.

- Embrace Data Minimization: This is a big one. Just because you can find out everything about someone doesn't mean you should. Only collect what you directly need. Investigating a fake company? You need the domain info, not the CEO's daughter's school photos.

- Verify, Then Trust: The internet is a web of lies and misinformation. Never trust a single source. That LinkedIn profile looks legit? Great. Now, cross-reference it with a business filing, a GitHub account, or a news mention. Corroboration is your best friend. An unverified finding is just a rumor.

- Respect the "Spirit of Public": This is a nuanced one. Technically, an unlisted YouTube video isn't "public," even if you have the link. A Facebook post visible to "Friends of Friends" is in a gray zone. My rule of thumb: if I had to use a workaround or found it through a leak, it's probably off-limits. Err on the side of respecting the intent of the privacy setting, not just the technical loophole.

- Clean Up After Yourself: You've finished your investigation. What now? Don't just hoard the personal data you've collected. Securely delete it. Holding onto it creates an unnecessary risk. Think of it like a crime scene investigator — once the case is closed, you don't keep the evidence in your garage.
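
Two of these principles, data minimization and cleaning up, translate fairly directly into code. Here's a rough sketch of how I might enforce them in a collection script. The field names, the allowlist, and the 90-day retention window are all assumptions for illustration; your own scope and policy will differ.

```python
from datetime import datetime, timedelta, timezone

# Assumed allowlist for a fake-company investigation: domain facts only.
ALLOWED_FIELDS = {"domain", "registrar", "created_at", "name_servers"}

# Assumed retention window for closed cases.
RETENTION = timedelta(days=90)


def minimize(record: dict, allowed: set = ALLOWED_FIELDS) -> dict:
    """Keep only the fields the investigation actually needs."""
    return {key: value for key, value in record.items() if key in allowed}


def is_expired(closed_at: datetime, now: datetime,
               retention: timedelta = RETENTION) -> bool:
    """True once a closed case has outlived its retention window."""
    return now - closed_at > retention


# Hypothetical raw result, with personal details that are out of scope.
raw = {
    "domain": "example.com",
    "registrar": "Example Registrar",
    "ceo_family_photos": "out of scope",
    "home_address": "out of scope",
}

kept = minimize(raw)
print(sorted(kept))  # ['domain', 'registrar']
```

The allowlist is the important design choice here: instead of listing what to throw away, you list what you're allowed to keep, so anything unexpected is dropped by default. The `is_expired` check only tells you when a case is due for deletion; the secure deletion itself still has to happen.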

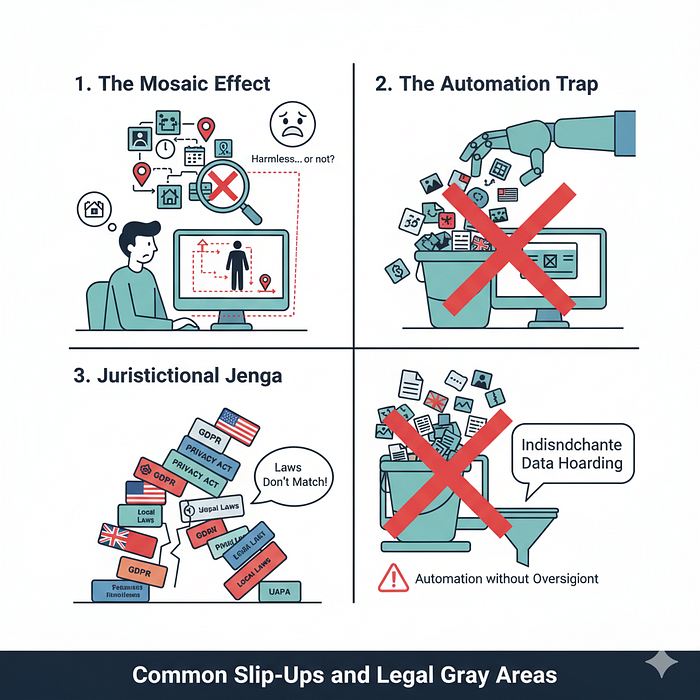

Common Slip-Ups and Legal Gray Areas

Even with the best intentions, it's surprisingly easy to cross a line without meaning to. I've seen smart people stumble into these common traps — and I've learned from a few myself.

- The Mosaic Effect: Be careful when piecing together public information. Individual data points (a social media check-in, a public run on a fitness app, a calendar note) seem harmless alone. But combined, they can reveal someone's full daily routine and location. You might not intend to stalk someone, but by assembling these pieces, that's effectively what you're doing. Always consider the complete picture you're creating, not just the individual parts.

- The Automation Trap: Powerful tools like Maltego or SpiderFoot are amazing, but they can make you lazy. They suck up data indiscriminately, often pulling in personal details you don't need and shouldn't have. Automation without oversight is how ethical investigations can quickly veer into unethical data hoarding.

- Jurisdictional Jenga: The law hasn't kept up with technology. What's legal for you to access in your country might be a privacy violation in the subject's country. Laws like the EU's GDPR set strict rules for processing personal data, and those rules still apply when the data is publicly available. When in doubt, it's better to be conservative.

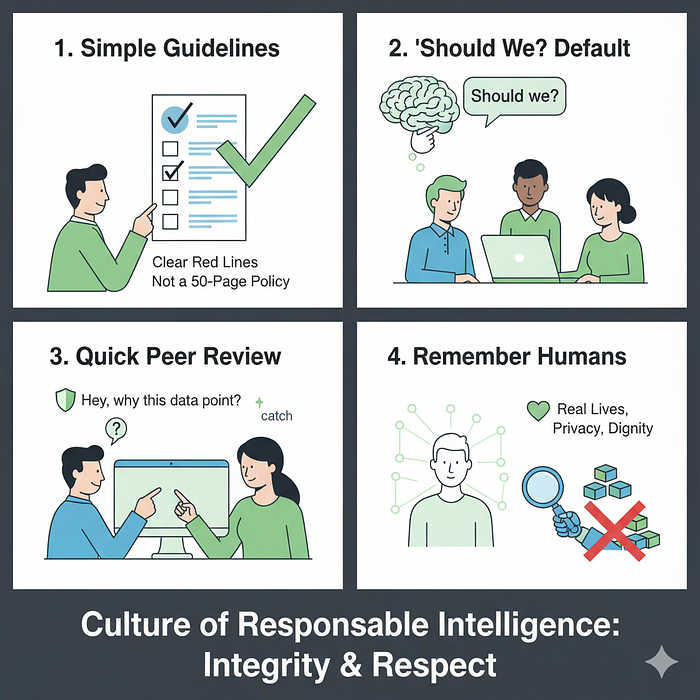

Building a Culture of Responsible Intelligence

Ethical OSINT can't just be one person's concern — it needs to be baked into how your whole team operates. Here's what that looks like in practice:

First, make your guidelines simple and memorable. Instead of a 50-page policy nobody reads, create a one-page checklist with clear red lines. Something people can actually remember and use.

Second, make "should we" your team's default question. It's not enough to ask "can we find this information?" The more important question is always "should we?" Is this actually necessary, or are we just being nosy?

Third, implement quick peer reviews. Before wrapping up an investigation, have someone glance at your process. A simple "hey, walk me through why we needed this data point?" can catch ethical slips before they happen.

Finally, remember these aren't abstract data points — they're traces of real human lives. That IP address belongs to a person. That social media post came from someone with feelings and a right to privacy.

At the end of the day, your most important OSINT tool isn't some fancy software — it's your integrity. Good OSINT is built on trust: trust in your process, trust from the people you're protecting, and the confidence that you're making the internet a better place, not a creepier one.

It's about proving that in an age of information overload, the smartest thing you can be is responsible.