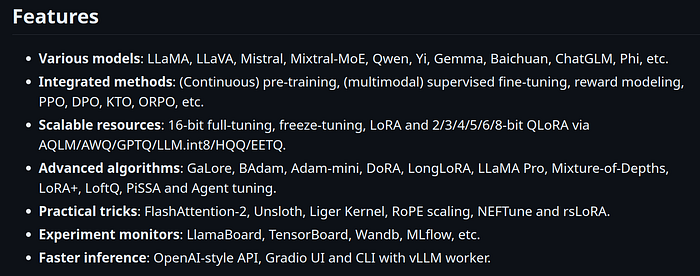

This will be a short, to-the-point article. Use Llama-Factory because it is very easy to set up compared to other frameworks (basically, far fewer dependency issues). Below are the features it supports.

Just run the 4 lines below for setup:

git clone --depth 1 https://github.com/hiyouga/LLaMA-Factory.git

cd LLaMA-Factory

pip install -e ".[torch,metrics]"

pip install --no-deps -e .

Use it in a fresh environment to prevent dependency issues.

After setting it up, there are 2 ways to use Llama-Factory:

- Using GUI

- Directly from Terminal (I recommend this)

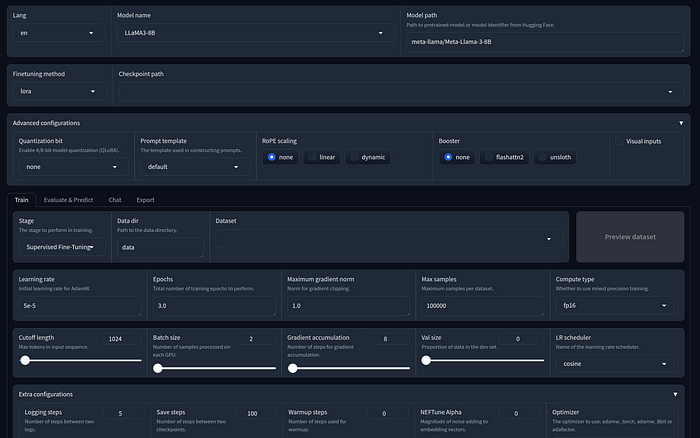

You can use the GUI to see which arguments are available:

cd LLaMA-Factory

llamafactory-cli webui

In the GUI, you can choose the arguments, click Preview Command, and then use the generated command to train the model without the GUI.

An example of how to fine-tune Llama-3-8B with supervised fine-tuning (SFT) using 4-bit quantized LoRA:

llamafactory-cli train \

--stage sft \

--do_train True \

--model_name_or_path NousResearch/Meta-Llama-3-8B-Instruct \

--preprocessing_num_workers 16 \

--finetuning_type lora \

--quantization_bit 4 \

--template llama3 \

--flash_attn auto \

--dataset_dir data \

--dataset alpaca_en_demo \

--cutoff_len 1024 \

--learning_rate 5e-05 \

--num_train_epochs 2.0 \

--max_samples 100000 \

--per_device_train_batch_size 8 \

--gradient_accumulation_steps 8 \

--lr_scheduler_type cosine \

--max_grad_norm 1.0 \

--logging_steps 5 \

--save_steps 100 \

--warmup_steps 0 \

--optim adamw_torch \

--packing False \

--report_to none \

--output_dir saves/LLaMA3-8B/lora/trainTest \

--fp16 True \

--plot_loss True \

--ddp_timeout 180000000 \

--include_num_input_tokens_seen True \

--lora_rank 8 \

--lora_alpha 16 \

--lora_dropout 0 \

--lora_target all

How to train your model on a custom dataset?

Just drop the dataset into the ./data folder, in the same format as the example datasets already there. The format differs for SFT, DPO, KTO, etc. Then add your dataset's information to data/dataset_info.json and you are good to go.
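As a rough illustration of those two steps, the sketch below writes a tiny Alpaca-style SFT file and registers it in dataset_info.json. It assumes the default Alpaca column names (instruction, input, output) used by the bundled SFT example datasets. The helper `register_dataset` and the dataset name `my_sft_demo` are hypothetical, and DPO or KTO data would need a different record layout.

```python
import json
from pathlib import Path


def register_dataset(data_dir, name, records):
    """Write `records` to <data_dir>/<name>.json and register it in dataset_info.json.

    Assumption: `records` are Alpaca-style dicts with "instruction", "input"
    and "output" keys, so no explicit column mapping is added to the entry.
    """
    data_dir = Path(data_dir)
    data_dir.mkdir(parents=True, exist_ok=True)

    # 1) Drop the dataset file into the data folder.
    file_name = f"{name}.json"
    (data_dir / file_name).write_text(
        json.dumps(records, indent=2, ensure_ascii=False), encoding="utf-8"
    )

    # 2) Add (or overwrite) its entry in dataset_info.json, keeping other entries.
    info_path = data_dir / "dataset_info.json"
    if info_path.exists():
        info = json.loads(info_path.read_text(encoding="utf-8"))
    else:
        info = {}
    info[name] = {"file_name": file_name}
    info_path.write_text(
        json.dumps(info, indent=2, ensure_ascii=False), encoding="utf-8"
    )
    return info[name]
```

Calling `register_dataset("data", "my_sft_demo", records)` from the LLaMA-Factory root should then let you pass `--dataset my_sft_demo` to the training command, provided the records really follow the Alpaca layout.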

To run inference on the base model:

llamafactory-cli chat examples/inference/llama3.yaml

You can use any supported model by editing the yaml file:

model_name_or_path: NousResearch/Meta-Llama-3.1-8B-Instruct

template: llama3

To run inference on the LoRA fine-tuned model:

llamafactory-cli chat examples/inference/llama3_lora_sft.yaml

model_name_or_path: NousResearch/Meta-Llama-3-8B-Instruct

adapter_name_or_path: saves/LLaMA3-8B/lora/train_testing_2

template: llama3

finetuning_type: lora

The inference commands above basically run src/llamafactory/chat/chat_model.py, so you can edit this Python file directly to run inference on a custom dataset.
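An alternative to editing that file in place is a small driver script that loops over your records and calls the chat model for each one. The sketch below is only that, a sketch: `build_messages`, `run_batch` and `make_llamafactory_chat_fn` are hypothetical helpers, and it assumes that `ChatModel(args).chat(messages)` returns a list of responses exposing `.response_text`, which may differ between LLaMA-Factory versions.

```python
def build_messages(record):
    """Turn one Alpaca-style record into a single-turn chat message list."""
    prompt = record["instruction"]
    if record.get("input"):
        prompt = f"{prompt}\n{record['input']}"
    return [{"role": "user", "content": prompt}]


def run_batch(records, chat_fn):
    """Call chat_fn(messages) -> str for every record and collect the outputs."""
    results = []
    for record in records:
        messages = build_messages(record)
        results.append(
            {"prompt": messages[0]["content"], "prediction": chat_fn(messages)}
        )
    return results


def make_llamafactory_chat_fn(args):
    """Wrap LLaMA-Factory's ChatModel as a plain messages -> text function.

    Assumption: ChatModel accepts a dict with the same keys as the inference
    yaml, and chat() returns a list of objects with a `response_text` field.
    """
    # Imported lazily so the helpers above work without LLaMA-Factory installed.
    from llamafactory.chat import ChatModel

    chat_model = ChatModel(args)
    return lambda messages: chat_model.chat(messages)[0].response_text
```

With LLaMA-Factory installed, `args` would mirror the yaml shown earlier (model_name_or_path, adapter_name_or_path, template, finetuning_type), and `run_batch(records, make_llamafactory_chat_fn(args))` would return one prediction per record.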

Happy Finetuning!!!