Stable Diffusion 3 Medium is a text-to-image model built on the Multimodal Diffusion Transformer (MMDiT) architecture. It improves on earlier Stable Diffusion releases in image quality, complex prompt understanding, rendering of text within images, and resource efficiency.

Stable Diffusion 3 Medium is Stability AI's latest image generation model, supporting both text-to-image and image-to-image generation.

SD3 Medium weights are now available for download on Hugging Face: Check Here

📋 Model Overview

- Created by: Stability AI

- Model Type: MMDiT text-to-image generative model

- Description: This model generates images from text prompts. It uses three fixed, pre-trained text encoders: OpenCLIP-ViT/G, CLIP-ViT/L, and T5-xxl.

But before we go into details, let's see it in action.

🛠️ Steps we need to follow:

Log in to Hugging Face and accept the license terms on the Stable Diffusion 3 Medium model page.

⚙️ Setting Up the Project Environment: Best Practices


### Create a conda environment after cloning the repository

```bash
conda create -p venv python -y
```

### Activate the conda environment

```bash
# `source activate` is deprecated in conda 4.4 and later; use `conda activate`
conda activate ./venv
```

💬 Define Project Requirements

Before using SD3 Medium with Hugging Face, ensure you install the following libraries and log in using your Hugging Face token.

### requirements.txt

torch

gradio

diffusers

transformers

sentencepiece

protobuf

accelerate

huggingface_hub[cli]

### install the requirements

```bash

pip install -r requirements.txt

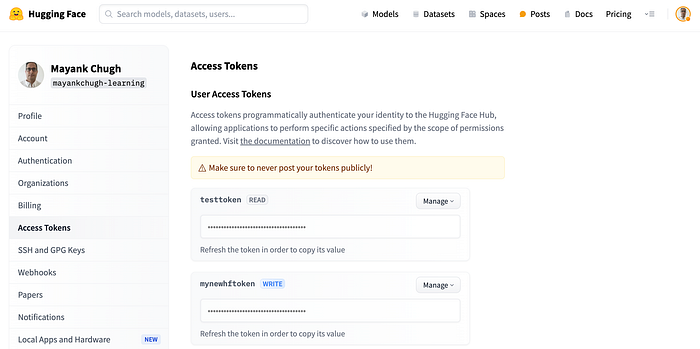

```🔐 HuggingFace token
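One way to log in from Python is sketched below. It assumes the token is stored in an environment variable named `HF_TOKEN`; `hf_login` is a hypothetical helper, while `login` is the function provided by `huggingface_hub`. Running `huggingface-cli login` in a terminal is the interactive alternative.

```python
import os


def hf_login(env=None, login_fn=None):
    """Log in to the Hugging Face Hub with the token found in HF_TOKEN.

    Returns True when a token was found and passed on, False otherwise.
    """
    env = os.environ if env is None else env
    token = env.get("HF_TOKEN", "").strip()
    if not token:
        return False
    if login_fn is None:
        # Imported lazily so this helper loads even without huggingface_hub.
        from huggingface_hub import login as login_fn
    login_fn(token=token)
    return True
```

Calling `hf_login()` once before loading the pipeline should be enough, provided the token's account has accepted the model's license terms.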

🤖 Implementing the Gradio App

This code needs a GPU to run efficiently, so make sure your platform provides one. It can also be executed on the CPU, but generation will be much slower, and the exact time depends on your system configuration.

Run the code below:

```python
import torch
import gradio as gr
from diffusers import StableDiffusion3Pipeline


def image_generator(prompt):
    # Use the GPU when one is available, otherwise fall back to the CPU
    device = "cuda" if torch.cuda.is_available() else "cpu"

    # Load the SD3 Medium pipeline from the Hugging Face Hub
    pipeline = StableDiffusion3Pipeline.from_pretrained(
        "stabilityai/stable-diffusion-3-medium-diffusers",
        torch_dtype=torch.float16 if device == "cuda" else torch.float32,
        text_encoder_3=None,
        tokenizer_3=None,
    )

    # Keep sub-models on the CPU until they are needed, to save GPU memory
    pipeline.enable_model_cpu_offload()
    # pipeline.to(device)

    image = pipeline(
        prompt=prompt,
        negative_prompt="blurred, ugly, watermark, low resolution, blurry",
        num_inference_steps=40,
        height=1024,
        width=1024,
        guidance_scale=9.0,
    ).images[0]
    return image
```

📦 Imports:

- `torch`: A popular deep learning library used for tensor computations and building neural networks.
- `gradio`: A library for creating interactive user interfaces for machine learning models.
- `StableDiffusion3Pipeline` from `diffusers`: A pipeline from the `diffusers` library that facilitates the use of Stable Diffusion 3 for text-to-image generation.

📝 Function Definition:

- `image_generator(prompt)`: A function that takes a text prompt as input and generates an image based on that prompt.

🖥️ Device Selection:

- The code checks if a CUDA-compatible GPU is available. If so, it sets the device to "cuda" for GPU acceleration. If not, it defaults to using the CPU.

🏗️ Pipeline Initialization:

- `StableDiffusion3Pipeline.from_pretrained(...)`: Loads a pre-trained Stable Diffusion 3 model from the specified repository.
- `torch_dtype`: Specifies the data type for tensor computations. Uses `float16` on GPUs (to save memory and speed up computation) and `float32` on CPUs.
- `text_encoder_3` and `tokenizer_3` are set to `None`, which skips loading the T5-xxl text encoder and its tokenizer. The pipeline then relies on the two CLIP encoders only, which lowers memory use considerably at the cost of a small loss in quality.
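To make the `float16` versus `float32` trade-off concrete, here is a rough back-of-the-envelope sketch. It assumes roughly 2 billion parameters for the SD3 Medium transformer and counts weights only, ignoring the text encoders, the VAE, and activations; `weight_memory_gib` is a hypothetical helper, not part of any library.

```python
BYTES_PER_PARAM = {"float16": 2, "float32": 4}


def weight_memory_gib(num_params, dtype):
    """Approximate memory needed to hold the weights alone, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / 1024**3


# Assumed, approximate parameter count for the MMDiT transformer.
MMDIT_PARAMS = 2_000_000_000

for dtype in ("float16", "float32"):
    print(f"{dtype}: ~{weight_memory_gib(MMDIT_PARAMS, dtype):.1f} GiB")
```

Under these assumptions, `float32` weights take exactly twice the space of `float16` weights, which is why half precision is the usual choice on memory-constrained GPUs.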

⚙️ Model Offloading:

- `pipeline.enable_model_cpu_offload()`: Enables offloading parts of the model to the CPU when they are not in use to save GPU memory.
- The commented-out `pipeline.to(device)` line would move the entire pipeline to the specified device (GPU or CPU), but it's commented out, likely because the `enable_model_cpu_offload` method is handling memory management.
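Model CPU offload is designed for machines with a GPU, so one possible way to keep the function usable on CPU-only systems is to choose between the two calls at run time. This is a sketch rather than the article's code: `place_pipeline` is a hypothetical helper, and it only assumes that the pipeline object exposes `enable_model_cpu_offload()` and `to()`, as diffusers pipelines do.

```python
def place_pipeline(pipeline, device):
    """Enable CPU offload on CUDA machines; otherwise move the pipeline to `device`."""
    if device == "cuda":
        # Offload handles device placement itself, so .to("cuda") is not called.
        pipeline.enable_model_cpu_offload()
    else:
        pipeline.to(device)
    return pipeline
```

Inside `image_generator`, a call such as `place_pipeline(pipeline, device)` could stand in for the unconditional `enable_model_cpu_offload()` call.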

🖼️ Image Generation:

- The `pipeline` object is called with several parameters to generate an image:
  - `prompt`: The text prompt describing what the generated image should depict.
  - `negative_prompt`: A comma-separated string of undesirable attributes to avoid in the generated image (e.g., "blurred, ugly, watermark").
  - `num_inference_steps`: The number of denoising steps the model takes to generate the image. Here it's set to 40; the default in the `StableDiffusion3Pipeline` call signature is 28 in current `diffusers` releases. More steps generally improve quality, with diminishing returns, but take longer to compute. Adjust this based on your needs.
  - `height` and `width`: Dimensions of the generated image, both set to 1024 pixels.
  - `guidance_scale`: Controls how strongly the model follows the prompt versus generating more varied results. A higher value makes the image match the prompt more closely. Here, it's set to 9.0.
- The `pipeline` call returns an output object whose `.images` attribute is a list of images, and `.images[0]` extracts the first image from this list.
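To illustrate what `guidance_scale` does, the sketch below applies the standard classifier-free guidance rule to plain Python lists. It assumes the usual formulation, in which the model makes one prediction conditioned on the prompt and one conditioned on the negative (or empty) prompt, and the guidance scale extrapolates from the second toward the first. The function name and the toy numbers are illustrative only.

```python
def apply_guidance(uncond, cond, guidance_scale):
    """Combine two noise predictions: uncond + scale * (cond - uncond)."""
    return [u + guidance_scale * (c - u) for u, c in zip(uncond, cond)]


uncond = [0.0, 1.0]  # toy prediction for the negative (or empty) prompt
cond = [1.0, 3.0]    # toy prediction for the text prompt

# A scale of 1 reproduces the conditional prediction unchanged.
print(apply_guidance(uncond, cond, 1.0))
# Larger scales push the result further along the direction cond - uncond.
print(apply_guidance(uncond, cond, 9.0))
```

This also shows why the negative prompt matters: it defines the prediction that the result is pushed away from.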

🔄 Return Statement:

- The generated image is returned as the output of the `image_generator` function.

💭 📬 Testing the code

```python
image_generator("Indian cricket team winning world cup")
```

Would you like to see the image generated by the code? See below.

📱💬 Gradio code — frontend app
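A minimal sketch of how the generator could be wired to a Gradio interface, assuming a function with the `image_generator(prompt)` signature used in this article that returns a PIL image. `make_handler` and `build_demo` are hypothetical helper names, not part of Gradio; `gr.Interface`, `gr.Textbox`, and `gr.Image` are Gradio components.

```python
def make_handler(generate_fn):
    """Wrap a prompt -> image function with basic input validation."""

    def handler(prompt):
        prompt = (prompt or "").strip()
        if not prompt:
            raise ValueError("Please enter a prompt.")
        return generate_fn(prompt)

    return handler


def build_demo(generate_fn):
    """Build a Gradio interface with one text box in and one image out."""
    import gradio as gr  # imported lazily; requires the gradio package

    return gr.Interface(
        fn=make_handler(generate_fn),
        inputs=gr.Textbox(label="Prompt", lines=2),
        outputs=gr.Image(label="Generated image", type="pil"),
        title="Stable Diffusion 3 Medium",
    )
```

With `image_generator` defined, `build_demo(image_generator).launch()` would start the local web app.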

```python
import torch
from diffusers import StableDiffusion3Pipeline


def image_generator(prompt):
    device = "cuda" if torch.cuda.is_available() else "cpu"
    pipeline = StableDiffusion3Pipeline.from_pretrained(
        "stabilityai/stable-diffusion-3-medium-diffusers",
        torch_dtype=torch.float16 if device == "cuda" else torch.float32,
        text_encoder_3=None,
        tokenizer_3=None,
    )
    # CPU offload manages device placement itself, so the pipeline is not
    # also moved manually with pipeline.to(device)
    pipeline.enable_model_cpu_offload()

    image = pipeline(
        prompt=prompt,
        negative_prompt="blurred, ugly, watermark, low resolution, blurry",
        num_inference_steps=40,
        height=1024,
        width=1024,
        guidance_scale=9.0,
    ).images[0]
    return image
```

Stability AI offers some initial free credits (Get your API key here), which you can use to generate images on platforms like Fireworks AI, in case you prefer a GUI to the Hugging Face pipeline.

For direct API access to the model, check out the Stability AI page: Check Here. (A detailed blog post on API access will follow.)

Conclusion

Stable Diffusion 3 marks a major leap forward in AI-driven image generation. Its enhanced capabilities in text comprehension, image quality, and performance offer new opportunities for developers, artists, and enthusiasts to explore their creativity.

By applying the techniques and optimizations outlined in this article, you can customize Stable Diffusion 3 to suit your specific requirements, whether you're using cloud-based solutions or local GPU setups. Experimenting with various prompts and settings will help you uncover the full potential of this powerful tool, enabling you to bring your imaginative ideas to life.

As AI-generated imagery continues to evolve rapidly, Stable Diffusion 3 leads this exciting transformation. We are just beginning to see the creative possibilities that future iterations will bring. So, dive in, experiment, and let your creativity soar with Stable Diffusion 3!