Disclaimer: The code in this article is for demonstration purposes only. Use it only in sandboxed test environments, and treat it as illustrative rather than complete or fully accurate.

The Last Great War

Although I rarely watch TV, one of my favorite activities is watching the Transformers cartoon with my oldest child. He is obsessed: he can narrate most scenes and builds popular characters out of Lego as he watches. Transformers is about a war between the good bots, the Autobots, led by Optimus Prime, and the bad bots, the Decepticons, led by the formidable Megatron.

"The Decepticons are a malevolent race of robot warriors, brutal. The Decepticons are driven by a single undeviating goal: total domination of the universe."

One episode explored the stealthy tactics the villainous bots use to evade human detection, revealing that only the noble bots have the sharp acumen needed to spot the Decepticons and stop their nefarious plans in their tracks. The episode inspired this piece because it aligns with one of my core security values: "Developers shouldn't have to make security decisions; systems should."

Not all visitors are welcome:

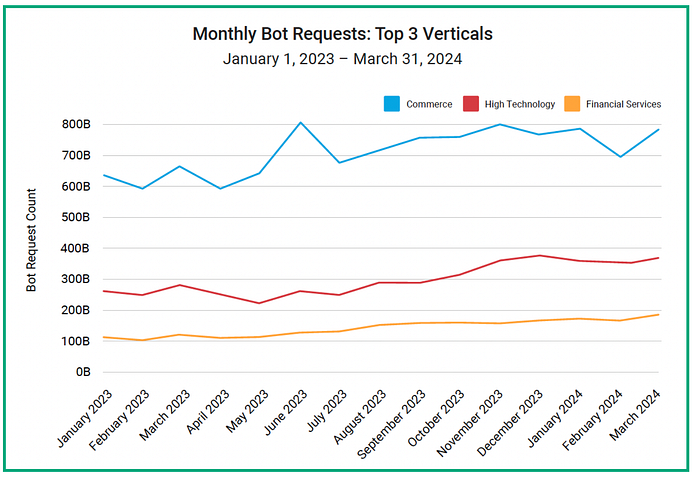

Research by Barracuda Networks revealed that bots accounted for nearly two-thirds of global internet traffic in early 2021, with malicious bots alone making up almost 40% of all traffic. This prevalence of non-human activity, which many people are unaware of, is reshaping the digital landscape and presents both opportunities and significant challenges.

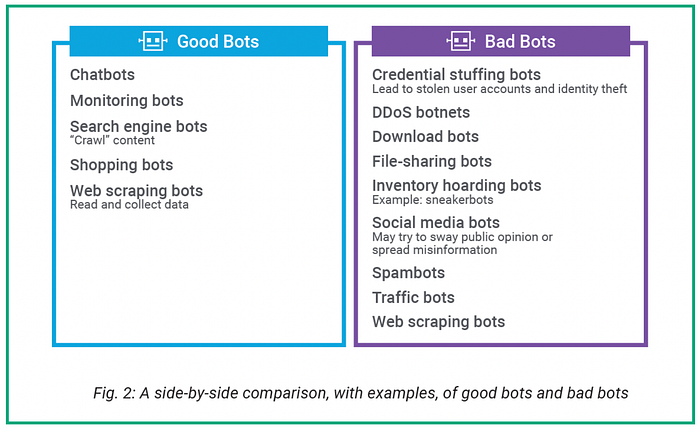

Bots, or internet robots, are automated software applications designed to perform specific online tasks. They range from benign and essential, like search engine crawlers, to malicious and disruptive, such as those engaged in click fraud or DDoS attacks. Understanding this ecosystem is essential for anyone involved in digital operations.

Types of Bots:

- Click Bots: Simulate ad clicks, skewing analytics and draining ad budgets.

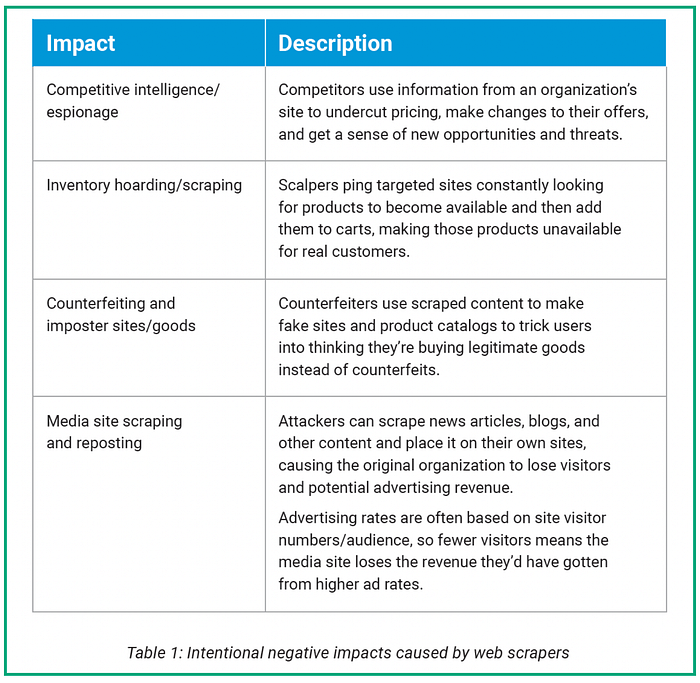

- Scraper Bots: Harvest content and data, often detrimental to original creators.

- Spam Bots: Flood comment sections and inboxes with unwanted content.

- Impersonator Bots: Mimic human behavior to bypass security measures.

- Beneficial Bots: Include search engine crawlers and monitoring bots.

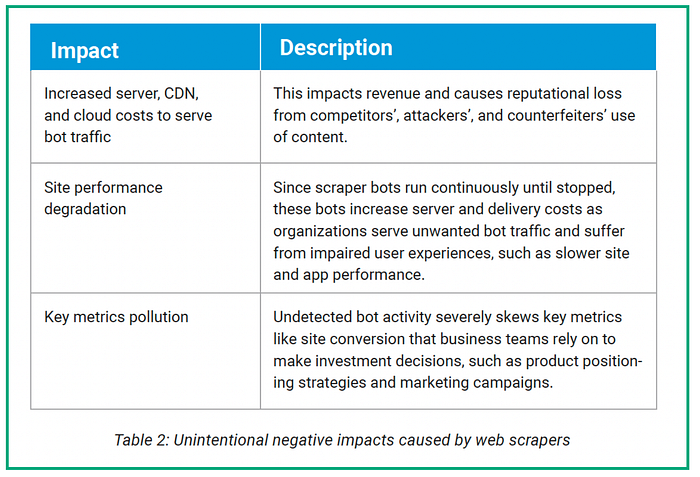
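Beneficial crawlers are easy to impersonate, because any bot can copy a crawler's User-Agent string. Google's documentation on verifying Googlebot describes a DNS round trip: reverse-resolve the client IP, confirm the hostname ends in googlebot.com or google.com, then forward-resolve that hostname and confirm it maps back to the same IP. The sketch below assumes that approach; the function name, the suffix list, and the injectable lookup parameters are hypothetical, added so the logic can be exercised without network access. It only handles IPv4, because `socket.gethostbyname_ex` does.

```python
import socket
from typing import Callable, Iterable, List, Tuple

# Signature shared by socket.gethostbyaddr and socket.gethostbyname_ex.
Lookup = Callable[[str], Tuple[str, List[str], List[str]]]


def is_verified_crawler(
    ip: str,
    allowed_suffixes: Iterable[str] = (".googlebot.com", ".google.com"),
    reverse_lookup: Lookup = socket.gethostbyaddr,
    forward_lookup: Lookup = socket.gethostbyname_ex,
) -> bool:
    """Return True if `ip` reverse-resolves to an allowed crawler domain
    and that hostname forward-resolves back to the same IP (IPv4 only)."""
    try:
        hostname, _aliases, _addrs = reverse_lookup(ip)
    except OSError:  # covers socket.herror and socket.gaierror
        return False
    # The leading dot prevents look-alikes such as "evilgoogle.com" from matching.
    if not hostname.endswith(tuple(allowed_suffixes)):
        return False
    try:
        _name, _aliases, addresses = forward_lookup(hostname)
    except OSError:
        return False
    # Without this round trip, anyone controlling reverse DNS for their own
    # IP range could point it at a crawler-looking hostname.
    return ip in addresses
```

DNS lookups are slow, so a real deployment would cache the result per IP rather than resolving on every request.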

The impact of bot traffic goes well beyond annoyance: it can degrade website performance, distort analytics data, and cut into revenue. With digital ad fraud projected to cost $100 billion by 2023, understanding and mitigating bot traffic has become crucial.

Web Scraping Evasion Techniques: A Technical Analysis

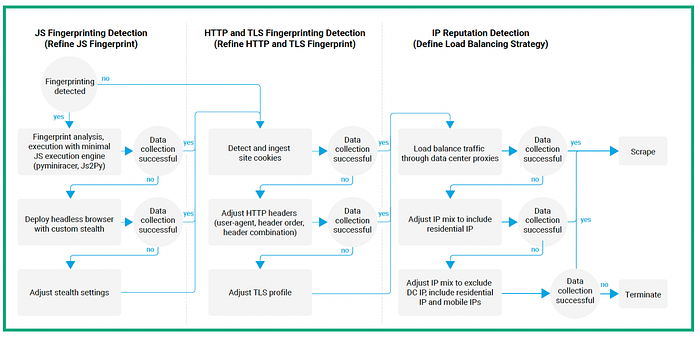

As defensive measures evolve, so do the techniques employed by sophisticated web scrapers. Let's examine the advanced evasion methods used by modern bots to circumvent detection:

1. JavaScript Fingerprinting Evasion:

- Minimal JS Execution: Bots use lightweight JS engines to handle basic operations without triggering alarms.

- Headless Browser Deployment: More advanced bots utilize headless browsers with stealth plugins to mimic human-like interactions.

Example (Python with Selenium):

```python
from selenium import webdriver
from selenium.webdriver.chrome.options import Options

chrome_options = Options()
chrome_options.add_argument("--headless")
# Chrome expects width,height separated by a comma, not "1920x1080".
chrome_options.add_argument("--window-size=1920,1080")

driver = webdriver.Chrome(options=chrome_options)
try:
    driver.get("https://example.com")
    # Collect the browser properties a fingerprinting script would typically read.
    fingerprint = driver.execute_script("""
        return {
            userAgent: navigator.userAgent,
            language: navigator.language,
            screenRes: window.screen.width + 'x' + window.screen.height,
            timezone: Intl.DateTimeFormat().resolvedOptions().timeZone
        };
    """)
    print(fingerprint)
finally:
    # Always shut down the browser, even if the page load fails.
    driver.quit()
```

2. HTTP and TLS Fingerprinting Circumvention:

- Header Spoofing: Bots adjust HTTP headers to match popular browsers.

- TLS Profile Manipulation: Advanced bots modify TLS parameters to evade fingerprinting.

Example (Python with custom SSL context):

```python
import ssl

import requests
from requests.adapters import HTTPAdapter
from urllib3.poolmanager import PoolManager
from urllib3.util.ssl_ import create_urllib3_context


class CustomHttpAdapter(HTTPAdapter):
    """HTTPAdapter that uses a caller-supplied SSL context for every connection."""

    def __init__(self, ssl_context=None, **kwargs):
        # Must be set before super().__init__(), which calls init_poolmanager().
        self.ssl_context = ssl_context
        super().__init__(**kwargs)

    def init_poolmanager(self, connections, maxsize, block=False, **pool_kwargs):
        self.poolmanager = PoolManager(
            num_pools=connections,
            maxsize=maxsize,
            block=block,
            ssl_context=self.ssl_context,
            **pool_kwargs,
        )


def create_custom_ssl_context():
    context = create_urllib3_context()
    # Restrict the TLS 1.2 cipher list, which changes the ClientHello fingerprint.
    # (set_ciphers does not affect TLS 1.3 cipher suites.)
    context.set_ciphers("ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256")
    # Refuse anything older than TLS 1.2 (replaces the deprecated OP_NO_* flags).
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


session = requests.Session()
session.mount("https://", CustomHttpAdapter(ssl_context=create_custom_ssl_context()))

# howsmyssl.com reports the TLS parameters the server saw from this client.
response = session.get("https://www.howsmyssl.com/a/check", timeout=10)
print(response.json())
```

3. IP Reputation Evasion:

- Proxy Rotation: Bots use pools of proxies to distribute traffic and avoid IP-based rate limiting.

- Residential and Mobile IP Usage: Advanced bots leverage residential or mobile IPs to appear more human-like.

Example (Python with proxy rotation):

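```python
from itertools import cycle

import requests

# Placeholder proxy endpoints; "https" entries are needed for HTTPS URLs.
proxies = [
    {"http": "http://proxy1:8080", "https": "http://proxy1:8080"},
    {"http": "http://proxy2:8080", "https": "http://proxy2:8080"},
    {"http": "http://proxy3:8080", "https": "http://proxy3:8080"},
]

# Cycle through the pool so consecutive requests leave from different IPs.
proxy_pool = cycle(proxies)

for i in range(5):
    proxy = next(proxy_pool)
    try:
        response = requests.get("http://httpbin.org/ip", proxies=proxy, timeout=5)
        print(f"Request {i + 1}: IP = {response.json()['origin']}")
    except requests.RequestException as exc:
        print(f"Request {i + 1}: Failed ({exc})")
```

Defending Against Web Scraping with AWS WAF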

As web scraping techniques become more sophisticated, robust defenses become essential. AWS WAF (Web Application Firewall) lets you inspect web requests and block, count, or challenge them before they reach resources such as CloudFront distributions, Application Load Balancers, and API Gateway REST APIs. Here's a simple guide to configuring AWS WAF for anti-scraping protection:

1. Create a Web ACL:

- Sign in to the AWS Management Console and open the WAF console.

- Choose "Create web ACL," set a name (e.g., "AntiScrapingWAF"), and choose the resource type.
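The console steps above can also be scripted. The sketch below assumes boto3 is installed and AWS credentials are configured; it uses the WAFv2 `create_web_acl` call, allows any request that no rule blocks, and accepts a list of rule dictionaries like the ones in the following steps (each rule must include a `VisibilityConfig` for the API to accept it). The helper names and the description text are hypothetical. `Scope="CLOUDFRONT"` only works in the `us-east-1` region.

```python
from typing import Any, Dict, List


def build_web_acl_request(
    name: str,
    rules: List[Dict[str, Any]],
    scope: str = "REGIONAL",
) -> Dict[str, Any]:
    """Assemble keyword arguments for the WAFv2 create_web_acl call."""
    if scope not in ("REGIONAL", "CLOUDFRONT"):
        raise ValueError("scope must be 'REGIONAL' or 'CLOUDFRONT'")
    return {
        "Name": name,
        "Scope": scope,
        # Requests that match no blocking rule are allowed through.
        "DefaultAction": {"Allow": {}},
        "Description": "Anti-scraping web ACL",
        "Rules": rules,
        "VisibilityConfig": {
            "SampledRequestsEnabled": True,
            "CloudWatchMetricsEnabled": True,
            "MetricName": name,
        },
    }


def create_web_acl(
    name: str,
    rules: List[Dict[str, Any]],
    scope: str = "REGIONAL",
    region: str = "us-east-1",
) -> str:
    """Create the web ACL and return its ARN (requires AWS credentials)."""
    import boto3  # imported lazily so the builder above works without boto3

    client = boto3.client("wafv2", region_name=region)
    response = client.create_web_acl(**build_web_acl_request(name, rules, scope))
    return response["Summary"]["ARN"]
```

For regional resources such as an Application Load Balancer, the new ACL is then attached with the WAFv2 `associate_web_acl` call; CloudFront distributions reference the ACL in their own distribution configuration instead.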

2. Implement IP-based Rules:

- Add an IP set rule to block known scraper IP addresses:

```json
{
  "Name": "BlockKnownScrapers",
  "Priority": 10,
  "Action": {
    "Block": {}
  },
  "Statement": {
    "IPSetReferenceStatement": {
      "ARN": "arn:aws:wafv2:us-east-1:123456789012:regional/ipset/known-scrapers/a1b2c3d4-5678-90ab-cdef-EXAMPLE11111"
    }
  },
  "VisibilityConfig": {
    "SampledRequestsEnabled": true,
    "CloudWatchMetricsEnabled": true,
    "MetricName": "BlockKnownScrapers"
  }
}
```

3. Rate-Based Rule for Request Throttling:

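- Add a rate-based rule that blocks any single IP exceeding 2,000 requests within the evaluation window (5 minutes by default):

```json
{
  "Name": "RateLimitRule",
  "Priority": 20,
  "Action": {
    "Block": {}
  },
  "Statement": {
    "RateBasedStatement": {
      "Limit": 2000,
      "AggregateKeyType": "IP"
    }
  },
  "VisibilityConfig": {
    "SampledRequestsEnabled": true,
    "CloudWatchMetricsEnabled": true,
    "MetricName": "RateLimitRule"
  }
}
```

4. User-Agent String Analysis: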

- Add string match rules that flag User-Agent strings from common scraping libraries. This only stops unsophisticated bots, since, as noted above, headers are easy to spoof:

```json
{
  "Name": "BlockSuspiciousUserAgents",
  "Priority": 30,
  "Action": {
    "Block": {}
  },
  "Statement": {
    "OrStatement": {
      "Statements": [
        {
          "ByteMatchStatement": {
            "SearchString": "python-requests",
            "FieldToMatch": {
              "SingleHeader": {
                "Name": "user-agent"
              }
            },
            "TextTransformations": [
              {
                "Priority": 0,
                "Type": "LOWERCASE"
              }
            ],
            "PositionalConstraint": "CONTAINS"
          }
        },
        {
          "ByteMatchStatement": {
            "SearchString": "scrapy",
            "FieldToMatch": {
              "SingleHeader": {
                "Name": "user-agent"
              }
            },
            "TextTransformations": [
              {
                "Priority": 0,
                "Type": "LOWERCASE"
              }
            ],
            "PositionalConstraint": "CONTAINS"
          }
        }
      ]
    }
  },
  "VisibilityConfig": {
    "SampledRequestsEnabled": true,
    "CloudWatchMetricsEnabled": true,
    "MetricName": "BlockSuspiciousUserAgents"
  }
}
```

5. Implement Token-Based Authentication:

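- Create a Lambda function for token generation and validation. AWS WAF rules cannot invoke Lambda, so dynamic tokens must be validated by that function or by your application behind WAF.

- Add a WAF rule that blocks requests whose X-Auth-Token header does not exactly match the expected value. Because WAF compares against a fixed string, this acts as a shared secret rather than per-request token validation (replace `valid_token` with your own value):

```json
{
  "Name": "ValidateToken",
  "Priority": 40,
  "Action": {
    "Block": {}
  },
  "Statement": {
    "NotStatement": {
      "Statement": {
        "ByteMatchStatement": {
          "SearchString": "valid_token",
          "FieldToMatch": {
            "SingleHeader": {
              "Name": "x-auth-token"
            }
          },
          "TextTransformations": [
            {
              "Priority": 0,
              "Type": "NONE"
            }
          ],
          "PositionalConstraint": "EXACTLY"
        }
      }
    }
  },
  "VisibilityConfig": {
    "SampledRequestsEnabled": true,
    "CloudWatchMetricsEnabled": true,
    "MetricName": "ValidateToken"
  }
}
```

6. CAPTCHA Implementation: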

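- AWS WAF has a built-in CAPTCHA rule action, so you don't need a separate CAPTCHA service or a validation Lambda function. (The `Challenge` action, by contrast, runs a silent browser challenge with no puzzle.)

- Add a rate-based rule that presents a CAPTCHA to any IP sending more than 100 requests to `/sensitive-data` paths within the evaluation window. Rate-based statements can't be nested inside an `AndStatement`, so the path condition goes in a `ScopeDownStatement`:

```json
{
  "Name": "TriggerCaptcha",
  "Priority": 50,
  "Action": {
    "Captcha": {}
  },
  "Statement": {
    "RateBasedStatement": {
      "Limit": 100,
      "AggregateKeyType": "IP",
      "ScopeDownStatement": {
        "ByteMatchStatement": {
          "SearchString": "/sensitive-data",
          "FieldToMatch": {
            "UriPath": {}
          },
          "TextTransformations": [
            {
              "Priority": 0,
              "Type": "NONE"
            }
          ],
          "PositionalConstraint": "STARTS_WITH"
        }
      }
    }
  },
  "VisibilityConfig": {
    "SampledRequestsEnabled": true,
    "CloudWatchMetricsEnabled": true,
    "MetricName": "TriggerCaptcha"
  }
}
```

7. Geolocation-Based Rules: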

- Implement rules based on the geographic origin of requests:

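```json
{
  "Name": "GeoBlockHighRiskCountries",
  "Priority": 60,
  "Action": {
    "Block": {}
  },
  "Statement": {
    "GeoMatchStatement": {
      "CountryCodes": ["CN", "RU"]
    }
  },
  "VisibilityConfig": {
    "SampledRequestsEnabled": true,
    "CloudWatchMetricsEnabled": true,
    "MetricName": "GeoBlockHighRiskCountries"
  }
}
```

Advanced Considerations: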

- Implement dynamic rule updates using AWS Lambda to adapt to new threats.

- Integrate machine learning models with Amazon SageMaker for sophisticated bot detection.

- Combine WAF with other AWS services like Shield for DDoS protection and CloudFront for edge computing capabilities.

- Continuously monitor and tune your defenses by regularly reviewing WAF logs and metrics.
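The first and last points can work together: a scheduled job reads WAF logs, finds clients that are blocked repeatedly, and feeds them into the IP set behind the BlockKnownScrapers rule. The sketch below assumes logs are available as JSON lines containing the standard `action` and `httpRequest.clientIp` fields, and that boto3 is available wherever it runs (for example, inside a Lambda function). The function names and the default threshold of 50 blocks are hypothetical.

```python
import ipaddress
import json
from collections import Counter
from typing import Iterable, List


def top_blocked_ips(log_lines: Iterable[str], min_blocks: int = 50) -> List[str]:
    """Return client IPs blocked at least `min_blocks` times, most frequent first.

    Each non-empty line is assumed to be one AWS WAF log record in JSON.
    """
    counts: Counter = Counter()
    for line in log_lines:
        line = line.strip()
        if not line:
            continue
        record = json.loads(line)
        if record.get("action") == "BLOCK":
            counts[record["httpRequest"]["clientIp"]] += 1
    return [ip for ip, n in counts.most_common() if n >= min_blocks]


def add_to_ip_set(name: str, ip_set_id: str, ips: List[str], scope: str = "REGIONAL") -> None:
    """Merge `ips` into an existing WAF IP set (needs boto3 and AWS credentials).

    Assumes every address matches the IP set's version (IPv4 or IPv6).
    """
    import boto3  # imported lazily so top_blocked_ips() runs without AWS access

    client = boto3.client("wafv2")
    current = client.get_ip_set(Name=name, Scope=scope, Id=ip_set_id)
    # IP sets hold CIDR blocks, so single addresses become /32 (or /128) entries.
    new_cidrs = {str(ipaddress.ip_network(ip)) for ip in ips}
    addresses = set(current["IPSet"]["Addresses"]) | new_cidrs
    # update_ip_set replaces the whole address list, so send the merged set.
    # The lock token makes the call fail if the set changed since get_ip_set.
    client.update_ip_set(
        Name=name,
        Scope=scope,
        Id=ip_set_id,
        Addresses=sorted(addresses),
        LockToken=current["LockToken"],
    )
```

Promoting rate-limited IPs to a permanent block list can also catch legitimate users behind shared NAT gateways, so the threshold deserves the same tuning as the rules themselves.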

Conclusion

The battle against advanced web scraping is ongoing and complex. As scraping techniques evolve, so must our defense strategies. By understanding the sophisticated methods employed by modern bots and implementing protection measures like those offered by AWS WAF, we can significantly mitigate the risks posed by malicious automated traffic.

Remember, this is not a set-it-and-forget-it solution. Adequate protection requires continuous monitoring, analysis, and adaptation. Stay informed about emerging threats, regularly review your security logs, and be prepared to adjust your defenses as new evasion techniques emerge.

Combining technical knowledge with vigilant practices creates a more secure and reliable digital ecosystem, one where online resources remain accessible to legitimate users while the efforts of malicious bots are thwarted.

Lastly, the conversation doesn't end here. What's your experience with WAFs? Have you implemented one in your organization? What challenges or successes have you encountered?