In an age where AI is quickly becoming part of everyday tools, building systems that move beyond surface-level conversation is no longer optional; it's essential.

This project explores the creation of a context-aware, multimodal chatbot powered by Gemini 2.0 Flash on Vertex AI, grounded with Retrieval-Augmented Generation (RAG), and extended with report and mind map generation features.

Key Features

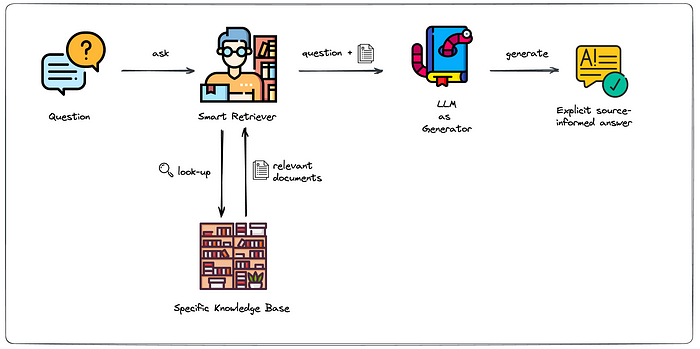

Context-Aware Responses with RAG

Instead of relying solely on what the model learned during training, RAG let the chatbot pull relevant passages from document sources and ground each answer in real, verifiable information. This reduced hallucinations and made responses easier to trust.
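To make the retrieval step concrete, here is a minimal sketch rather than the project's actual pipeline. It assumes passages are plain strings and swaps in a simple bag-of-words cosine similarity where a production system would more likely use embedding-based search. The helper names `tokenize`, `cosine`, and `retrieve`, and the sample passages, are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two term-count vectors (Counters)."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(question, passages, top_k=2):
    """Return up to top_k passages most similar to the question."""
    q_vec = Counter(tokenize(question))
    scored = [(cosine(q_vec, Counter(tokenize(p))), p) for p in passages]
    # Drop passages with no word overlap, then sort by score, highest first.
    scored = [(s, p) for s, p in scored if s > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored[:top_k]]


# Hypothetical passages standing in for the custom document sources.
passages = [
    "Refunds are issued within 14 days of a return request.",
    "Our office is closed on public holidays.",
    "Shipping takes three to five business days.",
]
top = retrieve("How long do refunds take?", passages, top_k=1)
```

The passages returned by `retrieve` would then be placed into the prompt, so the model answers from them instead of from memory alone.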

Gemini Flash on Vertex AI

Gemini 2.0 Flash offered a strong balance of speed and cost-efficiency, which made it well suited to real-time applications, while Vertex AI provided scalable infrastructure for deployment and fine-tuning.
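As a rough illustration of how a grounded prompt might be sent to the model, the sketch below assumes the `vertexai` Python SDK (from the `google-cloud-aiplatform` package) and configured Google Cloud credentials. The project ID, region, model ID string, and the helper names `build_grounded_prompt` and `ask_gemini` are assumptions for illustration, not details from the project.

```python
def build_grounded_prompt(question, passages):
    """Combine retrieved passages and the user question into one prompt."""
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


def ask_gemini(prompt, project, location="us-central1",
               model_name="gemini-2.0-flash"):
    """Send the prompt to a Gemini model on Vertex AI and return the reply text.

    Requires the google-cloud-aiplatform package and Google Cloud credentials.
    The default region and model ID are assumptions; adjust them as needed.
    """
    # Imported lazily so the prompt builder works without the SDK installed.
    import vertexai
    from vertexai.generative_models import GenerativeModel

    vertexai.init(project=project, location=location)
    model = GenerativeModel(model_name)
    return model.generate_content(prompt).text


prompt = build_grounded_prompt(
    "How long do refunds take?",
    ["Refunds are issued within 14 days of a return request."],
)
# reply = ask_gemini(prompt, project="your-project-id")  # placeholder project ID
```

Keeping prompt construction separate from the API call makes the grounding logic easy to test without network access.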

Multimodal Input Support

The chatbot could handle both typed text and uploaded documents, allowing users to query specific files and receive accurate responses based on their content. The architecture was also designed so that image and voice inputs could be added later.
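The post does not describe how uploaded documents were prepared for querying. One common approach, shown here purely as an assumption, is to split the extracted text into overlapping chunks so that each chunk can be retrieved on its own. The function name `chunk_text` and its default sizes are hypothetical.

```python
def chunk_text(text, chunk_size=200, overlap=50):
    """Split text into overlapping character windows."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once the current window reaches the end of the text.
        if start + chunk_size >= len(text):
            break
    return chunks


document = "abcdefghij" * 5  # 50 characters standing in for an uploaded file
chunks = chunk_text(document, chunk_size=20, overlap=5)
```

The overlap means a sentence that straddles a chunk boundary still appears whole in at least one chunk, at the cost of some duplicated text.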

Report Generation

Every interaction could be transformed into a structured report that summarized key takeaways, answers, and retrieved content in a clean, readable format.
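A report formatter of this kind can be quite small. The sketch below is one possible shape, not the project's custom logic: it assumes each conversation turn is stored as a dict with `question`, `answer`, and `sources` keys, and renders plain text. The function name `build_report` and the file name `refund-policy.txt` are hypothetical.

```python
def build_report(title, turns):
    """Render question/answer turns into a plain-text report.

    Each turn is a dict with 'question' and 'answer' keys and an
    optional 'sources' list.
    """
    lines = [title, "=" * len(title), ""]
    for number, turn in enumerate(turns, start=1):
        lines.append(f"{number}. Question: {turn['question']}")
        lines.append(f"   Answer: {turn['answer']}")
        for source in turn.get("sources", []):
            lines.append(f"   Source: {source}")
        lines.append("")  # blank line between turns
    return "\n".join(lines).rstrip() + "\n"


turns = [
    {
        "question": "How long do refunds take?",
        "answer": "Refunds are issued within 14 days.",
        "sources": ["refund-policy.txt"],
    },
]
report = build_report("Session Report", turns)
print(report)
```

Listing the sources under each answer keeps the report traceable back to the retrieved content.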

Mind Map Creation

Inspired by tools like NotebookLM, the bot could generate visual mind maps of the key concepts discussed in a conversation. This helped users organize thoughts and study topics visually, improving both retention and clarity.
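One way to turn extracted concepts into something drawable is to emit Graphviz DOT text, as in the sketch below. It assumes the concepts have already been organized into a nested dict (for example, by prompting the model, which is not shown) and is not the project's actual generator. The function name `mind_map_to_dot` and the sample concepts are hypothetical.

```python
def mind_map_to_dot(root, tree):
    """Convert a nested dict of concepts into Graphviz DOT text.

    `tree` maps each concept to a dict of its sub-concepts (empty for leaves).
    """
    def quote(label):
        # Escape backslashes and double quotes so labels are valid DOT strings.
        return '"' + label.replace("\\", "\\\\").replace('"', '\\"') + '"'

    lines = ["graph mindmap {"]

    def walk(parent, children):
        # Emit one undirected edge per parent/child pair, depth first.
        for child, grandchildren in children.items():
            lines.append(f"  {quote(parent)} -- {quote(child)};")
            walk(child, grandchildren)

    walk(root, tree)
    lines.append("}")
    return "\n".join(lines)


# Hypothetical concept hierarchy for a conversation about this chatbot.
concepts = {
    "Retrieval": {"Document sources": {}},
    "Generation": {"Reports": {}, "Mind maps": {}},
}
dot = mind_map_to_dot("Chatbot", concepts)
print(dot)
```

The resulting text can be rendered with any Graphviz-compatible tool, or the same traversal could feed a frontend graph component instead.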

Technologies Used

- Frontend: React.js

- Backend: Python

- Cloud Hosting & LLM: Vertex AI with Gemini Flash

- Retrieval: RAG using custom document sources

- Output Generation: Report formatter and Mind Map generator (custom logic)

Why This Matters

Many chatbots today are fast but lack context. Others generate fluent responses but are factually weak.

This project bridges the gap by ensuring the chatbot:

- Retrieves real-time information

- Generates grounded, reliable answers

- Summarizes information automatically

- Supports multimodal interactions

- Turns knowledge into visuals for better understanding

This isn't just a chatbot; it's a knowledge assistant, built for users who need answers they can use and trust.

Final Thoughts

With the rise of LLMs and AI services, the future isn't just about building smarter models. It's about building more meaningful experiences around them.

This chatbot combines performance with purpose: speed with substance and conversation with cognition.

The next steps could include voice-based interaction, semantic search, multi-language support, and plug-and-play integration with knowledge bases or enterprise tools.

We're just scratching the surface of what's possible when AI is designed to assist, organize, and enhance thought, not just mimic it.