TL;DR

- Every agent interaction produces a next-state signal. Every existing system wastes it. OpenClaw-RL recovers it as live training data.

- Fully async architecture: the agent keeps serving while it learns in the background. Zero interruption.

- Two methods (Binary RL + On-Policy Distillation) combine to jump personalization scores from 0.17 to 0.81 in 16 steps.

- Works for personal agents AND general agents (terminal, GUI, SWE, tool-call). Open source, Princeton.

Here's the thing: every AI agent you've ever deployed is already sitting on a goldmine of training data. Every single interaction. Every user reply, every tool execution result, every terminal output, every GUI state change. It's all there. Rich, structured, free.

And every single system throws it away.

The user says "no, I meant the other file." That's a training signal. The test suite returns a stack trace after the agent's code edit. Training signal. The terminal spits back an exit code. Training signal. All of it, discarded as mere "context for the next action." Not converted into gradients. Not used to update weights. Just… consumed and forgotten.

A team at Princeton just dropped a paper that makes this waste impossible to ignore. OpenClaw-RL. And unlike most papers that rebrand existing ideas with a new acronym, this one actually identifies something fundamental that the entire agentic RL community has been sleeping on.

What Exactly Is the "Next-State Signal"?

Simple. After an agent takes action a_t, something happens. The user replies. The tool returns output. The GUI transitions. That "something" is the next state s_{t+1}. In standard RL for LLMs, this signal either gets ignored entirely or reduced to a terminal outcome reward at the very end of a long trajectory.

OpenClaw-RL argues this signal carries two distinct forms of recoverable information. First, evaluative signals: did the action work? A user re-query implies dissatisfaction. A passing test signals success. A PRM can convert these into scalar rewards turn by turn. Second, directive signals: how should the action have been different? When a user writes "you should have checked the file first," that's not just a thumbs-down. It's telling you which tokens should change and how. Scalar rewards can't capture this. You need something richer.

Truth be told, the evaluative part isn't entirely new. PRMs have been studied in math reasoning for a while. But applying them as a live, online process reward across heterogeneous interaction streams (conversations, terminals, GUIs, SWE tasks, tool calls) simultaneously? This isn't the usual "we trained on a fixed dataset" story. This time, it's live.

The Architecture That Makes It Work

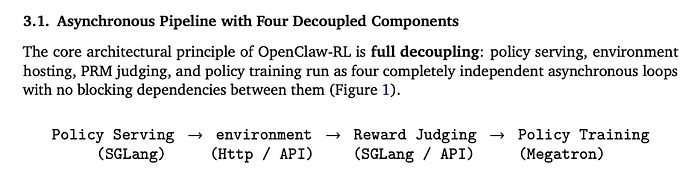

OpenClaw-RL is built on the slime async framework, and it decouples everything. Four independent loops run with zero blocking dependencies.

The model serves your next request while the PRM judges your previous response and the trainer applies gradient updates from two interactions ago. No component waits for another. For personal agents, your device connects through a confidential API. For general agents at scale, hundreds of parallel environments run on cloud services.
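To make the "no component waits for another" idea concrete, here is a minimal sketch of three of those loops (serving, judging, training) wired together with queues. It is an illustration only, not OpenClaw-RL's code: the function names, the sentinel convention, and the stand-in model and judge logic are all assumptions.

```python
import asyncio

async def serve(requests, to_judge):
    """Serving loop: answer each request, then hand the turn off without waiting for a score."""
    for req in requests:
        response = f"response to {req}"        # stand-in for a real model call
        await to_judge.put((req, response))
    await to_judge.put(None)                   # sentinel: no more turns

async def judge(to_judge, to_train):
    """Judge loop: score turns as they arrive."""
    while (turn := await to_judge.get()) is not None:
        req, response = turn
        reward = 1 if "good" in req else -1    # stand-in for a PRM call
        await to_train.put((req, response, reward))
    await to_train.put(None)

async def train(to_train, applied):
    """Trainer loop: consume scored turns and record one update per sample."""
    while (sample := await to_train.get()) is not None:
        applied.append(sample)                 # stand-in for a gradient step

async def run_pipeline(requests):
    to_judge, to_train = asyncio.Queue(), asyncio.Queue()
    applied = []
    await asyncio.gather(
        serve(requests, to_judge),
        judge(to_judge, to_train),
        train(to_train, applied),
    )
    return applied
```

Calling `asyncio.run(run_pipeline(["good request", "other request"]))` pushes both turns through all three stages. The point of the design is that each stage only talks to a queue, so a slow judge never stalls serving.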

Oh! And it classifies every API request into "main-line" turns (trainable: actual responses and tool executions) versus "side" turns (non-trainable: memory organization, auxiliary queries). So it knows exactly what to learn from.
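A rough sketch of what that filter could look like, assuming each logged turn already carries a `kind` label. The label strings and the `Turn` type are hypothetical; the source only names the two categories, not how requests get tagged.

```python
from dataclasses import dataclass

# Hypothetical labels based on the examples given above.
MAIN_LINE_KINDS = {"response", "tool_execution"}
SIDE_KINDS = {"memory_organization", "auxiliary_query"}

@dataclass
class Turn:
    kind: str
    text: str

def is_trainable(turn: Turn) -> bool:
    """Only main-line turns are eligible for training."""
    return turn.kind in MAIN_LINE_KINDS

def trainable_turns(turns):
    """Keep main-line turns, drop side turns."""
    return [t for t in turns if is_trainable(t)]
```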

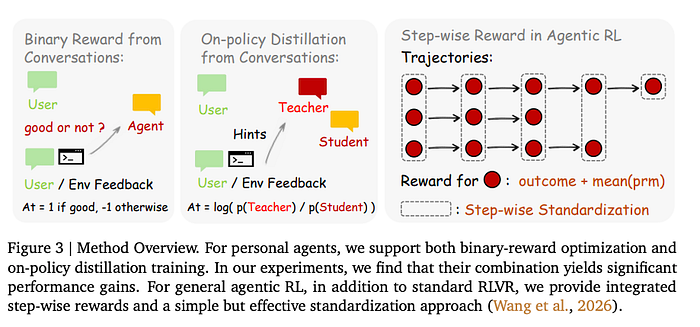

Binary RL: The Coarse but Reliable Signal

The PRM evaluates each agent response given the next-state feedback. Did the user seem satisfied? Did the tool call succeed? The judge scores it: +1 (good), -1 (bad), or 0 (neutral). Multiple independent evaluations are combined by majority vote for robustness.
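The voting step is easy to picture in code. This is a minimal sketch; the tie-breaking rule (fall back to neutral) and the empty-input behavior are my assumptions, since the source does not say how ties are handled.

```python
from collections import Counter

def majority_vote(scores):
    """Return the most common score among +1, -1, 0.

    Assumption: ties between the top two scores, and empty input, resolve to 0 (neutral).
    """
    if not scores:
        return 0
    counts = Counter(scores).most_common()
    if len(counts) > 1 and counts[0][1] == counts[1][1]:
        return 0
    return counts[0][0]
```

With three judge calls returning `[1, 1, -1]`, the turn is scored +1; a split like `[1, -1]` is treated as neutral under this sketch's tie rule.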

These scalar rewards feed into a PPO-style clipped surrogate loss with asymmetric bounds. Standard stuff, well-understood optimization. Binary RL accepts every scored turn, works with any next-state signal including terse reactions, and provides broad gradient coverage.
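For readers who have not seen the clipped surrogate written out, here is a small per-token sketch with separate lower and upper clip bounds. The default values `eps_low=0.2` and `eps_high=0.28` are placeholders I picked for illustration, not numbers from the paper, and the sequence-level reward is simply used as the advantage.

```python
import math

def clipped_surrogate_loss(logp_new, logp_old, advantage, eps_low=0.2, eps_high=0.28):
    """PPO-style clipped loss for one token, with asymmetric clip bounds.

    ratio = pi_new / pi_old, computed from log-probabilities.
    The bound values are illustrative assumptions.
    """
    ratio = math.exp(logp_new - logp_old)
    clipped = min(max(ratio, 1.0 - eps_low), 1.0 + eps_high)
    # Take the more pessimistic of the clipped and unclipped objectives, then negate for a loss.
    return -min(ratio * advantage, clipped * advantage)
```

With a +1 reward, a token whose probability has already doubled contributes at most `1 + eps_high` to the objective; with a -1 reward, a token whose probability has halved is clipped at `1 - eps_low`. That is the asymmetry: upward and downward moves get different limits.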

But here's the thing: it's coarse. One scalar per sequence. When the user says "you should have checked the file before editing it," Binary RL gives you a -1. That's it. All the directional information about what to change and how? Lost.

Hindsight-Guided On-Policy Distillation: The Secret Weapon

This is where OpenClaw-RL gets genuinely clever. OPD recovers the directive information that Binary RL throws away, and it does it without requiring a separate, stronger teacher model.

Four steps.

- Step one: the judge extracts a concise "hint" from the next-state signal (1 to 3 sentences of actionable correction).

- Step two: quality filtering. Only turns with a clear, extractable correction direction make the cut.

- Step three: the hint gets appended to the original prompt, creating an "enhanced teacher context": what the model would have seen if the user had given the correction upfront.

- Step four: the policy model is queried under this enhanced context, and the per-token log-probability gap between the "teacher" (hint-augmented) and "student" (original) distributions becomes the advantage signal.

A_t = log π_teacher(a_t | s_enhanced) - log π_θ(a_t | s_t)

This is beautiful because it's self-distillation. No external teacher. No pre-collected feedback pairs. The model teaches itself using hindsight that was already there in the conversation. Some tokens get reinforced, others get suppressed. Per-token directional guidance, far richer than a single scalar.
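Steps three and four boil down to very little code once you have log-probabilities in hand. The sketch below assumes you have already scored the same response tokens twice with the same model, once under the hint-augmented prompt and once under the original. The prompt template and function names are hypothetical.

```python
def build_teacher_context(prompt: str, hint: str) -> str:
    """Append the extracted hint to the original prompt (the exact template is an assumption)."""
    return f"{prompt}\n\n[Hint] {hint}"

def opd_token_advantages(teacher_logprobs, student_logprobs):
    """Per-token advantage: log-prob under the hinted context minus log-prob under the original."""
    if len(teacher_logprobs) != len(student_logprobs):
        raise ValueError("both lists must score the same response tokens")
    return [t - s for t, s in zip(teacher_logprobs, student_logprobs)]
```

A positive entry means the hinted model finds that token more likely, so it gets reinforced; a negative entry means the hint made it less likely, so it gets suppressed.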

But wait. OPD is picky. It only trains on turns where a clear correction direction exists. Sparse samples. That's why you need both methods running together.
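One plausible way to run both together, sketched under my own assumptions: broadcast the Binary RL scalar to every token, and add the OPD per-token term only on turns where a hint was extracted. The additive form and the weights `w_binary` and `w_opd` are illustrative, not confirmed details of the paper.

```python
def combined_token_advantages(binary_reward, opd_advantages, num_tokens,
                              w_binary=1.0, w_opd=1.0):
    """Mix a sequence-level reward with optional per-token OPD advantages.

    opd_advantages is None for turns that did not pass OPD's quality filter.
    """
    if opd_advantages is None:
        opd_advantages = [0.0] * num_tokens
    if len(opd_advantages) != num_tokens:
        raise ValueError("need one OPD advantage per response token")
    return [w_binary * binary_reward + w_opd * a for a in opd_advantages]
```

Under this sketch every scored turn still produces a gradient (Binary RL's coverage), while the turns that carry a usable hint also get token-level direction.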

The Combined Method Changes the Numbers

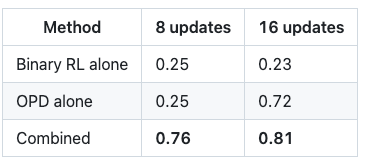

The numbers don't lie. All three variants start from a base personalization score of 0.17.

Binary RL alone actually degrades after 16 steps. OPD alone shows delayed gains (sparse samples, remember?) but eventually climbs hard. But combined? 0.76 after just 8 updates. From 0.17.

In concrete terms: a student using OpenClaw for homework needed only 36 problem-solving interactions before the agent learned to stop sounding like AI (no more "bold" formatting, no more robotic step-by-step). A teacher grading homework needed just 24 interactions before the agent started writing friendlier, more specific feedback. The student scenario jumped from 0.17 to 0.76. The teacher from 0.22 to 0.90.

It's Not Just Personal Agents

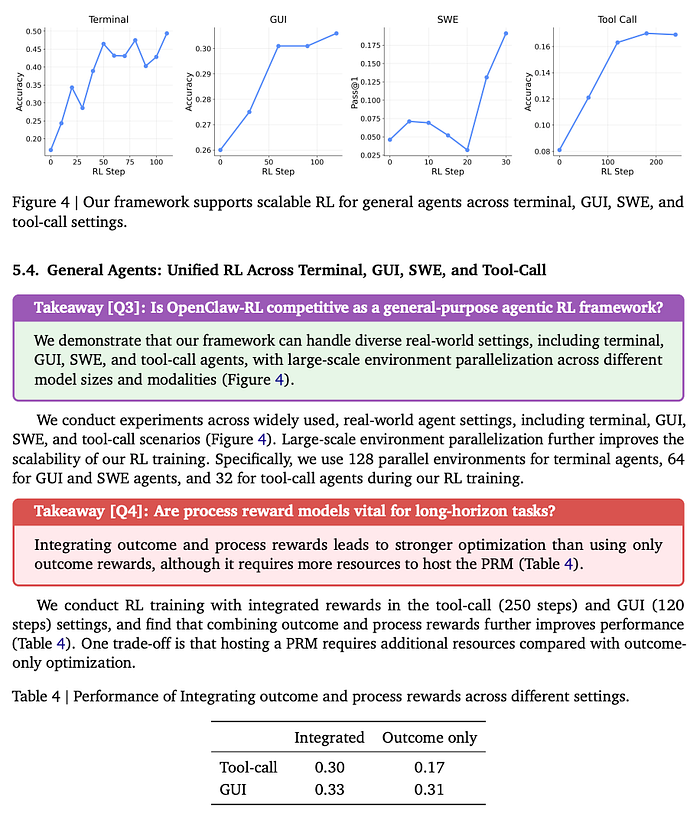

The same infrastructure handles general agentic RL across terminal, GUI, SWE, and tool-call settings with massive parallelization (128 environments for terminal, 64 for GUI/SWE, 32 for tool-call). Different models for different settings: Qwen3-8B for terminal, Qwen3-VL-8B-Thinking for GUI, Qwen3-32B for SWE.

For general agents, they integrate process rewards with outcome rewards. The results on tool-call: 0.30 with integrated rewards vs 0.17 outcome-only. That's a 76% improvement just from adding step-wise PRM signals. Not earth-shattering absolute numbers, but the directional evidence is clear: process rewards matter for long-horizon agentic tasks, and OpenClaw-RL makes them practical at scale.
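Here is a toy sketch of what "integrating" the two reward types might look like, plus the arithmetic behind the 76% figure. The article does not spell out how the rewards are merged, so the additive form and the `process_weight` parameter are purely my assumptions.

```python
def integrate_rewards(step_rewards, outcome_reward, process_weight=1.0):
    """Give every step the trajectory's outcome reward plus its own weighted process reward."""
    return [outcome_reward + process_weight * r for r in step_rewards]

def relative_improvement(new, baseline):
    """Fractional gain of `new` over `baseline`."""
    return (new - baseline) / baseline
```

For the tool-call numbers quoted above, `relative_improvement(0.30, 0.17)` is roughly 0.76, which is where the 76% comes from.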

Why This Actually Matters

Every agentic framework right now adapts through memory files, system prompts, and skill libraries. The base model weights never change. OpenClaw-RL changes the weights. While you're using it. Without interrupting service.

The entire stack (policy model, judge, trainer) runs on your own infrastructure. No third-party API calls. Your conversation data stays local. And everything is logged to JSONL in real time for full observability.
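If you have not worked with JSONL before, the appeal is that each interaction becomes one self-contained line you can append to and tail in real time. A minimal sketch follows; the helper names and the record fields are hypothetical, not OpenClaw-RL's actual log schema.

```python
import json

def append_jsonl(path, record):
    """Append one record as a single JSON line."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record, ensure_ascii=False) + "\n")

def read_jsonl(path):
    """Load every non-empty line back into a list of dicts."""
    with open(path, encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

A record might look like `{"turn": 1, "reward": -1, "hint": "check the file first"}`, with one such line written per scored turn.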

This is the first system that unifies personal agent personalization and general agent training in the same loop, from the same next-state signals, across heterogeneous interaction types. Conversations, terminals, GUIs, code repos, tool calls: they're all just MDPs with different transition functions. The training signal is universal.

The agent that improves by being used isn't a research prototype anymore. It's open source, it's async, it runs on your hardware, and it learns from the data you're already generating and discarding.

This is my perspective. You should do what you are comfortable with. But if you're building agentic systems and you're not recovering next-state signals for training, you're leaving the most natural, most abundant, most information-rich supervision source on the table. OpenClaw-RL just showed you exactly how to pick it up.
